Hire Data Scientists

Hire the Top 3% of Freelance Data Scientists

Hire vetted data scientists, engineers, analysts, specialists, experts, and developers on demand. Leading companies choose data science freelancers from Toptal for their most important development projects.

No-Risk Trial, Pay Only If Satisfied.

Hire Freelance Data Scientists

Adrian Curic

Adrian is a software engineer and data scientist working at the intersection of software engineering, computer vision, and machine learning. He has experience in research, multinational corporations, and startup environments and was awarded patents for real estate and financial modeling projects. Adrian also contributed to surveillance systems for Singapore's border security and participated in competitive coding and hackathons, consistently ranking in the top 1% on platforms like HackerRank.

Denis Volk

Denis is a senior full-stack AI engineer and data scientist, highly skilled in modern generative tech (GPT-4, Midjourney, and more), machine learning, ETL pipelines, data analysis, mathematical modeling, big data, and MLOps. He has a PhD in mathematics, and his data science expertise includes probabilistic risk modeling, revenue forecasting, geospatial data analysis, handwriting recognition, anomaly detection in time series, data engineering, and team leading.

Karol Kulasiński

Karol is a highly experienced senior data scientist with a strong focus on NLP and wide AI applications. He has a unique academic background in physics and large-scale models, in addition to relevant experience in the customer-facing data science industry. He enjoys working with data and leading and implementing R&D projects. With his PhD in physics and a recent MBA, Karol easily combines technology and business.

A. Rosa Castillo, PhD

Rosa is a full-stack developer and data scientist with a PhD, solid research skills, and extensive software engineering experience. Combining academic and industry approaches to data science, she can contribute to the whole data pipeline, from exploratory data analysis to prototyping and production. Rosa has also worked efficiently on projects across different countries using her professional English, Italian, German, and Spanish proficiency.

Cornelis Jan Drost

Cornelis is a developer, mathematical modeler, and researcher with experience working in tech at Amazon and Biomatters, and at the startups Fetch.ai and Ubiquetherm. He thrives on solving new and complex problems and learning new skills and background knowledge, and he excels at communicating requirements and results. Cornelis has experience in software development and supporting skills, such as data analysis, visualization, math, simulation, and complex systems.

Jayesh Menon

Jayesh is a seasoned data product and data management professional with over 16 years of experience delivering products and programs in data quality management, governance, and migration. He has an extensive track record in various industries, including transport, consumer goods, energy, and telecommunications. Jayesh looks for new and enriching opportunities that will allow him to leverage his expertise in growing businesses through effective results.

Dogugun Ozkaya

Dogugun is a skilled data scientist with expertise in machine learning and data-centric solutions. Proficient in Python and SQL, he drives data-driven decisions and delivers innovative AI solutions. His strengths lie in B2B ML projects, automated pipelines, and real-time APIs. Dogugun's predictive modeling and visualization dashboards empower businesses to optimize processes and gain strategic insights. Dogugun is a valuable asset in advancing businesses with ML-driven solutions.

Jesse Moore

Jesse is an experienced data scientist, CTO, and founder who solves complex problems. He has founded four companies, including Sigmai, an automated news parsing company for hedge funds that was acquired in 2018; Mobilads, which reached an annual run rate of $5 million; Bluescribe, a bi-directional English and French translation engine for Canadian legal documents; and Relu Analytics, a data science consulting company. He is currently at ThinkAlpha Securities as head of data science.

Nicolas Mallison

Nicolas is an expert data scientist with over 24 years of experience using programming languages, including R and Python, to design and develop AI/ML data products, combined with strong practice leadership and people management skills. Nicolas is a published author and thought leader with a vast track record of success in implementing new and innovative ways of achieving the most scalable, data-centric outcomes to drive new business while promoting a consultative and collaborative environment.

Gregory Kott

Greg is a senior data analytics consultant with over 26 years of experience delivering advanced analytics solutions to Fortune 500 companies and government agencies. Specializing in business intelligence, workforce optimization, and decision-support modeling, he transforms complex operational data into strategic insights. Greg applies a broad range of analytical methods, modeling techniques, and visualization approaches to drive data-informed decisions and improve organizational performance.

Christophe Williams

Christophe holds an MBA from Wharton and leads Cedar Labs, a data science consultancy. He has ten years of data science experience across Capital One, Amazon, the 2012 Obama campaign, and several startups. Christophe has expertise in finance, marketing, and retail. His specialties include data strategy and architecture, hiring data teams, time series and forecasting, regression and classification, machine learning, NLP, analytics, and ETL/pipelines.

Discover More Data Scientists in the Toptal Network

A Hiring Guide

Guide to Hiring a Great Data Scientist

Data science is the practice of extracting insights from data to help inform decisions. Data scientists wear many hats as master statisticians, business analysts, and database programmers. Secure the top candidates with this guide to hiring data scientists, including tips on writing an effective job description and examples of technical interview questions.

... allows corporations to quickly assemble teams that have the right skills for specific projects.

Despite accelerating demand for coders, Toptal prides itself on almost Ivy League-level vetting.

How to Hire Data Science Engineers Through Toptal

Talk to One of Our Client Advisors

Work With Hand-selected Talent

The Right Fit, Guaranteed

EXCEPTIONAL TALENT

How We Source the Top 3% of Data Scientists

Our name “Toptal” comes from Top Talent—meaning we constantly strive to find and work with the best from around the world. Our rigorous screening process identifies experts in their domains who have passion and drive.

Of the thousands of applications Toptal sees each month, typically fewer than 3% are accepted.

Capabilities of Data Scientists

Leverage the expertise of our data scientists to transform complex datasets into actionable insights. Our team employs advanced machine learning, analytics, and statistical methods to drive informed decision-making and foster innovation across various industries.

Data Analysis and Interpretation

Data Cleaning and Preprocessing

Big Data Processing and Analytics

Machine Learning Model Development

Predictive Analytics and Forecasting

Custom Algorithm Development

Natural Language Processing (NLP)

A/B Testing and Experimentation

Data Visualization and Reporting

Data-driven Decision Making

FAQs

How much does it cost to hire a data scientist?

The cost depends on various factors, including preferred talent location, complexity and size of the project you’re hiring for, seniority, engagement commitment (hourly, part-time, or full-time), and more. In the US, for example, Glassdoor’s reported average total annual pay for data scientists is $126,845 as of May 19, 2023. With Toptal, you can speak with an expert talent matcher who will help you understand the cost of talent with the right skills and seniority level for your needs. To get started, schedule a call with us — it’s free, and there’s no obligation to hire with Toptal.

How quickly can you hire with Toptal?

Typically, you can hire data scientists with Toptal in about 48 hours. For larger teams of talent or Managed Delivery, timelines may vary. Our talent matchers are highly skilled in the same fields they’re matching in—they’re not recruiters or HR reps. They’ll work with you to understand your goals, technical needs, and team dynamics, and match you with ideal candidates from our vetted global talent network.

Once you select your data science specialist, you’ll have a no-risk trial period to ensure they’re the perfect fit. Our matching process has a 98% trial-to-hire rate, so you can rest assured that you’re getting the best fit every time.

How do I hire data scientists?

To hire the right data science expert, it’s important to evaluate a candidate’s experience, technical skills, and communication skills. You’ll also want to consider the fit with your particular industry, company, and project. Toptal’s rigorous screening process ensures that every member of our network has excellent experience and skills, and our team will match you with the perfect data scientists for your project.

How in demand are data scientists?

Data science specialists are in extremely high demand. A shortage in the job market has caused increased competition when hiring top talent. And this field will only see increased demand: The employment growth rate over the next decade stands at a staggering 36%, one of the highest compared to an average growth rate of 5%.

How should you choose the best data scientists for your project?

You can pinpoint the best data science experts for your project by thoroughly assessing a candidate’s skills and how closely they match your requirements. Quality data specialists generally possess specific foundational technical skills: programming (e.g., Python, SQL), statistics, data wrangling, data visualization, machine learning, and cloud computing. Candidates should also have experience with bias and risk assessment, and must be strong communicators who can understand business needs. Look for applicants with a proven track record of using these hard and soft skills to produce tangible data insights.

How is data science used in real life?

Most modern companies—big or small—work with considerable amounts of data daily. Therefore, data science can be applied to all kinds of industries: It can be used to ensure accurate diagnoses in healthcare, select products for customers in digital marketing, perform risk assessments and fraud detection in finance, and conduct sales forecasts in retail. Data science yields insights that empower companies to make intelligent decisions, automate tasks, and boost innovation.

How are Toptal data science engineers different?

At Toptal, we thoroughly screen our data science developers to ensure we only match you with the highest caliber of talent. Of the more than 200,000 people who apply to join the Toptal network each year, fewer than 3% make the cut.

In addition to screening for industry-leading expertise, we also assess candidates’ language and interpersonal skills to ensure that you have a smooth working relationship.

When you hire data science analysts with Toptal, you’ll always work with world-class, custom-matched data scientists ready to help you achieve your goals.

Can you hire data science analysts on an hourly basis or for project-based tasks?

You can hire data science specialists on an hourly, part-time, or full-time basis. Toptal can also manage the entire project from end-to-end with our Managed Delivery offering. Whether you hire a data scientist for a full- or part-time position, you’ll have the control and flexibility to scale your team up or down as your needs evolve. Our data scientists can fully integrate into your existing team for a seamless working experience.

What is the no-risk trial period for Toptal data science specialists?

We make sure that each engagement between you and your data scientist begins with a trial period of up to two weeks. This means that you have time to confirm the engagement will be successful. If you’re completely satisfied with the results, we’ll bill you for the time and continue the engagement for as long as you’d like. If you’re not completely satisfied, you won’t be billed. From there, we can either part ways, or we can provide you with another data scientist who may be a better fit and with whom we will begin a second, no-risk trial.

How to Hire Data Scientists

Edoardo is a data scientist with experience as a CTO and Vice President of Engineering, having founded multiple projects and businesses. He specializes in R&D initiatives, developing MLJ.jl, the largest machine learning framework for Julia, and contributing to detection algorithms at Shift Technology. Edoardo holds a master’s degree in applied mathematics from the University of Warwick.

The Demand for Data Science Tops the Charts Across Many Sectors

With a projected employment growth rate of 36% over the next decade (one of the highest compared to an average growth rate of 4%), data science has a long life ahead of it—and 90% of leading companies have recognized this fact by increasing their investments in generative artificial intelligence as of 2024.

Yet, the field is not simple to master—or hire for—due to its many required proficiencies. As sustained demand in the job market increases, there is a race to find vetted data scientists who can analyze data carefully, build unbiased algorithms, and present compelling insights to stakeholders.

At a minimum, candidates need an extensive background in statistics and programming, and strong experience with production data sets and models. This guide specifies the job description tips, interview questions, and project-specific skill requirements that inform how to hire data scientists and maximize your company’s data analytics capabilities.

What Attributes Distinguish Quality Data Scientists From Others?

Top-notch candidates will have a blend of statistical, programming, and business skill sets with corresponding experience. At a minimum, an experienced data scientist will be proficient in four key competency areas:

- A pragmatic, statistical, and data-driven mentality: Handling data, especially big data, requires a foundation in statistics. Candidates must understand common pitfalls and technical risks, drawing on concepts from statistics and computer science, such as selection bias, survivorship bias, and Simpson’s paradox.

- Good communication and business understanding: Data science is highly interdisciplinary. Candidates should be able to translate business needs into practical solutions, present the insights gained, and explain answers in layperson’s terms. Soft skills are essential for communicating complex solutions and insights to both technical teams and stakeholders.

- Experience with programming languages and databases: To handle, analyze, and present data, data scientists must be proficient with a programming language (typically Python) and possess experience in querying databases (typically SQL databases, though NoSQL database skills may be required depending on your project).

- Experience with production data sets and models: High-quality candidates will have real-world experience with production data sets and models instead of having only used test data sets such as those found on Kaggle (i.e., data competition experience). Data competitions don’t teach all the skills needed to work with real-world data.
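To make one of the pitfalls named above concrete, here is a minimal Python sketch of Simpson’s paradox. The counts are made up purely for illustration, and the group and segment names are hypothetical: option A has the higher success rate in every segment, yet option B looks better once the segments are pooled, because the two options were applied to very different mixes of cases.

```python
# Illustrative (made-up) counts: (successes, trials) per option and segment.
results = {
    "A": {"small": (81, 87), "large": (192, 263)},
    "B": {"small": (234, 270), "large": (55, 80)},
}


def rate(successes, trials):
    """Success rate for a single (successes, trials) pair."""
    return successes / trials


def overall_rate(segments):
    """Success rate after pooling all segments of one option."""
    total_successes = sum(s for s, _ in segments.values())
    total_trials = sum(t for _, t in segments.values())
    return total_successes / total_trials


# Which option wins inside each segment?
per_segment_winner = {
    segment: max(results, key=lambda option: rate(*results[option][segment]))
    for segment in ("small", "large")
}

# Which option wins when the segments are pooled?
overall_winner = max(results, key=lambda option: overall_rate(results[option]))

print(per_segment_winner)  # A wins in both segments
print(overall_winner)      # B wins on the pooled numbers
```

A candidate with a sound statistical background should be able to explain why the pooled comparison is misleading here and which breakdown to trust.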

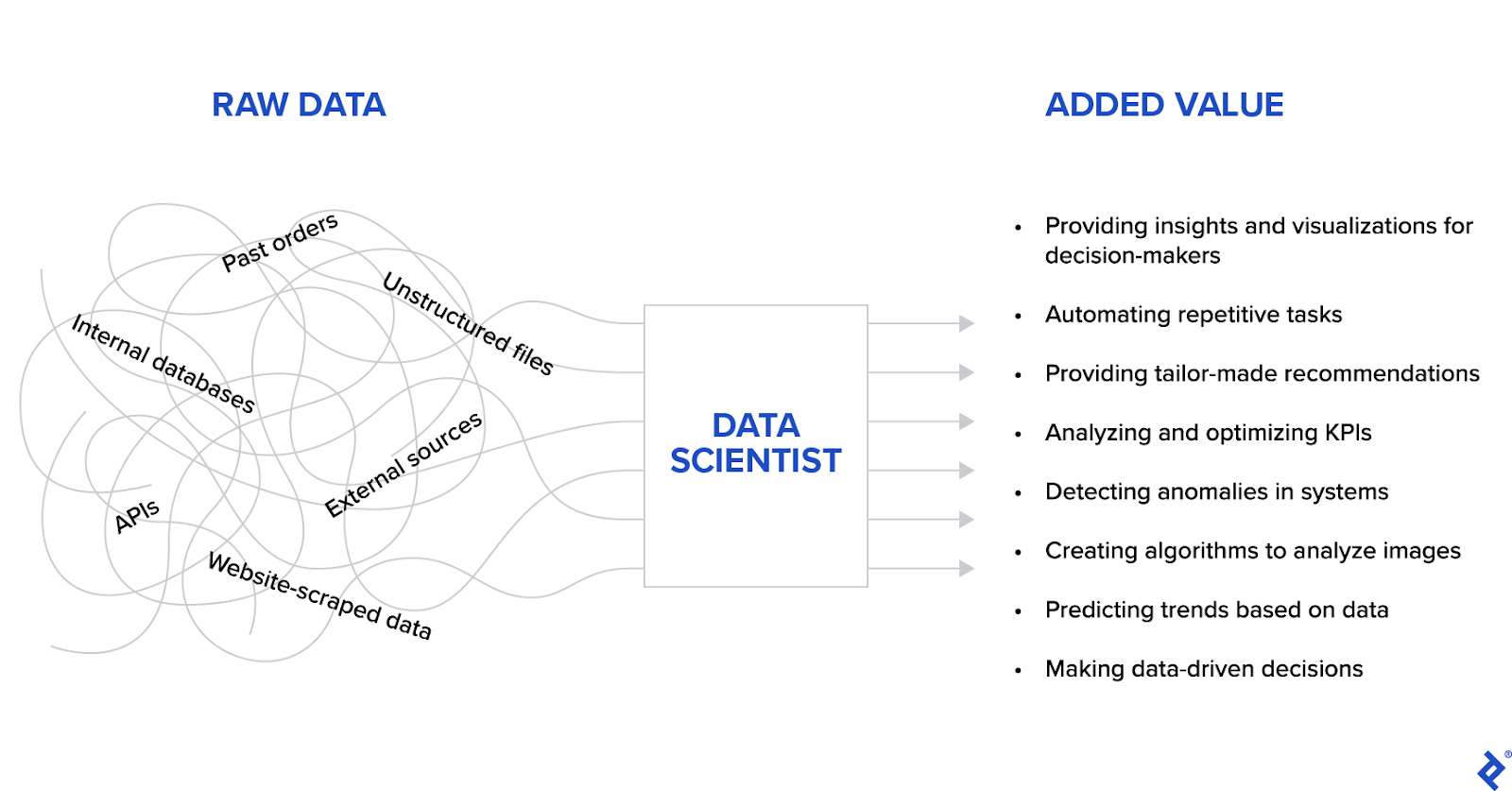

But what does a data scientist do, exactly? There is no simple answer. They are versatile, creative thinkers who can generate value from raw data in many ways—and they must have mastered many different concepts.

Let’s further break down the tangible data science skills required for success:

- Python: The ubiquitous language in data science and machine learning, supported by powerful libraries like pandas for data manipulation and NumPy for numerical computations.

- SQL: The language typically used to communicate with databases; most candidates should at least have rudimentary SQL experience.

- Statistics: The core mathematical foundation of data science, crucial for reducing biases, verifying conclusions, and deciding which model to use, including commonly used statistical analysis techniques such as regression and hypothesis testing.

- Data wrangling: The ability to transform raw data into a usable form; data is cleaned and organized during the extract, transform, and load (ETL) process.

- Data visualization: The visual presentation of data insights used to communicate key findings and verify results; visualizing and interpreting metrics specific to your problem is key to ensuring relevancy and avoiding harm.

- Machine learning: The ability to train models on past data to perform on unseen data; at a minimum, data scientists should know simple machine learning models, including techniques such as decision trees and neural networks.

- Cloud computing: A key component of modern data-driven businesses; platforms like Amazon Web Services (AWS), Google Cloud, or Azure allow scalable processing of big data and cutting-edge machine learning projects.

Finally, general software development skills like debugging and using version control tools (most commonly Git) are also mandatory for working with code.

How Can You Identify the Ideal Data Scientist for You?

There are multiple considerations when finding a data scientist who matches your project requirements. When working with complex data or on more technical efforts, including research and automation, you should focus on specialized candidates.

For all types of projects, to ensure you have a good fit, explain your problems, your business goals, and the data available, and then ask the candidate to describe their relevant experience.

Complex data—text, images, audio, video, and time-dependent data—should be treated carefully, as it is handled very differently from tabular data and requires special training and methods. In this case, a candidate should provide a detailed synopsis of similar projects they have worked on previously and how they will apply their skills to your project. You may also consider formal certifications, such as those from Microsoft, AWS, or SAS, to verify that candidates have completed recognized training programs.

If you are working with simpler data (e.g., structured, clean data), you may be able to meet your needs with a less technical data analyst. When should you hire for data science versus data analyst skills? This is a standing debate in the community, and there is no universal answer. However, some differences are generally agreed upon:

| Skill | Data Scientist | Data Analyst |
| --- | --- | --- |
| Programming | Has strong programming experience (typically Python) | May not possess knowledge of programming languages |
| Working with data types | Can work on raw, unstructured data | Usually works with structured, clean data only |
| Technical specializations | Builds processing pipelines and advanced models (e.g., prediction, classification, and automation) | Creates reports, visualizations, and insights aimed at nontechnical audiences |
| Collaboration | Primarily works with technical team members | Primarily works with business team members |

If your project includes advanced technical goals—performing task automation, solving open research problems, or implementing global business improvements (e.g., researching how statistical models or AI techniques improve business needs)—then your needs extend beyond simple data analysis, and you should focus on hiring data scientists.

Additionally, you will benefit from identifying the precise specialization that your project requires:

- Data mining specialists extract information from large data sets.

- Data engineering specialists format and structure data for analysis.

- Database management specialists organize data on a company-wide scale.

- Data visualization specialists prepare interactive visual representations of data.

- Machine learning specialists create advanced models to solve complex problems.

Commonly, multiple data science experts across varying specializations will work together to achieve a team’s goals.

How Do You Write a Data Science Job Description?

When you have identified the skills necessary for your project-specific requirements, the next step is to write your job description. In order to attract the best candidates, a job description should include:

- The data at hand, problem statement, and project goals (e.g., analysis, visualization, prediction model creation, data cleaning, etc.).

- The technology stack and available resources, including the project’s software languages and frameworks, cloud providers required, and database type.

- The flexibility data scientists will have in how they can approach the problem, which models they can use, and what the data processing pipeline might look like; good candidates will be able to suggest different approaches tailored to your problem.

You may reference a data scientist job description template as a starting point and adjust it depending on your needs to pinpoint the best data scientist for the job.

Keep in mind that this is a highly technical role, and it is important to verify a candidate’s background with multiple assessment rounds once you have identified suitable applicants from your job posting. It may be helpful to prepare a screening test with standard programming and theoretical questions before interviewing. Also, you may want to vet senior candidates with a take-home project with deliverables relevant to your company’s goals.

What Are the Most Important Data Science Interview Questions?

Your selected data science interview questions will be informed primarily by your business requirements. However, there are some standard questions all candidates should answer correctly before moving on to your project-tailored questions.

You may start with more basic concepts as a warm-up. A candidate who cannot answer these questions may not have an adequate background to move forward:

What is a graph, and why is it useful?

A graph (or network) is a data structure generally used to make data analysis and visualization easier. It represents information using nodes connected by edges:

- Nodes represent entities such as a person, an address, or a movie listing.

- Edges connect nodes; they represent relationships between nodes.

Let’s consider a simple example: A graph might have a user node connected to other nodes representing related user information (e.g., the user’s residence country or several of the user’s topics of interest). Businesses can use this graph and all of its information for applications such as producing recommendations tailored to each user.
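The user graph described above could be sketched in Python roughly as follows. This is only an illustration: the node names, the relationship labels, and the `recommend_topics` helper are all hypothetical, and a production system would more likely rely on a graph database or a dedicated library.

```python
from collections import defaultdict

# Hypothetical edges: (source node, relationship, target node).
edges = [
    ("user:alice", "lives_in", "country:FR"),
    ("user:alice", "interested_in", "topic:cycling"),
    ("user:alice", "interested_in", "topic:cooking"),
    ("user:bob", "lives_in", "country:FR"),
    ("user:bob", "interested_in", "topic:cycling"),
    ("user:bob", "interested_in", "topic:chess"),
]

# Adjacency structure: node -> set of (relationship, neighbor) pairs.
graph = defaultdict(set)
for source, relation, target in edges:
    graph[source].add((relation, target))
    graph[target].add((relation, source))  # stored both ways so we can walk back


def topics_of(user):
    """Topics directly connected to a user node."""
    return {node for relation, node in graph[user] if relation == "interested_in"}


def recommend_topics(user):
    """Suggest topics liked by users who share at least one topic with `user`."""
    own_topics = topics_of(user)
    suggestions = set()
    for topic in own_topics:
        for relation, other in graph[topic]:
            if relation == "interested_in" and other != user:
                suggestions |= topics_of(other) - own_topics
    return suggestions


print(recommend_topics("user:alice"))
```

Here the recommendation simply follows two hops of edges (user to topic to other user), which is the kind of traversal a candidate should be able to describe.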

How is SQL used in data science?

SQL is the standard language used to make queries when working with relational databases. It can make simple queries (e.g., fetching all users older than 21) and complex queries that aggregate or calculate statistical values and other counts. For example, a more complex query might identify all users older than 16, group them by their jobs, and return their sorted count, average credit score, and average salary.
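As a rough sketch, the two queries described above might look like this when run against an in-memory SQLite database from Python. The `users` table, its columns, and the sample rows are assumptions made for the example, not a schema from any real project.

```python
import sqlite3

# Hypothetical table and rows, created in memory for the example.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users "
    "(name TEXT, age INTEGER, job TEXT, credit_score REAL, salary REAL)"
)
conn.executemany(
    "INSERT INTO users VALUES (?, ?, ?, ?, ?)",
    [
        ("Ana", 34, "engineer", 700, 90000),
        ("Ben", 29, "engineer", 650, 80000),
        ("Cy", 45, "teacher", 720, 50000),
        ("Di", 15, "student", 600, 0),
        ("Eli", 22, "engineer", 690, 70000),
    ],
)

# Simple query: all users older than 21.
simple_rows = conn.execute(
    "SELECT name FROM users WHERE age > 21 ORDER BY name"
).fetchall()

# More complex query: users older than 16, grouped by job,
# with the count and averages per group, sorted by count.
complex_rows = conn.execute(
    """
    SELECT job,
           COUNT(*)          AS user_count,
           AVG(credit_score) AS avg_credit_score,
           AVG(salary)       AS avg_salary
    FROM users
    WHERE age > 16
    GROUP BY job
    ORDER BY user_count DESC
    """
).fetchall()

print(simple_rows)
print(complex_rows)
```

A strong candidate should be able to write queries like these from a verbal description and explain what `GROUP BY` and the aggregate functions do.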

After verifying a candidate’s knowledge of the fundamentals, you should assess their understanding of skills related to working with large amounts of data:

What can you do with data wrangling?

Data wrangling makes data sets easier to analyze and interpret. It is a necessary step when the starting data is not well organized or lacks a standard structure. It typically formats values in a standard way, such as putting all dates and times in ISO 8601 format or organizing all phone numbers with prefixes. Data wrangling can also assist with data validation: For example, it could handle a case where a person’s age is 734 years or has a negative value.
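A minimal Python sketch of these two wrangling steps, standardizing dates to ISO 8601 and flagging implausible ages, could look like the following. The sample records, the list of accepted input date formats, and the 0 to 120 age range are assumptions chosen for illustration; real data would need its own rules.

```python
from datetime import datetime

# Hypothetical raw records with inconsistent date formats and implausible ages.
raw_records = [
    {"name": "Ana", "signup": "03/15/2021", "age": 34},
    {"name": "Ben", "signup": "2021-04-02", "age": 734},
    {"name": "Cy", "signup": "7 May 2021", "age": -3},
]

# Assumed input formats; extend this tuple for the formats found in real data.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d %B %Y")


def to_iso_date(text):
    """Return the date as an ISO 8601 string, or None if no format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def valid_age(age, max_age=120):
    """Return the age if it is plausible, otherwise None (treated as missing)."""
    return age if 0 <= age <= max_age else None


clean_records = [
    {
        "name": record["name"],
        "signup": to_iso_date(record["signup"]),
        "age": valid_age(record["age"]),
    }
    for record in raw_records
]

for record in clean_records:
    print(record)
```

Replacing invalid values with `None` rather than dropping the rows is one design choice among several; a candidate should be able to discuss when to drop, correct, or impute such values.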

What are the benefits of cloud computing in data processing and analysis?

In short, cloud computing reduces machine learning costs. Machine learning models are typically resource intensive in the training phase. Though any machine (e.g., a laptop) can be used for testing, once models are validated and ready for full-scale training, they require much more computation time and power and, in many cases, specialized hardware that is extremely expensive to buy. Cloud computing allows companies to rent the hardware (and run the computation in the cloud), which makes training a model much more affordable.

We have covered basic questions applicable to many projects that act as a starting point and demonstrate the level of detail to expect in a candidate’s answers. However, every candidate should be skilled in various programming languages and statistical concepts. You should cherry-pick additional questions from the following guides based on your requirements:

- 11 Essential Python Interview Questions and Answers

- 41 Essential SQL Interview Questions and Answers

- 10 Essential Data Analysis Interview Questions and Answers

- 10 Essential Machine Learning Interview Questions and Answers

Data scientists serve many different roles depending on a company’s needs; for such a broad role, there is no one-size-fits-all list of interview questions applicable to every project.

Why Do Companies Hire Data Scientists?

Modern companies collect and process large amounts of data daily, whether from their internal processes, their customers, or other external sources. After being processed, the data is stored and often left unused. If you sell any product, you likely have years’ worth of order history records lying around. Past data yields future value, given the right data scientist.

The short answer to the question “When should I hire a data scientist?” is “Almost always,” especially when you are working with large or complex data sets and want to make data-driven business decisions. In smaller businesses, a data scientist can set up a data pipeline and provide guidelines on collecting data based on the company’s future endeavors. For companies collecting larger amounts of data, a data scientist can provide valuable insights, suggest data-driven decisions, and train prediction models.

Since data is highly company-specific and business concerns can vary widely, it’s difficult to make generalizations about the role. However, we can examine a few example scenarios:

- Creating a system capable of suggesting tailored recommendations for past and future clients.

- Predicting required maintenance, reducing unexpected repair costs.

- Automating tasks currently done manually, saving countless hours of work per year.

- Analyzing social media data to monitor brand sentiment and improve customer engagement strategies.

Data science is increasingly becoming an essential aspect of business decision-making, automation, and analysis. It is wise to leverage all available data to provide better customer experiences, increase sales, and drive innovation. Businesses that don’t maximize the potential of data will be left behind, and hiring the best data scientists will allow your products to yield more value than those of competitors.

The technical content presented in this article was reviewed by Amanbir Singh.
