HSA For Developers: Heterogeneous Computing For The Masses

HSA is a set of standards and specifications designed to allow further integration of CPUs and GPUs on the same bus. This is not an entirely new concept, but HSA takes it to the next level. HSA would effectively take the developer out of the equation, at least when it comes to assigning different loads to different processing cores.

In this post, Toptal Technical Editor and resident chip geek Nermin Hajdarbegovic takes a closer look at HSA and the future of heterogeneous computing in general. Get ready for some good news, but don’t forget to brace for bad news first.

Nermin Hajdarbegovic

As a veteran tech writer, Nermin helped create online publications covering everything from the semiconductor industry to cryptocurrency.

What do chipmakers like AMD, ARM, Samsung, MediaTek, Qualcomm, and Texas Instruments have in common? Well, apart from the obvious similarities between these chip-making behemoths, they also happen to be founders of the HSA Foundation. What’s HSA, and why does it need a foundation backed by industry heavyweights?

In this post I will try to explain why HSA could be a big deal in the near future, so I’ll start with the basics: What is HSA and why should you care?

HSA stands for Heterogeneous System Architecture, which sounds kind of boring, but trust me, it could become very exciting, indeed. HSA is essentially a set of standards and specifications designed to allow further integration of CPUs and GPUs on the same bus. This is not an entirely new concept; desktop CPUs and mobile SoCs have been employing integrated graphics and using a single bus for years, but HSA takes it to the next level.

Rather than simply using the same bus and shared memory for the CPU and GPU, HSA also allows these two vastly different architectures to work in tandem and share tasks. It might not sound like a big deal, but if you take a closer look, and examine the potential long-term effects of this approach, it starts to look very “sweet” in a technical sense.

Oh No! Here’s Another Silly Standard Developers Have To Implement

Yes and no.

The idea of sharing the same bus is not new, and neither is the idea of employing highly parallelised GPUs for certain compute tasks (which don’t involve rendering headshots). It’s been done before, and I guess most of our readers are already familiar with GPGPU standards like CUDA and OpenCL.

However, unlike the CUDA or OpenCL approach, HSA would effectively take the developer out of the equation, at least when it comes to assigning different loads to different processing cores. The hardware would decide when to offload calculations from the CPU to the GPU and vice versa. HSA is not supposed to replace established GPGPU programming languages like OpenCL, as they can be implemented on HSA hardware as well.

That’s the whole point of HSA: It’s supposed to make the whole process easy, even seamless. Developers won’t necessarily have to think about offloading calculations to the GPU. The hardware will do it automatically.

To accomplish this, HSA will have to enjoy support from multiple chipmakers and hardware vendors. While the list of HSA supporters is impressive, Intel is conspicuously absent from this veritable who’s who of the chip industry. Given Intel’s market share in both desktop and server processor markets, this is a big deal. Another name you won’t find on the list is Nvidia, which is focused on CUDA, and is currently the GPU compute market leader.

However, HSA is not designed solely for high-performance systems and applications running on hardware that usually sports an Intel Inside sticker. HSA can also be used in energy-efficient mobile devices, where Intel has a negligible market share.

So, HSA is supposed to make life easier, but is it relevant yet? Will it catch on? This is not a technological question, but an economic one. It will depend on the invisible hand of the market. So, before we proceed, let’s start by taking a closer look at where things stand right now, and how we got here.

HSA Development, Teething Problems And Adoption Concerns

As I said in the introduction, HSA is not exactly a novel concept. It was originally envisioned by Advanced Micro Devices (AMD), which had a vested interest in getting it off the ground. A decade ago, AMD bought graphics specialist ATI, and since then the company has been trying to leverage its access to cutting-edge GPU technology to boost overall sales.

On the face of it, the idea was simple enough: AMD would not only continue developing and manufacturing cutting-edge discrete GPUs, it would also integrate ATI’s GPU technology in its processors. AMD’s marketing department called the idea ‘Fusion’, and HSA was referred to as Fusion System Architecture (FSA). Sounds great, right? Getting a decent x86 processor with good integrated graphics sounded like a good idea, and it was.

Unfortunately, AMD ran into a number of issues along the way; I’ll single out a few of them:

- Any good idea in tech is bound to be picked up by competitors, in this case – Intel.

- AMD lost the technological edge to Intel and found it increasingly difficult to compete in the CPU market due to Intel’s foundry technology lead.

- AMD’s execution was problematic and many of the new processors were late to market. Others were scrapped entirely.

- The economic meltdown of 2008 and subsequent mobile revolution did not help.

These, and a number of other factors, conspired to blunt AMD’s edge and prevent market adoption of its products and technologies. AMD started rolling out processors with the new generation of integrated Radeon graphics in mid-2011, and it started calling them Accelerated Processing Units (APUs) instead of CPUs.

Marketing aside, AMD’s first generation of APUs (codenamed Llano) was a flop. The chips were late and could not keep up with Intel’s offerings. Serious HSA features were not included either, but AMD started adding them in its 2012 platform (Trinity, which was essentially Llano done right). The next step came in 2014, with the introduction of Kaveri APUs, which supported heterogeneous memory management (the GPU IOMMU and CPU MMU shared the same address space). Kaveri also brought about more architectural integration, enabling coherent memory between the CPU and GPU (AMD calls it hUMA, which stands for Heterogeneous Uniform Memory Access). The subsequent Carrizo refresh added even more HSA features, enabling the processor to context switch compute tasks on the GPU and do a few more tricks.

The upcoming Zen CPU architecture, and the APUs built on top of it, promises to deliver even more, if and when it shows up on the market.

So what’s the problem?

AMD was not the only chipmaker to realise the potential of on-die GPUs. Intel started adding them to its Core CPUs as well, as did ARM chipmakers, so integrated GPUs are currently used in virtually every smartphone SoC, plus the vast majority of PCs/Macs. In the meantime, AMD’s position in the CPU market was eroded. The market share slump made AMD’s platforms less appealing to developers, businesses, and even consumers. There simply aren’t that many AMD-based PCs on the market, and Apple does not use AMD processors at all (although it did use AMD graphics, mainly due to OpenCL compatibility).

AMD no longer competes with Intel in the high-end CPU market, but even if it did, it wouldn’t make much of a difference in this respect. People don’t buy $2,000 workstations or gaming PCs to use integrated graphics. They use pricey, discrete graphics, and don’t care much about energy efficiency.

How About Some HSA For Smartphones And Tablets?

But, wait. What about mobile platforms? Couldn’t AMD just roll out similar solutions for smartphone and tablet chips? Well, no, not really.

You see, a few years after the ATI acquisition, AMD found itself in a tough financial situation, compounded by the economic crisis, so it decided to sell off its Imageon mobile GPU division to Qualcomm. Qualcomm renamed the products Adreno (anagram of Radeon), and went on to become the dominant player in the smartphone processor market, using freshly repainted in-house GPUs.

As some of you may notice, selling a smartphone graphics outfit just as the smartphone revolution was about to kick off does not look like a brilliant business move, but I guess hindsight is always 20/20.

HSA used to be associated solely with AMD and its x86 processors, but this is no longer the case. In fact, if all HSA Foundation members started shipping HSA-enabled ARM smartphone processors, they would outsell AMD’s x86 processors several fold, both in terms of revenue and units shipped. So what happens if they do? What would that mean for the industry and developers?

Well, for starters, smartphone processors already rely on heterogeneous computing, sort of. Heterogeneous computing usually refers to the concept of using different architectures in a single chip, and considering all the components found on today’s highly integrated SoCs, this could be a very broad definition. As a result, nearly every SoC may be considered a heterogeneous computing platform, depending on one’s standards. Sometimes, people even refer to different processors based on the same instruction set as a heterogeneous platform (for example, mobile chips with ARM Cortex-A57 and A53 cores, both of which are based on the 64-bit ARMv8 instruction set).

Many observers agree that most ARM-based processors may now be considered heterogeneous platforms, including Apple A-series chips, Samsung Exynos SoCs and similar processors from other vendors, namely big players like Qualcomm and MediaTek.

But why would anyone need HSA on smartphone processors? Isn’t the whole point of using GPUs for general computing to deal with professional workloads, not Angry Birds and Uber?

Yes it is, but that does not mean that a nearly identical approach can’t be used to boost efficiency, which is a priority in mobile processor design. So, instead of crunching countless parallelized tasks on a high-end workstation, HSA could also be used to make mobile processors more efficient and versatile.

Few people take a close look at these processors. They usually check the spec sheet when they’re buying a new phone and that’s it: They look at the numbers and brands. They usually don’t look at the SoC die itself, which tells us a lot, and here is why: GPUs on high-end smartphone processors take up more silicon real estate than CPUs. Considering they’re already there, it would be nice to put them to good use in applications other than gaming, wouldn’t it?

A hypothetical, fully HSA-compliant smartphone processor could allow developers to tap this potential without adding much to the overall production costs, implement more features, and boost efficiency.

Here is what HSA could do for smartphone processors, in theory at least:

- Improve efficiency by transferring suitable tasks to the GPU.

- Boost performance by offloading the CPU in some situations.

- Utilize the memory bus more effectively.

- Potentially reduce chip manufacturing costs by tapping more silicon at once.

- Introduce new features that could not be handled by the CPU cores in an efficient way.

- Streamline development by virtue of standardisation.

Sounds nice, especially when you consider developers are unlikely to waste a lot of time on implementation. That’s the theory, but we will have to wait to see it in action, and that may take a while.

How Does HSA Work Anyway?

I already outlined the basics in the introduction, and I am hesitant to go into too much detail for a couple of reasons: Nobody likes novellas published on a tech blog, and HSA implementations can differ.

Therefore, I will try to outline the concept in a few hundred words.

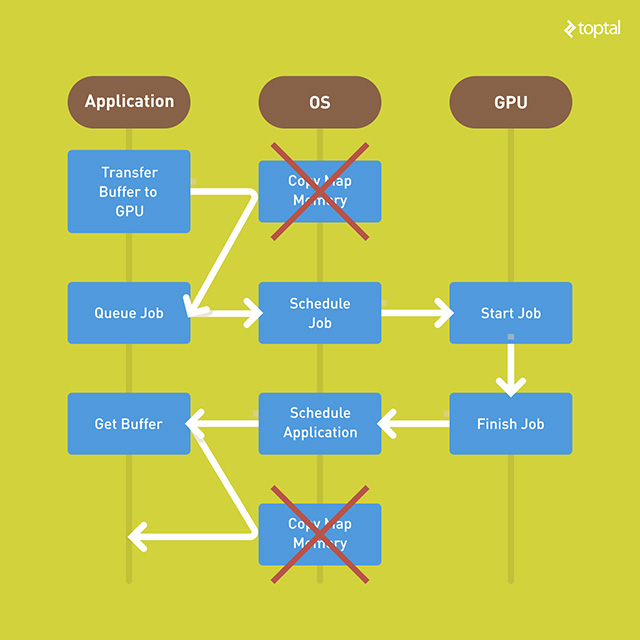

On a standard system, an application would offload calculations to the GPU by transferring the buffers to the GPU, which would involve a CPU call prior to queuing. The CPU would then schedule the job and pass it to the GPU, which would pass it back to the CPU upon completion. Then the application would get the buffer, which would again have to be mapped by the CPU before it is ready. As you can see, this approach involves a lot of back-and-forth.

On an HSA system, the application would queue the job, the HSA CPU would take over, hand it off to the GPU, get it back, and get it to the application. Done.
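To make the difference between the two flows a bit more tangible, here is a toy Python model. It does not use any real HSA runtime or GPU API: `run_copy_based`, `run_shared_memory`, and `TransferLog` are hypothetical names invented for this sketch, the "GPU kernel" is an ordinary Python function, and the 4-byte element size is an assumption. It only counts hand-offs and bytes copied under those assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class TransferLog:
    """Records the hand-offs and bytes copied during one offloaded job."""
    steps: list = field(default_factory=list)
    bytes_copied: int = 0


def square_all(values):
    """Stand-in for a GPU kernel: the same operation on every element."""
    for i, v in enumerate(values):
        values[i] = v * v


def run_copy_based(host_buffer, log):
    """Toy model of the traditional path: copy in, compute, copy out."""
    log.steps.append("CPU maps the buffer and copies it to GPU memory")
    device_buffer = list(host_buffer)            # host -> device copy
    log.bytes_copied += 4 * len(host_buffer)     # assumed 4-byte elements
    log.steps.append("CPU schedules the job on the GPU")
    square_all(device_buffer)
    log.steps.append("GPU hands the job back to the CPU")
    log.steps.append("CPU copies the result back and maps it")
    host_buffer[:] = device_buffer               # device -> host copy
    log.bytes_copied += 4 * len(host_buffer)
    return host_buffer


def run_shared_memory(host_buffer, log):
    """Toy model of the HSA-style path: the GPU works on the same buffer."""
    log.steps.append("application queues the job")
    square_all(host_buffer)                      # no copy: same memory
    log.steps.append("GPU signals completion")
    return host_buffer


copy_log, shared_log = TransferLog(), TransferLog()
copy_result = run_copy_based([1, 2, 3, 4], copy_log)
shared_result = run_shared_memory([1, 2, 3, 4], shared_log)

print(copy_result == shared_result)                    # same answer either way
print(len(copy_log.steps), copy_log.bytes_copied)      # hand-offs, bytes moved
print(len(shared_log.steps), shared_log.bytes_copied)
```

Both paths produce the same result; the only thing that changes in this model is how many hand-offs and copies it takes to get there, which is the overhead HSA is meant to cut.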

This is made possible by sharing system memory directly between the CPU and GPU, although other computing units could be involved too (DSPs for example). To accomplish this level of memory integration, HSA employs a virtual address space for compute devices. This means CPU and GPU cores can access the memory on equal terms, as long as they share page tables, allowing different devices to exchange data through pointers.

This is obviously great for efficiency, because it is no longer necessary to carve out separate memory pools for the GPU and the CPU, each with its own virtual memory. Thanks to unified virtual memory, both of them can access the system memory according to their needs, ensuring superior resource utilization and more flexibility.
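Why do shared pointers matter? A rough way to picture it is a pointer-based data structure, such as a linked list. In the sketch below (plain Python, with hypothetical helper names and no real device involved), the "separate address spaces" path has to pack the list into a flat array before the device can touch it, while the "shared address space" path simply follows the references the host created.

```python
class Node:
    """A pointer-based structure: each node refers to the next one."""
    def __init__(self, value, next_node=None):
        self.value = value
        self.next = next_node


def build_list(values):
    """Build a singly linked list on the 'host'."""
    head = None
    for v in reversed(values):
        head = Node(v, head)
    return head


def flatten_for_transfer(head):
    """Separate address spaces: host pointers mean nothing on the other
    side, so the structure is packed into a flat array first."""
    flat = []
    node = head
    while node is not None:
        flat.append(node.value)
        node = node.next
    return flat


def device_sum_flat(flat):
    """'Device' code that only understands the packed copy."""
    return sum(flat)


def device_sum_shared(head):
    """Shared address space: the 'device' follows the same references
    the host created, with no packing step."""
    total = 0
    node = head
    while node is not None:
        total += node.value
        node = node.next
    return total


head = build_list([3, 5, 8])
via_copy = device_sum_flat(flatten_for_transfer(head))
via_pointers = device_sum_shared(head)
print(via_copy, via_pointers)  # both paths compute the same sum
```

The packing step is cheap here, but for large trees or graphs it is exactly the kind of work a shared address space is supposed to make unnecessary.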

Imagine a low-power system with 4GB of RAM, 512MB of which is allocated for the integrated GPU. This model is usually not flexible, and you can’t change the amount of GPU memory on the fly. You’re stuck with 256MB or 512MB, and that’s it. With HSA, you can do whatever the hell you want: If you offload a lot of stuff to the GPU, and need more RAM for the GPU, the system can allocate it. So, in graphics-bound applications, with a lot of hi-res assets, the system could end up allocating 1GB or more RAM to the GPU, seamlessly.
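The arithmetic behind that example is easy to sketch. The two functions below are a back-of-the-envelope model, not a description of how any real driver or HSA runtime allocates memory; the function names and the returned fields are made up for illustration, and the numbers are the 4GB/512MB figures from the paragraph above.

```python
def fixed_carveout(total_mb, gpu_reserved_mb, gpu_demand_mb):
    """Static split: the GPU gets a fixed slice, whatever it needs."""
    gpu_usable = min(gpu_demand_mb, gpu_reserved_mb)
    return {
        "gpu_usable_mb": gpu_usable,
        "gpu_shortfall_mb": gpu_demand_mb - gpu_usable,
        "cpu_available_mb": total_mb - gpu_reserved_mb,
    }


def unified_pool(total_mb, gpu_demand_mb):
    """Unified model: the GPU takes what it needs from one shared pool."""
    gpu_usable = min(gpu_demand_mb, total_mb)
    return {
        "gpu_usable_mb": gpu_usable,
        "gpu_shortfall_mb": gpu_demand_mb - gpu_usable,
        "cpu_available_mb": total_mb - gpu_usable,
    }


TOTAL_MB = 4096      # 4GB system from the example
RESERVED_MB = 512    # fixed GPU carve-out

# Graphics-heavy case: the GPU wants 1GB.
heavy_fixed = fixed_carveout(TOTAL_MB, RESERVED_MB, 1024)
heavy_unified = unified_pool(TOTAL_MB, 1024)

# Light case: the GPU only needs 128MB.
light_fixed = fixed_carveout(TOTAL_MB, RESERVED_MB, 128)
light_unified = unified_pool(TOTAL_MB, 128)

print(heavy_fixed)
print(heavy_unified)
print(light_fixed)
print(light_unified)
```

In the heavy case the fixed split leaves the GPU 512MB short, while the unified pool covers the full 1GB. In the light case the fixed split strands 384MB that the CPU could have used.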

All things being equal, HSA and non-HSA systems will share the same memory bandwidth, have access to the same amount of memory, but the HSA system could end up using it much more efficiently, thus improving performance and reducing power consumption. It’s all about getting more for less.

What Would Heterogeneous Computing Be Good For?

The simple answer? Heterogeneous computing, or HSA as one of its implementations, should be a good choice for all compute tasks better suited to GPUs than CPUs. But what exactly does that mean? What are GPUs good at anyway?

Modern, integrated GPUs aren’t very powerful compared to discrete graphics (especially high-end gaming graphics cards and workstation solutions), but they are vastly more powerful than their predecessors.

If you haven’t been keeping track, you might assume that these integrated GPUs are a joke, and for years they were just that: graphics for cheap home and office boxes. However, this started changing at the turn of the decade as integrated GPUs moved from the chipset into the CPU package and die, becoming truly integrated.

While still woefully underpowered compared to flagship GPUs, even integrated GPUs pack a lot of potential. Like all GPUs, they excel at single instruction, multiple data (SIMD) and single instruction, multiple threads (SIMT) loads. If you need to crunch a lot of numbers in repetitive, parallelised loads, GPUs should help. CPUs, on the other hand, are still better at heavy, branched workloads.

That’s why CPUs have fewer cores, usually between two and eight, and the cores are optimized for sequential processing. GPUs tend to have dozens, hundreds, and in flagship discrete graphics cards, thousands of smaller, more efficient cores. GPU cores are designed to handle multiple tasks simultaneously, but these individual tasks are much simpler than those handled by the CPU. Why burden the CPU with such loads, if the GPU can handle them with superior efficiency and/or performance?
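Here is a small, purely illustrative Python sketch of the two kinds of workload. Nothing in it runs on a GPU; the function names are hypothetical. The first function is a classic data-parallel operation (a*x + y on every element), where chunks can be processed in any order, which is what lets hundreds of simple cores share the work. The second has branches and a loop-carried dependency, so every step has to wait for the previous one.

```python
def saxpy_in_chunks(a, xs, ys, chunk_size, reverse_chunks=False):
    """Data-parallel work: every element is independent, so the chunks
    can be processed in any order (or all at once on parallel lanes)."""
    out = [None] * len(xs)
    starts = list(range(0, len(xs), chunk_size))
    if reverse_chunks:
        starts.reverse()
    for start in starts:
        for i in range(start, min(start + chunk_size, len(xs))):
            out[i] = a * xs[i] + ys[i]
    return out


def branchy_recurrence(values):
    """Branch-heavy work with a loop-carried dependency: each step needs
    the previous result, so it cannot be split across many simple cores."""
    state = 0
    trace = []
    for v in values:
        if state % 2 == 0:
            state = state + v
        else:
            state = state * 2 - v
        trace.append(state)
    return trace


xs = list(range(1, 9))
ys = [10] * 8

in_order = saxpy_in_chunks(2, xs, ys, chunk_size=2)
out_of_order = saxpy_in_chunks(2, xs, ys, chunk_size=2, reverse_chunks=True)
print(in_order == out_of_order)        # processing order does not matter

print(branchy_recurrence([1, 2, 3]))
print(branchy_recurrence([3, 2, 1]))   # same inputs, different order, different trace
```

The first kind of loop is the one you want to hand to the GPU; the second is better left to a CPU core.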

But if GPUs are so bloody good at it, why didn’t we start using them as general computing devices years ago? Well, the industry tried, but progress was slow and limited to certain niches. The concept was originally called General Purpose Computing on Graphics Processing Units (GPGPU). In the old days, potential was limited, but the GPGPU concept was sound and was subsequently embraced and standardized in the form of Nvidia’s CUDA and OpenCL, which was initiated by Apple and is maintained by the Khronos Group.

CUDA and OpenCL made a huge difference since they allowed programmers to use GPUs in a different, and much more effective, way. In practice, however, they split along vendor lines. CUDA runs only on Nvidia hardware, while OpenCL, although an open standard, was championed mainly by ATI/AMD (and was embraced by Apple). Microsoft’s DirectCompute API was released with DirectX 11, and allowed for a vendor-agnostic approach (but was limited to Windows).

Let’s sum up by listing a few applications for GPU computing:

- Traditional high-performance computing (HPC) in the form of HPC clusters, supercomputers, GPU clusters for compute loads, grid computing, and load balancing.

- Loads that require physics, which can, but don’t have to, involve gaming or graphics in general. GPUs can also be used to handle fluid dynamics calculations, statistical physics, and a few exotic equations and algorithms.

- Geometry, and almost everything related to it, including transparency computations, shadows, collision detection, and so on.

- Audio processing, using a GPU in lieu of DSPs, for speech processing, analogue signal processing, and more.

- Digital image processing is what GPUs are designed for (obviously), so they can be used to accelerate image and video post-processing and decoding. If you need to decode a video stream and apply a filter, even an entry-level GPU will wipe the floor with a CPU.

- Scientific computing, including climate research, astrophysics, quantum mechanics, molecular modelling, and so on.

- Other computationally intensive tasks, namely encryption/decryption. Whether you need to “mine” cryptocurrencies, encrypt or decrypt your confidential data, crack passwords or detect viruses, the GPU can help.

This is not a complete list of potential GPU compute applications, but readers unfamiliar with the concept should get a general idea of what makes GPU compute different. I also left out obvious applications, such as gaming and professional graphics.

A comprehensive list does not exist, anyway, because GPU compute can be used for all sorts of stuff, ranging from finance and medical imaging, to database and statistics loads. You’re limited by your own imagination. So-called computer vision is another up and coming application. A capable GPU is a good thing to have if you need to “teach” a drone or driverless car to avoid trees, pedestrians, and other vehicles.

Feel free to insert your favourite Lindsay Lohan joke here.

Developing For HSA: Time For Some Bad News

This may be my personal opinion rather than fact, but I am an HSA believer. I think the concept has a lot of potential, provided it is implemented properly and gains enough support among chipmakers and developers. However, progress has been painfully slow, or maybe that’s just my feeling, with a pinch of wishful thinking. I just like to see new tech in action, and I’m anything but a patient individual.

The trouble with HSA is that it’s not there, yet. That does not mean it won’t take off, but it might take a while. After all, we are not just talking about new software stacks; HSA requires new hardware to do its magic. The problem with this is that much of this hardware is still on the drawing board, but we’re getting there. Slowly.

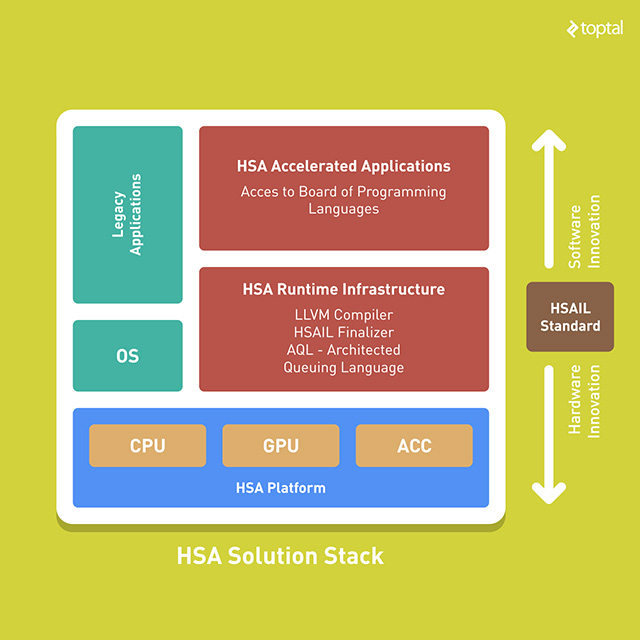

This does not mean developers aren’t working on HSA-related projects, but there’s not a lot of interest, or progress, for that matter. Here are a few resources you should check out if you want to give HSA a go:

- HSA Foundation @ GitHub is, obviously, the place for HSA-related resources. The HSA Foundation publishes and maintains a number of projects on GitHub, including debuggers, compilers, vital HSAIL tools, and much more. Most resources are designed for AMD hardware.

- HSAIL resources provided by AMD allow you to get a better idea of the HSAIL spec. HSAIL stands for HSA Intermediate Language, and it’s basically the key tool for back-end compiler writers and library writers who want to target HSA devices.

- HSA Programmer’s Reference Manual (PDF) includes the complete HSAIL spec, plus a comprehensive explanation of the intermediate language.

- HSA Foundation resources are limited for the time being and the foundation’s Developers Program is “coming soon,” but there are a number of official developer tools to check out. More importantly, they will give you a good idea of the stack you’ll need to get started.

- The official AMD Blog features some useful HSA content as well.

This should be enough to get you started, provided you are the curious type. The real question is whether or not you should bother to begin with.

The Future Of HSA And GPU Computing

Whenever we cover an emerging technology, we are confronted with the same dilemma: Should we tell readers to spend time and resources on it, or to keep away, taking the wait and see approach?

I have already made it clear that I am somewhat biased because I like the general concept of GPU computing, but most developers can do without it, for now. Even if it takes off, HSA will have limited appeal and won’t concern most developers. However, it could be important down the road. Unfortunately for AMD, it’s unlikely to be a game-changer in the x86 processor market, but it could prove more important in ARM-based mobile processors. It may have been AMD’s idea, but companies such as Qualcomm and MediaTek are better positioned to bring HSA-enabled hardware to hundreds of millions of users.

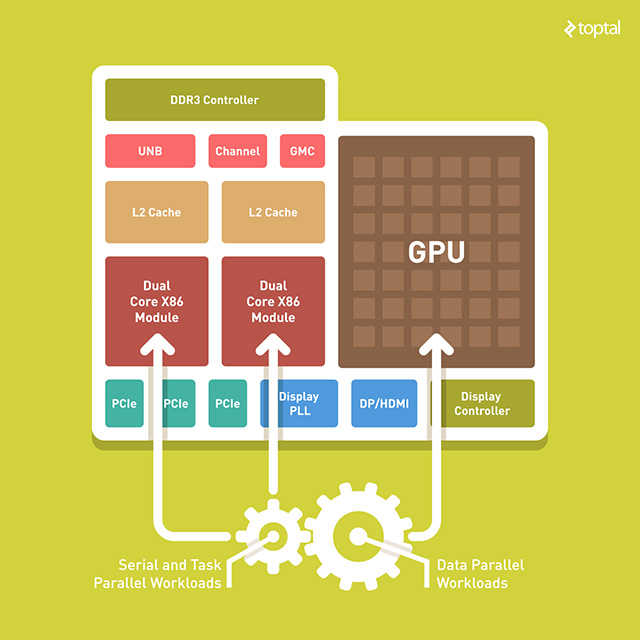

It has to be a perfect symbiosis of software and hardware. If mobile chipmakers go crazy over HSA, it would be a big deal. A new generation of HSA chips would blur the line between CPU and GPU cores. They would share the same memory bus on equal terms, and I think companies will start marketing them differently. For example, AMD is already marketing its APUs as “compute devices” comprised of different “compute cores” (CPUs and GPUs).

Mobile chips could end up using a similar approach. Instead of marketing a chip with eight or ten CPU cores, and such and such GPU, chipmakers could start talking about clusters, modules and units. So, a processor with four small and four big CPU cores would be a “dual-cluster” or “dual-module” processor, or a “tri-cluster” or “quad-cluster” design, if they take into account GPU cores. A lot of tech specs tend to become meaningless over time, for example, the DPI on your office printer, or megapixel count on your cheap smartphone camera.

It’s not just marketing though. If GPUs become as flexible as CPU cores, and capable of accessing system resources on equal terms as the CPU, why should we even bother calling them by their real name? Two decades ago, the industry stopped using dedicated mathematical coprocessors (FPUs) when they became a must-have component of every CPU. Just a couple of product cycles later, we forgot they ever existed.

Keep in mind that HSA is not the only way of tapping GPUs for computation.

Intel and Nvidia are not on board, and their approach is different. Intel has quietly ramped up GPU R&D investment in recent years, and its latest integrated graphics solutions are quite good. As on-die GPUs become more powerful and take up more silicon real estate, Intel will have to find more ingenious ways of using them for general computing.

Nvidia, on the other hand, pulled out of the integrated graphics market years ago (when it stopped producing PC chipsets), but it did try its luck in the ARM processor market with its Tegra-series processors. They weren’t a huge success, but they’re still used in some hardware, and Nvidia is focusing its efforts on embedded systems, namely automotive. In this setting, the integrated GPU pulls its own weight since it can be used for collision detection, indoor navigation, 3D mapping, and so on. Remember Google’s Project Tango? Some of the hardware was based on Tegra chips, allowing for depth sensing and a few other neat tricks. On the opposite side of the spectrum, Nvidia’s Tesla product line covers the high-end GPU compute market, and ensures Nvidia’s dominance in this niche for years to come.

Bottom line? On paper, GPU computing is a great concept with loads of potential, but the current state of technology leaves much to be desired. HSA should go a long way towards addressing most of these problems. However, it’s not supported by all industry players, which is bound to slow adoption further.

It may take a few years, but I am confident GPUs will eventually rise to take their rightful place in the general computing arena, even in mobile chips. The technology is almost ready, and economics will do the rest. How? Well, here’s a simple example. Intel’s current generation Atom processors feature 12 to 16 GPU Execution Units (EUs), while their predecessors had just four EUs, based on an older architecture. As integrated GPUs become bigger and more powerful, and as their die area increases, chipmakers will have no choice but to use them to improve overall performance and efficiency. Failing to do so would be bad for margins and shareholders.

Don’t worry, you’ll still be able to enjoy the occasional game on this new breed of GPU. However, even when you’re not gaming, the GPU will do a lot of stuff in the background, offloading the CPU to boost performance and efficiency.

I think we can all agree this would be a huge deal, especially on inexpensive mobile devices.