ARM Servers: Mobile CPU Architecture For Datacentres?

Boring. That’s a word many people use to describe the server industry, although unexciting and uneventful would be a better fit. This is not necessarily a bad thing, because when something “exciting” happens to a server, it usually involves blue smoke and downtime. Luckily, the server space is about to get a bit more exciting, thanks to the introduction of servers based on ARM processors.

In this post, Toptal Technical Editor and resident chip geek Nermin Hajdarbegovic explains why ARM processors could end up powering a server near you, and what this means for the software industry. The potential implications of ARM servers are huge, but there is no cause for alarm. This industry segment does not tend to evolve fast, and developers will have plenty of time to get ready.

I am getting old. Back in my day, if you wanted top notch CPU performance, you had to go with a high-end x86 chip, or, if you had deeper pockets, you could get something exotic, like a PowerPC system. The industry’s dependence on x86 processors appeared to be increasing, not declining.

Ten years ago, Apple joined the x86 club, and this prompted many observers to conclude the era of non-x86 processors in the mass market was over. Just a few years later, they had to eat their words, and yet again, Apple had something to do with it. ARM servers are coming, and they could revitalise the server industry.

Rethinking Processor Design

As the paradigm shifted and mainstream users embraced smartphones and tablets, it quickly became apparent that x86 chips from Intel, AMD, and VIA simply weren’t up to the task. While x86 was the most widely used instruction set on the planet, it wasn’t a good choice for mobile devices for a number of reasons. In fact, Intel’s instruction set still isn’t a popular choice for mobile processors, although this is starting to change thanks to Intel’s foundry tech lead. In any case, when it comes to this market segment, x86 is not as efficient as other CPU architectures out there, namely processors based on ARM’s 32-bit ARMv7 and 64-bit ARMv8 instruction sets.

Over the past decade, and especially over the past five years, ARM processors have come to dominate the smartphone and tablet landscape, and they had a lot going for them. They offered good performance per watt, and they were cheap to design, produce and deploy. Big vendors could buy the necessary building blocks and design their own processors based on ARMv7 or ARMv8, adding other components according to their needs (high-speed modems and different GPUs, to name a couple).

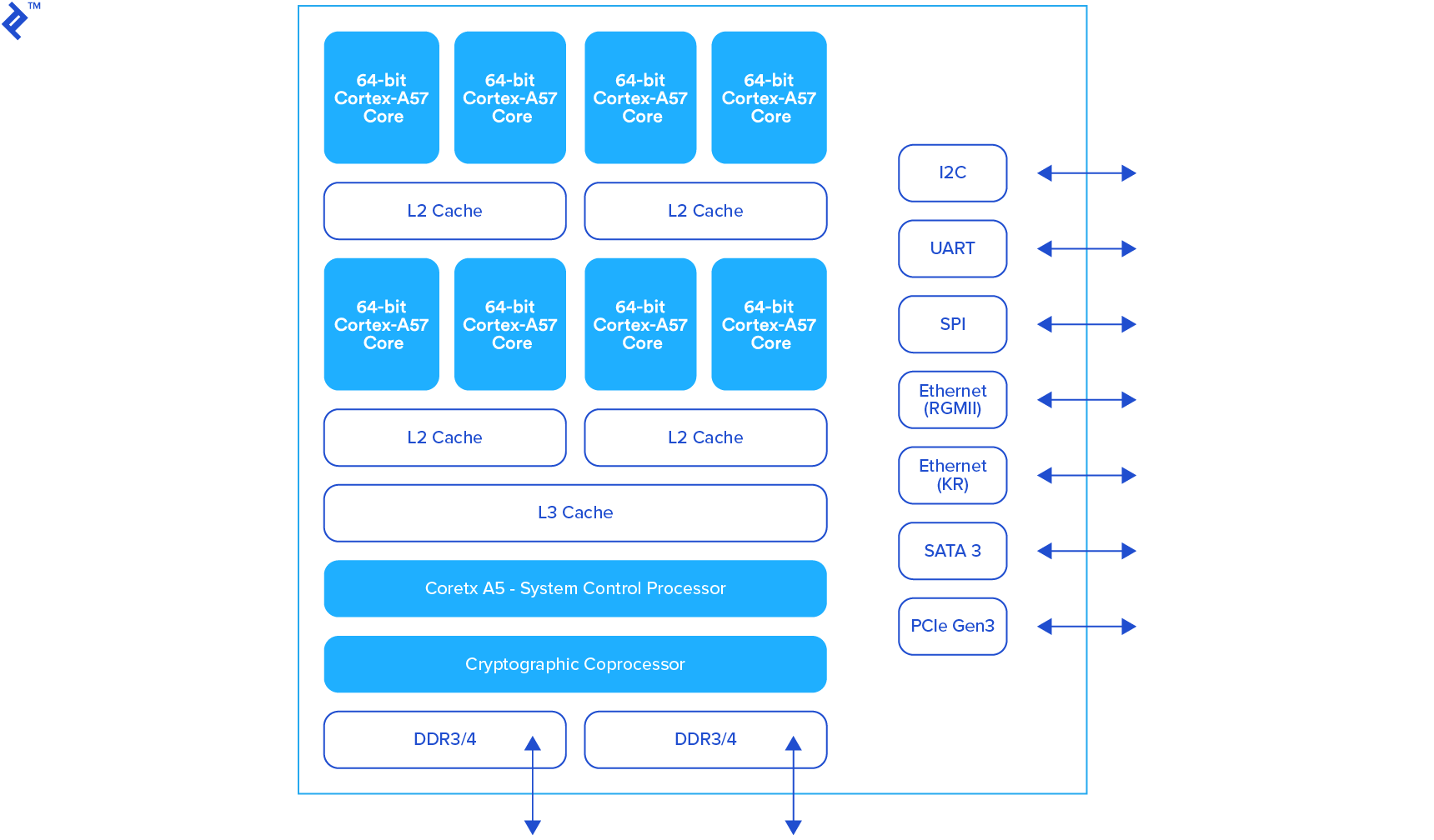

This led some chip designers to take a somewhat different approach and design their own, custom CPU cores. Qualcomm and Apple led the way. Both companies became big players in the mobile System-on-Chip (SoC) market, and their development of sophisticated custom cores played an instrumental role in their success. However, custom ARM cores were still used only in high-end processors, while all other market segments were covered by standard ARM Cortex CPU cores, like the 32-bit Cortex-A8, A9, A7, and A15, followed up by 64-bit designs like the Cortex-A53, A57, and the new A72 core, which is about to start shipping.

The other prerequisite for ARM’s success was Microsoft’s failure.

Windows only ran on x86 processors, so if Microsoft were to gain a foothold in mobile, it would tip the scales in Intel’s favour. However, by the close of the last decade, it became apparent that Redmond had dropped the ball and ceded this lucrative market to Google and Apple. Speaking of balls, long-time Microsoft CEO Steve Ballmer departed the company a couple of years ago, admitting he and his team failed to recognize the potential of smartphones and tablets. Anyway, it’s not Ballmer’s problem anymore: he has other balls on his mind right now, basketballs to be exact.

However, mobile is not the first or only market segment to witness a Microsoft failure of epic proportions. The other is the server market. On the face of it, smartphones and data centres don’t have much in common, but from a technological and business perspective, they have some overlap.

Whether you’re designing a smartphone or a server, you need to emphasise similar aspects of your hardware platform, such as power efficiency, good thermals, performance per dollar, and so on. Most importantly, you don’t really need an x86-based processor for smartphones and many types of servers. Thanks to Microsoft’s failures, these market segments aren’t dominated by any flavour of Windows. They rely on Unix-like operating systems instead: Android, iOS, and various Linux distributions.

Microsoft also attempted to leverage the potential of ARM processors, so it tried developing a version of Windows that would run on ARM hardware, which conveniently brings me to the next Microsoft fail: Windows RT. Microsoft eventually pulled the plug on Windows RT, or “Windows on ARM” as it was originally called. Microsoft’s latest Surface tablets employ x64 processors and standard Windows 10. Microsoft’s Lumia smartphone line (née Nokia Lumia) still uses ARM processors from the House of Qualcomm, but Windows Phone is all but dead as a mainstream smartphone platform.

Servers Don’t Have To Cost An ARM And A Leg

Right now, we have a couple of billion smartphones and tablets in the wild, and the vast majority are based on ARM processors. However, ARM chips have made little headway in other mainstream computing segments. There are just a handful of high-volume computing platforms based on ARM that don’t fall into the smartphone and tablet category. Google Chromebooks are probably the best-known example. That said, ARM chips are used in heaps of other devices: routers, set-top boxes and smart TVs, smartwatches, some gaming devices, automotive infotainment systems and so on.

What about ARM servers?

This is where it gets tricky. I’ve been hearing talk of ARM servers since 2010, but progress has been slow and limited. ARM’s market share in the server segment remains negligible and the ecosystem remains dominated by x86 Xeon and Opteron parts from Intel and AMD respectively. Since AMD is in a world of trouble on the CPU front, Intel has managed to extend its market share lead in recent years.

But why did ARM servers sound like a good idea to begin with?

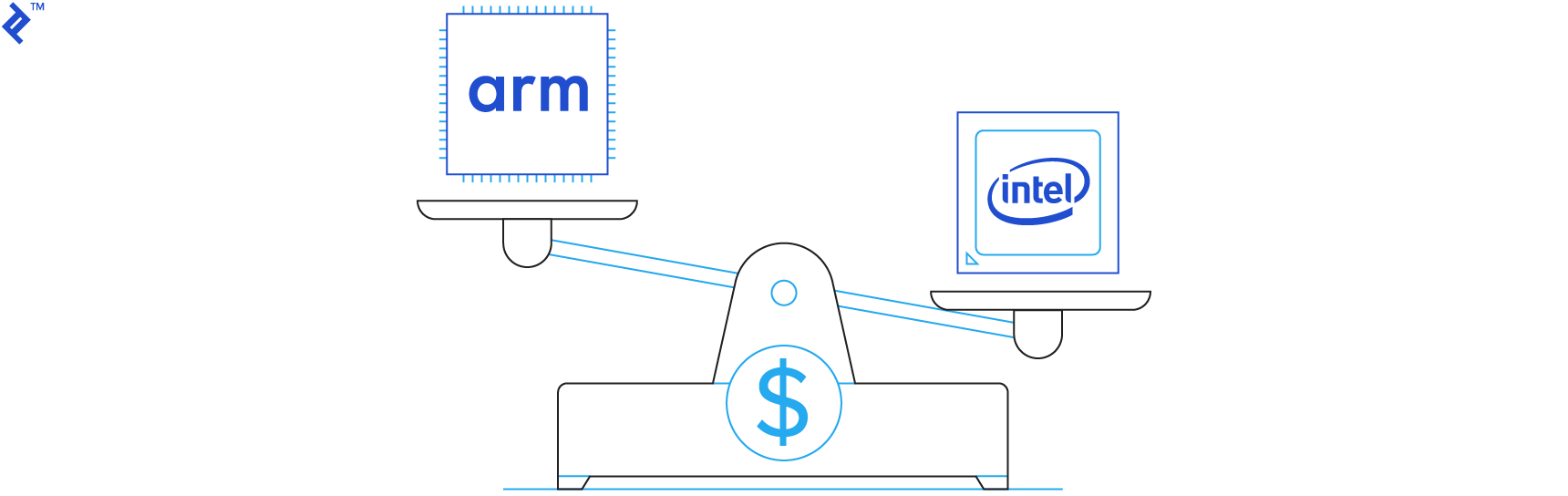

Money. I could try listing all the geeky points that make ARM a viable alternative to x86 in the server market, but at the end of the day it’s mostly about money, so I will attempt to explain it in a few lines.

- Price/Performance

- Data centre workloads are evolving and changing

- Ability to source processors from various suppliers

- Use of custom designed chips for various niches

- ARM chips are more suitable to some infrastructure applications

- It’s a good way to stick it to Intel and erode its market position (Intel is on the verge of becoming a monopoly in the server space)

We don’t need a huge and expensive Xeon processor for everything. Moreover, using obsolete x86 processors to handle undemanding workloads isn’t a good option due to their power draw. Remember, we are talking about servers, not your MacBook or desktop PC. Servers run around the clock, so every efficiency gain, including relatively small ones, tends to be important. It’s not just about getting a bigger electric bill; data centres have to be cooled and maintained, so processors with a lower Thermal Design Power (TDP) rating are much more valuable to enterprise users than individuals.
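To put rough numbers on the round-the-clock argument, here is a minimal back-of-the-envelope sketch. Every figure in it is hypothetical: the average power draw, the electricity price and the cooling overhead multiplier (often expressed as PUE, power usage effectiveness) are assumptions chosen for illustration, and TDP itself is a thermal rating rather than a measured average draw.

```python
HOURS_PER_YEAR = 24 * 365  # servers run around the clock


def annual_energy_cost(avg_watts, price_per_kwh, pue=1.0):
    """Yearly electricity cost for one server drawing avg_watts on average.

    pue scales the draw to include cooling and other facility overhead;
    1.0 means no overhead at all.
    """
    kwh = avg_watts * pue * HOURS_PER_YEAR / 1000
    return kwh * price_per_kwh


# Hypothetical figures, for illustration only (not measured values).
big_chip = annual_energy_cost(avg_watts=120, price_per_kwh=0.10, pue=1.5)
small_chip = annual_energy_cost(avg_watts=45, price_per_kwh=0.10, pue=1.5)
saving_per_server = big_chip - small_chip

print(f"Saving per server per year: ${saving_per_server:.2f}")
print(f"Across 10,000 servers: ${saving_per_server * 10_000:,.0f}")
```

A saving that looks trivial for one machine adds up quickly once it is multiplied by thousands of servers, which is why small efficiency gains matter more to data centre operators than to individuals.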

Why Use ARM Servers?

So, what sort of enterprise application are ARM processors good for?

Well, ARM expects to get the vast majority of design wins for networking infrastructure applications. Due to their flexibility, small size, efficiency, and low price, ARM processors are a great choice for infrastructure. You can use ARM processors in routers, high-performance storage solutions, and certain types of servers.

However, ARM expects the majority of enterprise growth this decade to come from servers since its other segments are already mature and it has a healthy market share in them. Server workloads are changing as well, and this trend is tied to the growth of cloud services. As a result, servers have to deal with an increasing number of smaller tasks.

Many organisations prefer to keep their options open, so they source hardware from multiple vendors. This is good news for ARM server processors because they could be marketed by a number of different companies. In addition, ARM’s licensing policies and modular approach to processor design can be utilised to design custom processors for specific applications. This is, obviously, something that’s not an option for small companies, but what might happen if big players like Amazon, Facebook or Google start asking for bespoke server processors, designed to excel at one particular application?

As for “sticking it to Intel,” I should note that I don’t mean Intel any harm, and I don’t want to see it fail or be pushed out of various market segments, but at the same time I am concerned that Intel’s domination could end up stifling growth and innovation. More competition should result in lower prices for end-users, and this is what ARM servers are all about.

Multithreading: How Many CPU Cores Is Enough?

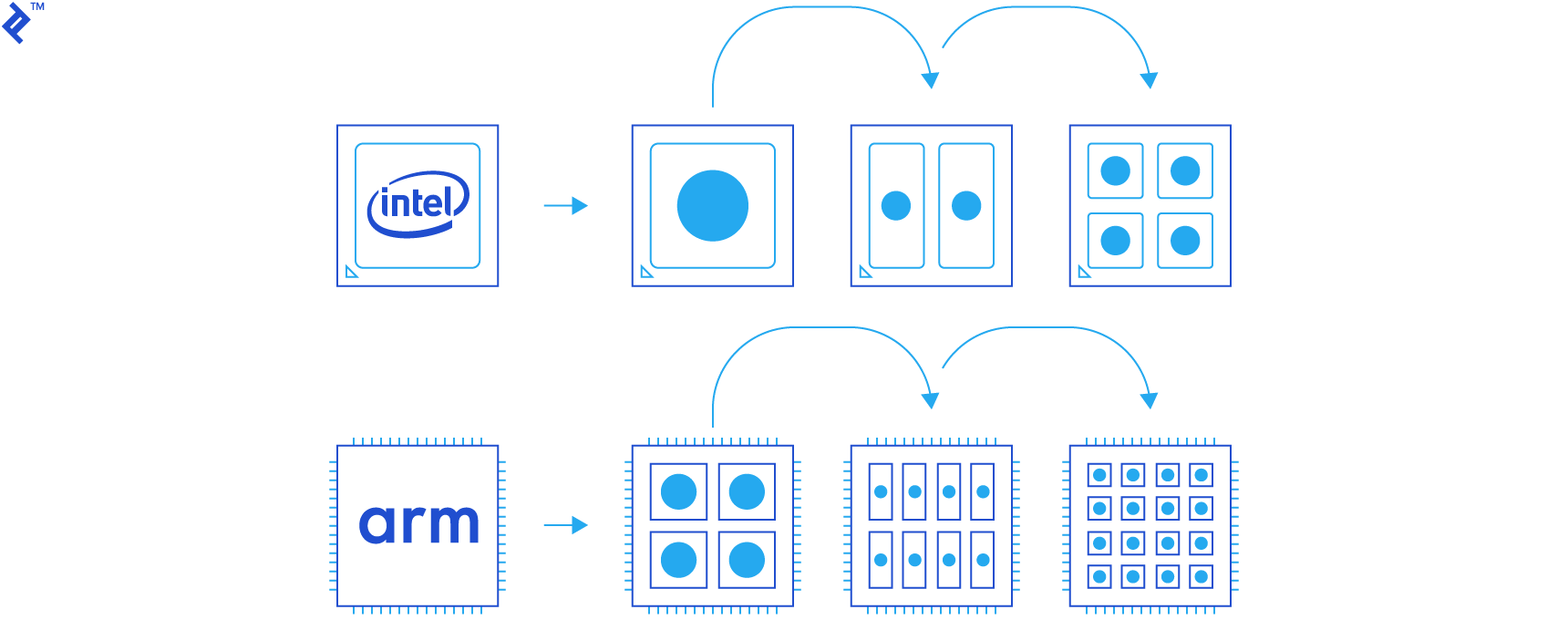

Only a decade ago, multicore x86 processors were reserved for high-performance computers and servers, but now you can get quad-core x86 chips in $100 tablets.

In the early days of multicore computing, you still needed big CPU cores to get adequate levels of performance. Lots of software was not able to take advantage of these new processors and their extra cores, so good single-thread performance was vital. Things sure have changed; nowadays, we have octa-core smartphones, quad-core Intel tablets and phones, and 16-core x86 server processors.

There is a good reason for this. Building a multi-core processor makes perfect sense from a technological and financial perspective. It’s a lot easier to distribute the load to a few smaller, more efficient CPU cores than to develop a single, huge core capable of running at high frequencies. The multicore approach ensures superior efficiency and chip yields.

ARM has the potential to take the core craze to the next level. ARM CPU cores tend to be smaller than Intel’s so called “big cores” used in server and desktop parts (Intel’s “small core” Atoms are reserved for mobile, although Atom-based server parts are available too). However, this does not mean we will see 128-core or 256-core ARM processors anytime soon, although in theory, they are possible. It depends on how the new crop of ARMv8 server processors handles multithreaded loads. There are some encouraging signs, and chances are that ARM servers will be a good choice for a range of workloads that could benefit from their multicore processors.
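How much a high core count helps depends heavily on how parallel the workload is. One common way to reason about this is Amdahl’s law, which is my choice of model here rather than anything ARM or its partners have published. The sketch below assumes a workload where a fixed fraction of the work can be spread evenly across cores, and the fractions and core counts are hypothetical.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Theoretical speedup over one core when only part of the work scales."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical core counts and workload mixes, for illustration only.
for cores in (4, 24, 128):
    for fraction in (0.50, 0.95, 0.99):
        print(f"{cores:>3} cores, {fraction:.0%} parallel: "
              f"{amdahl_speedup(fraction, cores):5.1f}x")
```

The model ignores memory bandwidth, interconnects and per-core performance differences, so it only shows the general shape of the trade-off: piling on cores pays off for highly parallel loads and does very little for mostly serial ones.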

Qualcomm’s first server processor has 24 ARMv8 CPU cores, and the chipmaker made it clear that future models will sport even more cores. Remember AMD and its server market woes? Well, the company introduced its long overdue ARM-based Opteron A1100 processor just a couple of weeks ago. Qualcomm made the announcement in October, so both these products will become available over the next few months.

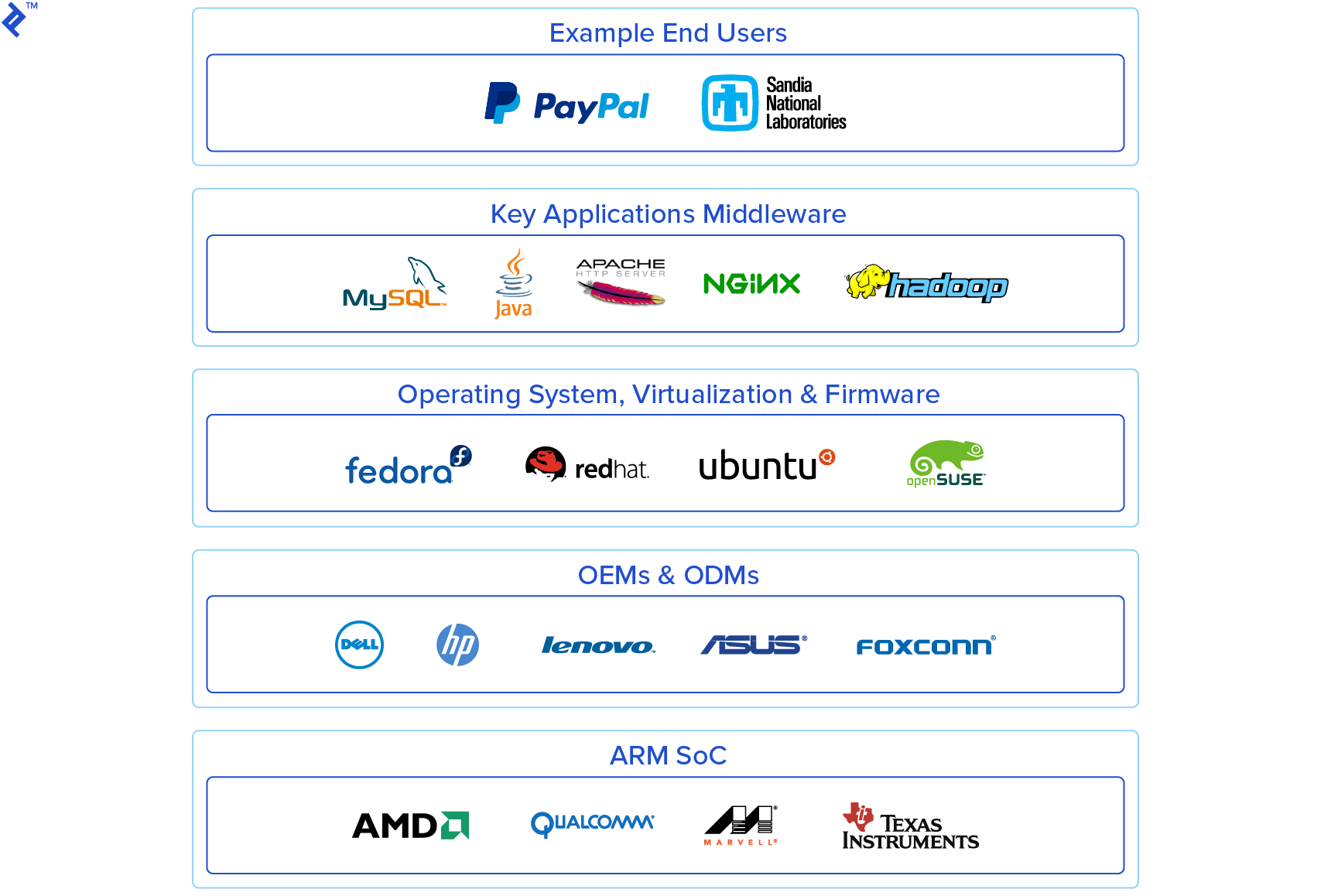

Of course, Intel will not be attending this ARM party, but Qualcomm and AMD are not the only chip outfits working on ARM-based enterprise chips. Chipmakers like Broadcom, Calxeda, Cavium Networks and Huawei HiSilicon have worked on ARM-based server products as well. Nvidia and Samsung, two heavyweights in the SoC and GPU business, also experimented with ARM server parts until a couple of years ago when they decided to halt development. Texas Instruments, Xilinx and Marvell are also exploring ARM server parts.

Some of these companies worked on custom ARM cores, too, but the only non-Apple 64-bit custom ARM core available today is Nvidia’s Denver, which only got a handful of design wins.

What Are ARM Custom Cores?

I know most people can’t be bothered keeping track of all industry niches, including the CPU space, so I think now would be a good time to explain what makes ARM cores different and what custom cores actually are. I will not dissect processors and explain the difference between x86 and ARM instruction sets, but I will outline the differences from a business perspective.

You see, ARM isn’t different just because it uses a different instruction set, although that would make for a quick and geeky explanation. In my opinion, the biggest difference between Intel, AMD and ARM is not the architecture; it’s the business model. Besides, architectures change and new CPU designs are unveiled on a regular basis, but ARM’s approach to marketing and licensing its technology hasn’t changed in years.

Here is a simple example.

An Intel processor is developed by Intel, using Intel instruction sets. It is manufactured in an Intel foundry, packaged and shipped with “Intel Inside” branding. It might sound simple, but let’s not forget the billions that went into R&D over the decades, or the fact that Intel relies on its own fabs for manufacturing (and if you are in the market for a 14nm foundry, make sure you have some spare change on you, because a chip fab costs as much as a nuclear aircraft carrier).

What about ARM products? Well, ARM is not a chipmaker; it’s a chip designer, or a “fabless” chip company, so it doesn’t deal with manufacturing and does not sell own-brand chips. ARM sells something much more interesting: intellectual property. This means ARM clients can choose any of a number of different licensing plans and start making their own designs. Most of them choose ARM’s in-house designs (Cortex series CPUs, Mali series GPUs), so they pay a licensing fee for every CPU/GPU core they produce.

However, a client does not have to license these ready-to-go CPUs; it can license the architecture instead and develop a custom core based on an ARM instruction set. This is what Apple does. It uses the ARMv8 instruction set to build big and powerful 64-bit CPU cores for its iOS devices. Nvidia’s Denver CPU is similar in this respect, and so are Qualcomm’s custom cores (32-bit Krait and 64-bit Kryo series).

Designing a custom CPU core is not easy. It’s not like you’ll find chip designers out of work and offering to design a custom processor on Craigslist, so this approach is usually reserved for big players who have the necessary technical, financial and human resources to pull it off. Therefore, most companies use off-the-shelf ARM Cortex cores instead (the 64-bit Cortex-A57 core can be employed in a server environment and it’s used by most next-generation ARM server processors).

It is important to note that virtually all ARM-based chips are custom designed, but the CPU cores used in most of them are not.

The vast majority of ARM processors rely on standard ARM CPU designs (Cortex CPUs) rather than custom CPU cores. This means chipmakers can choose any of a number of ARM CPU cores, third-party GPUs and other components, and tailor a processor to meet their needs without having to develop a custom CPU core. It’s a cheap way of making the architecture more flexible, and it has more to do with ARM’s licensing policies than engineering.

It is also important to note that these forthcoming ARM servers, based on the latest ARM 64-bit CPU architecture, don’t have much in common with the experimental ARM servers of years gone by. For example, one of our colleagues played around with Scaleway ARM servers, but they are based on ARMv7 processors and have a number of hardware limitations (for example, Scaleway used shared I/O controllers, and the lack of 64-bit support created another set of challenges). The new generation of ARM-based servers won’t suffer from these teething problems; they are much closer to Intel hardware in terms of features and standards.

ARM Server Pros And Cons

The problem with ARM servers is that they tend to be used for small niches, and they are not a good fit for small developers whose needs can be met by just about any server. While some big companies find them attractive, the ARM servers that are currently available aren’t suitable for most individual developers.

However, the forthcoming server solutions are different and should appeal to more niches. This is what could make them appeal to a much wider user-base:

- Reduced hardware costs, potentially superior efficiency (performance per dollar, performance per Watt).

- Increasing compatibility and availability of popular ports.

- Support for cutting-edge technology and new industry standards.

- Ability to excel at certain types of workloads (simple but multithreaded loads).

- Potential for more competition and product diversity than in x86 space.

I have to stress that, at this stage, some of these points are theoretical since the hardware isn’t out yet. However, while I can’t categorically claim to know what will happen over the next few quarters, I am confident the new breed of ARM servers will deliver these (and more) benefits. Why am I so confident? Well, if they didn’t have the potential to make a difference, ARM, Qualcomm, AMD, and other companies wouldn’t be wasting their time and burning money on their development.

So, what about ARM server downsides? There are quite a few, and some of them are big. Luckily, the industry is working hard to address them.

- Hit and miss software support

- Availability, potential deployment issues

- ROI concerns

- Tiny ecosystem

- Old habits die hard

Software-related issues will, probably, be the biggest immediate concern. While a lot of popular services will run on ARM servers, software support will be a problem. It’s not enough to merely port stuff to new hardware; we have to make sure everything functions properly so there are no performance hits or failures. In other words, the ported software has to be mature. Nobody will develop and deploy a service built on buggy foundations.

With all the money to be made in the server market, one would expect to see fast progress, but that isn’t always the case. Adopting new hardware and tweaking all the software that runs on it is never easy, and the pace depends on market adoption. The size of the ARM server ecosystem is (very) limited, and I doubt a couple of new processors would make much of a difference in the short run. While influential companies like ARM and Qualcomm have a vested interest in seeing a pickup in demand for ARM servers, there is not much they can do about software. They have next to no influence over software developers, so they can’t force them to add ARM support to existing products.

Long story short: Take a good look at your stack and try to figure out whether everything will run properly on ARM hardware. Given enough time, developers will start adding support for ARM hardware, but this won’t be a fast process. They will have to tweak frameworks and applications to take into account a new architecture, and I suspect, many of them won’t bother until there are enough ARM servers out there (which may take years). Support for legacy software is another obvious problem.
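A very first step in auditing a stack is simply knowing which architecture each piece of it is running on. The following is a minimal sketch using Python’s standard `platform` module; the `cpu_family` helper and its name mapping are my own illustrative assumptions, not an exhaustive list, and passing this check says nothing about whether your dependencies actually behave correctly on ARM.

```python
import platform

# Names commonly reported by platform.machine() for each family.
# This mapping is illustrative, not exhaustive.
ARM_64 = {"aarch64", "arm64"}
X86_64 = {"x86_64", "amd64"}


def cpu_family(machine=None):
    """Classify a machine string; defaults to the host's own."""
    name = (machine or platform.machine()).lower()
    if name in ARM_64:
        return "arm64"
    if name in X86_64:
        return "x86_64"
    if name.startswith("arm"):
        return "arm32"
    return "other"


print(f"Running on {platform.machine()!r}, classified as {cpu_family()}")
```

A check like this could gate architecture-specific code paths or flag hosts in an inventory script. The real work is still running your test suite and benchmarks on the target hardware.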

This brings us to the next point: Market availability and potential deployment issues. There aren’t that many ARM servers out there, so choice is limited, and so is availability. A year or two down the road, we could see a number of ARM-based hosting packages on offer, but we won’t see too many. Worse, there’s a good chance these servers will be concentrated in certain parts of the world, making them less attractive to some developers. There are a lot of unknowns related to deployment, so it’s still too early to say how things will pan out.

Slow adoption could create another set of challenges. These aren’t restricted to ARM servers; they apply to most enterprise technology. A lot of organisations are bound to explore the possibility of using ARM servers, but that doesn’t necessarily mean they will actually use them. In order to ensure enough development and consumer demand, market adoption needs to grow steadily. Otherwise, risk-averse people will probably stay away, taking the wait-and-see approach. The other potential problem is economic: If developers aren’t sure the ecosystem is growing fast enough, they might conclude the potential return is simply not worth the effort.

What about these old habits? Well, since server space doesn’t evolve fast, people tend to stick to proven platforms, namely x86 hardware. The motto is simple: If it ain’t broke, don’t fix it. Industry veterans might see ARM servers as an opportunity and take a gamble on them. It would take a fair amount of courage and confidence to tie part of a complex project to what many people still perceive as an untested or immature hardware platform. I fear many people won’t be willing to take the plunge, at least not this early on.

Bright Future And A Pinch Of Hype

I’ve spent the better part of my adult life covering cutting-edge silicon, and my personal take on ARM servers is that they have a lot of potential, but they’re not for everyone. They could play a vital role in the Internet of tomorrow by providing cheap building blocks for infrastructure and handling niche server workloads.

However, at the same time, I cannot escape the feeling that ARM servers tend to be overhyped. Despite this, I don’t see them as a fad. I think they are here to stay, but vendors must carve out a few specific niches that can truly benefit from the new architecture.

In other words, we won’t see a lot of simple LAMP web hosting servers based on ARM, but we could see loads of them in more exotic niches (and some horribly boring ones). ARM processors could be a perfect fit for specific loads, especially those that can take advantage of a large number of small physical CPU cores, stuff that’s not CPU-bound. It might not sound like much, but this actually covers a lot of potential uses: data logging, large volumes of simple queries, certain types of databases, various storage services and so on.

I could go on, listing various use-cases, pros and cons of ARM servers, and potential problems, but at the end of the day, I suspect ARM server adoption will depend on good old cash. Technology aside, ARM servers will only make sense if the economic component checks out. In other words, they will have to offer a lot more bang for buck than x86 processors if they are to justify their existence.

Since this is more or less the whole point of introducing this new architecture to the server industry, I expect attractive pricing, but it will be a few months before we know for sure.