HTTP Request Testing: A Developer's Survival Tool

It’s tragically common for developers to come into a project where proper automated testing has been and will continue to be overlooked. It’s a situation Freelance Developer Bhushan Lodha has found himself in all too often; fortunately, he’s found a solution. In this article, he briefly covers the reasons why testing is overlooked and ultimately explains his “coding life hack” to ensure quality control even when he can’t introduce a testing framework.

Bhushan is a Hacker School alum and a developer proficient in Ruby, Rails, and Backbone.js. He has a knack for design and UX.

What To Do When A Testing Suite Isn’t Feasible

There are times when we, programmers and/or our clients, have limited resources with which to write both the expected deliverable and the automated tests for that deliverable. When the application is small enough, you can cut corners and skip tests because you remember (mostly) what happens elsewhere in the code when you add a feature, fix a bug, or refactor. That said, we won’t always work with small applications, and small ones tend to get bigger and more complex over time. This makes manual testing difficult and super annoying.

For my last few projects, I was forced to work without automated testing and honestly, it was embarrassing to have the client email me after a code push to say that the application was breaking in places where I hadn’t even touched the code.

So, in cases where my client either had no budget for or no intention of adding any automated test framework, I started testing the whole website’s basic functionality by sending an HTTP request to each individual page, parsing the response headers and looking for the ‘200’ response. It sounds plain and simple, but there is a lot you can do to ensure fidelity without actually having to write any unit, functional, or integration tests.

Automated Testing

In web development, automated tests comprise three major test types: unit tests, functional tests and integration tests. We often combine unit tests with functional and integration tests to make sure everything runs smoothly as a whole application. When these tests are run in unison, or sequentially (preferably with a single command or click), we start calling them automated tests, unit or not.

Largely the purpose of these tests (at least in web dev) is to make sure all application pages are rendered without trouble, free from fatal (application halting) errors or bugs.

Unit Testing

Unit testing is a software development process in which the smallest parts of code – units – are independently tested for correct operation. Here’s an example in Ruby:

    test "should return active users" do
      active_user = create(:user, active: true)
      non_active_user = create(:user, active: false)
      result = User.active
      # expected value first, then the actual result
      assert_equal [active_user], result.to_a
    end

Functional Testing

Functional testing is a technique used to check the features and functionality of the system or software, designed to cover all user interaction scenarios, including failure paths and boundary cases.

Note: all our examples are in Ruby.

    test "should get index" do
      get :index
      assert_response :success
      assert_not_nil assigns(:object)
    end

Integration Testing

Once the modules are unit tested, they are integrated one by one, sequentially, to check the combinational behavior, and to validate that the requirements are implemented correctly.

    test "login and browse site" do
      # login via https
      https!
      get "/login"
      assert_response :success

      post_via_redirect "/login", username: users(:david).username, password: users(:david).password
      assert_equal '/welcome', path
      assert_equal 'Welcome david!', flash[:notice]

      https!(false)
      get "/articles/all"
      assert_response :success
      assert assigns(:articles)
    end

Tests in an Ideal World

Testing is widely accepted in the industry and it makes sense; good tests let you:

- Quality assure your whole application with the least human effort

- Identify bugs more easily because you know exactly where your code is breaking from test failures

- Create automatic documentation for your code

- Avoid ‘coding constipation’, which, according to some dude on Stack Overflow, is a humorous way of saying, “when you don’t know what to write next, or you have a daunting task in front of you, start by writing small.”

I could go on and on about how awesome tests are, and how they changed the world and yada yada yada, but you get the point. Conceptually, tests are awesome.

Tests in the Real World

While there are merits to all three types of testing, they don’t get written in most projects. Why? Well, let me break it down:

Time/Deadlines

Everyone has deadlines, and writing fresh tests can get in the way of meeting one. It can take time and a half (or more) to write an application and its respective tests. Some of you will disagree, citing the time saved in the long run, but I don’t think that holds, and I’ll explain why in ‘Difference in Opinion’.

Client Issues

Often, the client doesn’t really understand what testing is, or why it has value for the application. Clients tend to be more concerned with rapid product delivery and therefore see programmatic testing as counterproductive.

Or, it may be as simple as the client not having the budget to pay for the extra time needed to implement these tests.

Lack of Knowledge

There is a sizeable tribe of developers in the real world that doesn’t know testing exists. At every conference, meetup, concert (even in my dreams), I meet developers who don’t know how to write tests, don’t know what to test, don’t know how to set up the framework for testing, and so on. Testing isn’t exactly taught in schools, and it can be a hassle to set up and learn the framework to get tests running. So yes, there’s a definite barrier to entry.

‘It’s a Lot of Work’

Writing tests can be overwhelming for both new and experienced programmers, even for those world-changer genius types, and to top it off, writing tests isn’t exciting. One may think, “Why should I engage in unexciting busywork when I could be implementing a major feature with results that will impress my client?” It’s a tough argument.

Last, but not least, it is hard to write tests and computer-science students are not trained for it.

Oh, and refactoring with unit tests is no fun.

Difference in Opinion

In my opinion, unit testing makes sense for algorithmic logic but not so much for coordinating living code.

People claim that even though you’re investing extra time up front in writing tests, it saves you hours later when debugging or changing code. I beg to differ and offer one question: Is your code static, or ever changing?

For most of us, it’s ever changing. If you are writing successful software, you’re always adding features, changing existing ones, removing them, eating them, whatever, and to accommodate these changes, you must keep changing your tests, and changing your tests takes time.

But, You Need Some Kind Of Testing

No one will dispute that having no testing at all is the worst possible case. After making changes in your code, you need to confirm that it actually works. A lot of programmers try to manually test the basics: Is the page rendering in the browser? Is the form being submitted? Is the correct content being displayed? And so on. In my opinion, this is barbaric, inefficient and labour intensive.

What I Use Instead

The purpose of testing a web app, be it manually or automated, is to confirm that any given page is rendered in the user’s browser without any fatal errors, and that it shows its content correctly. One way (and in most cases, an easier way) to achieve this is by sending HTTP requests to the endpoints of the app and parsing the responses. The response code tells you whether the page was delivered successfully. It’s easy to test for content by searching the response body for specific text strings, or you can go one step fancier and use an HTML parsing library such as nokogiri.
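As a rough sketch of that idea using only Ruby’s standard library (Net::HTTP instead of curl), the helpers below fetch a page, then report its status code and any expected strings missing from the body. The helper names fetch_page, missing_content and check_page are assumptions made for illustration; they are not part of Rails or any testing library.

```ruby
require 'net/http'
require 'uri'

# Fetch a URL and return the numeric status code plus the response body.
def fetch_page(url)
  response = Net::HTTP.get_response(URI(url))
  [response.code.to_i, response.body.to_s]
end

# Return the expected strings that do NOT appear in the body.
def missing_content(body, expected)
  expected.reject { |text| body.include?(text) }
end

# Summarise one page check: a non-200 status or any missing text is a failure.
def check_page(status, body, expected = [])
  missing = missing_content(body, expected)
  { ok: status == 200 && missing.empty?, status: status, missing: missing }
end
```

With a development server running locally, you might call fetch_page on something like http://localhost:3000/articles (a hypothetical URL) and pass the returned status and body to check_page along with the strings you expect to see.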

If some endpoints require a user login, you can use libraries designed for automating interactions (ideal when doing integration tests), such as mechanize, to log in or click on certain links. Really, in the big picture of automated testing, this looks a lot like integration or functional testing (depending on how you use it), but it’s a lot quicker to write and can be included in an existing project, or added to a new one, with less effort than setting up a whole testing framework. Spot on!
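For very simple logins, a standard-library sketch can stand in for mechanize. It assumes the app accepts a plain form POST and tracks the session with cookies; the /login path and the username and password field names are hypothetical. Apps with CSRF protection (which Rails apps typically have enabled) also expect the form’s token to be submitted, which is one reason a form-aware library such as mechanize is the more robust choice.

```ruby
require 'net/http'
require 'uri'

# Turn the Set-Cookie values from a login response into one Cookie header
# value ("name=value; name2=value2"), dropping attributes such as Path.
def cookie_header(set_cookie_values)
  Array(set_cookie_values).map { |cookie| cookie.split(';').first.strip }.join('; ')
end

# POST the login form and return the Cookie header to reuse afterwards.
# The path and field names are hypothetical; match them to your app.
def login(base_url, username, password)
  uri = URI.join(base_url, '/login')
  response = Net::HTTP.post_form(uri, 'username' => username, 'password' => password)
  cookie_header(response.get_fields('set-cookie'))
end

# GET a protected page with the session cookie and return its status code.
def status_with_session(url, cookie)
  uri = URI(url)
  request = Net::HTTP::Get.new(uri)
  request['Cookie'] = cookie
  response = Net::HTTP.start(uri.hostname, uri.port, use_ssl: uri.scheme == 'https') do |http|
    http.request(request)
  end
  response.code.to_i
end
```

The cookie string returned by login can then be passed to status_with_session for each protected endpoint you want to check.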

Edge cases present another problem when dealing with large databases with a wide range of values; testing whether our application is working smoothly across all anticipated datasets can be daunting.

One way to go about it is to anticipate all the edge cases (which is not merely difficult, it’s often impossible) and write a test for each one. This could easily grow to hundreds of lines of code (imagine the horror) and become cumbersome to maintain. Yet, with HTTP requests and just one line of code, you can test such edge cases directly on the data from production, downloaded locally on your development machine or on a staging server.

Now of course, this testing technique is not a silver bullet and has lots of shortcomings, the same as any other method, but I find these types of tests faster, and easier, to write and modify.

In Practice: Testing with HTTP requests

Since we’ve already established that writing code without any kind of accompanying tests isn’t a good idea, my very basic go-to test for an entire application is to send HTTP requests to all its pages locally and parse the response headers for a 200 (or desired) code.

For example, if we were to write the above tests (the ones looking for specific content and a fatal error) with an HTTP request instead (in Ruby), it would be something like this:

    # Both snippets are assumed to run inside a test method, hence the early returns.
    # route describes the endpoint under test, e.g. { method: "GET" }.

    # testing for fatal error
    url = Rails.application.routes.url_helpers.articles_url(host: 'localhost', port: 3000)
    http_code = `curl -X #{route[:method]} -s -o /dev/null -w "%{http_code}" #{url}`
    if http_code !~ /200/
      return "articles_url returned with #{http_code} http code."
    end

    # testing for content
    active_user = create(:user, name: "user1", active: true)
    non_active_user = create(:user, name: "user2", active: false)
    url = Rails.application.routes.url_helpers.active_user_url(host: 'localhost', port: 3000)
    content = `curl #{url}`
    if content !~ /#{active_user.name}/
      return "Content mismatch: active user #{active_user.name} not found in text body" # You can customise the message to your liking
    end
    if content =~ /#{non_active_user.name}/
      return "Content mismatch: non-active user #{non_active_user.name} found in text body" # You can customise the message to your liking
    end

The curl call in the first part (with -s -o /dev/null -w "%{http_code}") covers a lot of test cases; any method raising an error on the articles page will be caught here, so it effectively covers hundreds of lines of code in one test.

The second part, which catches the content error specifically, can be used multiple times to check the content on a page. (More complex requests can be handled using mechanize, but that’s beyond the scope of this blog.)

Now, in cases where you want to test if a specific page works on a large, varied set of database values (for example, your article page template is working for all the articles in the production database), you could do:

    # Collect the IDs of every article whose page does not return 200.
    ids = Article.all.select do |post|
      url = Rails.application.routes.url_helpers.article_url(post, host: 'localhost', port: 3000)
      `curl -s -o /dev/null -w "%{http_code}" #{url}`.to_i != 200
    end.map(&:id)
    return ids

This will return an array of IDs of all the articles in the database that were not rendered, so now you can manually go to the specific article page and check out the problem.
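The same sweep can be sketched without shelling out to curl. The helper below is an assumption for illustration (failing_ids is not an existing API): it takes a list of IDs and a block that maps each ID to a URL, and the status lookup is injectable so the logic can be exercised without a running server.

```ruby
require 'net/http'
require 'uri'

# Return the IDs whose page did not respond with 200.
# The block maps an ID to its URL. status_of defaults to a real GET request
# but can be replaced with a stub that returns canned status codes.
def failing_ids(ids, status_of: ->(url) { Net::HTTP.get_response(URI(url)).code.to_i })
  ids.reject { |id| status_of.call(yield(id)) == 200 }
end
```

Against a local server, this could be called with the article IDs from the database and a block that builds a URL such as http://localhost:3000/articles/ followed by the ID (a hypothetical route).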

Now, I understand that this way of testing might not work in certain cases, such as testing a standalone script or sending an email, and it is undeniably slower than unit tests because we are making direct calls to an endpoint for each test, but when you can’t have unit tests, or functional tests, or both, this is better than nothing.

How would you go about structuring these tests? With small, non-complex projects, you can write all your tests in one file and run that file each time before you commit your changes, but most projects will require a suite of tests.

I usually write two to three tests per endpoint, depending on what I’m testing. You can also try testing individual content (similar to unit testing), but I think that would be redundant and slow since you will be making an HTTP call for every unit. But, on the other hand, they will be cleaner and easy to understand.

I recommend putting your tests in your regular test folder, with each major endpoint having its own file (in Rails, for example, each model/controller would have one file), and dividing each file into parts according to what is being tested. I often have at least three tests:

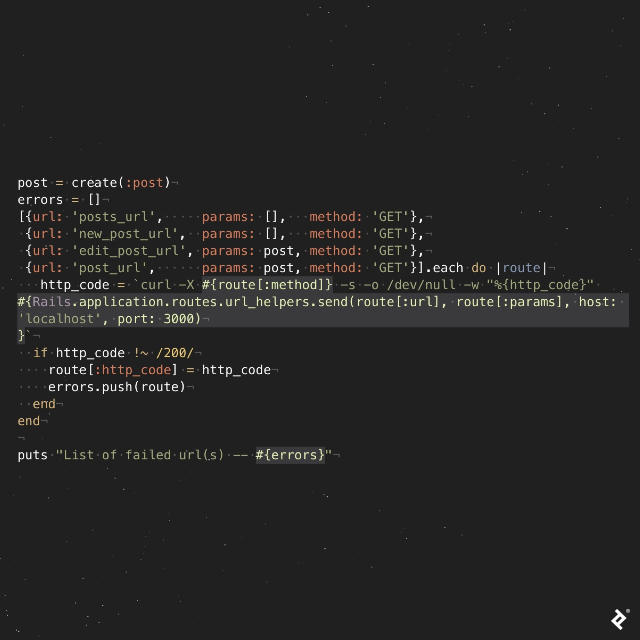

Test One

Check that the page returns without any fatal errors.

Note how I made a list of all the endpoints for Post and iterated over it to check that each page is rendered without any error. Assuming everything went well, and all the pages were rendered, you will see something like this in the terminal:

➜ sample_app git:(master) ✗ ruby test/http_request/post_test.rb

List of failed url(s) -- []

If any page is not rendered, you will see something like this (in this example, the posts/index page has an error and hence is not rendered):

➜ sample_app git:(master) ✗ ruby test/http_request/post_test.rb

List of failed url(s) -- [{:url=>"posts_url", :params=>[], :method=>"GET", :http_code=>"500"}]
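A minimal sketch of how such a report could be produced is shown here. It is not the code from the screenshot: the failed_routes helper is hypothetical, and each route is assumed to carry a full URL in :url (rather than a Rails route helper name) so the example stays framework-free.

```ruby
require 'net/http'
require 'uri'

# Request every route and collect the ones that do not return "200".
# Each route is a hash with :url (a full URL in this sketch) and :method.
# requester can be replaced with a stub taking (method, url).
def failed_routes(routes, requester: nil)
  requester ||= lambda do |method, url|
    uri = URI(url)
    Net::HTTP.start(uri.hostname, uri.port, use_ssl: uri.scheme == 'https') do |http|
      http.send_request(method, uri.request_uri).code
    end
  end

  routes.each_with_object([]) do |route, failed|
    http_code = requester.call(route[:method], route[:url])
    failed << route.merge(http_code: http_code) unless http_code == '200'
  end
end
```

Printing the returned array after a "List of failed url(s) --" prefix gives a report in the same spirit as the one above; an empty array means every endpoint responded with 200.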

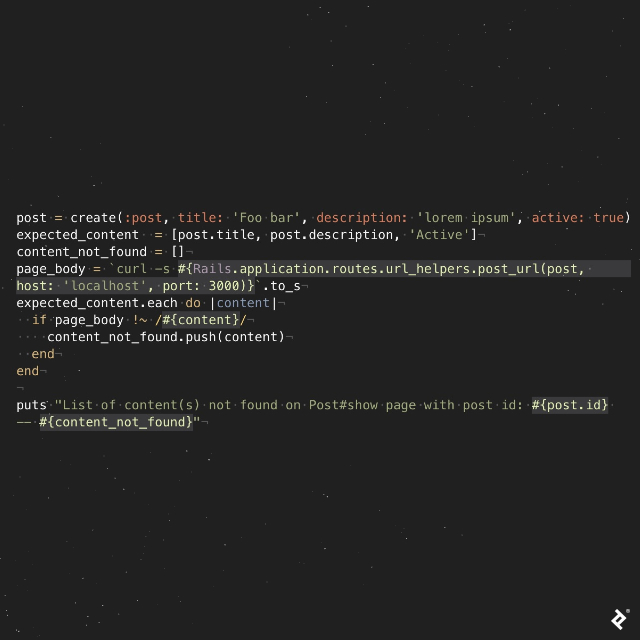

Test Two

Confirm that all the expected content is there:

If all the content we expect is found on the page, the result looks like this (in this example we make sure posts/:id has a post title, description and a status):

➜ sample_app git:(master) ✗ ruby test/http_request/post_test.rb

List of content(s) not found on Post#show page with post id: 1 -- []

If any expected content is not found on the page (here we expect the page to show the status of the post: ‘Active’ if the post is active, ‘Disabled’ if it is disabled), the result looks like this:

➜ sample_app git:(master) ✗ ruby test/http_request/post_test.rb

List of content(s) not found on Post#show page with post id: 1 -- ["Active"]

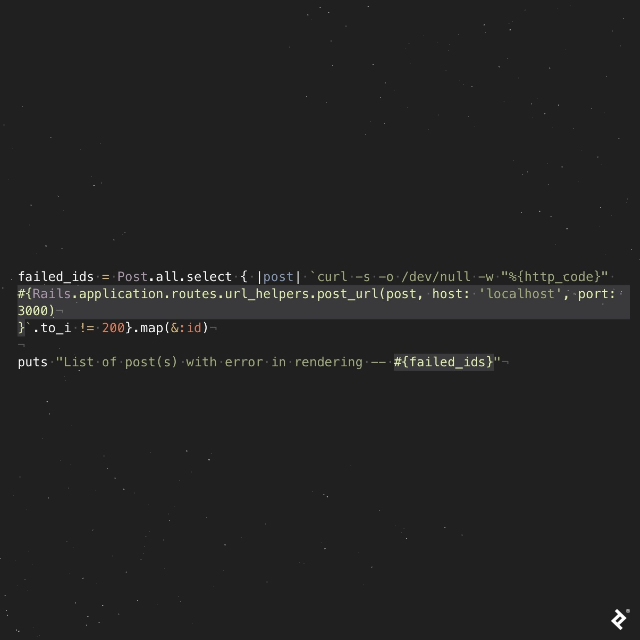

Test Three

Check that the page renders across all datasets (if any):

If all the pages are rendered without any error, we will get an empty list:

➜ sample_app git:(master) ✗ ruby test/http_request/post_test.rb

List of post(s) with error in rendering -- []

If some of the records have a problem rendering (in this example, the pages with IDs 2 and 5 are giving an error), the result looks like this:

➜ sample_app git:(master) ✗ ruby test/http_request/post_test.rb

List of post(s) with error in rendering -- [2, 5]

If you want to fiddle around with the above demonstration code, here’s my GitHub project.

So Which Is Better? It Depends…

HTTP Request testing might be your best bet if:

- You’re working with a web app

- You’re in a time crunch and want to write something fast

- You’re working with a big, pre-existing project where tests were not written, but you still want some way to check the code

- Your code involves simple request and response

- You don’t want to spend a large portion of your time maintaining tests (I read somewhere that unit tests = maintenance hell, and I partially agree)

- You want to test if an application works across all the values in an existing database

Traditional testing is ideal when:

- You’re dealing with something other than a web application, such as scripts

- You’re writing complex, algorithmic code

- You have time and budget to dedicate to writing tests

- The business requires bug-free code or a low error rate (finance, large user base)

Thanks for reading the article; you should now have a method for testing you can default to, one you can count on when you’re pressed for time.
