A Look at JavaScript’s Future

In the past few years, we’ve seen the introduction of a lot of new technologies in JavaScript, but we needed time to see how the market was going to adopt them.

In this article, Toptal Freelance JavaScript Developer Alejandro Hernandez takes a look at how popular JavaScript is becoming and the factors that may have affected this popularity, and he tries to predict what the future of JavaScript will look like.

Alejandro is a full-stack architect working on JavaScript projects, where his experience and understanding of architecture is most impactful.

Every market is ruled by certain common concepts, and JavaScript development is no exception.

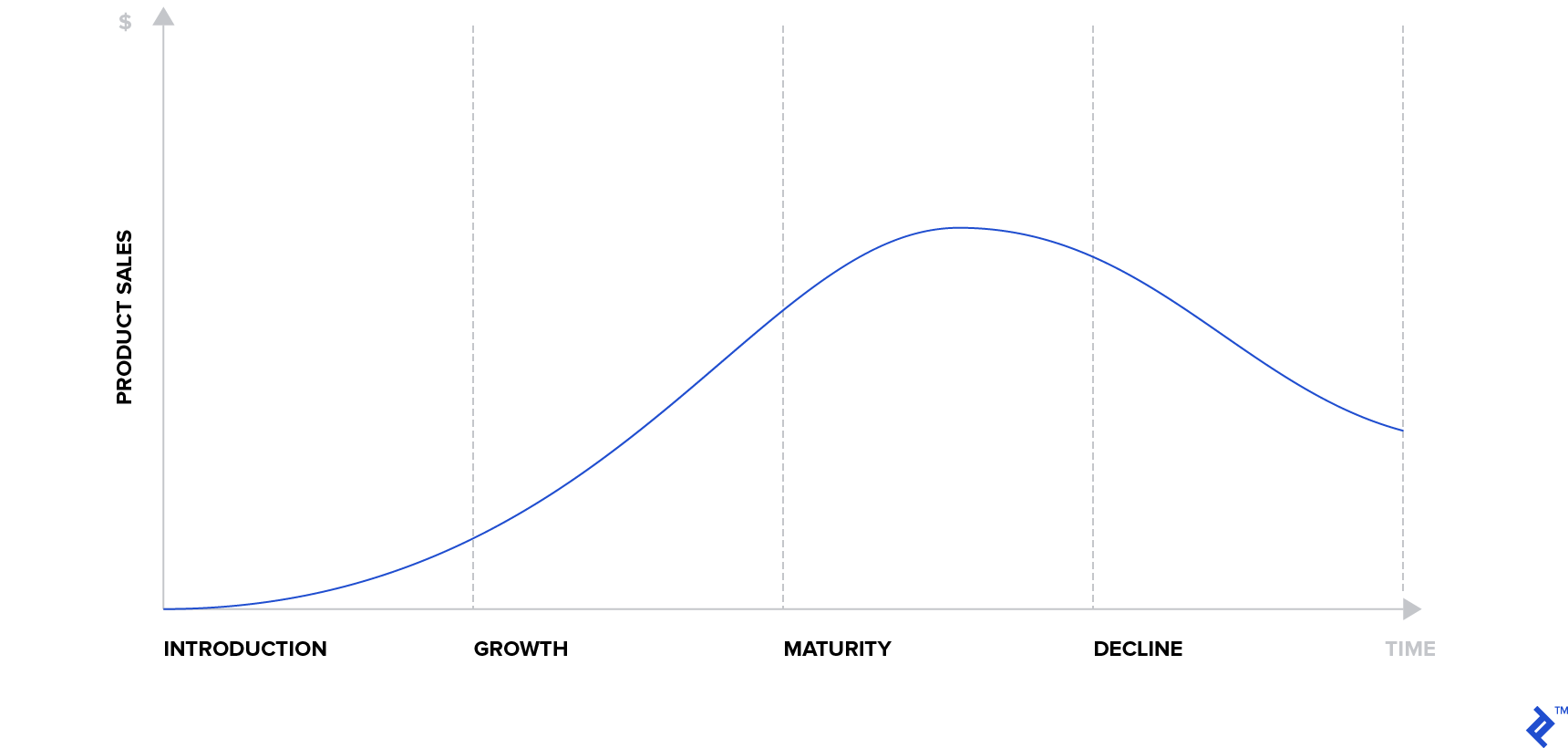

The product lifecycle is a concept that you can apply to several different environments to understand and predict their behavior. It is a business concept that describes the stages a product goes through during its life and explains how each stage affects its popularity, which in most cases is measured in sales. If we observe market behavior patterns, we can estimate a product’s current stage and therefore make some predictions about its popularity.

There are four stages: introduction, growth, maturity, and decline. The chart above shows the expected impact of each stage on product sales. For example, smartphone sales aren’t growing as they were five years ago (in fact, quite the opposite is true), so we can fairly say that smartphones are entering their maturity stage.

In the past few years, we’ve seen the introduction of a lot of new technologies in JavaScript, but we needed time to see how the market was going to adopt them. Nobody wants to become the specialist in yet another promising technology that ends up with zero adoption. Now, however, is the time to take another look. In this article, I will examine how popular JavaScript is becoming and the factors that may have affected this popularity, and I will try to predict what the future of JavaScript will look like.

The Future of JavaScript Language Features

Since Ecma International (originally the European Computer Manufacturers Association) established the yearly release cycle for ECMAScript, the standardized JavaScript specification, we haven’t seen many new features coming to the language, just a few each year. This could be one of the reasons for the increase in adoption of languages that compile to ES5, such as TypeScript or ReasonML, both of which bring features that the community has been asking for. This is not new: JavaScript went through the same process before with CoffeeScript, and in the end, those features were merged into the language standard itself. That is probably the future we can expect for these new typed features, too.
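To make the CoffeeScript comparison concrete, here is a short sketch of ES2015 features that closely mirror constructs CoffeeScript already offered: classes, default parameters, string interpolation, arrow functions, and destructuring. It runs natively in any modern engine without a compiler; the `Greeter` class and the names are purely illustrative.

```javascript
// ES2015 features that resemble constructs CoffeeScript offered years earlier.
class Greeter {
  // Default parameter (CoffeeScript: constructor: (@name = "world") ->)
  constructor(name = "world") {
    this.name = name;
  }

  greet() {
    // Template literal (CoffeeScript: "Hello, #{@name}!")
    return `Hello, ${this.name}!`;
  }
}

const names = ["Ada", "Linus"];

// Arrow function with lexical `this` (CoffeeScript's fat arrow: (n) => ...)
const greetings = names.map((n) => new Greeter(n).greet());

// Destructuring with a rest element (CoffeeScript: [first, rest...] = greetings)
const [first, ...rest] = greetings;

console.log(first); // Hello, Ada!
console.log(rest); // [ 'Hello, Linus!' ]
console.log(new Greeter().greet()); // Hello, world!
```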

But now we are starting to see a game-changing move in the compile-to-JS market with the increasing availability of WebAssembly in browsers. We can now compile almost any language to run at near-native speed in a browser. More importantly, we are starting to see support for future-proof features such as threads, which will let us take advantage of the multi-processor architectures that represent the inevitable future of all devices.
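To show what running a compiled module looks like from the JavaScript side, here is a minimal sketch that loads a WebAssembly module exporting an `add` function. In practice, the bytes would be produced by a compiler (for C/C++, Rust, and so on) and fetched over the network; here the module is hand-encoded in the WebAssembly binary format so the example is self-contained and also runs in Node.js.

```javascript
// Hand-encoded WebAssembly module equivalent to this text format:
//   (module (func (export "add") (param i32 i32) (result i32)
//     local.get 0 local.get 1 i32.add))
const bytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, // magic number "\0asm"
  0x01, 0x00, 0x00, 0x00, // binary format version 1
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f, // type section: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00, // function section: one function of type 0
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00, // export section: "add" -> func 0
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // code section
]);

// Synchronous compilation is fine for a module this small; for real modules,
// the asynchronous WebAssembly.instantiate() is preferred.
const wasmModule = new WebAssembly.Module(bytes);
const instance = new WebAssembly.Instance(wasmModule, {});
const { add } = instance.exports;

console.log(add(2, 3)); // 5
console.log(add(2147483647, 1)); // -2147483648 (i32 arithmetic wraps around)
```

The exported function is called like any JavaScript function, which is what lets compiled code from other languages plug into an existing web app.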

The official WebAssembly toolchain compiles C/C++, but there are many community-provided compilers for other languages, such as Rust, Python, and Java, as well as C# through Blazor.

The Rust community is particularly active, and complete front-end frameworks such as Yew and Dodrio have started to appear.

This brings a lot of new possibilities to browser-based apps. You only need to try some of the great apps built with WebAssembly, such as SketchUp or Magnum, to see that near-native browser-based apps are now a reality.

Adoption of typed languages that compile to ES5 is mature: the players are well established, and they won’t disappear (or be merged into ES) in the near future. Still, we’ll see a slow shift in favor of typed languages that compile to WebAssembly.

Web

Front-end Frameworks

Every year, we see a big fight in the web front-end frameworks market, and React has been the indisputable winner for the past few years. Since React introduced its game-changing technology, the virtual DOM, we have seen its counterparts adopt it almost out of obligation in order to remain relevant in the battle.
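The core idea behind a virtual DOM can be shown with a small, framework-agnostic sketch: the UI is described as plain objects, and diffing the previous description against the next one yields the list of changes a renderer would apply to the real DOM. This is a deliberately simplified illustration (children matched by index, no keys, no real DOM), not React’s actual reconciliation algorithm; `h` and `diff` are hypothetical names.

```javascript
// Hypothetical helper: describe an element as a plain object (a "virtual node").
function h(type, props, ...children) {
  return { type, props: props || {}, children };
}

// Hypothetical helper: compare two virtual trees and collect the patch
// operations a renderer would then apply to the real DOM.
function diff(oldNode, newNode, path = "root", patches = []) {
  if (oldNode === undefined) {
    patches.push({ op: "create", path, node: newNode });
  } else if (newNode === undefined) {
    patches.push({ op: "remove", path });
  } else if (typeof oldNode === "string" || typeof newNode === "string") {
    // Text nodes: replace only when the text actually changed.
    if (oldNode !== newNode) patches.push({ op: "replace", path, node: newNode });
  } else if (oldNode.type !== newNode.type) {
    // Different element types: replace the whole subtree.
    patches.push({ op: "replace", path, node: newNode });
  } else {
    // Same element type: report changed props (a removed prop gets value undefined),
    // then diff the children position by position.
    const keys = new Set([...Object.keys(oldNode.props), ...Object.keys(newNode.props)]);
    for (const key of keys) {
      if (oldNode.props[key] !== newNode.props[key]) {
        patches.push({ op: "setProp", path, key, value: newNode.props[key] });
      }
    }
    const length = Math.max(oldNode.children.length, newNode.children.length);
    for (let i = 0; i < length; i++) {
      diff(oldNode.children[i], newNode.children[i], `${path}/${i}`, patches);
    }
  }
  return patches;
}

const before = h("ul", null, h("li", null, "a"), h("li", null, "b"));
const after = h("ul", { class: "list" }, h("li", null, "a"), h("li", null, "c"));
const patches = diff(before, after);
console.log(patches);
```

Here `patches` holds two operations: setting `class` on the `ul`, and replacing the text `"b"` with `"c"` at `root/1/0`; the unchanged first item produces no DOM work at all. Production libraries add keyed matching, batching, and much more on top of this idea.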

Some years ago, Svelte introduced a radically new approach to web application development: a “compiler framework” that disappears at compile time, leaving small, highly efficient JavaScript code. That feature alone was not enough to convince the community to move to Svelte, but the recent launch of Svelte 3.0 introduced real reactive programming into the framework, and the community is thrilled, so perhaps we are witnessing the next big thing in front-end frameworks.

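Svelte’s reactivity is inspired by the destiny operator, a proposed operator (shown here in pseudocode) that keeps a variable permanently bound to an expression, so it updates whenever its inputs change: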

var a = 10;
var b <= a + 1; // hypothetical destiny operator: b always equals a + 1
a = 20;
Assert.AreEqual(21, b);

Svelte brings reactivity to JavaScript by repurposing the label statement syntax: at compile time, statements labeled with $: are made reactive, and the compiler arranges for them to be re-run, in topological order, whenever the values they depend on change:

let a = 10;
$: b = a + 1; // reactive declaration: re-run whenever a changes
a = 20;
// once Svelte re-runs the reactive statement, b === 21

This radical new idea might help in other contexts, too, so the creator of Svelte is also working on svelte-gl, a compiler framework that will generate low-level WebGL instructions directly from a 3D scene graph declared in HTMLx.
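To see the idea behind `$:` without a compiler, here is a minimal runtime sketch: reactive statements are stored as functions and re-run after every assignment. Unlike Svelte, which sorts reactive statements topologically at compile time and batches updates, this sketch simply re-runs them in declaration order (which is only topological if each statement depends on earlier ones) and updates synchronously. `createScope`, `state`, `reactive`, `set`, and `get` are hypothetical names.

```javascript
// Minimal sketch of Svelte-style reactive statements, without a compiler.
function createScope() {
  const values = {};
  const reactives = []; // re-run in declaration order after each assignment

  function flush() {
    for (const { name, compute } of reactives) {
      values[name] = compute(values);
    }
  }

  return {
    // Plain state, like `let a = 10;` in a Svelte component
    state(name, initial) {
      values[name] = initial;
    },
    // Reactive declaration, like `$: b = a + 1;`
    reactive(name, compute) {
      reactives.push({ name, compute });
      values[name] = compute(values);
    },
    // Assignment, like `a = 20;` (Svelte instruments assignments at compile time)
    set(name, value) {
      values[name] = value;
      flush();
    },
    get(name) {
      return values[name];
    },
  };
}

const scope = createScope();
scope.state("a", 10);
scope.reactive("b", ({ a }) => a + 1);
scope.reactive("c", ({ b }) => b * 2);

scope.set("a", 20);
console.log(scope.get("b"), scope.get("c")); // 21 42
```

Svelte’s advantage is that the compiler does this bookkeeping for you, so ordinary assignments are enough and no runtime dependency tracking ships to the browser.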

Needless to say, React, Angular, and Vue.js won’t disappear overnight. Their communities are huge, and they’ll remain relevant for several years to come. We are not even sure that Svelte will be the actual successor, but we can be sure of one thing: sooner or later, we’ll be using something different.

WebXR and the Future of the Immersive Web

Virtual reality has been struggling for the past 60 years to find a place in the mainstream, but the technology was just not ready. Less than ten years ago, when John Carmack joined Oculus VR (now part of Facebook Technologies, LLC), a new wave of VR started to rise, and since then, we’ve seen many new devices supporting different types of VR and, of course, a proliferation of VR-capable applications.

Browser vendors didn’t want to miss this opportunity, so they came together around the WebVR specification, which allows the creation of virtual worlds in JavaScript with WebGL and well-established libraries like three.js. However, the share of users with 6DoF (six degrees of freedom) devices was still too small for massive web deployments. The mobile web could still provide a 3D experience through the device orientation API, so for a while we saw a bunch of experiments and a lot of 360° videos.
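As an illustration of how those mobile 3D experiences worked, here is a sketch that turns the `alpha`, `beta`, and `gamma` angles of a `deviceorientation` event into a quaternion, which a viewer (for example, a three.js camera) could use to look around a 360° scene. It follows the intrinsic Z-X'-Y'' rotation order defined by the DeviceOrientation Event specification, but it ignores screen orientation and the camera-axis adjustments a real viewer needs; `orientationToQuaternion`, `multiply`, and `axisAngle` are hypothetical helpers. Note that this yields only rotation (three degrees of freedom), not the position tracking of 6DoF devices.

```javascript
// Hamilton product of two quaternions {w, x, y, z}.
function multiply(a, b) {
  return {
    w: a.w * b.w - a.x * b.x - a.y * b.y - a.z * b.z,
    x: a.w * b.x + a.x * b.w + a.y * b.z - a.z * b.y,
    y: a.w * b.y - a.x * b.z + a.y * b.w + a.z * b.x,
    z: a.w * b.z + a.x * b.y - a.y * b.x + a.z * b.w,
  };
}

// Rotation of `degrees` around a unit axis [x, y, z].
function axisAngle([x, y, z], degrees) {
  const half = (degrees * Math.PI) / 360; // half the angle, in radians
  const s = Math.sin(half);
  return { w: Math.cos(half), x: x * s, y: y * s, z: z * s };
}

// alpha about Z, then beta about the new X', then gamma about the new Y'' (degrees).
// Sensors may report null, which is treated as 0 here.
function orientationToQuaternion(alpha, beta, gamma) {
  const qz = axisAngle([0, 0, 1], alpha ?? 0);
  const qx = axisAngle([1, 0, 0], beta ?? 0);
  const qy = axisAngle([0, 1, 0], gamma ?? 0);
  return multiply(multiply(qz, qx), qy); // intrinsic rotations compose left to right
}

// In a browser, feed each sensor reading into the conversion.
if (typeof window !== "undefined") {
  window.addEventListener("deviceorientation", (event) => {
    const q = orientationToQuaternion(event.alpha, event.beta, event.gamma);
    // e.g. camera.quaternion.set(q.x, q.y, q.z, q.w) in a three.js viewer
  });
}
```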

In 2017, the introduction of ARKit and ARCore brought new capabilities to mobile devices, along with all sorts of applications offering AR and MR experiences.

However, it still feels a little unnatural to download one specific app for one specific AR experience when you are exploring the world around you. If only we could have one app to explore different experiences… That sounds familiar. We solved that problem in the past with the browser, so why not give it another shot?

Last year, Mozilla introduced the WebXR Device API specification (whose latest working draft, at the time of this writing, is from two weeks ago) to bring AR, VR, and MR (collectively, XR) capabilities to the browser.

Several of the most important browser vendors followed with their own implementations, with one important exception: mobile Safari. To prove their point, Mozilla released WebXR Viewer, a WebXR-capable browser for iOS.

Now, this is an important step, because the combination of AR and VR brings 6DoF to mobile devices and to mobile device-based headsets like Google Cardboard or the Samsung Gear VR, increasing the market share of 6DoF devices by a large margin and making large-scale web deployment possible.
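Because support still varies between browsers, WebXR pages typically start with feature detection. The sketch below uses `navigator.xr.isSessionSupported()` and the `"immersive-ar"`, `"immersive-vr"`, and `"inline"` session modes from the current WebXR specification (method names changed between the early drafts); `pickSessionMode` and its order of preference are hypothetical. The `xr` object is passed in so the logic can be exercised with a stand-in outside the browser.

```javascript
// Return the first supported WebXR session mode, in a hypothetical order of preference.
// `xr` is expected to be navigator.xr, which is undefined without WebXR support.
async function pickSessionMode(xr) {
  if (!xr) return null; // no WebXR at all: fall back to a regular page
  for (const mode of ["immersive-ar", "immersive-vr", "inline"]) {
    try {
      if (await xr.isSessionSupported(mode)) return mode;
    } catch {
      // e.g. blocked by a permissions policy: treat the mode as unsupported
    }
  }
  return null;
}

// In a browser:
// pickSessionMode(navigator.xr).then((mode) => console.log("best mode:", mode));
```

If an immersive mode is available, the page would then call `navigator.xr.requestSession(mode)` from a user gesture such as a button click, since browsers require user activation to enter immersive sessions.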

At the same time, the team at Mozilla has been working on A-Frame, a new web framework for creating 3D worlds and applications. It is a component-based, declarative framework with HTML syntax, built on three.js and WebGL, with just one goal in mind: to bring the fun and ease of use back to web programming.
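To give a feel for A-Frame’s component model, here is a sketch of a small custom component. `AFRAME.registerComponent`, `schema`, `tick(time, timeDelta)`, `this.data`, and `this.el.object3D` are part of A-Frame’s component API; the `spin` component itself and its `speed` property are hypothetical. The component definition is kept separate from the registration call so its logic can also run outside the browser.

```javascript
// A hypothetical "spin" component: rotates its entity around the Y axis.
const spinComponent = {
  // Declared properties, settable from HTML, e.g. spin="speed: 45".
  schema: {
    speed: { type: "number", default: 90 }, // degrees per second
  },

  // Called on every frame; timeDelta is the time since the last frame, in ms.
  tick(time, timeDelta) {
    const degrees = (this.data.speed * timeDelta) / 1000;
    // object3D is the underlying three.js object; its rotation is in radians.
    this.el.object3D.rotation.y += (degrees * Math.PI) / 180;
  },
};

// Register only where A-Frame has been loaded (i.e. in the browser page).
if (typeof AFRAME !== "undefined") {
  AFRAME.registerComponent("spin", spinComponent);
}
```

With the script included before the scene markup, the component is then attached declaratively, for example `<a-scene><a-box position="0 1.5 -3" color="#4CC3D9" spin="speed: 45"></a-box></a-scene>`, which is the HTML-first style the framework is built around.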

This is part of their crusade for the immersive web, a new set of ideas about what the web should look like in the future. Luckily for us, they are not alone, and we’ll start to see more and more immersive experiences on the web.

If you want to give it a try, go ahead and download the WebXR Viewer and visit this site to see the possibilities of the immersive web.

Once again, standard browser-based apps won’t fade in a year or two—we’ll probably always have them. But 3D apps and XR experiences are growing and the market is ready and eager to have them.

Native Support for ES6

Almost every technology invented in JavaScript in the past decade was created to solve problems caused by the underlying browser implementations. But the platform itself has matured a lot over the past few years, and most of those problems have disappeared, as we can see with Lodash, which once reigned over the performance benchmarks.
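As a small illustration of how much the platform has absorbed, here are a few common Lodash calls next to their native counterparts. The data is made up for the example, the equivalences are approximate (for instance, `??` also falls back on `null`, while `_.get` only falls back on `undefined`), and some of these built-ins (`Array.prototype.flat`, optional chaining, `??`) arrived in ES2019 and ES2020, after ES6.

```javascript
const nested = [1, [2, [3, [4]]]];
const users = [
  { name: "ana", age: 31 },
  { name: "bo", age: 25 },
  { name: "ana", age: 31 },
];
const config = { server: { port: 8080 } };

// _.flattenDeep(nested)
const flat = nested.flat(Infinity); // [1, 2, 3, 4]

// _.uniq(_.map(users, "name"))
const uniqueNames = [...new Set(users.map((u) => u.name))]; // ["ana", "bo"]

// _.find(users, (u) => u.age < 30)
const young = users.find((u) => u.age < 30); // { name: "bo", age: 25 }

// _.get(config, "server.port", 3000) and _.get(config, "db.host", "localhost")
const port = config.server?.port ?? 3000; // 8080
const host = config.db?.host ?? "localhost"; // "localhost"

// _.assign({}, config, { debug: true })
const withDebug = { ...config, debug: true };
```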

The same is happening with the DOM, whose problems were once the actual inspiration for creating web application frameworks. Now, it is a mature API that you can use to create apps without frameworks; in fact, that’s what web components are. They are the platform’s own “framework” for creating component-based apps.
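A minimal custom element shows what building a component on the platform alone looks like. `customElements.define`, `HTMLElement`, and `attachShadow` are standard browser APIs, while the `click-counter` element and the `renderCounter` helper are hypothetical. The markup logic lives in a plain function so it can also run outside a browser, and the element is only defined where the DOM APIs exist.

```javascript
// Pure rendering logic, kept separate from the DOM-specific class.
function renderCounter(count) {
  return `<button part="button">Clicked ${count} time${count === 1 ? "" : "s"}</button>`;
}

// Define the custom element only where the DOM APIs are available.
if (typeof HTMLElement !== "undefined" && typeof customElements !== "undefined") {
  class ClickCounter extends HTMLElement {
    constructor() {
      super();
      this.count = 0;
      // Shadow DOM encapsulates the component's markup and styles.
      this.attachShadow({ mode: "open" });
      // Clicks on the inner button bubble up to the shadow root.
      this.shadowRoot.addEventListener("click", () => {
        this.count += 1;
        this.render();
      });
    }

    // Called when the element is inserted into the document.
    connectedCallback() {
      this.render();
    }

    render() {
      this.shadowRoot.innerHTML = renderCounter(this.count);
    }
  }

  // Custom element names must contain a hyphen.
  customElements.define("click-counter", ClickCounter);
}
```

Once the script is loaded, `<click-counter></click-counter>` can be used in HTML like any built-in element, with no framework and no build step.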

Another interesting part of the platform’s evolution is the language itself. We’ve been using Babel.js for the past few years to be able to use the latest ECMAScript features, but because the standard itself has stagnated a little in recent years, browser vendors have had enough time to implement most of its features, including native support for the static import statement. So we can now start to consider building applications without Babel.js or other compilers, since the platform itself (again) supports the language features. And since Node.js uses the same V8 engine as Google Chrome, we’ve started to see stronger ES6 support in Node.js, too, including the static import statement behind the --experimental-modules flag.

This doesn’t mean that we’ll stop seeing apps being compiled at a professional level, but it does mean that starting a browser-based application will be as easy and fun as it once was.

Server-side JavaScript

Even though JavaScript was used on the server as early as 1995 with Netscape Enterprise Server, it wasn’t until Ryan Dahl’s presentation in 2009 that JavaScript started to be seriously considered for server-side apps. A lot has happened to Node.js in the past decade. It has evolved and matured a lot, once again creating the opportunity for disruption and new technologies.

In this case, the disruption comes from Node.js’s very own creator, Ryan Dahl, who has been working on a new take on secure server-side apps with Deno. Deno natively supports the latest language features, such as async/await, as well as TypeScript, the most popular compile-to-JS language, and it targets the best possible performance thanks to its implementation in Rust and its use of Tokio. More importantly, it brings a new security philosophy that differentiates it from most server-side platforms, such as Python, Ruby, or Java. Inspired by the browser security model, Deno lets a process use the host’s resources only after the user has explicitly granted it permission. This might sound a bit tedious at first, but it could have far-reaching implications, allowing us to run untrusted code in a secure environment simply by trusting the platform.
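Here is a sketch of what that permission model looks like in code. `Deno.permissions.query()` and `Deno.permissions.request()`, their `{ name: "read", path }` descriptors, and the `"granted"`, `"prompt"`, and `"denied"` states are part of Deno 1.x and postdate the early versions discussed here; `ensureReadAccess` is a hypothetical helper. The permissions object is passed in as a parameter so the logic can be exercised with a stand-in outside Deno.

```javascript
// Check read access for a path, asking at runtime if Deno reports "prompt".
// `permissions` is expected to be Deno.permissions.
async function ensureReadAccess(permissions, path) {
  const descriptor = { name: "read", path };
  const status = await permissions.query(descriptor);
  if (status.state === "granted") return true; // e.g. granted via --allow-read
  if (status.state === "prompt") {
    const answer = await permissions.request(descriptor);
    return answer.state === "granted";
  }
  return false; // "denied": the process may not read this path
}
```

In Deno, a script would call `await ensureReadAccess(Deno.permissions, "./data.json")` before `Deno.readTextFile("./data.json")`; running it as `deno run --allow-read=./data.json app.js` grants that single permission up front, and nothing else on the host is readable.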

Node.js will still be around in the future, but maybe we’ll start to see serverless services like AWS Lambda and Azure Functions offer Deno’s functionality as a way to run untrusted server-side code on their systems.

Conclusion

These are exciting times in the JavaScript world. A lot of technologies have matured enough to leave room for innovation, the active community never ceases to amaze us with brilliant and incredible ideas, and, as many well-established tools quickly reach their mature stages, we expect a lot of new alternatives to them. We won’t stop using those tools, since many of them are really good and battle-proven, but new and exciting markets will start to emerge, and you’d better be prepared.

Staying up to date with the latest in the JavaScript world isn’t easy because of the pace of development, but there are some sources that can really help. First, the most important news source, in my opinion, is Echo JS, where you can find an incredible amount of new content every hour. If you don’t have the time, though, the JavaScript Weekly newsletter is an excellent summary of the week in JS. Besides this, it is also important to keep an eye on conferences around the world, and YouTube channels like JSConf, React Conf, and Google Chrome Developers are wonderfully helpful.

Conversely, if you’re interested in seeing some constructive critique of where JavaScript is heading, I recommend reading As a JS Developer, This Is What Keeps Me Up at Night by fellow JavaScript developer Justen Robertson.


Understanding the basics

If you want to work with the web, sooner or later you’ll have to deal with JavaScript. Also, due to its popularity, JavaScript has a good chance of becoming the “lingua franca” of programming languages. All of this makes it very important.

JavaScript will be replaced… eventually, in the distant future. But for now, and at least for the next decade or so, we can be sure that our knowledge in JavaScript will be useful.

Yes, it is. We usually tend to think in terms of the number of JavaScript compilers in the world, but now we can also take into account the success of platforms like Node.js, React Native, Electron, and Johnny-Five, as well as the community’s demand to use JS outside the web.

Alejandro Hernandez

Córdoba, Cordoba, Argentina

Member since October 30, 2012
