Hire PostgreSQL Developers

Hire the Top 3% of Freelance PostgreSQL Developers

Hire PostgreSQL developers, consultants, experts, and professionals on demand. Top companies and startups choose PostgreSQL developers from Toptal for database design, performance tuning, complex queries, data migration, and more.

No-Risk Trial, Pay Only If Satisfied.

Hire Freelance PostgreSQL Developers

Donald Windrem

Donald has a wealth of experience—ten plus years with Oracle Database, five years with PostgreSQL, SQL and other databases, and five years with Python development. Recently, he’s been working with Django, Linux Shell, JavaScript, HTML, among others. Environment-wise, Donald has worked with global, multicultural development teams, has AWS and GCP experience, and is equally comfortable with agile and waterfall methodologies.

Show MoreLuis Henrique Vibranovski

Luis's strongest skill is SQLPerformance. After more than twenty years of hands-on practice and training on SQL Server and gaining the SQL Server Microsoft certification, Luis is ready to work with MySQL and PostgreSQL, developing and fixing queries, indexes and procedures, and other SQL performance-related issues.

Show MoreVicki Alden

Vicki is a highly skilled full-stack software engineer with six years of experience designing, developing, and delivering innovative web apps. She is proficient in JavaScript and TypeScript and has extensive knowledge of React, Node.js, PostgreSQL, TypeORM, and Docker. She is committed to continuous learning, mentorship, and collaborating with cross-functional teams to achieve project success. Vicki has a good track record of owning projects and consistently delivered outstanding results.

Show MoreGordan Bobic

Gordon is an expert database developer and database administrator (DBA) who has over 20 years of experience with Linux (preference for CentOS/RHEL), databases (MySQL, MariaDB, PostgreSQL), and development in a variety of languages. Besides having multiple years of in-depth experience under his belt, he also has a bachelor's degree in computer science and is certified as a MySQL administrator and developer.

Show MoreIvan Paz

Ivan is a data architect whose expertise includes PostgreSQL, MySQL, Oracle, ElasticSearch, OpenSearch, EMR, Spark, software development in Java and Python, and cloud servers (Digital Ocean, Google Cloud, AWS). He has designed and administrated huge SQL databases that handles millions of requests daily, developed PL/pgSQL procedures for high-performance data manipulation, configured Elasticsearch/OpenSearch clusters for vast amounts of data, and built entire BigData environments for BI.

Show MoreMikhail Fishzon

Mikhail is an alumnus of Google and Lyft and a data engineer who enjoys turning real-life business problems into scalable data platforms. Over the last two decades, he has helped companies architect, build, and maintain performance-optimized databases and data pipelines. Mikhail is knowledgeable in database optimization techniques and has achieved a 99% increase in slow-running query performance.

Show MoreJohan Dahlin

Johan is a developer with 18 years of professional experience—his key technologies are Python, JavaScript, Flask, GTK/GNOME, PostgreSQL, Jenkins, and Gerrit. Johan has been contributing to open-source communities for many years, including maintaining the key technologies used in the GNOME desktop environment.

Show MoreBerenice Harillo

Berenice is a senior database engineer with solid experience working with relational and non-relational databases on-premises and in different clouds. She excels in managing massive data sets with various tools and dealing with performance issues. As a database expert, Berenice enjoys migrating it to the cloud and creating models and ETL in data warehousing.

Show MorePhilip McClarence

Philip is an experienced database engineer and administrator (DBA) with over 20 years of experience. His primary focus is PostgreSQL, but he has worked with several other databases, including Oracle and the AWS suite. He has worked on every aspect of the database lifecycle, from designing and building greenfield projects to maintaining and tuning high throughput 24/7 systems and everything in between, including complex data migration projects.

Show MoreSoni Maula Harriz

With 10+ years in the computer industry, Soni's worked mainly as a PostgreSQL DBA and SQL developer—managing PostgreSQL servers, including Slony replication, streaming replication, Londiste 3, and Pacemaker-Corosync-DRBD stack. He's done performance tuning, data-center migrations, routine maintenance, troubleshooting, and daily DBA tasks. Soni's also experienced in Business Intelligence Analytics software using Looker and Amazon Redshift.

Show MoreVitaly Fomichev

Vitaly is a senior web application developer with more than two decades of professional experience, working primarily with Ruby on Rails and PostgreSQL. He is comfortable working as a team lead and as an individual contributor. Vitaly loves solving technical challenges, but his main focus is to understand business needs and provide significant value for modern business as a whole. He believes the most amazing thing about modern technologies is how they change our world.

Show MoreDiscover More PostgreSQL Developers in the Toptal Network

Start HiringA Hiring Guide

Guide to Hiring a Great PostgreSQL Developer

PostgreSQL developers are skilled in creating systems for gathering, processing, and storing data. This guide to hiring PostgreSQL developers features interview questions and answers, as well as best practices that will help you identify the best candidates for your company.

Read Hiring Guide... allows corporations to quickly assemble teams that have the right skills for specific projects.

Despite accelerating demand for coders, Toptal prides itself on almost Ivy League-level vetting.

How to Hire PostgreSQL Consultants Through Toptal

Talk to One of Our Client Advisors

Work With Hand-selected Talent

The Right Fit, Guaranteed

EXCEPTIONAL TALENT

How We Source the Top 3% of PostgreSQL Developers

Our name “Toptal” comes from Top Talent—meaning we constantly strive to find and work with the best from around the world. Our rigorous screening process identifies experts in their domains who have passion and drive.

Of the thousands of applications Toptal sees each month, typically fewer than 3% are accepted.

Toptal PostgreSQL Case Studies

Discover how our PostgreSQL developers help the world’s top companies drive innovation at scale.

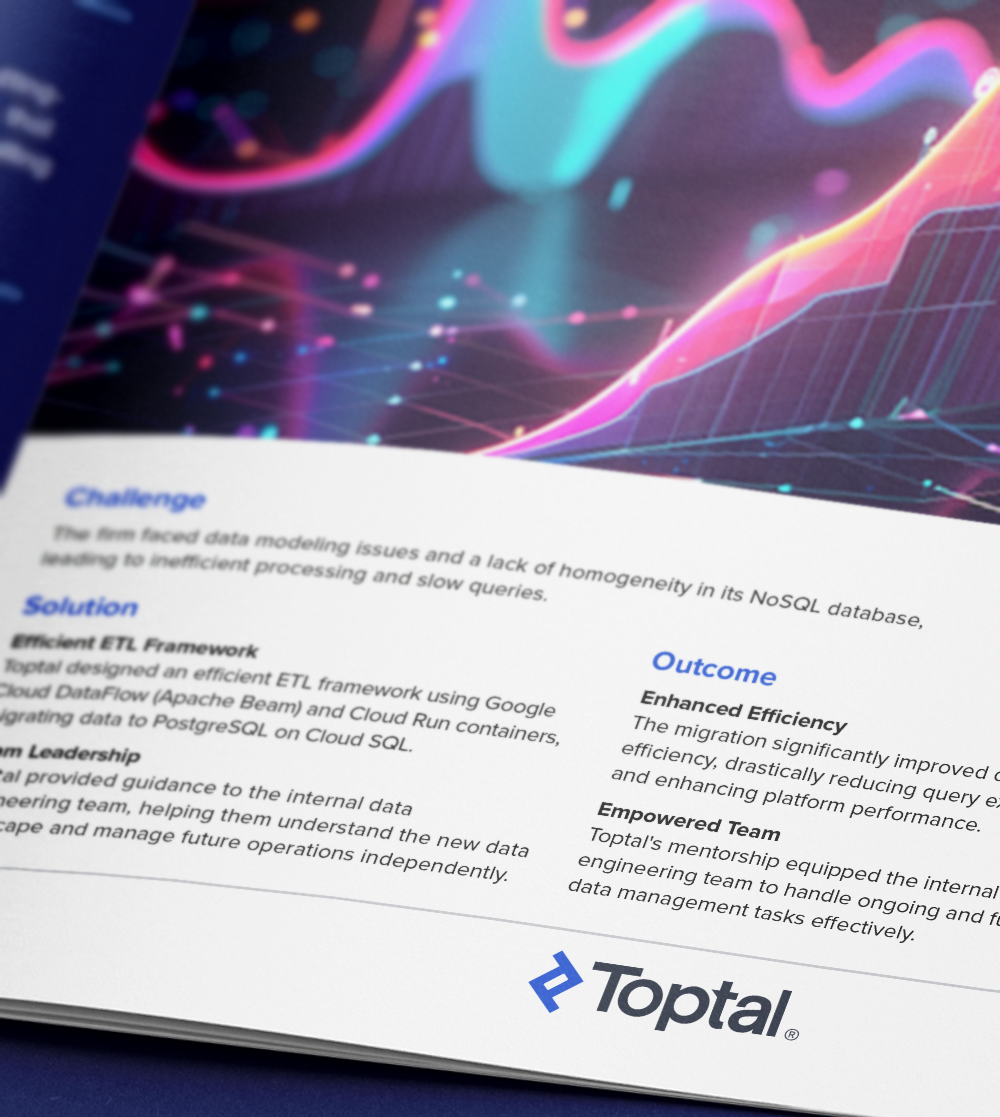

Toptal enhances data processing efficiency with database cloud migration.

Challenge: A healthcare compliance firm improved performance and resolved data issues by migrating from Cloud Firestore to PostgreSQL on Cloud SQL with Toptal’s expertise. The firm faced data modeling issues and a lack of homogeneity in its NoSQL database, leading to inefficient processing and slow queries.

Solution: Toptal’s PostgreSQL developer created an efficient ETL framework using Google Cloud DataFlow (Apache Beam) and Cloud Run containers, migrating data to PostgreSQL on Cloud SQL. Toptal provided guidance to the internal data engineering team, helping them understand the new data landscape and manage future operations independently.

Outcome: The migration significantly improved data processing efficiency, drastically reducing query execution times and enhancing platform performance. Toptal’s PostgreSQL developer equipped the internal data engineering team to handle ongoing and future data management tasks effectively.

- Data Engineering

- ETL

- Cloud Engineering

Capabilities of PostgreSQL Developers

PostgreSQL developers design and manage databases that support large-scale, data-intensive applications. They apply advanced PostgreSQL capabilities—such as custom indexing, query tuning, and replication—to ensure fast data access, minimal downtime, and secure operations. By aligning database architecture with application logic, they enable systems that can grow efficiently and deliver consistent performance under heavy load.

Schema Design for Scalable Architecture

Advanced SQL Query Engineering

Stored Procedure and Function Development

Strategic Indexing for Performance Gains

Cross-platform Database Migration

Replication Setup and Management

Performance Tuning and Query Optimization

Robust Security Implementation

Scalable ETL Pipeline Development

Proactive Maintenance and Upgrades

Find the Right Talent for Every Project

Full-stack PostgreSQL Developers

Dedicated PostgreSQL Developers

Offshore PostgreSQL Developers

Remote PostgreSQL Developers

FAQs

How much does it cost to hire a PostgreSQL developer?

The cost associated with hiring a PostgreSQL developer depends on various factors, including preferred talent location, complexity and size of the project you’re hiring for, seniority, engagement commitment (hourly, part-time, or full-time), and more. In the US, for example, Glassdoor’s reported average total annual pay for PostgreSQL developers is $111,830 as of May 4, 2023. With Toptal, you can speak with an expert talent matcher who will help you understand the cost of talent with the right skills and seniority level for your needs. To get started, schedule a call with us — it’s free, and there’s no obligation to hire with Toptal.

How quickly can you hire with Toptal?

Typically, you can hire PostgreSQL developers with Toptal in about 48 hours. For larger teams of talent or Managed Delivery, timelines may vary. Our talent matchers are highly skilled in the same fields they’re matching in—they’re not recruiters or HR reps. They’ll work with you to understand your goals, technical needs, and team dynamics, and match you with ideal candidates from our vetted global talent network.

Once you select your PostgreSQL professional, you’ll have a no-risk trial period to ensure they’re the perfect fit. Our matching process has a 98% trial-to-hire rate, so you can rest assured that you’re getting the best fit every time.

Are PostgreSQL developers in demand?

Yes, PostgreSQL developers are in high demand. According to Gartner, the DBMS market has seen consistent growth (it approached $80 billion in 2021) that continues to accelerate. PostgreSQL is a top choice for database management (and the preferred database technology among professional developers). It is a versatile tool with advanced features and it supports relational databases while also offering NoSQL functionality. The need for PostgreSQL engineers continues to increase.

How should I choose the best PostgreSQL developers for my project?

The best PostgreSQL developers for your project should have a foundation in relational databases and SQL expertise. They should have prior PostgreSQL experience and understand its features as they relate to data, performance, and reliability. Developers should also possess certain complementary skills: proficiency with a procedural language (e.g., PL/pgSQL or PL/Python), familiarity with PostgreSQL’s specific JSON operators and functions (if NoSQL functionalities are required), and mastery of C-family languages (in cases where in-house PostgreSQL extensions need creation or maintenance). Finally, it’s crucial for every engineer to have debugging and problem-solving abilities.

How do I hire PostgreSQL developers?

To hire the right PostgreSQL developer, it’s important to evaluate a candidate’s experience, technical skills, and communication skills. You’ll also want to consider the fit with your particular industry, company, and project. Toptal’s rigorous screening process ensures that every member of our network has excellent experience and skills, and our team will match you with the perfect PostgreSQL developers for your project.

Is PostgreSQL a good option for my project?

PostgreSQL is an open-source relational database that is currently the most popular of its kind. It offers many relevant extensions to the relational model, such as PostGIS geospatial support, ACID compliance, easy implementation of custom datatypes, custom functions, and the use of different programming languages without recompiling the engine. PostgreSQL is a very powerful database engine for a vast number of use cases. Extremely efficient and secure, with huge adoption, PostgreSQL is a solid choice when it comes to open-source databases for now and the foreseeable future.

How are Toptal PostgreSQL consultants different?

At Toptal, we thoroughly screen our PostgreSQL consultants to ensure we only match you with the highest caliber of talent. Of the more than 200,000 people who apply to join the Toptal network each year, fewer than 3% make the cut.

In addition to screening for industry-leading expertise, we also assess candidates’ language and interpersonal skills to ensure that you have a smooth working relationship.

When you hire PostgreSQL experts with Toptal, you’ll always work with world-class, custom-matched PostgreSQL developers ready to help you achieve your goals.

Can you hire PostgreSQL experts on an hourly basis or for project-based tasks?

You can hire PostgreSQL professionals on an hourly, part-time, or full-time basis. Toptal can also manage the entire project from end-to-end with our Managed Delivery offering. Whether you hire a PostgreSQL developer for a full- or part-time position, you’ll have the control and flexibility to scale your team up or down as your needs evolve. Our PostgreSQL developers can fully integrate into your existing team for a seamless working experience.

What is the no-risk trial period for Toptal PostgreSQL professionals?

We make sure that each engagement between you and your PostgreSQL developer begins with a trial period of up to two weeks. This means that you have time to confirm the engagement will be successful. If you’re completely satisfied with the results, we’ll bill you for the time and continue the engagement for as long as you’d like. If you’re not completely satisfied, you won’t be billed. From there, we can either part ways, or we can provide you with another PostgreSQL developer who may be a better fit and with whom we will begin a second, no-risk trial.

How to Hire PostgreSQL Developers

Jano is a full-stack developer and founder specializing in databases. Using PostgreSQL, he has worked on database services with government data, recommendation engines, and performance optimization projects, and has experience with startups, consulting, and leading small teams. He has a master’s (summa cum laude) in software engineering from the Slovak University of Technology in Bratislava.

Expertise

The Expanding Demand for PostgreSQL Developers

The database management systems (DBMS) market is booming—it approached $80 billion in 2021, following a trend of consistent growth over the five preceding years. PostgreSQL, also known as Postgres, is a top choice for database management and is the preferred database technology among professional developers. As the DBMS market expands, the need for PostgreSQL engineers continues to increase, leading companies to tap into a growing talent pool of specialized developers.

Hiring PostgreSQL developers is not always straightforward: While other SQL engineers do have some skill overlap with PostgreSQL developers, only experienced PostgreSQL specialists know how to leverage PostgreSQL’s advanced features and how to be effective at performance tuning to maintain data integrity—especially for large-scale PostgreSQL projects that demand stability, performance, and security..

In this Hiring Guide, we outline the critical components of a PostgreSQL job description, interview questions, and assessments. We also define the difference between PostgreSQL and SQL developers from a hiring perspective and the complementary technical skills your role might require—especially if you’re integrating PostgreSQL into broader app development or enterprise database solutions using stacks that include PHP, Java, or JavaScript.

What attributes distinguish quality PostgreSQL Developers from others?

When looking to hire a PostgreSQL developer, it’s important to understand how PostgreSQL developers stand out in a broader context of frameworks and database expertise (i.e., PostgreSQL versus SQL versus NoSQL skills). There are two basic types of databases:

-

Relational (RDBMS) or SQL databases use tables with rows and columns, which is ideal for structured data; these databases facilitate complex queries and help developers enforce data consistency. Examples include PostgreSQL, MySQL, and Microsoft (MS) SQL Server.

- With all of them, developers use Structured Query Language (SQL) to create and find data—but PostgreSQL extends the language with advanced features and customizations.

- Nonrelational or NoSQL databases use ad hoc, JSON-formatted “documents,” allowing for maximum flexibility amid changing requirements.

While most candidates should be savvy with relational databases, the best PostgreSQL developers will also identify which advanced PostgreSQL features are a good fit for your assignment.

You should require a high-level understanding of standard features and functionalities, such as:

Data | Foreign keys; stored procedures; support for a variety of data types |

Performance | Multiversion concurrency control (MVCC) and locking mechanisms; nested transactions; advanced query planner and optimizer |

Reliability | Point-in-time recovery (PITR); checkpoints and write-ahead logging (WAL); tablespaces; asynchronous replication |

Other | Secure access-control system; international character sets; support for a variety of languages; time stamps with time zones |

To gauge a candidate’s depth of knowledge, ask them about specific PostgreSQL abilities and limitations, using its feature matrix for reference.

How can you identify the ideal PostgreSQL Developer for you?

Unless you are hiring junior-level engineers and have the flexibility for additional onboarding and training time, you should focus your efforts on finding developers with significant hands-on experience with PostgreSQL, particularly for mission-critical development services that rely on scalable data management and data integrity. When reviewing résumés, be wary of candidates who list only experience with SQL: SQL generalists will likely need to unlearn some habits specific to other SQL systems lest they risk implementing antipatterns that may cost your company—even before your app scales.

Ideally, candidates will have plenty of experience with the PostgreSQL subfeatures that are crucial to your project (e.g., JSON datatypes and functions for an app using a hybrid table/document architecture across iOS and Android platforms).

The mastery of PostgreSQL requires several complementary skills, but not all of them may be relevant to a given project.

Procedural languages allow PostgreSQL developers to reuse SQL-related code from directly within the database for performance and data consistency. There are four procedural languages in PostgreSQL’s core distribution:

If your project already relies heavily on one or more of these, you may need to hire a developer with Python, Perl, or Tcl skills. However, that list has little to do with how developers interface with PostgreSQL from an app’s code. For example, if your app (or its back end) is written in Java, a Java developer will use PostgreSQL’s client libraries to run queries; a PostgreSQL developer can collaborate with them better if they also know Java, depending on team size and role overlap.

Certain database projects may also benefit from JSON-proficient developers. As mentioned, PostgreSQL is primarily a relational database, but PostgreSQL engineers can still harness the power of NoSQL functionality. Look to hire someone with strong JSON SQL experience and mastery of JSON datatype operators if you are considering a combined document/relational database model to support cost-effective performance at scale.

Finally, in rare cases, familiarity with C-family languages (C or C++) may be needed when hiring developers so they can create and maintain custom PostgreSQL extensions. However, many extensions exist as open-source projects and can be leveraged as is without C or C++ skills.

How to Write a PostgreSQL Job Description for Your Project

The next step in hiring a PostgreSQL developer is to customize role requirements based on your data management needs and business goals:

Scenario | Level | Requirements |

You are just getting started with PostgreSQL and searching for application developers. | Junior- or mid-level engineer | Look for candidates who are generally familiar with SQL. It may not make sense to have a hard requirement on PostgreSQL experience for all engineers, as this could limit your choices. Excellent application developers familiar with any relational database can learn PostgreSQL’s features relatively easily. It is helpful, however, for at least one person on the team to know advanced PostgreSQL features to ease the learning curve for other developers. In particular, a complex new product would benefit from having a PostgreSQL-savvy database architect available to shape the project during its infancy. |

You are already using PostgreSQL and hope to enhance your project’s performance. | Senior data engineer | Look for a PostgreSQL database expert with advanced SQL knowledge. Such a specialist can provide you with performance optimization suggestions and monitoring techniques, and guide the team’s best practices. Search for candidates with advanced knowledge of performance tuning and the ability to assess an application’s bottlenecks, as well as leadership experience in coaching more junior engineers on team standards. |

Regardless of their level of expertise in PostgreSQL, a solid candidate should have sufficient experience with databases and advanced knowledge of the SQL syntax as a foundation for additional PostgreSQL skills.

Budget-conscious teams should also consider whether to hire freelance or full-time candidates based on project needs and pricing. Clarify whether compensation is structured by salary or cost-effective hourly rate.

What are the most important PostgreSQL interview questions?

Effective interview questions are a critical piece in the hiring puzzle—and your ticket to top developers. You might ask standard SQL interview questions on topics like JOIN clauses and subqueries or more advanced questions on topics like window functions, CTEs, and recursive queries. The best interviews tailor questions based on project-specific factors.

After standard SQL questions, continue with more specific PostgreSQL interview questions, such as:

How would you handle deploying a database model change (e.g., an important column rename) with minimal downtime?

PostgreSQL supports transactional DDL, but even renaming a column can block a full table for reads and writes. This can cause downtime and deadlocks at the database level and may need nontrivial synchronization when deploying new application code that can work with both versions of the table. Splitting a model change into multiple steps that are safe to run at any time is the correct approach. Example action items might include:

- Notifying key stakeholders that production deployment may include possible downtime.

- Creating a backup copy of the table for later restoration, if required (i.e., when performing structural changes or bulk data updates in an important table).

- Running the column rename and subsequent possible data manipulation in the column.

- Committing changes (or rolling them back if unexpected issues occur).

- Verifying with stakeholders that the application works as expected after finishing deployment.

You should also test each candidate’s understanding of complex PostgreSQL features that are relevant to your project. For example:

When would you consider using a partial index?

Partial indexes are suitable for tables and queries in cases in which you are interested only in a subset of the data. A good example is indexing a status column that filters out most rows. This case might occur in the real world for a table that contains processed and unprocessed job data, where a business is only interested in quickly fetching unprocessed data using the status column.

When would you use table partitioning?

When large amounts of data are stored in databases, performance and scaling suffer. Partitioning helps by dividing big tables into smaller tables, which reduces memory swap problems and table scans. As a result, huge data sets become more accessible and manageable. Most of the operations on unpartitioned tables are applicable to partitioned ones.

Focusing on database administration tasks (e.g., optimization and monitoring) may also be useful when looking to hire seasoned PostgreSQL developers:

What are the key metrics in a PostgreSQL database, and which tools would you use to monitor them?

In terms of query performance, strategic logging is required for any nontrivial application. It is crucial to recognize long-running queries and optimize them (standard approaches include running EXPLAIN ANALYZE and checking query costs). On the application level, it is vital to have visibility into how many queries are issued for a single request to identify N+1 query problems. To handle scaling, it is a best practice to monitor the number of open connections and replication lags.

Services like Datadog and New Relic provide actionable insights regarding PostgreSQL performance and are easy to integrate into existing applications of various languages. pgAdmin is also an excellent open-source graphical tool for database administration; it can monitor database configuration and activity, track sessions and locks, catch long-running queries, and more, but much of this functionality can also be achieved by a PostgreSQL developer skilled with the psql command line.

What would you do if a client’s application (dependent on a database) is stuck and nonresponsive?

Cooperation with other application teams is crucial, as the root cause of the problem may be outside of the database layer (e.g., unresponsive application servers or network firewalls). If all other layers are functional, then engineers should use the performance optimization methods for debugging we’ve laid out here.

Finally, a case study approach may be useful while interviewing. The situation below assesses a candidate’s experience with at least one other relational database (e.g., MySQL, Oracle, MS SQL).

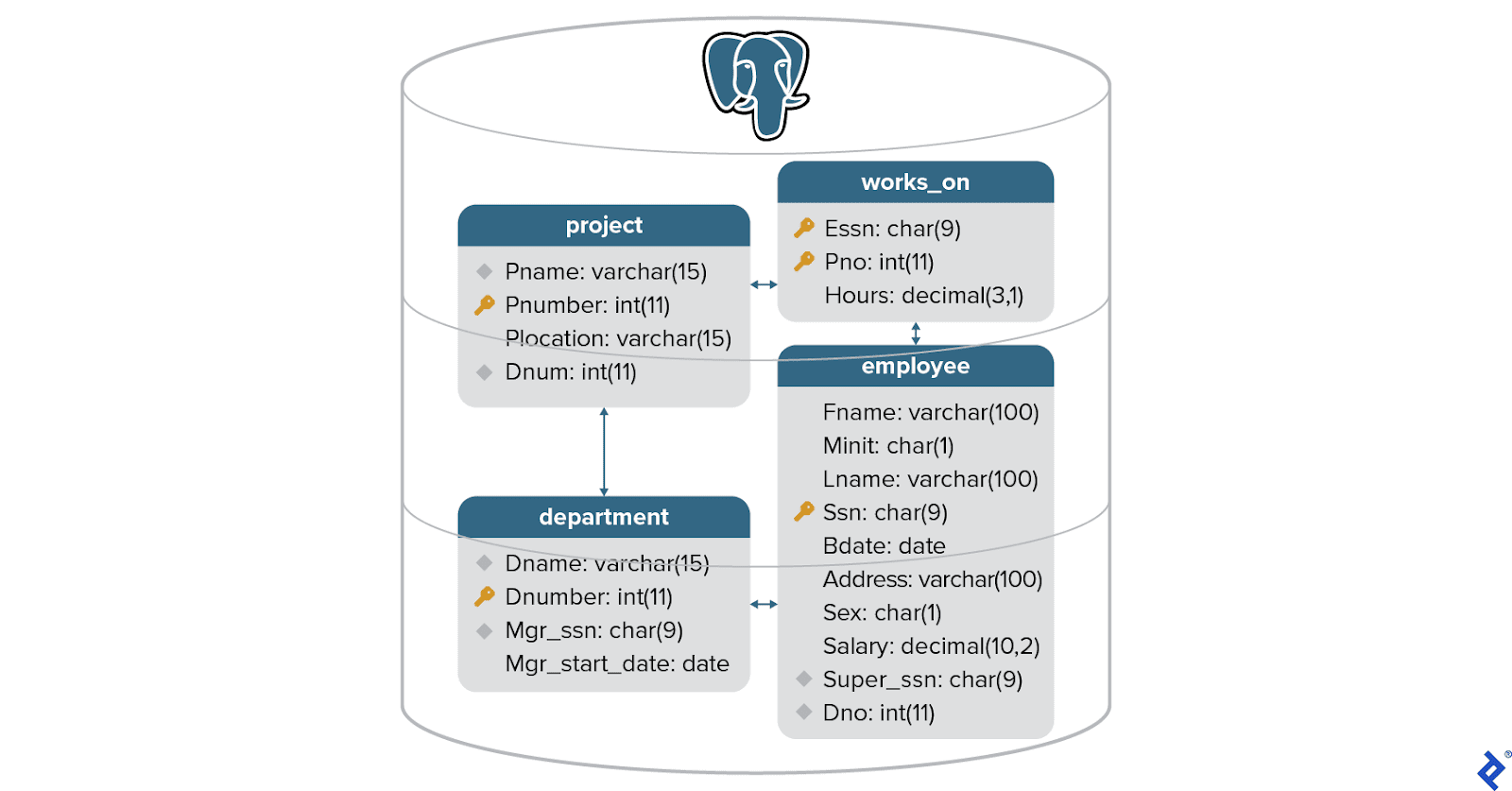

To determine each candidate’s ability to deal with real-world problems, ask them to create a relational database model that meets a set of business requirements that aligns with your project’s use cases.

Based on each candidate’s model, ask them:

- How would you query and manipulate data in the database?

- What trade-offs did you make in terms of performance, scalability, and maintenance?

- How would you run an

EXPLAINon a query? Please include a proposal to optimize the query plan.

All candidates should demonstrate a clear understanding of these relational database concepts.

While interviewing, consider other relevant developer strengths from the complementary technologies mentioned in the “How can you identify the ideal PostgreSQL Developer for you?” section, plus more general engineering topics:

- Dockerized and cloud technologies are prevalent in PostgreSQL application architectures. Ask candidates about their experience with cloud database configurations, including experience with dockerized environments (containers) and major cloud providers.

- No software engineering interview is complete without situational questions that assess problem-solving and debugging skills. These capabilities are essential for all developers, regardless of their technology or language specialization.

If you need support on high-level performance or architecture decisions, inquire directly about plans to assess your specific problem. Experts working at this level will have strong experience and referrals. They may suggest clear proposals for onboarding clients, defining the project’s next steps, and managing stakeholder expectations.

Why do companies hire PostgreSQL Developers?

PostgreSQL engineers can handle various data sets, whether serving small projects or global enterprises, and PostgreSQL is available for use with major cloud providers like Amazon Web Services, Microsoft Azure, and Google Cloud.

Common real-world use cases for PostgreSQL and other relational databases include transactional applications (e.g., customer relationship management systems) and other scenarios in which data does not change frequently (e.g., storing healthcare data, government data, electricity or mobile plan data, or financial data). An SQL generalist could be all that you need in such cases if there’s already enough PostgreSQL expertise on your team, but you’ll need a PostgreSQL specialist for your app to scale at an enterprise level.

PostgreSQL is an RDBMS first, but PostgreSQL developers can also harness the power of NoSQL functionality and work on projects with unstructured data using a combined document/relational data model. This approach is possible due to PostgreSQL’s rich JSON support, and it requires an engineer who has worked specifically with JSON datatypes in a production system.

While PostgreSQL is a versatile tool, it may not be suitable for every project. For example, other approaches offer better solutions when dealing with huge amounts of unstructured data or blob storage where you don’t need ACID compliance. But you still may benefit from PostgreSQL developers when using other technologies if you are migrating data from PostgreSQL.

As the DBMS market continues to grow, companies will increasingly look to expert PostgreSQL developers to harness the profits of large data sets and stay competitive. With the guidance offered here, you will be better prepared for every step of the hiring journey—from drafting your PostgreSQL job description to identifying the required advanced PostgreSQL skills for development. Zeroing in on the right PostgreSQL experts will drive your data—and your business—toward success.

The technical content presented in this article was reviewed by Tomislav Delas.

Featured Toptal PostgreSQL Publications

Top PostgreSQL Developers Are in High Demand.