Optimizing Website Performance and Critical Rendering Path

Does your web page’s rendering performance meet today’s standards? Bad rendering performance can translate into a relatively high bounce rate.

In this article, Toptal Freelance Web Developer Ilya Chernov explores the things that can lead to high rendering times, and how to fix them.

Ilya is an accomplished engineer with over five years of experience working on remote and onsite teams, as well as leading other developers.

Does your web page’s rendering performance meet today’s standards? Rendering is the process of translating a server’s response into the picture the browser “paints” when a user visits a website. Bad rendering performance can translate into a relatively high bounce rate.

There are different server responses which determine whether or not a page is rendered. In this article, we are going to focus on the initial render of the web page, which starts with parsing HTML (provided the browser has successfully received HTML as the server’s response). We’ll explore the things that can lead to high rendering times, and how to fix them.

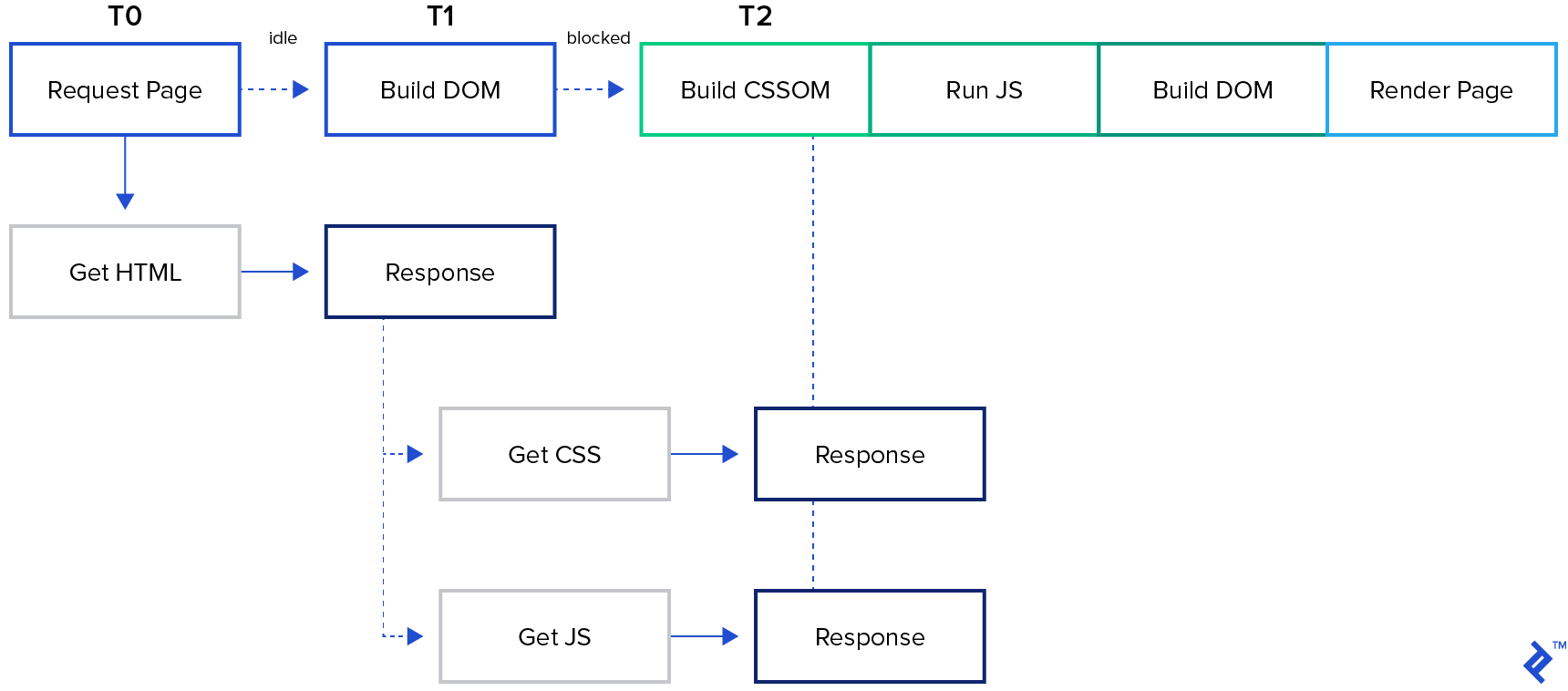

Critical Rendering Path

The critical rendering path (CRP) is the process your browser goes through to convert code into displayable pixels on your screen. It has several stages. Some of them can be performed in parallel to save time, but others have to be done sequentially. The stages are described below.

First of all, once the browser gets the response, it starts parsing it. When it encounters a dependency, it tries to download it.

If it’s a stylesheet file, the browser will have to parse it completely before rendering the page, and that’s why CSS is said to be render blocking.

If it’s a script, the browser has to: stop parsing, download the script, and run it. Only after that can it continue parsing, because JavaScript programs can alter the contents of a web page (HTML, in particular). And that’s why JS is called parser blocking.

Once all the parsing is done, the browser has the Document Object Model (DOM) and Cascading Style Sheets Object Model (CSSOM) built. Combining them together gives the Render Tree. The non-displayed parts of the page don’t make it into the Render Tree, because it only contains the data necessary to draw the page.

The penultimate step is to translate the Render Tree into Layout. This stage is also called Reflow. That’s where the position and size of every node in the Render Tree get calculated.

Finally, the last step is Paint. It involves literally coloring the pixels according to the data the browser has calculated during the previous stages.

Optimization-related Conclusions

As you can guess, the process of website performance optimization involves changes to the website that reduce:

- The amount of data that has to be transferred

- The number of resources the browser has to download (especially the blocking ones)

- The length of CRP
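The first two quantities in the list above can be tracked with a very small model. The sketch below is only an illustration: the `PageResource` shape, the `summarize` function, the URLs, and the byte counts are all hypothetical, and a real audit would take these numbers from the browser's developer tools.

```typescript
// Hypothetical description of one resource referenced by a page.
interface PageResource {
  url: string;
  bytes: number;     // transfer size
  blocking: boolean; // true if it blocks parsing or rendering
}

interface CriticalSummary {
  totalBytes: number;    // everything the page transfers
  criticalCount: number; // number of blocking resources
  criticalBytes: number; // bytes that must arrive before the first render
}

function summarize(resources: PageResource[]): CriticalSummary {
  let totalBytes = 0;
  let criticalCount = 0;
  let criticalBytes = 0;
  for (const r of resources) {
    totalBytes += r.bytes;
    if (r.blocking) {
      criticalCount += 1;
      criticalBytes += r.bytes;
    }
  }
  return { totalBytes, criticalCount, criticalBytes };
}

// Made-up numbers, for illustration only.
const page: PageResource[] = [
  { url: "/index.html", bytes: 12000, blocking: true },
  { url: "/css/main.css", bytes: 30000, blocking: true },
  { url: "/css/print.css", bytes: 4000, blocking: false },
  { url: "/js/analytics.js", bytes: 45000, blocking: false },
];
const summary = summarize(page);
```

Comparing such a summary before and after a change shows whether the change actually reduced what the first render depends on.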

Below, we’ll dive into the details of how it’s done, but first, there is an important rule to observe.

How To Measure Performance

An important rule of optimization is: Measure first, optimize as needed. Most browsers’ developer tools have a tab called Performance, and that’s where the measurements happen. When optimizing for the fastest initial render, modern performance measurement focuses on Core Web Vitals, which reflect real user experience. The most important metrics to monitor are:

- Largest Contentful Paint: Measures how quickly the main content of a page becomes visible.

- Cumulative Layout Shift: Measures visual stability and how much elements shift during loading.

- Interaction to Next Paint: Measures responsiveness by tracking how quickly the page reacts to user input.
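To make these metrics actionable, each measured value can be compared against a threshold. The sketch below assumes the thresholds Google publishes for Core Web Vitals at the time of writing (they may change), and the `rate` function and type names are hypothetical.

```typescript
type Metric = "LCP" | "CLS" | "INP";
type Rating = "good" | "needs improvement" | "poor";

// Upper bounds for "good" and "needs improvement".
// LCP and INP are in milliseconds; CLS is a unitless score.
// Assumption: these are the publicly documented thresholds at the time of writing.
const THRESHOLDS: Record<Metric, [number, number]> = {
  LCP: [2500, 4000],
  CLS: [0.1, 0.25],
  INP: [200, 500],
};

function rate(metric: Metric, value: number): Rating {
  const [good, needsImprovement] = THRESHOLDS[metric];
  if (value <= good) return "good";
  if (value <= needsImprovement) return "needs improvement";
  return "poor";
}
```

For example, `rate("LCP", 3000)` returns `"needs improvement"`, which suggests the main content should become visible sooner.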

Besides the rendering time, there are other things to take into account—most importantly, how many blocking resources are used and how long it takes to download them. This information is found in the Performance tab after the measurements are made.

Performance Optimization Strategies

Given what we’ve learned above, there are three main strategies for website performance optimization:

- Minimizing the amount of data to be transferred over the wire,

- Reducing the total number of resources to be transferred over the wire, and

- Shortening the critical rendering path

1. Minimize the Amount of Data To Be Transferred

First of all, remove all unused parts, such as unreachable functions in JavaScript, styles with selectors that never match any element, and HTML tags that are forever hidden with CSS. Secondly, remove all duplicates.

Then, I recommend putting an automatic process of minification in place. For example, it should remove all the comments from what your back end is serving (but not the source code) and every character that bears no additional information (such as whitespace characters in JS).
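To show what minification does, here is a deliberately naive CSS minifier. It is a sketch, not a replacement for a real build tool: the `minifyCss` name is hypothetical, and the function does not understand string literals or `url(...)` values, so it can break stylesheets that contain comment-like or whitespace-sensitive text inside them.

```typescript
// Naive CSS minifier: strips comments and redundant whitespace.
// For illustration only; it ignores strings and url(...) values.
function minifyCss(css: string): string {
  return css
    .replace(/\/\*[\s\S]*?\*\//g, "")  // remove /* ... */ comments
    .replace(/\s+/g, " ")              // collapse runs of whitespace
    .replace(/\s*([{}:;,])\s*/g, "$1") // drop spaces around punctuation
    .replace(/;}/g, "}")               // drop the last semicolon in a block
    .trim();
}
```

Given a small rule with a comment, line breaks, and indentation, the function returns the same rule on a single line without the comment, for example `.title{color:red;margin:0 auto}`.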

After this is done, what we are left with is plain text. It means we can safely apply a compression algorithm such as GZIP or Brotli, both of which are widely supported by modern browsers.
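Browsers announce which compression algorithms they accept in the `Accept-Encoding` request header, and the server names the one it used in `Content-Encoding`. The sketch below shows one way a server might choose between them. The `pickEncoding` function is hypothetical, preferring Brotli over GZIP is an assumption, and the sketch ignores the `*` wildcard and the relative ranking of `q` values (it only treats `q=0` as a refusal).

```typescript
type ContentEncoding = "br" | "gzip" | "identity";

// Chooses a response encoding from the request's Accept-Encoding header.
// Assumption: the server prefers Brotli, then GZIP, then no compression.
function pickEncoding(acceptEncoding: string | undefined): ContentEncoding {
  const accepted: string[] = [];
  for (const part of (acceptEncoding || "").split(",")) {
    const pieces = part.trim().toLowerCase().split(";");
    const name = (pieces[0] || "").trim();
    let weight = 1;
    for (const param of pieces.slice(1)) {
      const p = param.trim();
      if (p.indexOf("q=") === 0) weight = Number(p.slice(2));
    }
    // An entry with q=0 means the client refuses that encoding.
    if (name !== "" && weight > 0) accepted.push(name);
  }
  if (accepted.indexOf("br") !== -1) return "br";
  if (accepted.indexOf("gzip") !== -1) return "gzip";
  return "identity";
}
```

In practice, this negotiation is usually handled by the web server or CDN configuration rather than by application code.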

Finally, there is caching. It won’t help the first time a browser renders the page, but it’ll save a lot on subsequent visits. It is crucial to keep two things in mind, though:

- If you use a CDN, make sure caching is supported and properly set there.

- Instead of waiting for a resource’s expiration date to pass, you might want a way to update it earlier from your side. Embed a “fingerprint” of each file’s content into its URL, so that a changed file gets a new URL and the stale copy in the local cache is no longer used.

Of course, caching policies should be defined per resource. Some might rarely change or never change at all. Others are changing faster. Some contain sensitive information, others could be considered public. Use the “private” directive to keep CDNs from caching private data.
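The fingerprinting and per-resource policies described above can be sketched as follows. Everything named here is hypothetical: `fnv1a`, `fingerprintedUrl`, `cacheControl`, and the three resource kinds are illustration only. The FNV-1a hash (applied to UTF-16 code units) is used just to keep the example free of dependencies, whereas build tools normally use a cryptographic content hash. The header values are one reasonable choice, not the only one.

```typescript
// FNV-1a, a simple non-cryptographic hash, as a dependency-free stand-in.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    // Multiply by the FNV prime 16777619 using shifts, keeping 32 bits.
    hash = (hash + (hash << 1) + (hash << 4) + (hash << 7) + (hash << 8) + (hash << 24)) >>> 0;
  }
  return ("0000000" + hash.toString(16)).slice(-8);
}

// Inserts the content hash before the file extension: /js/app.js -> /js/app.<hash>.js
function fingerprintedUrl(path: string, content: string): string {
  const hash = fnv1a(content);
  const dot = path.lastIndexOf(".");
  if (dot <= path.lastIndexOf("/")) return path + "." + hash; // no extension
  return path.slice(0, dot) + "." + hash + path.slice(dot);
}

type ResourceKind = "fingerprinted-asset" | "public-page" | "private-page";

// One possible Cache-Control value per kind of resource.
function cacheControl(kind: ResourceKind): string {
  switch (kind) {
    case "fingerprinted-asset":
      // The URL changes whenever the content does, so cache for a year.
      return "public, max-age=31536000, immutable";
    case "public-page":
      // Shared caches may store it, but must revalidate before reuse.
      return "public, max-age=0, must-revalidate";
    case "private-page":
      // "private" keeps CDNs and other shared caches from storing it.
      return "private, no-cache";
  }
}
```

Because the hash depends only on the content, an unchanged file keeps its URL across deployments and stays cached, while a changed file is fetched under a new URL.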

Optimizing images is also worthwhile, although image requests block neither parsing nor rendering.

2. Reduce the Total Count of Critical Resources

“Critical” refers only to resources needed for the web page to render properly. Therefore, we can skip all the styles that are not involved in the process directly. And all the scripts too.

Stylesheets

To tell the browser that particular CSS files are not required for the initial render, set the media attribute on every link tag that references a stylesheet. With this approach, the browser treats only the stylesheets that match the current media (device type, screen size) as render blocking, while lowering the priority of all the others (they are still downloaded and processed, but not as part of the critical rendering path). For example, if you add the media="print" attribute to the link tag that references the print styles, those styles won’t interfere with your critical rendering path when the media isn’t print (i.e., when displaying the page in a browser).

To further improve the process, you can also make some of the styles inlined. This saves us at least one roundtrip to the server that would have otherwise been required to get the stylesheet.
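Both techniques, media attributes and inlined styles, show up in the markup of the page's head. The sketch below generates that markup from a list of stylesheets. The `renderHead` function, the `StylesheetRef` shape, and the file names are hypothetical, and the function assumes trusted input because it does no HTML escaping.

```typescript
interface StylesheetRef {
  href: string;
  media?: string; // e.g. "print" or "(min-width: 1024px)"; omitted means all media
}

// Builds head markup: critical CSS inlined, everything else linked with its media query.
// Assumption: inputs are trusted, so no escaping is performed.
function renderHead(criticalCss: string, sheets: StylesheetRef[]): string {
  const lines = ["<style>" + criticalCss + "</style>"];
  for (const s of sheets) {
    const media = s.media ? ' media="' + s.media + '"' : "";
    lines.push('<link rel="stylesheet" href="' + s.href + '"' + media + ">");
  }
  return lines.join("\n");
}

const head = renderHead("h1{margin:0}", [
  { href: "/css/main.css" },
  { href: "/css/print.css", media: "print" },
  { href: "/css/wide.css", media: "(min-width: 1024px)" },
]);
```

On a narrow screen, only the inline styles and `/css/main.css` would block rendering here, because the other two stylesheets do not match the current media.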

Scripts

As mentioned above, scripts are parser blocking because they can alter the DOM and CSSOM. Therefore, scripts that do not alter them should not block parsing, which saves us time.

To implement that, mark such script tags with one of two attributes: async or defer.

Scripts marked with async do not block DOM construction while they download, and they do not have to wait for the CSSOM to be built. Each one runs as soon as it arrives, in no guaranteed order. Keep in mind, though, that inline scripts have to wait for any preceding stylesheets to be processed unless you put them above the CSS.

By contrast, scripts marked with defer are downloaded in parallel with parsing but are evaluated, in document order, only once the document has been parsed, just before the DOMContentLoaded event. Therefore, they shouldn’t affect the document (otherwise, it will trigger a re-render).

In other words, with defer, the script isn’t executed until parsing has finished, whereas async lets the script download in the background and run whenever it is ready, even while the document is still being parsed.
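In markup, the difference comes down to a single attribute on the script tag. The sketch below generates the three variants. The `scriptTag` function and the file names are hypothetical, and which scripts suit which mode is an assumption about a typical page.

```typescript
type ScriptLoading = "blocking" | "async" | "defer";

// Builds a script tag with the chosen loading behavior.
// Assumption: src is trusted, so no escaping is performed.
function scriptTag(src: string, loading: ScriptLoading): string {
  const attr = loading === "blocking" ? "" : " " + loading;
  return '<script src="' + src + '"' + attr + "></script>";
}

// async: independent scripts whose execution order does not matter.
const analytics = scriptTag("/js/analytics.js", "async");
// defer: scripts that need the parsed document and must keep their order.
const app = scriptTag("/js/app.js", "defer");
// No attribute: the parser stops until the script is downloaded and run.
const legacy = scriptTag("/js/legacy.js", "blocking");
```

A script without either attribute should be the exception, reserved for code that genuinely has to run before the rest of the page is parsed.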

It’s worth noting that modern transport protocols such as HTTP/2 and HTTP/3 have changed how browsers load resources. In the past, combining files into a single request was important to avoid delays, but newer protocols handle multiple requests more efficiently. As a result, aggressive file concatenation is often less necessary, and keeping files separate can make projects easier to maintain without significantly affecting performance.

3. Shorten the Critical Rendering Path Length

Finally, the CRP length should be shortened to the minimum possible. In part, the approaches described above will do that.

Media queries as attributes on the link tags will reduce the number of stylesheets that block rendering. The script tag attributes defer and async will prevent the corresponding scripts from blocking the parsing.

Minification and compression with GZIP or Brotli will reduce the size of the transferred data (thereby reducing the data transfer time as well).

Inlining some styles and scripts can reduce the number of roundtrips between the browser and the server.

What we haven’t discussed yet is the option to rearrange the code among the files. Current thinking on performance holds that the first thing a website should do is display its above-the-fold (ATF) content as quickly as possible. This is the area that’s visible right away, without scrolling. Therefore, it is best to arrange everything related to rendering it so that the required styles and scripts are loaded first, with everything else blocking neither parsing nor rendering. And always remember to measure before and after you make the change.

Modern Browser Optimization Features

Modern browsers provide additional ways to influence how resources are loaded and rendered.

The fetchpriority attribute allows developers to signal which resources are most important, helping the browser prioritize critical assets such as ATF images. This can improve metrics like Largest Contentful Paint by ensuring key content is loaded earlier.
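As a sketch of how this looks in markup, the hypothetical helper below adds `fetchpriority="high"` only to images that are expected to be above the fold. The `imageTag` function and the file names are assumptions, and deciding which image is the likely Largest Contentful Paint element is left to the caller.

```typescript
// Builds an img tag, raising the fetch priority for above-the-fold images.
// Assumption: src and alt are trusted, so no escaping is performed.
function imageTag(src: string, alt: string, aboveTheFold: boolean): string {
  const priority = aboveTheFold ? ' fetchpriority="high"' : "";
  return '<img src="' + src + '" alt="' + alt + '"' + priority + ">";
}

const hero = imageTag("/img/hero.jpg", "Product photo", true);
const footerLogo = imageTag("/img/logo.png", "Company logo", false);
```

The attribute is a hint rather than a command, so it is best applied to one or two key assets. Marking everything as high priority would give the browser nothing to prioritize.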

The content-visibility CSS property enables the browser to skip rendering work for off-screen content until it becomes visible. This reduces the amount of work required during the initial render and can improve performance on content-heavy pages.
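The property is normally set in a stylesheet, but the same declarations can be applied from a script, which is what the sketch below does. The `deferOffscreenRendering` function and the `Styleable` interface are hypothetical, and the estimated height is a placeholder value that would need tuning for a real page. The companion `contain-intrinsic-size` declaration reserves space for the skipped content so the scrollbar does not jump.

```typescript
// Minimal shape of an element whose inline style can be set.
// In a browser, HTMLElement objects are expected to satisfy it.
interface Styleable {
  style: { setProperty(name: string, value: string): void };
}

// Lets the browser skip rendering work for sections until they approach the viewport.
function deferOffscreenRendering(sections: Styleable[], estimatedHeightPx: number): void {
  for (const section of sections) {
    section.style.setProperty("content-visibility", "auto");
    // Reserve an estimated height so that layout stays stable while content is skipped.
    section.style.setProperty("contain-intrinsic-size", "auto " + estimatedHeightPx + "px");
  }
}

// Possible browser usage (the selector is hypothetical):
// deferOffscreenRendering(Array.from(document.querySelectorAll<HTMLElement>("section.below-fold")), 600);
```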

Conclusion: Optimization Encompasses Your Entire Stack

In summary, website performance optimization touches every aspect of your site’s response, including caching, setting up a CDN, refactoring, and resource optimization. However, all of this can be done gradually. As a web developer, you should use this article as a reference, and always remember to measure performance before and after your experiments.

Browser developers do their best to optimize performance for every page you visit, which is why browsers usually implement a so-called “pre-loader.” This part of the browser scans ahead in the HTML for the resources it references, so that multiple requests can be made at once and run in parallel. This is why it is better to keep stylesheet links close to each other in HTML (line-wise), and likewise script tags.

Moreover, try to batch the updates to HTML in order to avoid multiple layout events, which are triggered not only by a change in DOM or CSSOM but also by a device orientation change and a window resize.
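One common way to batch updates is to build new nodes off-document and attach them in a single operation. The sketch below uses a document fragment for that. The `appendItems` function and the two small interfaces are hypothetical. They exist so the example does not depend on a browser environment, and in a browser the global `document` and a list element are expected to satisfy them.

```typescript
// Minimal shapes of the DOM objects the sketch needs.
interface NodeLike {
  textContent: string | null;
  appendChild(child: NodeLike): unknown;
}
interface DocumentLike {
  createDocumentFragment(): NodeLike;
  createElement(tagName: string): NodeLike;
}

// Adds many list items while touching the live list only once.
function appendItems(doc: DocumentLike, list: NodeLike, labels: string[]): void {
  const fragment = doc.createDocumentFragment();
  for (const label of labels) {
    const item = doc.createElement("li");
    item.textContent = label;
    fragment.appendChild(item); // off-document, so no layout work yet
  }
  list.appendChild(fragment);   // a single insertion into the page
}
```

Appending each item directly to the live list would give the browser many separate changes to process, whereas the fragment groups them into one.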

Useful resources and further reading:

- PageSpeed Insights

- Acing Google’s PageSpeed Insights Assessment

- Caching Checklist

- A way to test if GZIP is enabled for your website

- High Performance Browser Networking: A book by Ilya Grigorik

Understanding the basics

Website optimization is the process of analyzing a website and introducing changes to make it load faster and perform better. Measurements are a crucial part of that process, and without them, there is no way to decide if a particular change made things better or worse.

To test a website’s speed, try using online tools such as Google’s PageSpeed Insights. You could also use your browser in “Private Browsing” mode to load the site without having any of the data cached locally. Some browsers also allow you to use throttling, which helps you simulate a slow connection.

Website speed can be defined as the average time it takes for a website to load. Alternatively, it might mean its frame rate (frames per second), especially during computation-heavy operations.

Preferably, a page should load in under one second. Of course, the time until the first meaningful paint matters more than the total loading time, as long as it keeps the user busy with the content.

In the context of browsers, a rendering engine is a piece of software that’s responsible for drawing data in the browser window. See Critical Rendering Path above to get the details.

Render blocking is what happens while the browser downloads and parses stylesheet files: it cannot render the page until all the referenced stylesheets are parsed.
