Oracle to SQL Server and SQL Server to Oracle Migration Guide - Pt. 3

Migrating in either direction between Microsoft SQL Server and Oracle Database? Code migration tools, public synonyms, and change management are important to keep in mind.

Migrating in either direction between Microsoft SQL Server and Oracle Database? Code migration tools, public synonyms, and change management are important to keep in mind.

Leonid has 20+ years as a multi-platform developer and DBA, with special expertise in Oracle and SQL Server cross-platform migrations.

Expertise

PREVIOUSLY AT

The first and the second parts of this series discussed differences between Oracle Database and Microsoft SQL Server in their implementation of transactions, and the resulting conversion pitfalls, as well as some commonly used syntax elements.

This last installment will cover the notion of Oracle read consistency and how to convert architecture, based on this notion, into a Microsoft SQL Server version. It will also address the use of synonyms (and how NOT to use them) and the role of change-control process in managing your database environment.

Oracle Read Consistency and Its Equivalent in SQL Server

Oracle read consistency is a guarantee that all data returned by a single SQL statement comes from the same singular point in time.

It means that if you issued a SELECT statement at 12:01:02.345 and it ran for 5 minutes before returning result set, all data (and only data) that has been committed in the database as of 12:01:02.345 will make it into your return set. Your return set won’t have any new data added during those 5 minutes that it took the database to process your statement, nor any updates, and no deletes will be visible.

Oracle architecture achieves read consistency by internally time-stamping every change to the data and building a result set from two sources: permanent datafiles and an undo segment (or “rollback segment,” as it was known until version 10g).

In order to support it, the undo information should be preserved. If it’s overwritten, it results in the infamous ORA-01555: snapshot too old error.

Leaving aside undo segment management—and how to navigate the ORA-01555: snapshot too old error—let’s look at the implications of read consistency on any practical implementation in Oracle. Also, how should it be mirrored in SQL Server, which—as is the case with other RDBMS implementations, with the possible and qualified exception of PostgreSQL—does not support it?

The key is that Oracle reads and writes do not block each other. It also means that your long-running query return set may not have the latest data.

Non-blocking reads and writes is an advantage that Oracle has, and it affects transaction scoping.

But read consistency also means that you don’t have the latest state of the data. When in some scenarios it’s perfectly good (like producing a report for a particular time), it could create significant issues in others.

Not having the latest—even “dirty,” or uncommitted—data could be critical: The classic scenario is a hotel room reservation system.

Consider the following use case: You have two customer service agents who are simultaneously accepting room reservation orders. How can you ensure that rooms do not become overbooked?

In SQL Server, you can start an explicit transaction and SELECT a record from the list (which could be a table or a view) of available rooms. As long as this transaction is not closed (either by COMMIT or ROLLBACK), no one can get the same room record that you’ve selected. This prevents double-booking but also makes every other agent wait for each other to complete reservation requests one at a time, sequentially.

In Oracle, you can achieve the same result by issuing a SELECT ... FOR UPDATE statement against records matching your search criteria.

Note: Better solutions exist, like setting a temporary flag marking a room “under consideration” instead of blindly locking access to it. But those are architectural solutions, not language options.

Conclusion: Oracle read consistency is not “all good” or “all bad” but an important property of the platform that needs to be understood well and is critical to cross-platform code migration.

Public (and Private) Synonyms in Oracle and Microsoft SQL Server

“Public synonyms are evil.” It’s not exactly my personal discovery, but I had accepted it as gospel until my day, week, and year were saved by public synonyms.

In many database environments—I would say all Oracle environments that I’ve had a chance to work with, but none that I designed—using CREATE PUBLIC SYNONYM for every object was routine because “we’ve always done it that way.”

In these environments, public synonyms had only one function: to allow reference to an object without specifying its owner. And this is one poorly thought-out reason to make public synonyms.

However, Oracle public synonyms can be extremely useful and give team productivity benefits that significantly outweigh all their drawbacks, if implemented and managed correctly and with a reason. Yes, I said “team productivity.” But how? For this, we need to understand how name resolution works in Oracle.

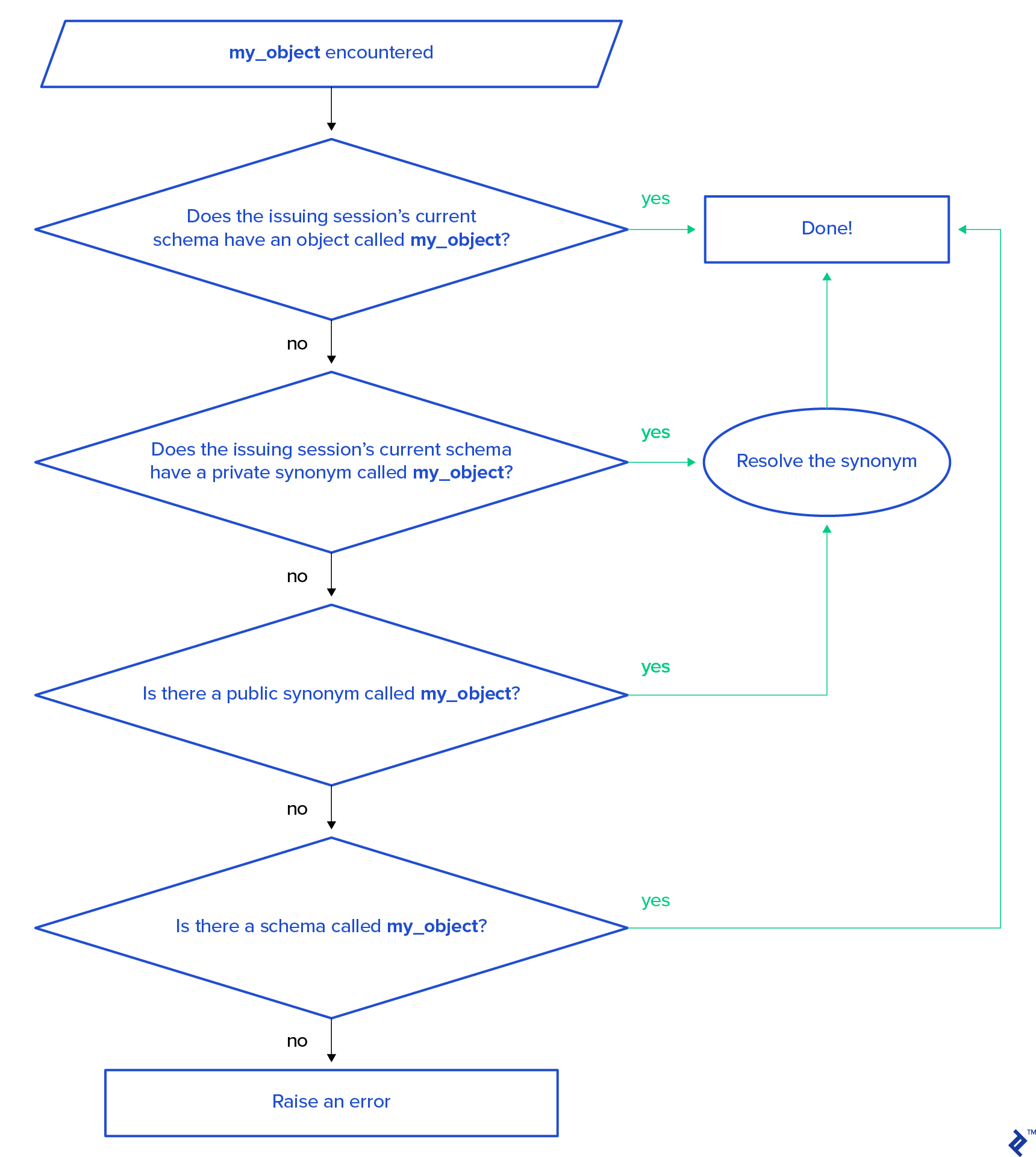

When the Oracle parser is finding a name (a non-reserved keyword), it tries to match it to an existing database object in the following order:

Note: The error raised will be ORA-00942: table or view does not exist for DML statements, or PLS-00201: identifier 'my_object' must be declared for stored procedures or function calls.

In this name resolution order, it is easy to see that when a developer is working in their own schema, any local object with the same name as a public synonym will hide this public synonym. (Note: Oracle 18c implemented the “login-only” schema type, and this discussion does not apply to it.)

Public Synonyms for Scaling Teams: Oracle Change Control

Let’s now look at a hypothetical team of 100 developers working on the same database (which is something I’ve experienced). Further, let’s assume they’re all working locally on their personal workstations and doing non-database builds independently, all linked to the same database development environment. Resolution of code merging in non-database code (be it C#, Java, C++, Python, or anything else) will be done at change-control check-in time and will take effect with the next code build. But database tables, code, and data need to be changed back and forth multiple times during the ongoing development. Each developer does this independently, and it takes effect immediately.

For this, all database objects are created in a common application schema. This is the schema that the application references. Each developer:

- Connects to the database with their personal user account/schema

- Always starts with an empty personal schema

- References the common schema only through name resolution to a public synonym, as described above

When a developer needs to make any changes to the database—create or alter a table, change procedure code, or even modify a set of data to support some test scenario—they create a copy of the object in their personal schema. They do this by getting DDL code using the DESCRIBE command and running it locally.

From this moment, this developer’s code will see the local version of the object and data, which won’t be visible to (nor having an impact on) anyone else. After development is completed, the modified database code is checked into source control, and conflicts are resolved. Then, the final code (and data, if needed) is implemented in the common schema.

After this, the whole development team can see the same database again. The developer who just delivered the code drops all the objects from his/her personal schema and is ready for a new assignment.

This ability to facilitate independent parallel work for multiple developers is the main benefit of public synonyms—an importance that is hard to overstate. However, in practice, I continue to see teams creating public synonyms in Oracle implementations “just because we always do it.” In contrast, in teams using SQL Server, I don’t see the creation of public synonyms established as a common practice. The functionality exists but is not used often.

In SQL Server, the current default schema for a user is defined in the user configuration and can be changed any time if you have “alter user” privileges. The same exact methodology as described above for Oracle can be implemented. However, if this method is not used, public synonyms should not be copied over.

As Microsoft SQL Server doesn’t associate a new user account with its own schema by default (as Oracle does), the association should be part of your standard “create user” script.

Below is an example of a script that creates dedicated user schemas and assigns one to a user.

First, create schemas for new users that need to be onboarded to the database named DevelopmentDatabase (each schema must be created in its own batch):

use DevelopmentDatabase;

GO

CREATE SCHEMA Dev1;

GO

CREATE SCHEMA Dev2;

GO

Second, create the first user with its assigned default schema:

CREATE LOGIN DevLogin123 WITH PASSWORD = 'first_pass123';

CREATE USER Dev1 FOR LOGIN DevLogin123 WITH DEFAULT_SCHEMA = Dev1;

GO

At this point, the default schema for user Dev1 would be Dev1.

Next, create the other user with no default schema:

CREATE LOGIN DevLogin321 WITH PASSWORD = 'second_pass321';

CREATE USER Dev2 FOR LOGIN DevLogin321;

GO

The default schema for user Dev2 is dbo.

Now alter user Dev2 to change its default schema to Dev2:

ALTER USER Dev2 WITH DEFAULT_SCHEMA = Dev2;

GO

Now the default schema for user Dev2 is Dev2.

This script demonstrates two ways to assign and change a default schema for a user in Microsoft SQL Server databases. As SQL Server supports multiple methods of user authentication (the most common is Windows authentication) and user onboarding may be handled by system administrators rather than by DBAs, the ALTER USER method of assigning/changing default schema will be more usable.

Note: I made the name of the schema the same as the name of a user. It doesn’t have to be this way in SQL Server, but it is my preference because (1) it matches how it’s done in Oracle and (2) it simplifies user management (addressing the biggest objection on a DBA’s part to doing it right in the first place)—you know the name of a user, and you automatically know the user’s default schema.

Conclusion: Public synonyms are an important tool for building a stable and well-protected multi-user development environment. Unfortunately, in my observation in the industry, it’s more often used for the wrong reasons—leaving teams suffering the confusion and other downsides of public synonyms without realizing their benefits. Changing this practice to derive real benefits from public synonyms can bring real benefits to a team’s development workflow.

Database Access Management and Change Management Processes

As we just talked about support for parallel development by large teams, it’s worth addressing one separate and oft-misunderstood topic: change-control processes.

Change management often becomes a form of red tape controlled by team leads and DBAs, despised by rebellious developers who want to deliver everything if not “yesterday” then “now.”

As a DBA, I always put protective barriers on the way into "my" database. And I have a very good reason for this: A database is a shared resource.

In a source control context, change management is generally accepted since it allows a team to revert from new-but-broken code to old-but-working code. But in a database context, change management can seem like a set of unreasonable barriers and restrictions placed by DBAs: It’s pure madness that needlessly slows development!

Let’s leave this developer’s rant aside: I’m a DBA and I won’t throw stones at myself! As a DBA, I always put protective barriers on the way into “my” database. And I have a very good reason for this: A database is a shared resource.

Every development team—and each of their developers—has a very specifically defined objective and very specific deliverable. The only objective that is on a DBA’s desk every day is the stability of the database as a shared resource. A DBA has the unique role in an organization to oversee all development efforts across all teams and to control a database that all developers access. It’s the DBA who ensures that all projects and all processes are running without interfering with each other and that each has the resources needed to function.

The problem is when both development and DBA teams sit locked in their respective ivory towers.

Developers don’t know, have no access, and don’t even care what happens on the database as long as it’s running just fine for them. (It’s not their deliverable, nor will it affect their performance evaluation.)

The DBA team keeps the database close to the chest, protecting it from developers who “know nothing” about it, because their team objective is database stability. And the best way to ensure stability is to prevent destructive changes—often resulting in an attitude of protecting the database from any changes as much as possible.

These conflicting attitudes toward a database can, as I’ve seen, lead to animosity between development and DBA teams and result in an unworkable environment. But DBAs and the development team must work together to achieve a common objective: deliver a business solution, which is what brought them together in the first place.

Having been on both sides of the developer-DBA divide, I know that the problem is easy to solve when DBAs better understand the common tasks and objectives of development teams. On their side, developers need to see a database not as an abstract concept but as a shared resource—and there, a DBA should assume the role of an educator.

The most common error that non-developer DBAs make is restricting developer access to the data dictionary and to code optimization tools. Access to Oracle DBA_ catalog views, dynamic V$ views, and SYS tables seems to many DBAs as “DBA privileged” when, in fact, these are critical development tools.

The same holds true for SQL Server, with one complication: Access to some system views cannot be granted directly, yet it’s only part of the SYSADMIN database role, and this role should never be granted outside of the DBA team. This can be solved (and should be solved in the case of a project’s migration from Oracle to SQL Server) by creating views and stored procedures that execute under SYSADMIN privileges but are accessible by non-DBA users. This is development DBA’s job to do as a new SQL Server development environment is configured.

Data protection is one of a DBA’s main responsibilities. Despite this, it’s quite common for development teams to have full access to unfiltered production data to allow for data-related ticket troubleshooting. These are the same developers who have limited access to data structure—structure that has been created by them or for them in the first place.

When proper working relationships between development and DBA teams are established, the creation of a good change-control process becomes intuitive. The specifics and challenge of database-side change management is the rigidity and fluidity of a database at the same time - the structure is rigid, the data is fluid.

It often happens that change management on structure modification—i.e., on data definition language, or DDL—is well-established while data changes have little to none in the way of change management. The justification is simple - data changes all the time.

But if we look at this more closely, we’ll see that in any system, all data falls into one of two categories: application data and user data.

Application data is a data dictionary that defines the behavior of an application and is as critical for its processes as any application code. Changes to this data should be under strict change-control processes, just as with any other application change. In order to create transparency in the change-control process for application data changes, the application data and the user data should be explicitly separated.

In Oracle, it should be done by placing application and user data each in its own schema. In Microsoft SQL Server, it should be done by placing each into a separate schema or – much better – into a separate database. Making these choices should be a part of migration planning: Oracle has two-level name resolution (schema/owner – object name) whereas SQL Server has three-level name resolution (database – schema/owner – object name).

A common source of confusion between Oracle and SQL Server worlds are—perhaps surprisingly—the terms database and server:

| SQL Server Term | Oracle Term | Definition |

|---|---|---|

| server | database (used interchangeably with server in common parlance, unless referring specifically to server hardware, OS, or network elements; there can be one or more databases on a physical/virtual server) | A running instance that can "talk" to other instances through network ports |

| database (part of a server, contains multiple schemas/owners) | schema/owner | The topmost-level grouping |

This terminology mixup should be clearly understood in cross-platform migration projects because term misinterpretation can result in incorrect configuration decisions that are hard to address retroactively.

The correct separation of application and user data allows a DBA team to address its second most important concern: user data security. As user data resides separately, it will be very straightforward to implement a break-glass procedure for user data access on an as-needed basis.

Conclusion: Change-control processes are critical in any project. In software engineering, change management on the database side is often neglected because data is seen to be “too fluid.” But it is exactly because data is “fluid” and “persistent” at the same time that a well-designed change-control process should be the cornerstone of proper database environment architecture.

On the Use of Code Migration Tools

The standard first-party tools, Oracle Migration Workbench and SQL Server Migration Assistant, can be helpful in code migrations. But what needs to be taken into account is the 80/20 rule: When code will be migrated 80% correctly, resolving the remaining 20% will take 80% of your migration effort.

The greatest risk in the use of migration tools is by far the “silver bullet” perception. One may be tempted to think, “It will do the job, and I’ll just need to do a bit of cleanup and tidying.” I observed a project that failed due to such attitude from the conversion team and its technical leadership.

On the other hand, it took me four working days to accomplish the basic conversion of a mid-size Microsoft SQL Server 2008 system (about 200 objects) using Notepad++’s bulk-replace functionality as the main editing tool.

None of the critical migration elements that I’ve addressed so far can be resolved by migration tools.

Sure, do use migration assistance tools, but remember that these provide just editing assistance. The resulting output text needs to have review, modification, and—in some cases—rewriting to become production-worthy code.

The development of artificial intelligence tools may address these migration tool deficiencies in the future, but I would expect that differences between databases will blur out before then and any migration process itself will become unnecessary. So, as long as these types of projects are needed, we’ll need to do it the old way, using old-fashioned human intelligence.

Conclusion: Using migration assistance tools is helpful but it’s not a “silver bullet,” and any conversion project still requires a detailed review of the above points.

Oracle/SQL Server Migrations: Always Take a Closer Look

Oracle and Microsoft SQL Server are the two most proliferated RDBMS platforms in the enterprise environment. Both have basic compliance with the ANSI SQL standard, and small segments of code can be moved across with very little modification, or even as-is.

This similarity creates a deceptive impression that migration across the two platforms is a simple, straightforward task and that the same application can easily be adopted from using one RDBMS back end to another.

In practice, such platform migrations are far from trivial and have to take into account the fine elements of each platform’s inner workings and, above all, the way they implement support for the most critical element of data management: transactions.

While I covered two RDBMS platforms that are at the core of my expertise, the same warning—“looks alike doesn’t mean it works alike”—should be applied to moving code between any other SQL-compliant database management systems. And in all cases, the first point of attention should be on how the implementation of transaction management differs between the source and target platforms.

Further Reading on the Toptal Blog:

Understanding the basics

What is data consistency in Oracle?

In Oracle, data consistency is based on multi-versioning: Any version of data that refers to a single point in time should be in a state such that it has no violations of active database constraints.

What is read consistency in Oracle?

Oracle read consistency is an SQL statement–level guarantee that all data will be returned in a consistent state—as it was at the point in time when the statement was submitted for execution. To support this, Oracle manages multiple versions of data corresponding to multiple points in time.

What is a database consistency check?

A database consistency check is a process to validate that the database is in a consistent state and has no missing or corrupted data blocks. It should be performed on backups and on databases whose functionality is being restored after a hardware failure.

What is data consistency in SQL?

In SQL-compliant databases, data consistency refers to a state of the data where it has no violation of any active database constraints. This is the basic requirement that must be satisfied at the end of any transaction.

What are private synonyms and public synonyms in Oracle?

Oracle private synonyms are created in a specific schema and can be accessed through schema references the same way as any other schema object. On the other hand, public synonyms are created in the PUBLIC group and can be accessed without any schema reference.

How do you change synonyms in Oracle?

In Oracle, to change which object a synonym refers to, the synonym needs to be dropped and recreated.

What is an Oracle instance?

An Oracle instance is the whole collection of background process and allocated memory that constitutes the Oracle database as an application that stores and manipulates data.

Leonid Draginsky

Westerville, OH, United States

Member since June 18, 2020

About the author

Leonid has 20+ years as a multi-platform developer and DBA, with special expertise in Oracle and SQL Server cross-platform migrations.

Expertise

PREVIOUSLY AT