Four Pitfalls of Sentiment Analysis Accuracy

Manually gathering information about user-generated data is time-consuming, to say the least. That’s why more organizations are turning to automatic sentiment analysis methods—but basic models don’t always cut it. In this article, Toptal Freelance Data Scientist Rudolf Eremyan gives an overview of some sentiment analysis gotchas and what can be done to address them.

Rudolf has years of experience in NLP and machine learning. His AI-based tools are used by Georgia’s largest companies, such as TBC Bank.

People are using forums, social networks, blogs, and other platforms to share their opinion, thereby generating a huge amount of data. Meanwhile, users or consumers want to know which product to buy or which movie to watch, so they also read reviews and try to make their decisions accordingly.

Manually gathering information about user-generated data is time-consuming. That’s why more and more companies and organizations are interested in automatic sentiment analysis methods to help them understand it.

What Is Sentiment Analysis?

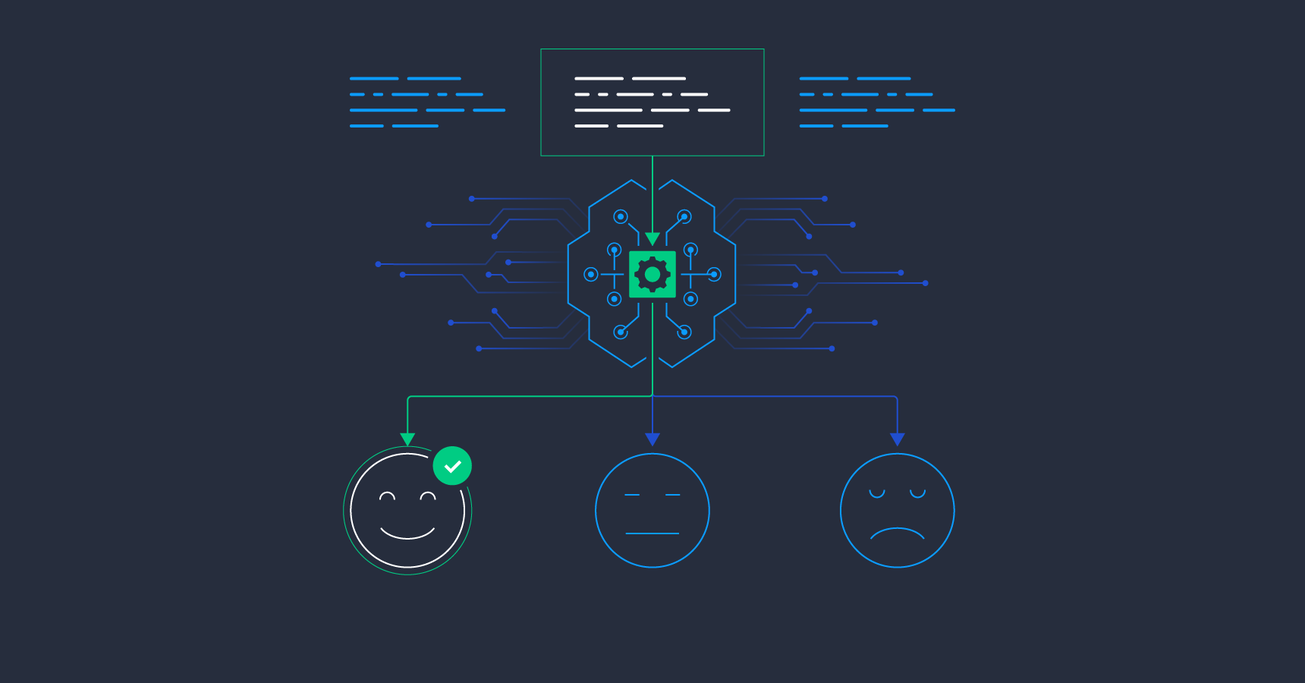

Sentiment analysis is the process of studying people’s opinions and emotions, generally using language clues. At first glance, it’s just a text classification problem, but a closer look reveals many challenging problems that seriously affect sentiment analysis accuracy. Below, I will explore some pitfalls you will face when working on the general sentiment analysis problem:

- Irony and sarcasm

- Types of negations

- Word ambiguity

- Multipolarity

We’ll go through each topic and try to understand how the described problems affect sentiment classifier quality and which technologies can be used to solve them.

Sentiment Analysis Challenge No. 1: Sarcasm Detection

In sarcastic text, people express negative sentiments using positive words. This allows sarcasm to easily fool sentiment analysis models unless they’re specifically designed to take it into account.

Sarcasm occurs most often in user-generated content such as Facebook comments, tweets, etc. Sarcasm detection in sentiment analysis is very difficult to accomplish without having a good understanding of the context of the situation, the specific topic, and the environment.

Sarcasm can be hard to understand not only for a machine but also for a human. The continuous variation in the words used in sarcastic sentences makes it hard to successfully train sentiment analysis models. Two people must share common topics, interests, and historical information for sarcasm to be understood.

First, let’s look at sarcasm from the perspective of linguistics, where sarcasm is widely studied. In one of the most-cited pieces of research in this field, author Elisabeth Camp proposes the following four types of sarcasm:

- Propositional: Sarcasm appears to be a non-sentiment proposition but has an implicit sentiment involved.

- Embedded: Sarcasm has an embedded sentiment incongruity in the form of words and phrases themselves.

- Like-prefixed: A like-phrase provides an implied denial of the argument being made.

- Illocutionary: Non-speech acts (body language, gestures) contributing to the sarcasm.

Camp’s research was published in 2012. In 2017, Kumar, Somani, and Bhattacharyya published “Having 2 hours to write a paper is fun!”: Detecting Sarcasm in Numerical Portions of Text, in which they describe another type of sarcasm called numerical sarcasm. Numerical sarcasm is very frequent on social networks. The idea is that changing a numerical value can flip the polarity of the text. For example:

- "This phone has an awesome battery back-up of 38 hours." (Non-sarcastic)

- "This phone has an awesome battery back-up of 2 hours." (Sarcastic)

- "It's +25 outside and I am so hot." (Non-sarcastic)

- "It's -25 outside and I am so hot." (Sarcastic)

- "We drove so slowly, only 20 km/h." (Non-sarcastic)

- "We drove so slowly, only 160 km/h." (Sarcastic)

As we can see, these sentences differ only in the number used—hence, numerical sarcasm.
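To make the idea concrete, here is a minimal rule-based sketch in Python. It is not the method from the paper: the `EXPECTED` table, its numeric ranges, and the function name `is_numerically_sarcastic` are all assumptions made up for illustration, and the regular expression only covers the "hours" and "km/h" examples.

```python
import re

# Hypothetical table: (cue word, unit) -> range of values for which the
# statement reads as sincere. The ranges are illustrative guesses.
EXPECTED = {
    ("awesome", "hours"): (10, float("inf")),  # an "awesome" battery lasts long
    ("slowly", "km/h"): (0, 40),               # "slowly" implies a low speed
}

# A number followed by one of the units this sketch knows about.
NUMBER_UNIT = re.compile(r"([+-]?\d+(?:\.\d+)?)\s*(hours|km/h)")


def is_numerically_sarcastic(sentence):
    """Flag a sentence whose number falls outside the range its wording implies."""
    text = sentence.lower()
    match = NUMBER_UNIT.search(text)
    if match is None:
        return False
    value, unit = float(match.group(1)), match.group(2)
    for (cue, expected_unit), (low, high) in EXPECTED.items():
        if cue in text and unit == expected_unit:
            return not (low <= value <= high)
    return False


print(is_numerically_sarcastic("This phone has an awesome battery back-up of 38 hours."))
print(is_numerically_sarcastic("This phone has an awesome battery back-up of 2 hours."))
```

A hand-written table like this does not scale beyond a few patterns, which is one reason learned models are attractive for this task.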

There are different approaches for automatic sarcasm detection, including:

- Rule-based

- Statistical

- Machine learning algorithms

- Deep learning

Approaches based on deep learning are gaining in popularity. Kumar, Somani, and Bhattacharyya concluded in 2017 that a particular deep learning model (the CNN-LSTM-FF architecture) outperforms previous approaches, reaching the highest level of accuracy for numerical sarcasm detection.

But deep neural networks (DNNs) were not only the best for numerical sarcasm; they also outperformed other sarcasm detection approaches in general. In their 2016 paper, Ghosh and Veale use a combination of a convolutional neural network, a long short-term memory (LSTM) network, and a DNN. They compare their approach against recursive support vector machines (SVMs) and conclude that their deep learning architecture is an improvement over such approaches.

Sentiment Analysis Challenge No. 2: Negation Detection

In linguistics, negation is a way of reversing the polarity of words, phrases, and even sentences. Researchers use different linguistic rules to identify whether negation is occurring, but it’s also important to determine the range of the words that are affected by negation words.

There is no fixed size for the scope of affected words. For example, in the sentence “The show was not interesting,” the scope is only the word that follows the negation word. But in a sentence like “I do not call this film a comedy movie,” the effect of the negation word “not” extends to the end of the sentence. When a positive or negative word falls inside the scope of negation, its original meaning changes and the opposite polarity is returned.

The simplest approach for dealing with negation in a sentence, which is used in most state-of-the-art sentiment analysis techniques, is to mark as negated all the words from a negation cue to the next punctuation token. How effective this negation model is depends on how language is constructed in a given context.
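The cue-to-punctuation heuristic can be sketched in a few lines of Python. This is a minimal illustration, not a production tokenizer: the cue list, the `_NEG` suffix convention, and the function name `mark_negation` are assumptions chosen for this example.

```python
import re
from typing import List

# Illustrative negation cues; contractions ending in "n't" are handled separately.
NEGATION_CUES = {"not", "no", "never", "cannot"}
PUNCTUATION = {".", ",", ";", ":", "!", "?"}


def mark_negation(sentence: str) -> List[str]:
    """Append _NEG to every word between a negation cue and the next punctuation."""
    # Words (optionally with an apostrophe part, e.g. "don't") or single punctuation marks.
    tokens = re.findall(r"\w+(?:'\w+)?|[.,;:!?]", sentence.lower())
    marked, in_scope = [], False
    for token in tokens:
        if token in PUNCTUATION:
            in_scope = False          # punctuation closes the negation scope
            marked.append(token)
        elif token in NEGATION_CUES or token.endswith("n't"):
            in_scope = True           # the cue itself is not marked
            marked.append(token)
        else:
            marked.append(token + "_NEG" if in_scope else token)
    return marked


print(mark_negation("The show was not interesting, but the cast was great."))
```

A downstream classifier can then treat `interesting_NEG` as a different feature from `interesting`. Note that this heuristic marks everything up to the punctuation, so it will overshoot in sentences where the true scope is only one word.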

There are several ways to express a negative opinion in a sentence:

- Negation can be morphological where it is either denoted by a prefix (“dis-”, “non-”) or a suffix (“-less”).

- Negation can be implicit, as in “with this act, it will be his first and last movie”—it carries a negative sentiment, but no negative words are used.

- Negation can be explicit, as in “this is not good.”
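Of these three forms, morphological negation is the easiest to illustrate in code. The sketch below strips the affixes listed above and checks whether what remains is a known word, which avoids false positives such as “discuss.” The `BASE_WORDS` vocabulary and the function name are hypothetical; a real system would rely on a full dictionary or a morphological analyzer.

```python
NEGATIVE_PREFIXES = ("dis", "non")
NEGATIVE_SUFFIXES = ("less",)

# Hypothetical mini-vocabulary of base words, for illustration only.
BASE_WORDS = {"like", "honest", "sense", "flaw", "use"}


def morphological_negation(word):
    """Return the base word if `word` is a negated form of a known base word, else None."""
    word = word.lower()
    for prefix in NEGATIVE_PREFIXES:
        stem = word[len(prefix):]
        if word.startswith(prefix) and stem in BASE_WORDS:
            return stem
    for suffix in NEGATIVE_SUFFIXES:
        stem = word[:-len(suffix)]
        if word.endswith(suffix) and stem in BASE_WORDS:
            return stem
    return None


for candidate in ("dislike", "flawless", "discuss"):
    print(candidate, "->", morphological_negation(candidate))
```

Once the base word is recovered, its lexicon polarity can be reversed: “flaw” is negative, so “flawless” is positive.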

Including samples of each type of negation described above will improve the quality of a dataset used for training and testing sentiment classification models that must handle negation. Recent research on recurrent neural networks (RNNs) reports that various LSTM architectures outperform other approaches in detecting types of negation in sentences.

In the paper Effect of Negation in Sentiment Analysis, a sentiment analysis model evaluated 500 reviews that were collected from Amazon and Trustedreviews.com. The authors show a comparison of the models with and without negation detection. Their evaluation demonstrates how considering negation can significantly increase the accuracy of a model.

Sentiment Analysis Challenge No. 3: Word Ambiguity

Word ambiguity is another pitfall you’ll face when working on a sentiment analysis problem. The problem is that polarity cannot be defined in advance for some words, because it depends strongly on the sentence context.

Lexicon-based sentiment analysis approaches are popular among existing methods. An opinion lexicon contains opinion words with their polarity value. There are some public opinion lexicons available on the internet: SentiWordNet, General Inquirer, and SenticNet, among others. Because word polarity varies in different domains, it is impossible to develop a universal opinion lexicon that has a polarity for every word. For example:

- “The story is unpredictable.”

- “The steering wheel is unpredictable.”

These two examples show how context affects the sentiment of an opinion word. In the first example, the polarity of “unpredictable” is positive. In the second, the same word’s polarity is negative.
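One common workaround is to layer a domain-specific lexicon on top of a general one. The following Python sketch shows the idea with tiny hand-made lexicons; the word lists, the domain names, and the `score` function are assumptions for illustration and are not taken from SentiWordNet or any other published lexicon.

```python
import re

# Hypothetical general-purpose lexicon: word -> polarity value.
GENERAL_LEXICON = {"good": 1, "great": 1, "bad": -1, "boring": -1}

# Hypothetical domain overrides for words whose polarity depends on the domain.
DOMAIN_LEXICONS = {
    "movies": {"unpredictable": 1},
    "cars": {"unpredictable": -1},
}


def score(sentence, domain):
    """Sum the polarity of each word, letting domain entries override general ones."""
    lexicon = {**GENERAL_LEXICON, **DOMAIN_LEXICONS.get(domain, {})}
    words = re.findall(r"[a-z']+", sentence.lower())
    return sum(lexicon.get(word, 0) for word in words)


print(score("The story is unpredictable.", "movies"))          # positive score
print(score("The steering wheel is unpredictable.", "cars"))   # negative score
```

With an unknown domain, “unpredictable” is simply absent from the lexicon and contributes nothing, which is the safe default when its polarity cannot be decided in advance.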

Sentiment Analysis Challenge No. 4: Multipolarity

Sometimes, a given sentence or document—or whatever unit of text we would like to analyze—will exhibit multipolarity. In these cases, having only the total result of the analysis can be misleading, very much like how an average can sometimes hide valuable information about all the numbers that went into it.

Picture when authors talk about different people, products, or companies (or aspects of them) in an article or review. It’s common that within a piece of text, some subjects will be criticized and some praised.

Here, the total sentiment polarity will be missing key information. This is why it’s necessary to extract all the entities or aspects in the sentence with assigned sentiment labels and only calculate the total polarity if needed.

Let’s consider an example which consists of multiple polarities: “The audio quality of my new laptop is so cool but the display colors are not too good.”

Some sentiment analysis models will assign a negative or a neutral polarity to this sentence. To deal with such situations, a sentiment analysis model must assign a polarity to each aspect in the sentence; here, “audio” is an aspect assigned a positive polarity and “display” is a separate aspect with a negative polarity.
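As a rough illustration of per-aspect polarity, the Python sketch below splits a sentence into clauses at “but” and at punctuation, scores each clause with a tiny lexicon, and assigns the clause label to any aspect term found in it. This is a toy heuristic, not the deep learning approach discussed in the literature, and the aspect list, word lists, and function name are all hypothetical.

```python
import re

# Hypothetical aspect terms and opinion words, for illustration only.
ASPECTS = {"audio", "display", "battery"}
POSITIVE = {"cool", "good", "great"}
NEGATIVE = {"bad", "poor", "dull"}
NEGATORS = {"not", "never", "no"}


def aspect_polarities(sentence):
    """Map each aspect term to the polarity of the clause it appears in."""
    result = {}
    # Split at the contrastive conjunction "but" and at clause punctuation.
    for clause in re.split(r"\bbut\b|[;,.]", sentence.lower()):
        tokens = re.findall(r"[a-z']+", clause)
        score, negated = 0, False
        for token in tokens:
            if token in NEGATORS:
                negated = True        # negation lasts until the end of the clause
            elif token in POSITIVE:
                score += -1 if negated else 1
            elif token in NEGATIVE:
                score += 1 if negated else -1
        label = "positive" if score > 0 else "negative" if score < 0 else "neutral"
        for token in tokens:
            if token in ASPECTS:
                result[token] = label
    return result


print(aspect_polarities(
    "The audio quality of my new laptop is so cool but the display colors are not too good."
))
```

For the example sentence, this returns a positive label for “audio” and a negative label for “display” instead of a single averaged score.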

For a more in-depth description of this approach, I recommend the interesting and useful paper Deep Learning for Aspect-based Sentiment Analysis by Bo Wang and Min Liu from Stanford University.

Improving Sentiment Analysis Accuracy: These Aren’t Edge Cases

In this article, we talked about common problems in sentiment classification: sarcasm, negation, word ambiguity, and multipolarity. Taking the situations we’ve discussed into account can significantly increase the accuracy of a sentiment classification model. I hope you’ve found this article a useful introduction to the topic.

Understanding the basics

What is sentiment analysis?

Sentiment analysis is the process of studying people’s opinions and emotions.

What is the use of sentiment analysis?

People are using forums, social networks, blogs, and other platforms to share their opinion, thereby generating a huge amount of data. Companies and organizations are interested in automatically analyzing this user-generated data in order to efficiently learn about it at scale.

What is subjectivity in sentiment analysis?

A subjective sentence expresses personal feelings, views, or beliefs.

What is a lexicon used for?

A lexicon contains opinion words with their polarity value. Lexicon-based sentiment analysis models will sum up polarity values for lexicon words that appear in a sentence and define sentiment according to the total polarity score.

What is sentiment classification?

Sentiment classification is a process of automatically detecting the polarity of a sentence. Most of the time, there are three possible outputs used in sentiment classification: positive, neutral, or negative.

Rudolf Eremyan

Tbilisi, Georgia

Member since August 2, 2018
