A Road to Better Agile Testing

Vytas Butkus

Vytas is a professional project and product manager leading products and projects in education, 3D graphics, eCommerce, and adtech.

The yearly World Quality Report created by Capgemini shows that 42% of survey respondents list a “lack of professional test expertise” as a challenge in applying testing to Agile development. While the advent of Agile has brought the increased speed of iterations for software development, in some cases, this has come at the cost of quality.

Fierce competition is pressuring teams to constantly deliver new product updates, but this sometimes comes with its own cost, including decreased attention to testing. Some, like Rob Mason, go even further and argue that Agile is killing software testing. Recently, Facebook has changed its motto from “move fast and break things” to “move fast with stable infrastructure” in an attempt to resist the temptation to sacrifice quality.

So, how can testing be better integrated into the new world of Agile software development? An Agile testing process.

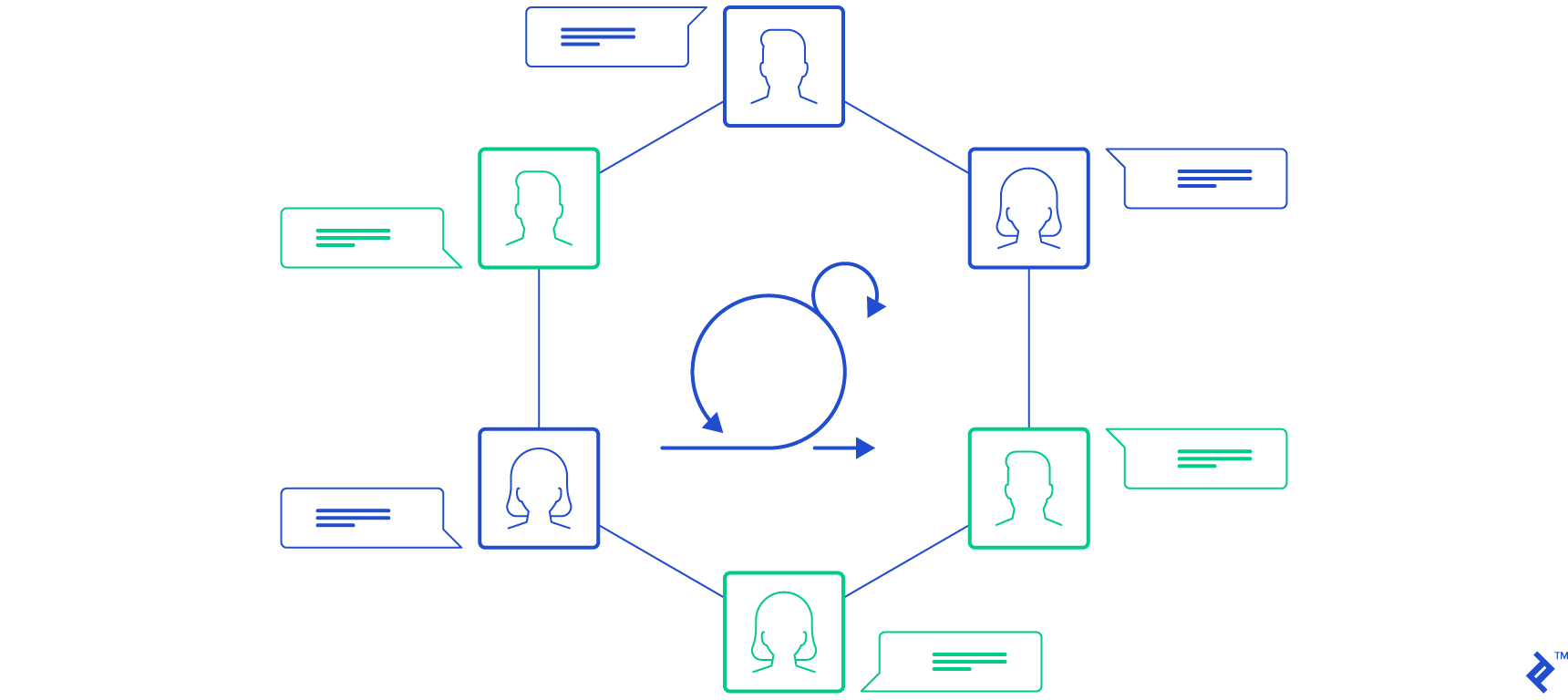

Traditional testing is quite cumbersome and depends on a lot of documentation. Testing in Agile methodology is an approach to the testing process that mimics the principles of Agile software development whereby:

- Testing is done much more often,

- Testing relies less on documentation and more on team member collaboration, and

- Some testing activities are undertaken not only by testers but also by developers.

Over the past seven years, I’ve transitioned many teams to Agile testing methods and worked side-by-side with testers to help their processes fit the new methodology. In this article, I’ll share some of the most impactful tips I’ve learned on how to improve the testing process in Agile. While it is natural to have friction between speed and quality within Agile practices, this article will cover a few techniques that can be used to increase testing quality without compromising agility. Most of the suggestions outlined here will require involvement from the team, so it will be beneficial to have both developers and testers participate in planning.

Formalize a Release Test Cycle Process

A common issue with testing is the absence of a release test cycle: there is no release schedule, and testing requests arrive irregularly. These on-demand test requests make the QA process difficult, especially if testers are handling multiple projects.

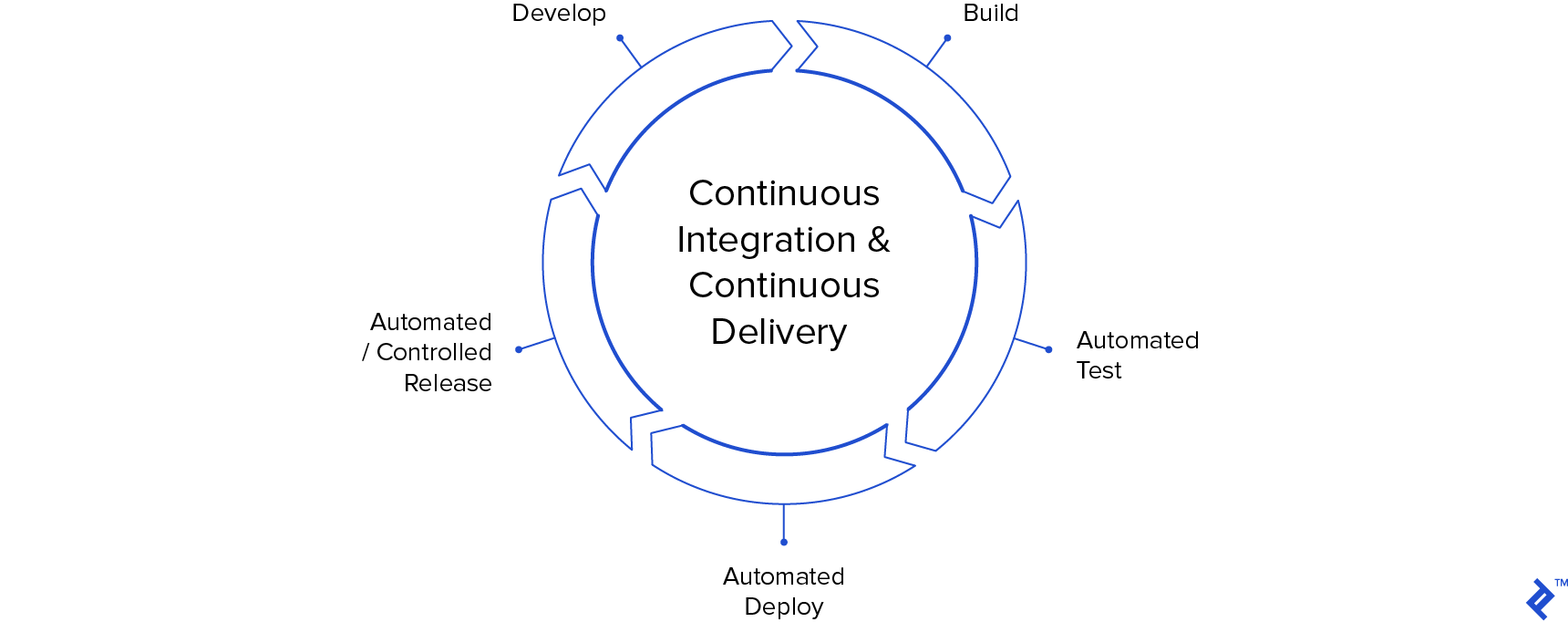

Many teams only do a single build after each sprint, which is not ideal for Agile projects. Moving to once-a-week releases could be beneficial, gradually transitioning to multiple builds per week. Ideally, development builds and testing should happen daily, meaning that developers push code to the repository every day and builds are scheduled to run at a specific time. To take this one step further, developers would be able to deploy new code on demand. To implement this, teams can employ a continuous integration and continuous deployment (CI/CD) process. CI/CD limits the possibility of a failed build on the day of a major release.

When CI/CD and test automation are combined, critical bugs can be detected early, giving developers enough time to fix them before the scheduled client release. One of the principles of Agile states that working software is the primary measure of progress. In this context, a formalized release cycle makes the testing process more agile.

Empower Testers with Deployment Tools

One of the common friction points for testing is getting the code deployed to a staging environment. This process depends on technical infrastructure that your team might not be able to change. However, if there is some flexibility, tools can be created that allow non-technical people like testers or project managers to deploy the updated codebase for testing themselves.

For example, on one of my teams, we used Git for version control and Slack for communication. The developers created a Slackbot that had access to Git, deployment scripts, and one virtual machine. Testers were able to ping the bot with a branch name acquired from GitHub or Jira and have it deployed in a staging environment.

This setup freed up a lot of time for the developers while reducing the communication waste and constant interruptions when testers had to ask developers to deploy a branch for testing.
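The article does not describe how the bot was built, so the following is only a minimal sketch of the message-handling core such a bot might have. The command format (`deploy <branch>`), the `deploy.sh` script, its flags, and both function names are hypothetical, and the Slack wiring itself is left out.

```python
import re
from typing import List, Optional

# Hypothetical command format: "deploy <branch-name>".
DEPLOY_PATTERN = re.compile(r"^deploy\s+(?P<branch>[A-Za-z0-9._/-]+)$")


def parse_deploy_request(message: str) -> Optional[str]:
    """Return the requested branch name, or None if this is not a valid deploy command."""
    match = DEPLOY_PATTERN.match(message.strip())
    if match is None:
        return None
    branch = match.group("branch")
    # Reject names that could be read as command-line options or path tricks.
    if branch.startswith("-") or ".." in branch:
        return None
    return branch


def build_deploy_command(branch: str) -> List[str]:
    """Build the argument list for a hypothetical staging deployment script."""
    # An argument list (rather than a shell string) avoids shell injection
    # when it is later passed to subprocess.run.
    return ["./deploy.sh", "--branch", branch, "--target", "staging"]
```

Validating the branch name before it reaches any script matters here because the bot accepts free text from a chat channel; a tester typing `deploy feature/PROJ-123-login` would get that branch deployed, while anything that does not look like a branch name is ignored.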

Experiment with TDD and ATDD

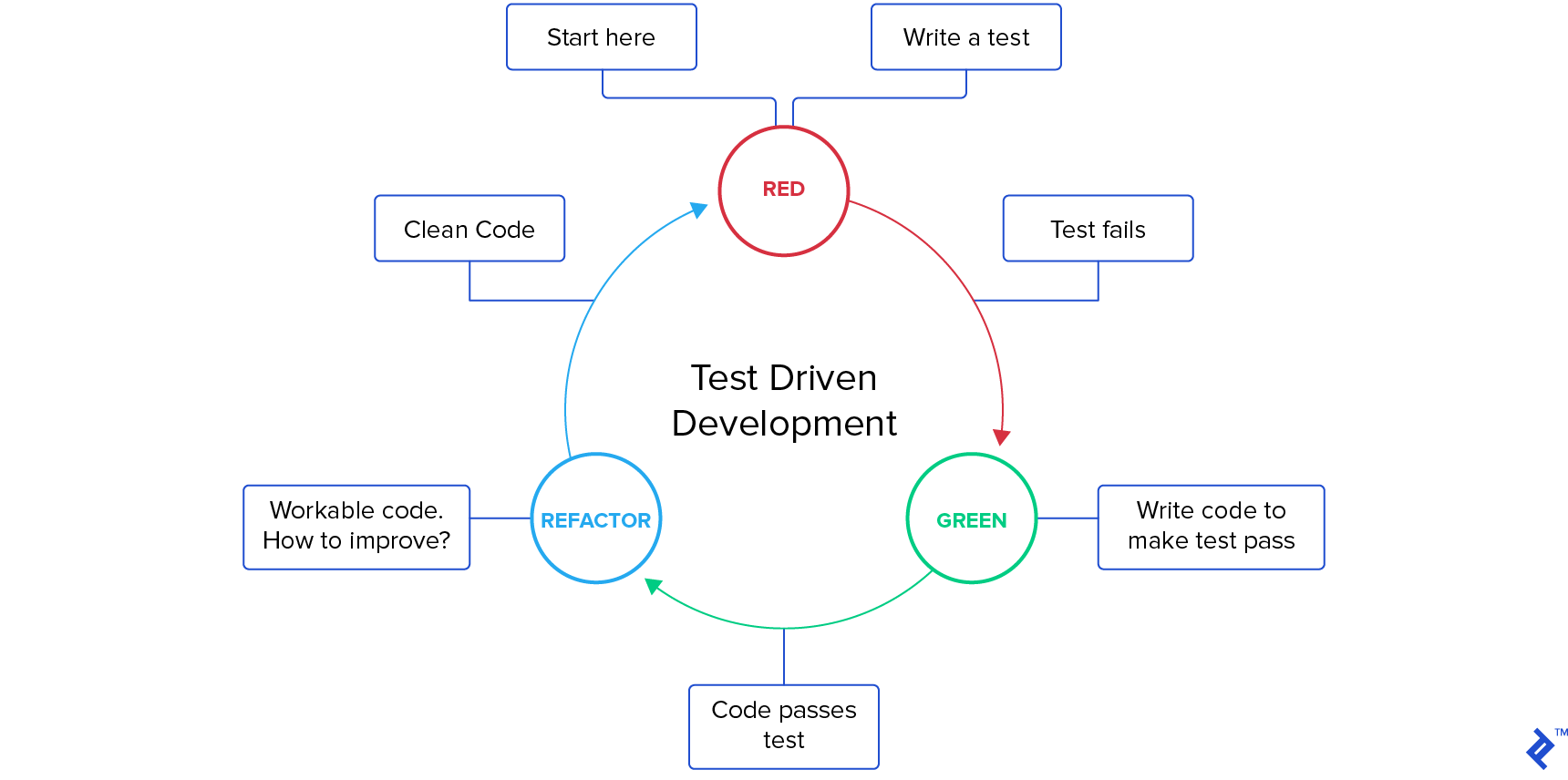

Test-driven development (TDD) is a type of software development process that puts a lot of emphasis on quality. Traditionally, a developer writes code and then someone tests it and reports if any bugs were found. In TDD, developers write unit tests first before even writing any code that would complete a user story. The tests initially fail until the developer writes the minimal amount of code to pass the tests. After that, the code is refactored to meet the quality requirements of the team.
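As a small illustration of that cycle, here is a sketch in Python using the standard `unittest` module. The user story (a 10% discount on orders of 100 or more) and the `apply_discount` function are made up for this example and are not taken from any project mentioned in the article.

```python
import unittest


# Step 2 (green): the minimal implementation, written only after the tests
# below existed and had been seen to fail.
def apply_discount(total: float) -> float:
    """Apply a 10% discount to orders of 100 or more (a made-up business rule)."""
    if total >= 100:
        return round(total * 0.9, 2)
    return total


# Step 1 (red): these tests are written first and fail until
# apply_discount is implemented.
class ApplyDiscountTest(unittest.TestCase):
    def test_small_order_is_not_discounted(self):
        self.assertEqual(apply_discount(50.0), 50.0)

    def test_large_order_gets_ten_percent_off(self):
        self.assertEqual(apply_discount(200.0), 180.0)

    def test_threshold_is_inclusive(self):
        self.assertEqual(apply_discount(100.0), 90.0)
```

Saved in a test file, this could be run with `python -m unittest`. Once the tests pass, the implementation can be refactored freely, with the tests guarding against regressions.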

Acceptance test-driven development (ATDD) follows similar logic to TDD but, as the name implies, focuses on acceptance tests. In this case, acceptance tests are created prior to development in collaboration between developers, testers, and the requester (client, product owner, business analyst, etc.). These tests help everyone on the team understand the client’s requirements before any code is written.
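One common way to phrase such a test is the Given/When/Then pattern. The sketch below assumes a hypothetical shopping cart requirement ("adding the same product twice increases its quantity"); the `Cart` class and the test name are illustrative only, and in a real ATDD flow the class would be written after the team agreed on the test.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Cart:
    """Hypothetical system under test, implemented after the acceptance test was agreed."""
    items: Dict[str, int] = field(default_factory=dict)

    def add(self, sku: str, quantity: int = 1) -> None:
        # Adding an existing product increases its quantity instead of duplicating it.
        self.items[sku] = self.items.get(sku, 0) + quantity

    def total_items(self) -> int:
        return sum(self.items.values())


def test_adding_same_product_twice_increases_quantity():
    # Given an empty cart
    cart = Cart()
    # When the customer adds the same product twice
    cart.add("SKU-1")
    cart.add("SKU-1")
    # Then the cart holds a single entry with a quantity of two
    assert cart.items == {"SKU-1": 2}
    assert cart.total_items() == 2
```

The comments mirror the wording the requester would recognize, which is what lets a non-developer review the test before development starts.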

Techniques like TDD and ATDD make testing more agile by moving testing procedures to the early stages of the development lifecycle. When writing test scenarios early on, developers need to understand the requirements really well. This minimizes unnecessary code creation and also resolves any product uncertainties at the beginning of the development cycle. When product questions or conflicts are surfaced only in the later stages, the development time and costs increase.

Discover Inefficiencies by Tracking Task Card Movement

On one of my teams, we had a developer who was extremely fast, especially with small features. He would get a lot of comments during code review, but our Scrum master and I wrote that off as lack of experience. However, as he started coding more complex features, the problems became more apparent. He had developed a pattern of passing code to testing before it was fully ready. This pattern typically develops when there is a lack of transparency in the development process—e.g., it is not clear how much time different people spend on a given task.

Sometimes, developers rush their work in an attempt to get features out as soon as possible and “outsource” quality to the testers. Such a setup only moves the bottleneck further down the sprint. Quality assurance (QA) is the most important safety net that the team has, but its very existence can tempt developers to forgo quality considerations.

Many modern project management tools have the capabilities to track the movement of task cards on a Scrum or Kanban board. In our case, we used Jira to analyze what happened with the tasks of the developer in question and made comparisons with other developers on the team. We found out that:

- His tasks spent almost twice the amount of time in the testing column of our board;

- His tasks would much more often be returned from QA for a second or third round of fixing.

So apart from testers having to spend more time on his tasks, they also had to do it multiple times. Our opaque process made it seem like the developer was really fast; however, that proved to be false when we took into account the testing time. Moving user stories back and forth is obviously not a lean approach.
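The two measurements above can be computed from a board's status-change history. The following is a rough sketch, assuming the history has already been exported as simple tuples; the tuple layout, the status names ("In Progress", "Testing", "Done"), and the `summarize_testing` function are assumptions for illustration and do not reflect Jira's actual export format or API.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, List, Tuple

# Assumed record layout: (task id, developer, new status, time of the move).
Transition = Tuple[str, str, str, datetime]


def summarize_testing(transitions: List[Transition]) -> Dict[str, Dict[str, float]]:
    """Per developer: hours their tasks spent in 'Testing' and how often QA sent them back."""
    by_task: Dict[str, List[Transition]] = defaultdict(list)
    for transition in transitions:
        by_task[transition[0]].append(transition)

    stats: Dict[str, Dict[str, float]] = defaultdict(
        lambda: {"testing_hours": 0.0, "returns": 0}
    )
    for moves in by_task.values():
        moves.sort(key=lambda move: move[3])  # chronological order per task
        # Pair each move with the next one to know how long a status lasted.
        for current, following in zip(moves, moves[1:]):
            _, developer, status, entered = current
            _, _, next_status, left = following
            if status == "Testing":
                hours = (left - entered).total_seconds() / 3600
                stats[developer]["testing_hours"] += hours
                if next_status == "In Progress":
                    # The task went from testing back to development.
                    stats[developer]["returns"] += 1
    return dict(stats)
```

Comparing these per-developer numbers side by side is what made the pattern visible in our case; the absolute values matter less than the differences within the same team.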

To resolve this, we started by having an honest discussion with this developer. In our case, he was simply not aware of how detrimental his working pattern was. It was just the way he got used to working in his previous company, which had lower quality requirements and a bigger tester pool. After our conversation and with the help of a few pair programming sessions with our Scrum master, he gradually transitioned to a higher quality approach to development. Due to his fast coding abilities, he was still a high performer, but the removed “waste” of the QA process made the whole testing process much more agile.

Add Test Automation to the QA Team Skillset

Testing in non-Agile projects involves activities like test analysis, test design, and test execution. These activities are sequential and require extensive documentation. When a company transitions to Agile, more often than not, the transition focuses mostly on the developers and not as much on the testers. They stop creating extensive documentation (a pillar of traditional testing) but continue to perform manual testing. However, manual testing is slow and typically cannot cope with the fast feedback loops of Agile.

Test automation is a popular solution to this problem. Automated tests make it much easier to test new and small features, as the testing code can run in the background while developers and testers focus on other tasks. Moreover, as the tests are run automatically, the testing coverage can be much larger compared to manual testing efforts.

Automated tests are pieces of software code similar to the codebase that is being tested. This means that people writing automated tests will need technical skills to be successful. There are many different variations of how automated testing is implemented across different teams. Sometimes developers themselves take on the role of testers and increase the testing codebase with every new feature. In other teams, manual testers learn to use test automation tools or an experienced technical tester is hired to automate the testing process. Whichever path the team takes, automation leads to much more agile testing.

Manage Testing Priorities

With non-Agile software development, testers are usually allocated on a per-project basis. However, with the advent of Agile and Scrum, it has become common for the same QA professionals to operate across multiple projects. This overlapping responsibility can create scheduling conflicts and lead to testers missing critical ceremonies, for example, when a tester prioritizes one team’s release testing over another’s sprint planning session.

The reason why testers sometimes work on multiple projects is obvious—there is rarely a constant flow of tasks for testing to fill a full-time role. Therefore, it might be hard to convince stakeholders to have a dedicated testing resource allocated to a team. However, there are some reasonable tasks that a tester can do to fill their downtime when not engaging in testing activities.

Client Support

One possible setup is to have the tester spend their sprint downtime helping the client support team. By constantly facing the problems that clients have, the tester has a better understanding of the user experience and how to improve it. They are able to contribute to the discussions during planning sessions. Moreover, they become more attentive during their testing activities as they are better familiarized with how the clients actually use their software.

Product Management

Another technique for managing tester priorities is to essentially make them junior product managers that carry out manual testing. This is also a viable solution to filling a tester’s off-duty time because junior product managers spend a lot of time creating requirements for the user stories and therefore have intimate knowledge of most tasks.

Test Automation

As we have previously discussed, manual testing is often inferior to automation. In this context, the push for automation can be coupled with having a tester dedicate their full attention to the team and utilize their spare time learning to work with test automation tools like Selenium.

Summary: Quality Agile Testing

Making testing more agile is an inevitability that a lot of software development teams are now facing. However, quality should not be compromised by adopting a “test as fast as you can” mindset. It is imperative that an Agile transition includes a shift to Agile testing, and there are a few ways to achieve this:

- Formalize a release test cycle process.

- Empower testers with deployment tools.

- Experiment with test-driven development and acceptance test-driven development.

- Discover inefficiencies by tracking task card movement.

- Add test automation to the QA team skillset.

- Manage tester priorities.

Every year, software is getting better and user expectations are rising. Moreover, as clients get used to high-quality products of top software brands like Google, Apple, and Facebook, these expectations transfer into other software products as well. Thus, the emphasis on quality is likely to be even more important in the coming years. These testing and overall development process improvements can make testing more agile and ensure a high level of product quality.

Understanding the basics

What are the agile testing levels?

There are a few testing levels that can be used in Agile: unit, integration, system, and acceptance.

What is agile testing and why is it important?

Agile testing is a transition from highly documented waterfall testing procedures to more flexible and responsive testing. Agile testing wraps itself around Agile software development to support the Agile team in delivering small product increments in shorter timeframes.

Do we have test plans in Agile?

A test plan can be used in Agile, but it should be a general guide and not a rigid, unchanging document that takes a lot of time to create. Agile promotes working software over comprehensive documentation.

What is an agile test strategy?

An agile testing strategy should state how software is tested in the agile team.