AI Investment Primer: Laying the Groundwork (Part I)

Over the last few years, the world has witnessed an explosion of interest surrounding Artificial Intelligence. Nevertheless, there still exists a significant gap in understanding and knowledge about AI applications, particularly amongst the investor community. This article, the first of a two-part series on the topic, is a collection of thoughts and advice by Toptal Finance Expert Carolyn Deng, based on her experience of having founded an AI startup and having worked as a VC investor.

A Wharton MBA and CFA, Carolyn has executed 20+ VC/PE deals, managed a $700M portfolio and is a fundraising, growth and M&A specialist.

Executive Summary

What is AI?

- Artificial Intelligence (AI) can be simply explained as intelligence demonstrated by machines, in contrast to the natural intelligence displayed by humans and other animals.

- Machine learning is a subset of techniques used in AI, and deep learning is a subset of techniques used in machine learning.

- There have been three significant waves of AI development: the first in the 50s and 60s, the second in the 80s and 90s, and the third, which started about a decade ago and has gained prominence since 2016 (AlphaGo).

What is special about this wave of AI?

- This wave of AI is driven by the growth and popularity of deep learning.

- While deep learning techniques have been around since the 60s, the required computing power and data were not advanced enough to support mass commercial application until the last few years.

- Deep learning is so exciting because, simply put, it allows for much more powerful performance than other learning algorithms.

Key components of successful AI applications.

- AI applications need to solve a well-defined (specific) and desirable (targeting urgent and clear customer pain points) problem. Facial recognition, machine translation, driverless cars, and search engine optimization are all well-defined, desirable problems. However, the lack of a well-defined, desirable problem is why it is difficult to produce, for instance, a general house-cleaning robot.

- Machine learning algorithms require access to clean and well-labeled data. This data collection exercise can be difficult or easy, depending on which commercial application you are developing.

- An AI business needs to develop robust and scalable algorithms. To achieve this, there are three must-haves: a large amount of well-labeled data, the right talent, and the confidence that deep learning is the correct technology to solve the problem.

- Successful AI applications require a lot of computing power. The more advanced the artificial intelligence algorithm (e.g. deep learning neural networks), the more computational power is required and the more costly the operation.

Over the last few years, the world has witnessed an explosion of interest surrounding Artificial Intelligence (AI). A concept once confined primarily to the Sci-Fi genre, AI has become part of our everyday lives. We read about it all the time in the news, see videos of scary-looking robots dancing to the tune of Uptown Funk, and hear about how AI applications are creeping into even the most unexpected spheres of our daily lives. But is it all hype?

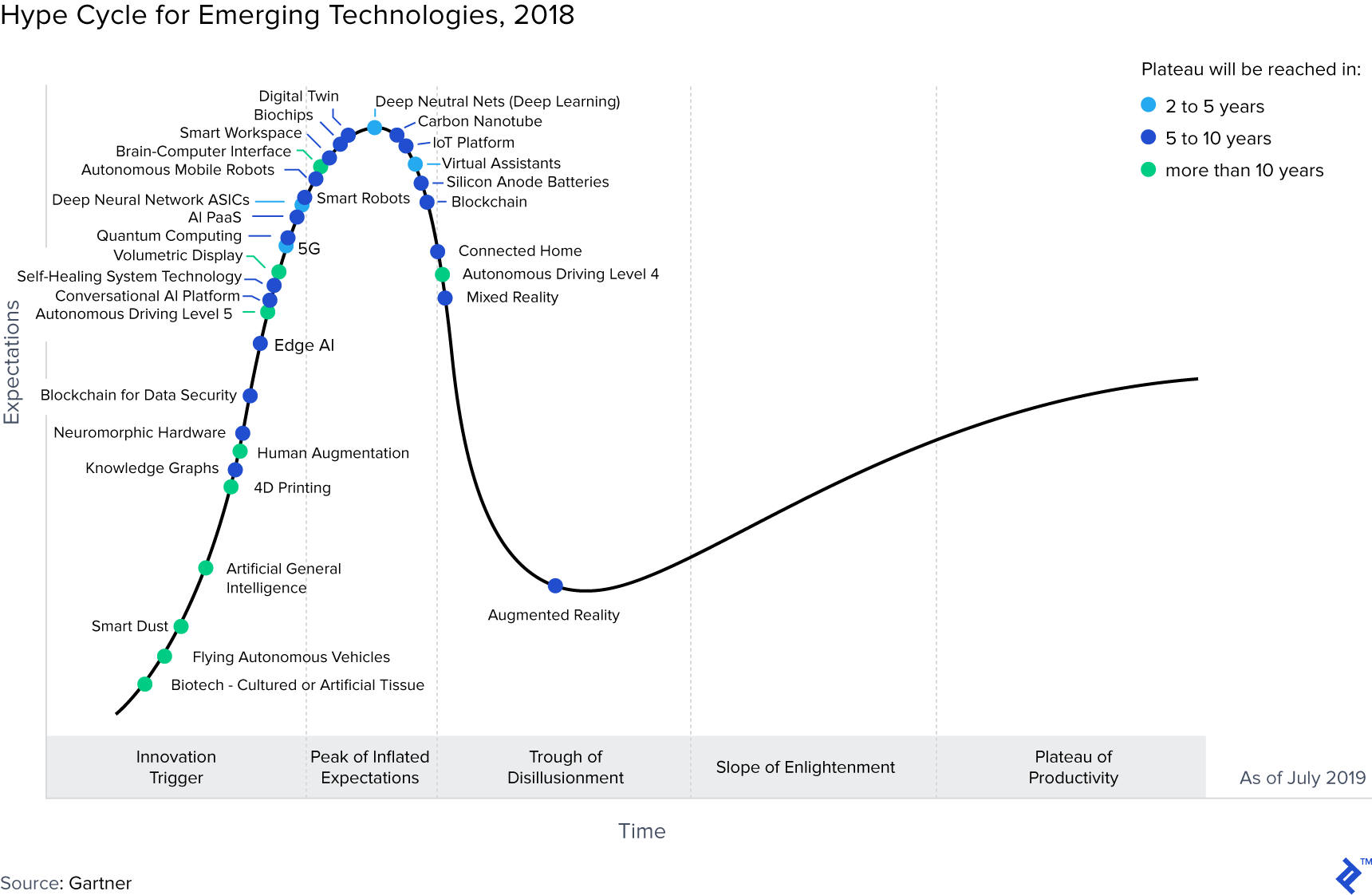

It could be. According to Gartner’s Hype Cycle for Emerging Technologies, democratized AI trends including AI PaaS (platform as a service), Artificial General Intelligence, Autonomous Driving, and Deep Learning are all at various points on the curve, with Deep Neural Nets at the peak of inflated expectations. However, we are also already benefiting from AI every day. From Siri to Cortana to Alexa, we can now converse with smart assistants. From Google’s AI-powered search engine to Instagram filters, we now enjoy the convenience of quicker, more relevant responses to our needs. In China, where AI innovation is thriving, companies such as Face++ are using facial recognition technology to power instant ID authentication for banks, whilst apps such as TikTok push short videos to millions of teenagers (courting considerable controversy in so doing).

I personally believe that although there are certainly some over-hyped expectations and businesses, AI is the future. I founded my own early stage AI startup to capture this once-in-a-lifetime opportunity to participate in the technology revolution. As an ex-VC investor, I am also constantly looking out for investment opportunities in AI. I, therefore, believe that, despite the undeniable noise surrounding the space, the huge surge in AI investment is also warranted.

But with this in mind, it surprises me that, particularly amongst the investment community, there is still a large gap in understanding. Investors are keen to put money to work, but they often lack important basic knowledge that, in my opinion, is required to be an effective investor in this space. The purpose of this article is, therefore, to share and provide some useful context and information for those interested in investing in this exciting field. Given the breadth of the topic at hand, I’ve split my thoughts into two parts, the first aimed at discussing a few essential elements one needs to know to get started on the AI journey - a 101 of sorts. The second part of this series will be more practical and will dive deeper into the topic of how to evaluate AI investments and the different ways to invest.

N.B. This post is not meant to be technical. It is aimed at investors and the broader financial community, and therefore non-technical readers.

What is AI?

There are actually many definitions of AI, so when I’m asked to define it I often default to good-old Wikipedia, which, for non-technical audiences, I think provides a satisfactory definition:

Artificial intelligence (AI), sometimes called machine intelligence, is intelligence demonstrated by machines, in contrast to the natural intelligence displayed by humans and other animals.

In other words, any non-natural intelligence is “artificial” intelligence, regardless of how it’s achieved. Techniques used to achieve AI include if-then rules, logic, decision trees, regressions, and machine learning including deep learning. One of my favorite, and fun, tools for explaining how AI works is this video about how a computer learns to play Super Mario.

When talking about AI, you will invariably hear these three key terms: AI, machine learning, and deep learning. They sometimes are used interchangeably, but they are different. Simply put, machine learning is a subset of techniques used in AI. Deep learning is a subset of techniques used in machine learning.

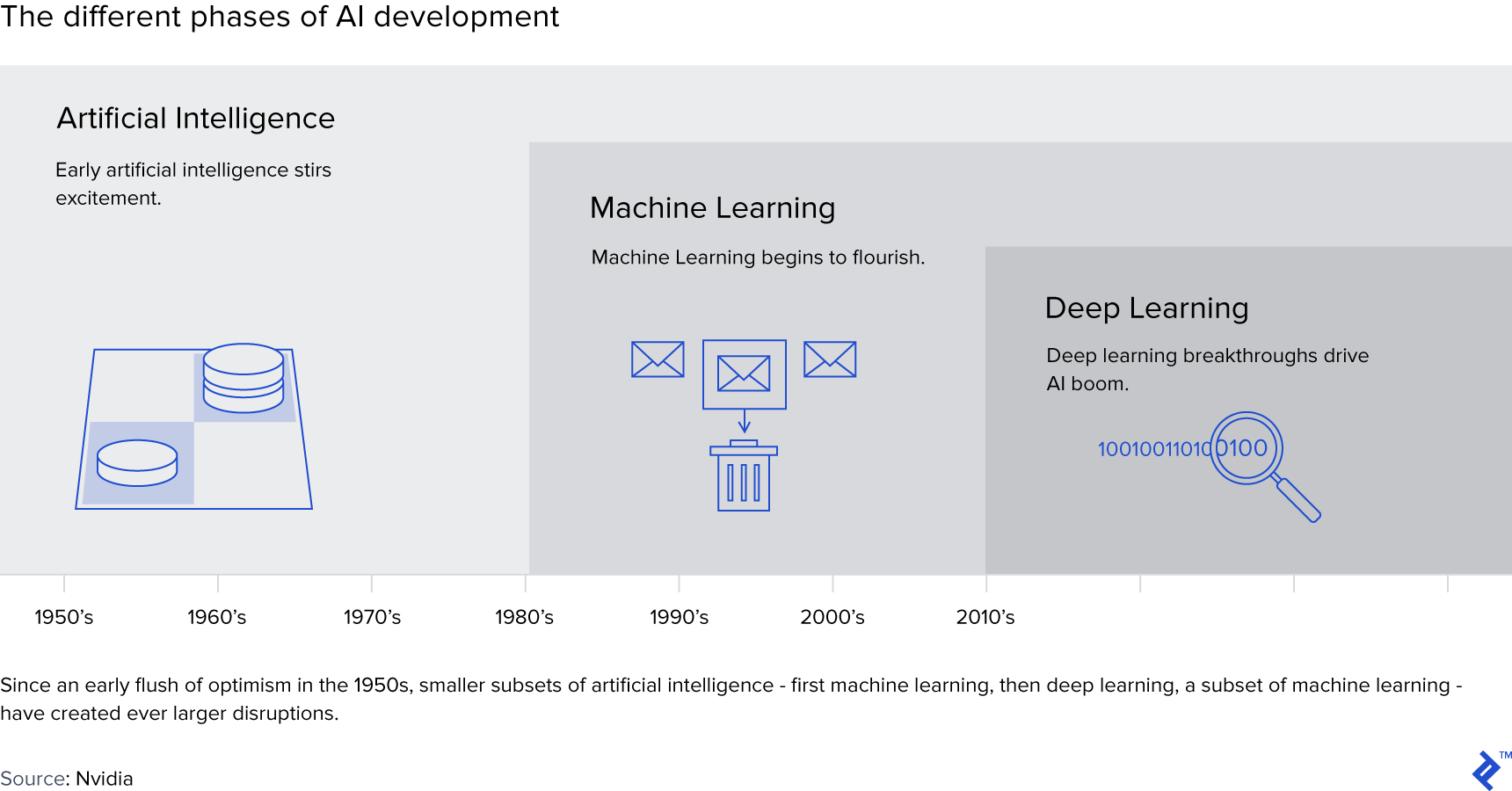

The Nvidia blog does a nice job of summarising the relationship between the three terms. It also provides a handy overview of the three waves of AI development. The first wave of AI, in the 50s and 60s, saw some of the first major milestones, such as when a checkers program running on the IBM 701 beat checkers master Robert Nealey. The second wave, in the 80s and 90s, culminated in Deep Blue beating world chess champion Garry Kasparov in 1997. In March 2016, AlphaGo beat Go champion Lee Sedol. Every time AI beat human masters at a game, it sparked a new hype stage for AI. Then, when the technology could not deliver applications that met the public’s expectations, the AI hype would turn into an AI winter, with dwindling investment and research grants.

As previously mentioned, machine learning is a subset of AI. According to Nvidia, machine learning at its most basic is “the practice of using algorithms to parse data, learn from it, and then make a determination or prediction about something in the world. So rather than hand-coding software routines with a specific set of instructions to accomplish a particular task, the machine is ‘trained’ using large amounts of data and algorithms that give it the ability to learn how to perform the task.” A very common example of machine learning is the spam filter. Google’s spam filter can recognize spam by identifying trigger words such as “Prince”, “Nigeria”, and “luxury watch”. It can also continue to “learn” from users’ manual classification of spam. For example, suppose an email with the message “send $1000 to get this exclusive cancer drug to the following bank account” is missed by Google’s spam filter. Once a user labels it as spam, Gmail analyzes all of the keywords in that particular email and “learns” to treat emails containing the combination of “$1000”, “drug”, and “bank account” as spam going forward. Professionals employ many mathematical models for machine learning, e.g. regressions (including logistic regression), Bayesian networks, and clustering.
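For readers who would like to see the spam example in code, here is a minimal sketch in Python. It is a toy under stated assumptions, not how Gmail actually works: the class name `ToySpamFilter`, the method names, and the list of candidate phrases are all hypothetical, and real filters rely on statistical models rather than simple phrase matching.

```python
class ToySpamFilter:
    """Toy filter: fixed trigger phrases plus combinations learned from user labels."""

    def __init__(self, trigger_phrases):
        # Phrases that flag a message on their own (hand-coded rules).
        self.trigger_phrases = {p.lower() for p in trigger_phrases}
        # Phrase combinations learned from messages users marked as spam.
        self.learned_combinations = []

    def is_spam(self, message):
        text = message.lower()
        if any(p in text for p in self.trigger_phrases):
            return True
        # A learned combination matches only if ALL of its phrases appear.
        return any(all(p in text for p in combo)
                   for combo in self.learned_combinations)

    def learn_from_user_label(self, message, candidate_phrases):
        """Record which candidate phrases occur in a message labeled as spam."""
        text = message.lower()
        combo = frozenset(p.lower() for p in candidate_phrases if p.lower() in text)
        if combo:  # ignore labels that match no candidate phrase
            self.learned_combinations.append(combo)


spam_filter = ToySpamFilter(["prince", "nigeria", "luxury watch"])
missed = "Send $1000 to get this exclusive cancer drug to the following bank account"

print(spam_filter.is_spam(missed))   # missed at first: no trigger phrase present
spam_filter.learn_from_user_label(missed, ["$1000", "drug", "bank account"])
print(spam_filter.is_spam(missed))   # caught after the user's label
```

The point of the sketch is the contrast: the trigger phrases are hand-coded rules, while the learned combinations come from data (the user's label), which is the "learning" part.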

What is special about this wave of AI?

This wave of AI is driven by the popularity of deep learning. As a subset of machine learning, deep learning was not invented recently. In fact, according to Wikipedia, “the first general, working learning algorithm for supervised, deep, feedforward, multilayer perceptrons was published by Alexey Ivakhnenko and Lapa in 1965”. However, as the computing power and the data were not advanced enough to support the mass commercial application of deep learning techniques, it didn’t gain popularity until 2006, when Geoffrey Hinton et al. published their seminal paper, “A Fast Learning Algorithm for Deep Belief Nets.” Despite the AI winter of the 90s and the first half of the 2000s, a few scholars, including the three academic gurus of deep learning, Geoffrey Hinton, Yann LeCun, and Yoshua Bengio, continued to work on deep learning in the academic field. Rapid advances in computing power (for example, cloud computing and GPUs), coupled with the availability of big data through the digital economy, have made deep learning algorithms practical to implement in the last decade. For example, Google’s self-driving car research started in 2009.

Technically speaking, deep learning can be defined as “a class of machine learning algorithms that:

- use a cascade of multiple layers of nonlinear processing units for feature extraction and transformation. Each successive layer uses the output from the previous layer as input.

- learn in supervised (e.g., classification) and/or unsupervised (e.g., pattern analysis) manners.

- learn multiple levels of representations that correspond to different levels of abstraction; the levels form a hierarchy of concepts.”

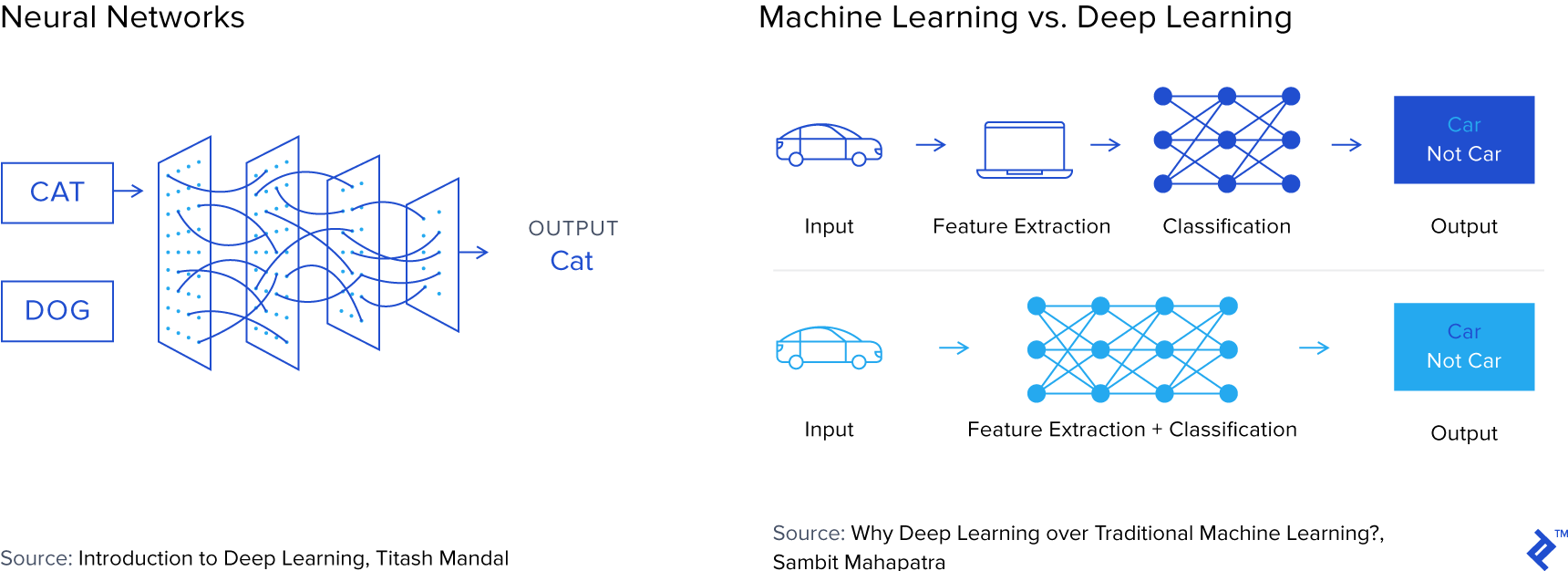

The key is “multiple layers”, compared to traditional machine learning. For example, how would you distinguish a cat from a dog? If you were to use traditional machine learning, you might extract a few features that are common to both dogs and cats, such as two ears, a furry face, the distance between eyes and nose and mouth, etc. And you might get a result saying the picture is 50% dog, 50% cat - not very useful. Using deep learning, however, you don’t even need to know what the distinguishing features of a cat vs. a dog are; the machine, through multiple layers of creating new features and hundreds (or thousands) of statistical models, would provide a more accurate output - e.g. 90% dog, 10% cat. The two charts below illustrate how a neural network “learns”, and the difference between classic machine learning and neural networks.

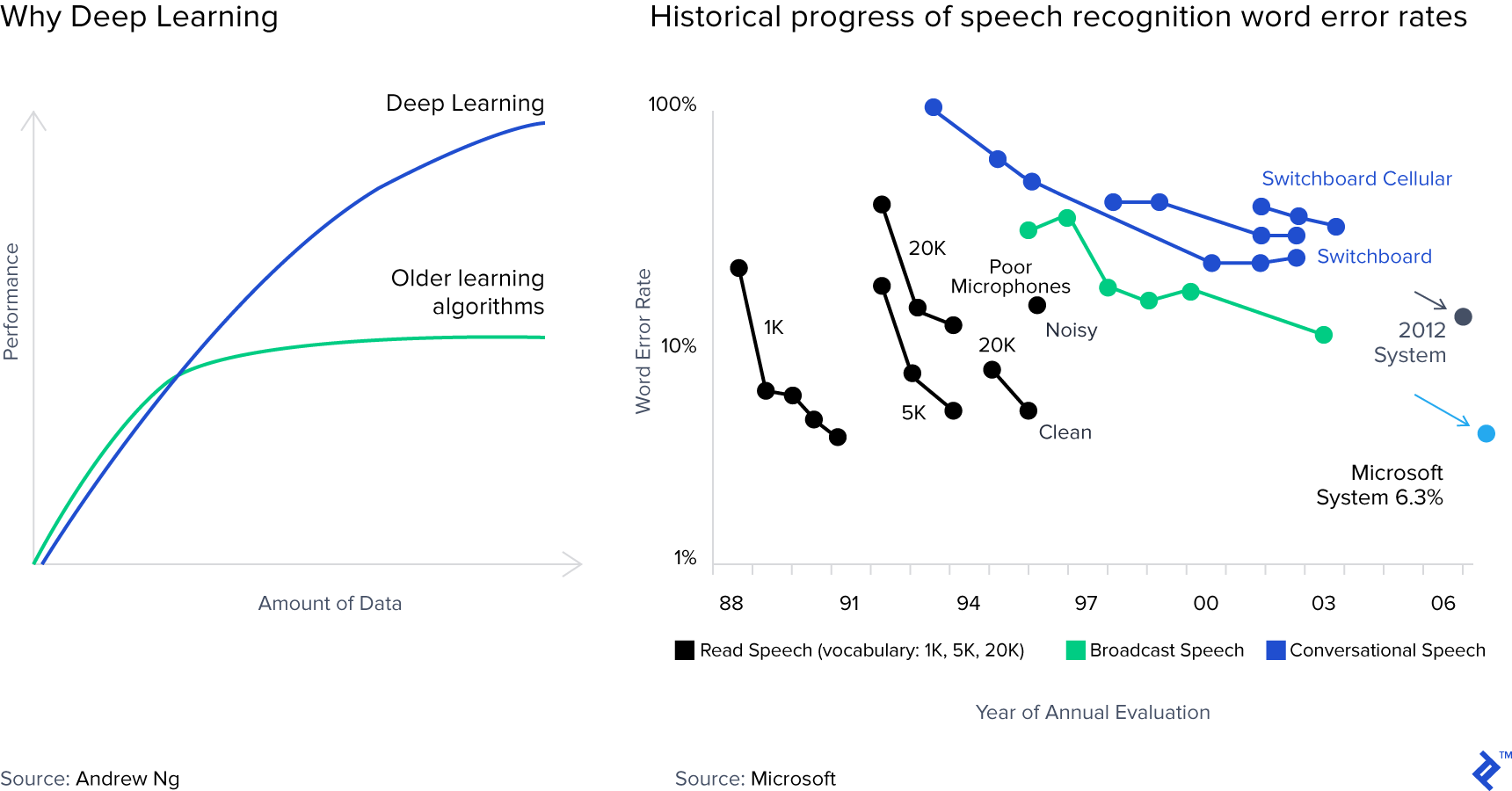
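To make the “multiple layers” idea a little more concrete, here is a minimal Python sketch of a two-layer network producing dog vs. cat percentages. Everything in it is an illustrative assumption: the four input numbers stand in for an image, and the weights in `LAYERS` are hand-picked rather than learned, so the output says nothing about any real picture. In a real system the weights would be learned from a large amount of labeled data.

```python
import math


def relu(values):
    """Nonlinear step: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One layer: a weighted sum of the inputs plus a bias, per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def forward(inputs, layers):
    """Each successive layer uses the output of the previous layer as its input."""
    activations = inputs
    for weights, biases in layers[:-1]:
        activations = relu(dense(activations, weights, biases))
    weights, biases = layers[-1]
    return softmax(dense(activations, weights, biases))


# Hypothetical weights: 4 inputs -> 3 hidden units -> 2 outputs (dog, cat).
LAYERS = [
    ([[0.5, -0.2, 0.1, 0.3],
      [-0.4, 0.6, 0.2, -0.1],
      [0.3, 0.3, -0.5, 0.2]], [0.0, 0.1, -0.1]),
    ([[0.8, -0.5, 0.3],
      [-0.6, 0.7, 0.2]], [0.0, 0.0]),
]

p_dog, p_cat = forward([0.9, 0.1, 0.4, 0.7], LAYERS)
print(f"dog: {p_dog:.0%}, cat: {p_cat:.0%}")
```

Nobody tells the hidden layer which features to look for. It simply recombines the inputs into new features, and a deep network stacks many such layers.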

Readers might be left scratching their heads after reading the above, and rightly so. But going back to our original purpose: from the standpoint of an investor, what is so special about deep learning? One could answer this question with various further complicated technical explanations, but simply put, the graph below on the left makes it clear: deep learning allows for much more powerful performance than other learning algorithms. Take the example of speech recognition as detailed by the Microsoft blog (chart below on the right): the original 1988 speech recognition error rate was 60-70%, whilst the error rate of the new Microsoft system using deep learning was only 6.3% in 2014.

Key components of successful AI applications

I believe there are four key components to a machine learning (including deep learning) product’s success: well-defined desirable problems, data, the algorithm(s), and computing power.

First and foremost, the AI application needs to solve a well-defined (specific) and desirable (targeting urgent and clear customer pain points) problem. Think about the different games that the computer was taught to play over the three waves of AI: checkers, chess, Go. They were very well-defined problems and therefore easier for a computer to solve. Facial recognition, machine translation, driverless cars, and search engine optimization are all well-defined, desirable problems. However, the lack of a well-defined, desirable problem is why it is so difficult to produce, for instance, a general house-cleaning robot. Simple household tasks, e.g. collecting cups and putting laundry in the basket, require solving too many problems. For example, the machine must identify which objects need to be picked up (cups, dirty laundry but not clean laundry, etc.), where to go, how to get there (avoiding obstacles in the household and travelling to the desired location), and how to handle each object with the right amount of force so that it doesn’t break the cup or damage the laundry.

Second, developing a machine learning algorithm requires access to clean and well-labeled data. This is because these algorithms are built by feeding different statistical models a large quantity of well-labeled data to establish the necessary predictive relationships. This data collection exercise can be difficult or easy, depending on which commercial application you are developing. For example, to collect the data required to develop a computer vision algorithm for wine grape fields, my startup needed field images from different locations, with different varieties and, more difficult still, in different seasons. With each growing season taking one year, it takes years to get a satisfactory product. In contrast, if you want to develop a good facial recognition algorithm in China and need to collect, say, 10 million images, you just need to set up a camera on a busy street in Beijing for a week and the task is complete. Another example would be the #1 AI-powered personalized news aggregator in China, Toutiao, which learns about your personal news preferences and only shows you the news most relevant to you. Collecting data, in this case, is again much easier, e.g. the number of articles you read in each news category, the amount of time you spend on each article, etc.

Third, an AI business needs to develop robust and scalable algorithms. To achieve this, there are three must-haves: a large amount of well-labeled data (as discussed above), the right talent, and the confidence that deep learning is the correct technology to solve the problem. An AI business needs the right talent to develop the necessary algorithms, but such people are highly specialized, expensive, and scarce. For example, when I was looking to hire for my startup, I discovered that, at a minimum, I needed data scientists (usually PhDs) to develop algorithm prototypes, engineers to design frameworks, programmers (TensorFlow, Python, C++, etc.) to turn the prototypes into scalable programs, and people to put it all together (product manager, UX, UI, etc.).

Fourth, computing power matters, because deep learning neural networks require a lot more computation than other AI methods. For example, for the same task of identifying a dog in an image, training a model using a non-deep-learning algorithm might need, say, 10 statistical models and a dataset of 1 GB. A deep neural network might need, say, 1,000 statistical models running through a dataset of 100 GB. The results are better with neural networks, but the computational power required is far greater. As a result, these models require not just one machine (such as a personal computer), but distributed computing, with each GPU handling, say, 5% of the computation so that 20 GPUs together can handle the computational volume required. This, in turn, means having to build your own GPU cluster or rent computing power from platforms such as AWS. Computing power from either cloud computing or your own servers is costly, although in fairness the unit cost of computing should keep decreasing (as per Moore’s law).
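The back-of-the-envelope numbers above can be worked through in a few lines of Python. This is only a sketch: the figures are the illustrative ones from the paragraph, and treating workload as "number of models times gigabytes of data" is a crude simplifying assumption made here, not a real measure of training cost. The function names are hypothetical.

```python
import math


def relative_workload(num_models, dataset_gb):
    """Crude proxy for compute (an assumption): models trained x gigabytes processed."""
    return num_models * dataset_gb


def gpus_needed(share_percent_per_gpu):
    """GPUs required if each one handles a fixed percentage of the total work."""
    return math.ceil(100 / share_percent_per_gpu)


classic = relative_workload(num_models=10, dataset_gb=1)      # non-deep-learning example
deep = relative_workload(num_models=1000, dataset_gb=100)     # deep neural network example

print(f"Deep learning workload: {deep // classic:,}x the classic one")  # 10,000x
print(f"GPUs needed at 5% each: {gpus_needed(5)}")                      # 20
```

Under these assumptions, the deep learning job is four orders of magnitude larger, which is why it has to be spread across a cluster rather than run on a single machine.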

Conclusion

Many believe now is the best time for AI breakthroughs and startups, because the digitalization of many industries and of the consumer internet has made a large amount of purposefully collected, cleanly organized digital data available. The development of Nvidia’s GPUs and Intel’s FPGAs makes it much cheaper and faster to conduct the necessary calculations. Together with these enablers, the current wave of AI innovation is driven by important advances in deep learning.

But for an AI application to be successful, one needs a well-defined, desirable problem, data, algorithms, and significant computing power. These four key components also apply to executives reading this article who are considering using AI to empower their business.

How can you learn more about AI? There are plenty of books, seminars, Coursera courses, research papers, and organizations such as Deep Learning to learn from. Because this article is aimed at investors who want to know the basics of AI, I didn’t touch on many of the hot AI topics, such as the potential of AI as a threat, the industry’s future outlook, AI investments, the pros and cons of different algorithms (e.g. CNN), prototyping vs. scaling, major programming languages, etc. In Part 2 of this series, I will delve into how to evaluate AI companies from an investor perspective.

Understanding the basics

What companies are leading in AI?

Many of the giant tech companies are involved in AI: Google (Alphabet), Microsoft, Amazon, Apple, IBM, Facebook, etc. Each firm leads in different aspects. For example, Google’s DeepMind leads in breakthrough research, and Facebook rolled out facial recognition (face-tagging) long before others did.

What is AI as a service?

AI as a service is a subset of software as a service (SaaS). AI as a service means a company can license the AI products that other firms have developed, and pay for usage. The services offered can include model training, computing power, and off-the-shelf AI-powered software, etc.

What is the importance of artificial intelligence?

It is important for everyone to understand artificial intelligence, because it is already being applied in a wide variety of technology products and will continue to change our lives going forward. Compared to manual processing, AI can complete many tasks better, faster, and in a way that is more customized to different users.

Carolyn Deng, CFA

Singapore, Singapore

Member since July 20, 2017
