Future UI and the End of Design Sandboxes

As design moves from target destinations to systems of interactive components, it’s important for designers to master creating products that adapt and can thrive in unpredictable contexts.

Bree is a passionate designer and problem-solver with 10+ years of experience in product and UX/UI design for web and native mobile applications.

Anyone working in design and technology—and probably anyone living in the modern world—will have noticed the swiftness with which we went from using terms like the “World Wide Web” to the “Internet of Things.”

In a short time, we’ve gone from designing and developing web pages—points on the map of the information superhighway accessed through browsers—to future UIs: a vast array of interconnected applications delivering content and experiences across a range of form factors, resolutions, and interaction patterns.

Technology and the screens we use today are disappearing into our surroundings. The “Internet of Things” (IoT), coupled with artificial intelligence (AI) in the form of voice assistants, already surrounds us. We are at the dawn of an “ambient intelligence” (AmI) world where a multitude of devices work in concert to support people in carrying out their everyday activities. Individual screen-based UIs are slowly disappearing, and designers have to grapple with a fragmented system of design components.

How will designers design for an AI-enabled ambient intelligence world where screens have disappeared? How will this change their role?

Designer Roles of Tomorrow Are Shifting

The digital designer’s practice once consisted of placing an intricate series of boxes, images, text, and buttons onto a relatively reliable browser screen (and eventually a mobile app screen).

This was the breadth of the experience delivered to people. But now, designers must consider that products exist as separate entities within complex systems of components that interact with, swap information with, and sometimes even disappear behind other parts.

As Paul Adams of Intercom writes in his piece “The end of apps as we know them”: “In a world of many different screens and devices, content needs to be broken down into atomic units so that it can work agnostic of the screen size or technology platform.”

And this goes beyond just content. Today, designers must consider how every feature or service offered to people exists within, and cooperates with, other components of multiple systems and platforms.

How Will People Interact with Information Tomorrow?

At the beginning of the mobile revolution, designers focused on creating apps that deliver an experience similar to websites: well-designed and controlled, boxed-in environments that people interacted with to consume content or accomplish a task, and then exited.

Most of the interaction happened within the confines of a screen, and designers had a good deal of control over the delivery.

However, the range of mobile devices has exploded from screen-based mini-computers to wearable wrist-wrapping message centers and devices that remotely control your home thermostat. Today, people get disjointed snippets of information from multiple sources delivered through notifications and alerts. The paradigm has shifted dramatically.

What Are Future UIs Going to Look Like?

The proliferation of diverse, interconnected technologies has also meant that a single product will be experienced and interacted with differently in different contexts.

An alert to a message might appear on a person’s smartwatch while they’re en route to a meeting.

Rather than having to find and then open the messaging app, the user can respond within the notification by sending a pre-composed quick reply with one tap, and then engage with the message in more depth later on another device.

What this means is that an atomic unit—a micro-component of interaction—has to be delivered through varied UIs (and user experiences) tailored to the context of communication.

Chances are, the user is not composing a long-form email reply while jogging. They probably won’t open a laptop for the sole purpose of using the one-tap response that was so handy on their wearable. They may never even read the notification on a screen, but instead listen to the content through a voice-activated virtual assistant.
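One way to picture this is to keep the message as plain data and let each context decide how to present it. The following is only a minimal sketch: `MessageUnit`, the three `render_for_*` functions, and the 40-character preview limit are hypothetical names and values chosen for illustration, not part of any real platform API.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MessageUnit:
    """Hypothetical atomic unit of content: data only, no layout."""
    sender: str
    body: str
    quick_replies: Tuple[str, ...] = ()


def render_for_watch(unit: MessageUnit, max_chars: int = 40) -> dict:
    """Wearable: a short preview plus one-tap replies (limit is an assumption)."""
    if len(unit.body) <= max_chars:
        preview = unit.body
    else:
        preview = unit.body[: max_chars - 3] + "..."
    return {"title": unit.sender, "preview": preview, "actions": list(unit.quick_replies)}


def render_for_voice(unit: MessageUnit) -> str:
    """Voice assistant: a spoken sentence with no visual affordances."""
    return f"Message from {unit.sender}: {unit.body}"


def render_for_laptop(unit: MessageUnit) -> dict:
    """Laptop: the full text and a free-form reply field instead of quick replies."""
    return {"title": unit.sender, "body": unit.body, "reply_field": True}
```

The same unit feeds all three functions, so the one-tap reply only shows up where it is actually useful, and the voice version carries no visual elements at all.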

Paul Adams writes, “In a world where notifications are full experiences in and of themselves, the screen of app icons makes less and less sense. Apps as destinations makes less and less sense. Why open up separate apps for your multi-destination flights and hotels in a series of vendor apps when a travel application like TripIt can present all of that information at once—and even allow you to complete your pre-boarding check-in? In some cases, the need for screens of apps won’t exist in a few years, other than buried deep in the device UI as a secondary navigation.”

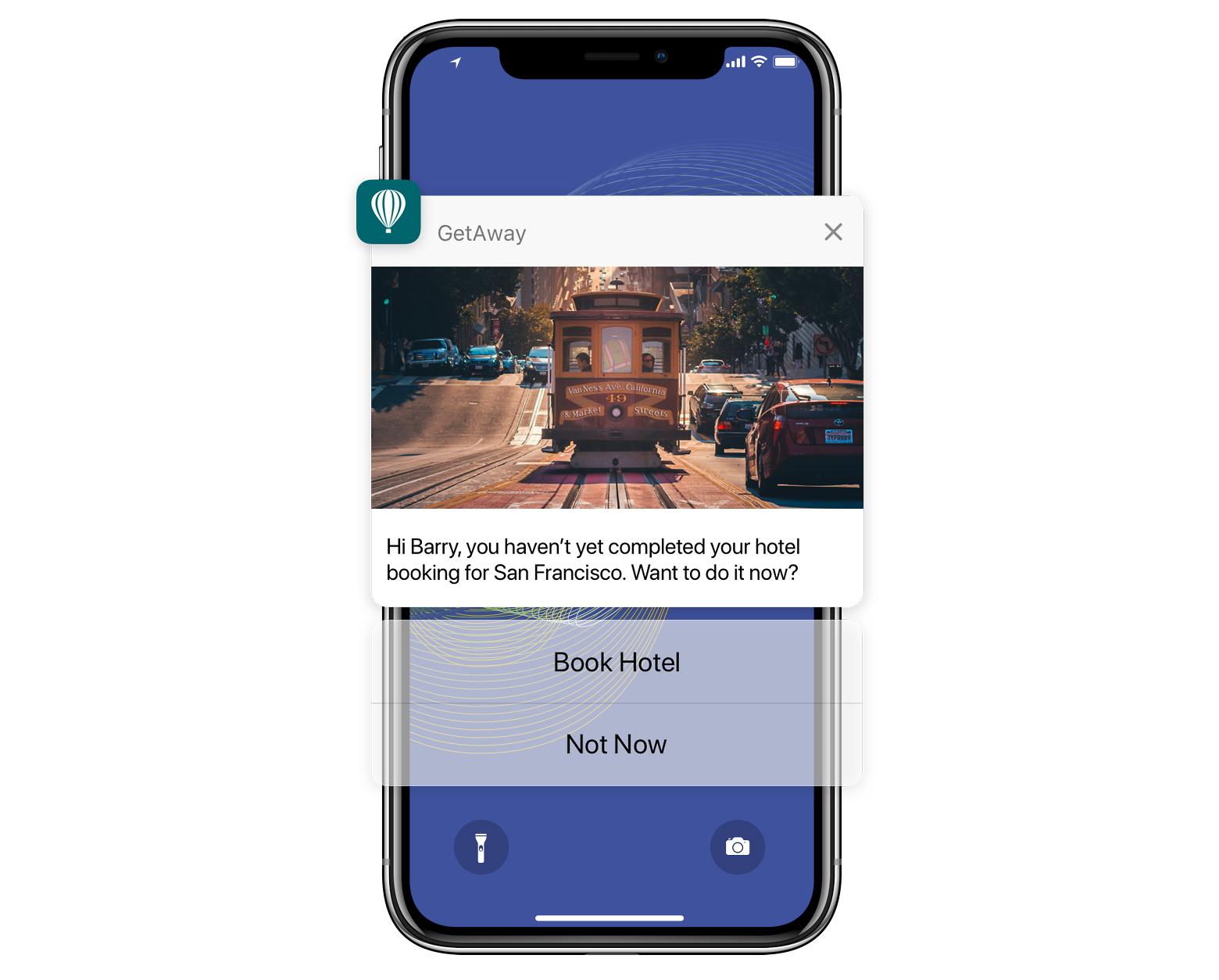

System-level, interactive notifications will enable people to take action immediately, rather than having to use a notification as a gateway to open an app where people are pulled abruptly from one context into another.

Shifting Paradigms of Interaction Transform Future UIs

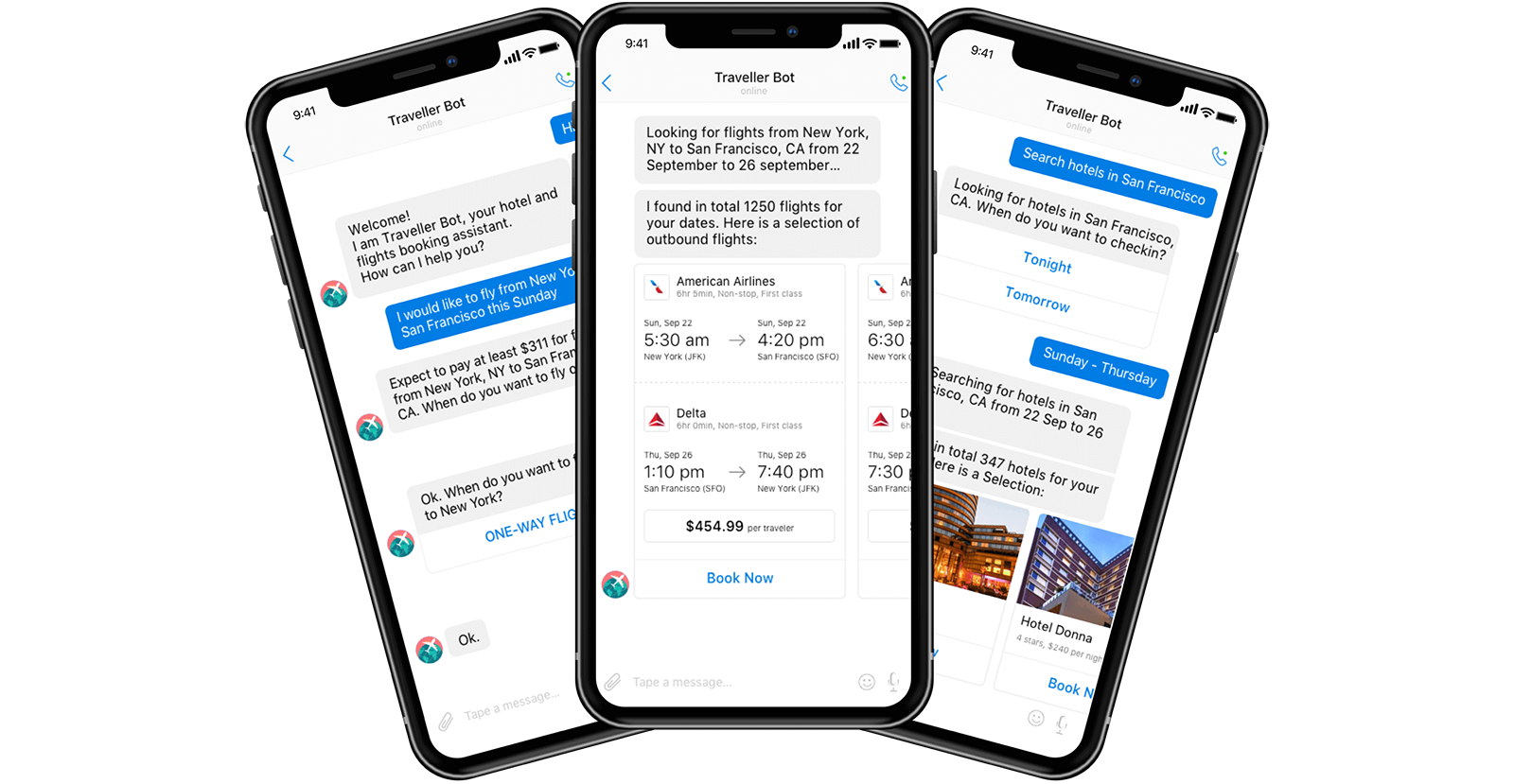

Apps like the popular WeChat allow people to do things like transfer money, call a cab and receive updates on its arrival time, search for a restaurant and book a table, or find a hotel nearby, all through a text-based interface that removes the need for a user to navigate to individual apps to accomplish a series of tasks.

In “The Future of UI? Old School Text Messages,” Kyle Vanhemert looks to the proliferation of text-based platforms that frequently replace the need for a graphical user interface. The services that apps provide will increasingly be accessed outside of the apps themselves.

Designing for the world of IoT means abdicating even more control over the interface—creating products and services that might not be accessed through a screen at all.

When the touch-based screen revolutionized mobile devices, designers suddenly had a treasure trove of new modes of interaction to leverage, such as swipes, pinches, taps, double-taps, long-taps, and tap-and-drag, which went well beyond point-and-click.

Now people are swiping and pinching in the air or waving tagged accessories in front of sensor-equipped devices, where designers can’t expressly guide them with the standard visual cues. What is the designer to do now?

In a sense, this means designing predictable, reusable interaction patterns.

How Does This Change How Products Are Implemented?

The mobile app as the unit of design—at least in the way it has been looked at so far—is disappearing.

The standalone application is becoming the secondary, or even tertiary, destination for people to receive the content and services that products offer. How can designers realign their approaches to best meet people’s needs?

Designing less for pixels and more for bits of content and moments of interaction is the new paradigm.

In a world where the amount of available information and the number of competing services is expanding exponentially, the best way to make an impact is for quality information to be shared and distributed across an array of platforms and contexts.

This means that people are going to receive content in ways that cannot be precisely controlled, often within multiple wrappers.

Designers will be asking themselves a whole new set of questions.

- How can content be formatted to adjust to an array of viewports and even screen-minimal devices?

- Is there a way to package a service’s key functionality so that it can exist somewhere else?

- What are the most appropriate and useful interaction patterns that can be offered as actions to take within a notification?

As people are spending less time inside destinations, such as mobile apps, how can designers create experiences that can be interacted with and completed elsewhere?

Can a push notification for an upcoming calendar event give people a set of standard controls to book a flight, make travel suggestions, or order flowers without requiring the user to open the app itself?

How can designers create app functionalities that work with other apps to provide specific services to people but are also compelling enough to exceed the competition?
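To make these questions a little more concrete, here is a rough sketch of how a set of notification actions might be trimmed to fit whatever surface receives it. Everything in it is an assumption made for illustration: the `Action` and `Surface` types, the per-surface limits, and the extra “Add a note” action are hypothetical and do not reflect any particular operating system’s notification API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Action:
    id: str
    label: str
    needs_text_input: bool = False


@dataclass(frozen=True)
class Surface:
    name: str
    max_actions: int           # assumed limit, not a real platform value
    supports_text_input: bool


def actions_for(surface: Surface, actions: List[Action]) -> List[Action]:
    """Keep the actions the surface can support, in priority order, up to its limit."""
    usable = [a for a in actions if surface.supports_text_input or not a.needs_text_input]
    return usable[: surface.max_actions]


# Actions for an upcoming calendar event, listed from most to least important.
calendar_actions = [
    Action("book_flight", "Book flight"),
    Action("suggest_travel", "Travel suggestions"),
    Action("order_flowers", "Order flowers"),
    Action("add_note", "Add a note", needs_text_input=True),  # hypothetical extra action
]

watch = Surface("watch", max_actions=2, supports_text_input=False)
phone = Surface("phone", max_actions=4, supports_text_input=True)
```

With these made-up limits, `actions_for(watch, calendar_actions)` keeps only the first two actions, while the phone gets all four. The design decision that matters is the ordering: the designer ranks the actions once, and each surface takes as many as it can handle.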

What Does the Design Process of Tomorrow Look Like?

As moments of experiences move from device to device in a non-linear fashion, and the component is the new unit of design, how is the new object of a designer’s practice defined? It’s going to be more and more about designing experiences, not UIs.

The component in question will be defined differently in each case, with different implications for every product. Let’s look at some examples that may help designers when thinking about their products as system components, rather than as neatly-boxed destinations.

In What Context Will People Receive a Notification?

Let’s start with notifications as a simple pattern.

Imagine a design for a ride-hailing service app (similar to Lyft or Uber) that echoes across multiple devices and notifies people via rich push notifications about their hired car’s position, estimated arrival time, and delays. In this scenario, the user has an iPhone, an Apple Watch, and a pair of earphones in his ears.

First, the user orders the car via voice interaction on his Apple Watch. He raises his wrist to wake the watch, asks Siri for a car, and receives a voice confirmation via his earphones that a car is on its way.

It is unlikely that people would open the app and monitor the screen continually. So notifications must be packaged to be pushed to the operating system and the user via connected devices.

A few minutes later, he checks his iPhone to see the car’s position on the map and how long the wait will be. When the car is only a minute away, the app sends a voice notification to his earphones and a vibrating notification to his Apple Watch. He glances at his iPhone to confirm the car and driver’s information.

This app is looking more like a component within the operating system.
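The scenario above can be read as a small routing problem: given an event and the devices a person has connected, which device gets which kind of signal? The sketch below is one possible reading, not how any real ride-hailing app works. The event names, device names, modality labels, and the one-minute threshold are all assumptions.

```python
from typing import List, Set, Tuple

# Hypothetical threshold: the car counts as "arriving" at one minute or less.
ARRIVING_THRESHOLD_MIN = 1


def route_ride_update(event: str, eta_minutes: int, devices: Set[str]) -> List[Tuple[str, str]]:
    """Return (device, modality) pairs for a single ride update."""
    routes: List[Tuple[str, str]] = []
    arriving = event == "approaching" and eta_minutes <= ARRIVING_THRESHOLD_MIN

    # Spoken updates go to the earphones on confirmation and on arrival.
    if (event == "confirmed" or arriving) and "earphones" in devices:
        routes.append(("earphones", "voice"))

    # The watch vibrates only when the car is about to arrive.
    if arriving and "watch" in devices:
        routes.append(("watch", "haptic"))

    # The phone always holds the glanceable map with car and driver details.
    if "phone" in devices:
        routes.append(("phone", "map"))

    return routes
```

Calling `route_ride_update("approaching", 1, {"phone", "watch", "earphones"})` yields a voice alert, a vibration, and a map update, whereas the same call with an ETA of four minutes only refreshes the map on the phone.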

If People Don’t Open Our App, What Are We Designing?

Components don’t only interact with the operating system—they may even be pulled into other apps.

For example, in a scenario where the user has a travel itinerary which may be affected by a delay, a travel app like TripIt could manage rich push notifications that the user can interact with without having to open the app itself.

If the itinerary is shared with other people through the travel app, the notification could be designed to present the user with an option to send an updated ETA to friends waiting at the airport in the event of a delay.

The mobile device’s notification component is merged with the travel app’s notification system, the user’s actions cascade back down the chain, and a change is made to the travel app’s status without the user ever having to open it.
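As a rough illustration of that cascade, the sketch below builds a delay notification and then applies the user’s choice directly to the app’s state. It is a simplified model under stated assumptions: `Itinerary`, `build_delay_notification`, `handle_action`, and the action IDs are hypothetical and are not taken from TripIt or any real notification framework.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Itinerary:
    flight: str
    eta: str
    shared_with: List[str] = field(default_factory=list)
    eta_shared: bool = False


def build_delay_notification(itinerary: Itinerary, new_eta: str) -> dict:
    """Record the new ETA and offer to share it only if someone is waiting."""
    itinerary.eta = new_eta
    actions = ["dismiss"]
    if itinerary.shared_with:
        actions.insert(0, "share_eta")
    return {"text": f"{itinerary.flight} delayed, new ETA {new_eta}", "actions": actions}


def handle_action(itinerary: Itinerary, action_id: str) -> List[str]:
    """Apply the user's choice to app state and return the outgoing messages."""
    if action_id == "share_eta":
        itinerary.eta_shared = True
        return [
            f"To {person}: {itinerary.flight} now arrives at {itinerary.eta}"
            for person in itinerary.shared_with
        ]
    return []
```

The user only ever touches the notification. The itinerary’s status changes and the messages go out in the background, which is the sense in which the app behaves like a component rather than a destination.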

If this step is taken further to include something like a voice assistant (via a voice user interface, or VUI), how are notifications and actions packaged and delivered when a screen-based interaction can’t be anticipated?

Apps are becoming organisms in large ecosystems that interface with other organisms and need to adapt accordingly.

The UX Components of Design Have Been Redefined in Future UIs

The unit of design is being redefined from target destinations, such as a web page or a sandboxed application, to system components that interact with each other and with people.

In many cases, these interactions are happening almost invisibly. While apps as a concept are not being eliminated, they are no longer the main event or destination.

Designers have to evolve, pivot, and redirect their focus to create products and services that adapt to thrive in multiple ecosystems. Future UIs will be spread across an array of unpredictable contexts and devices, and in the expanding ambient intelligence world, there is much for designers to contemplate.

Understanding the basics

What is design data?

In data-driven design, data drives several design decisions; its goal is to inform designers of a user’s typical experience. Designers can’t know what users want or need through intuition alone. For that, they need proper user research, real user testing, or data.

Are mobile apps the future?

IoT and its broad reach are driving the mobile market. Apps are here to stay, but they are evolving. Future UIs will need to change, and the services mobile apps provide will increasingly be accessed outside of the apps themselves—they will “speak” with each other in the same way devices are doing already.

What do app designers do?

App designers usually work closely with user experience (UX) designers and user interface (UI) designers to apply their designs to mobile interfaces. Their primary focus is on native mobile applications, but they must also be able to create designs for mobile web and hybrid apps.

What is meant by component design?

Component-based design is where a UI is broken out into clearly named, manageable parts:

- Layout: white space, grids, etc.

- Identity: typography, colors, etc.

- Components: navigation menu

- Elements: buttons, links, etc.

- Compositions: where multiple components are collected

- Pages: arrangement of compositions & components

What is a UI component?

UI design components are the elements used to build user interfaces for apps and websites. They provide consistent elements with which users interact as they navigate a digital product, e.g., text links, buttons, dropdown lists, scroll bars, progress bars, menu items, checkboxes, radio buttons, and more.

Bree Chapin

New York, NY, United States

Member since May 15, 2016
