Ethereum Oracle Contracts: Can We Trust the Oracle?

The whole point of smart contracts is that they need to be more secure and efficient than traditional contracts. So where do smart contract oracles fit in?

In the final installment of our three-part series, Toptal Blockchain Developer John R. Kosinski explains the role of oracles in the evolution of trust.

As a full-stack dev for nearly two decades, John’s worked with IoT, Blockchain, web, and mobile projects using C/C++, .NET, SQL, and JS.

This article is the final part of a three-part series on the usage of Ethereum oracle contracts.

- Part one was an introduction to getting the code set up, running, and testable with the Truffle framework.

- Part two took a closer look at the code and used it as a launching point for discussion of some of Solidity’s code and design features.

Now, in this final part, I’d like to ask: what did we just do, and why? I’ll also try to offer some hopefully thought-provoking lines of inquiry. The jury is still very much out on how the use of oracles relates to trustlessness, and on what the word “trustlessness,” as it relates to blockchain, actually means in real-world usage. As we bring some of these ideas from their conceptual form to practical use, we will be forced to wrestle with and come to terms with questions like these. So let’s begin.

Recap: Why do we need an Ethereum oracle?

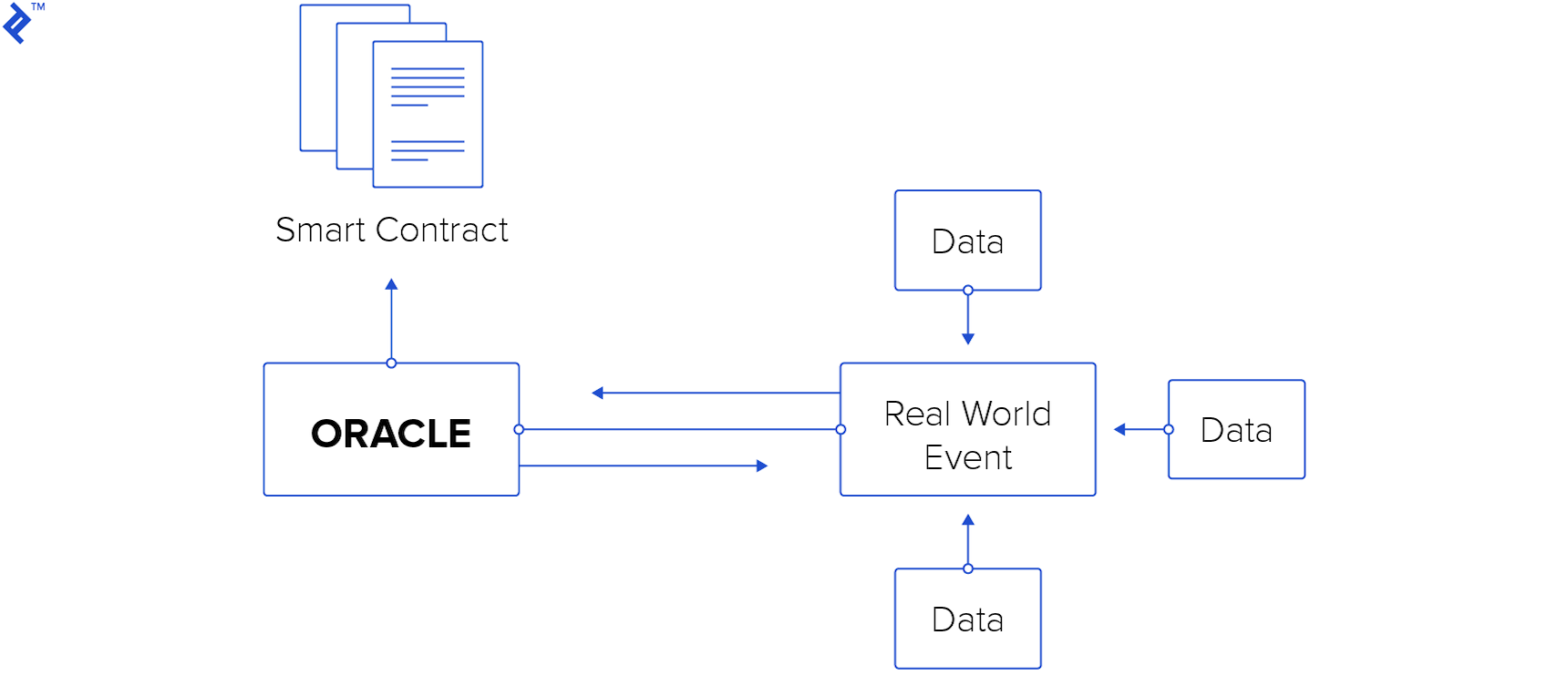

This gets to the very heart of the nature of executing code on the blockchain. In order to satisfy the requirements of immutability and determinism, and as an artifact of the way in which the code is actually executed by nodes on the chain, a smart contract is unable to directly reach outside of the blockchain to do, well, anything.

For most programmers, this fact introduces a very unnatural way of thinking. If we need data from somewhere, normally we just connect to that somewhere and pull it. A smart contract that needs weather report data? Just connect to a weather feed. But no; a blockchain smart contract absolutely cannot do this; if some data is not on the blockchain already, the contract code has no access to it at the time of execution. So then, the solution is to already have the needed data in existence on the blockchain, at the time of contract execution. This requires external machinery which, rather than pulling data into the chain, pushes data onto the chain, specifically for use by other contracts. That external machinery is the oracle. The data pushed onto the chain is pushed into an oracle contract, which then presumably has made provisions for sharing it with other contracts. An example of that setup is precisely what we’ve built and examined in the previous two parts of this trilogy of articles.
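The push model described above can be sketched in a few lines of Solidity. This is a minimal illustration, not the oracle from the earlier parts of the series: the contract name `SimpleResultOracle`, the functions `reportWinner` and `getWinner`, and the use of a `bytes32` match ID are all assumptions made for the example, and it assumes a Solidity 0.8.x compiler.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

/// Hypothetical minimal oracle: an off-chain process controlled by `owner`
/// pushes results on-chain; other contracts can only read what is stored.
contract SimpleResultOracle {
    address public owner;

    // matchId => winner label; an empty string means "not yet reported"
    mapping(bytes32 => string) private winners;

    constructor() {
        owner = msg.sender;
    }

    /// Called from outside the chain (by the oracle operator) to push data in.
    function reportWinner(bytes32 matchId, string calldata winner) external {
        require(msg.sender == owner, "only oracle operator");
        winners[matchId] = winner;
    }

    /// Called by client contracts; it only reads data already on-chain.
    function getWinner(bytes32 matchId)
        external
        view
        returns (bool reported, string memory winner)
    {
        winner = winners[matchId];
        reported = bytes(winner).length > 0;
    }
}
```

Note that the client contract never fetches anything from the outside world; it can only see whatever the operator has already written via `reportWinner`.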

Glaring Security Holes

To me, the central issue involved in all public blockchains is the T word: trust. At their purest, what these systems are doing is their best to guarantee (no perfect guarantee is possible in this world, but as close as possible) that we don’t have to blindly trust any parties. The astute reader may have already questioned some of the glaring security holes in the Boxing Bets example. I would like to focus on those which will be most important to our discussion of trustlessness and how it relates to the use of oracles with smart contracts.

1. The owner/maintainer of the betting contract may be corrupt

Starting on line 58 of BoxingBets.sol we have the following function:

    /// @notice sets the address of the boxing oracle contract to use
    /// @dev setting a wrong address may result in false return value, or error
    /// @param _oracleAddress the address of the boxing oracle
    /// @return true if connection to the new oracle address was successful
    function setOracleAddress(address _oracleAddress) external onlyOwner returns (bool) {
        boxingOracleAddr = _oracleAddress;
        boxingOracle = OracleInterface(boxingOracleAddr);
        return boxingOracle.testConnection();
    }

It should be pretty clear what this allows. The contract owner (and only the contract owner) can, at any time and without any restrictions, change the oracle that’s being used to serve boxing matches and determine the winners. Why is that a problem? If it’s not already obvious to you, this allows the contract owner to deliberately abuse the contract for his own profit.

Example: The next upcoming boxing match is Soda Popinski vs. Glass Joe. Soda is the clear favorite by a wide margin. Everybody bets on Soda. Tons of money riding on it. I, the contract owner, decide to pull a fast one. Just before the match is decided, I change the oracle to my own malicious one, which is identical to the official oracle except that it’s hard-coded to declare Glass Joe as the winner. It declares Glass Joe, I make all the money, and nobody can stop me. After that maybe nobody will trust my contract anymore, but suppose I don’t care; maybe I wrote and published the contract just for the sole purpose of pulling that heist.

What are some alternatives?

1. Don’t allow the oracle to be changed

The problem that we’ve identified above arises from the fact that we allow the oracle to be changed by the contract owner. So, suppose we just hard-code the oracle address and don’t allow it to be changed at all? We can do that; it’s not out of the question. But then what if that oracle quits and stops providing data, for whatever reason? Then we’ll need a new oracle. Or what if that oracle, initially trusted, turns out to be corrupt? Again, we will want to change to a new oracle. If we hard-coded the oracle, then the only way to change it is to release a new contract that uses a different oracle.

Again, we can do this; it’s not out of the question. Note, of course, that smart contracts can’t be updated as easily as, say, a website. Wouldn’t that be easy? If you notice a bug or security hole, you just patch it and no one’s the wiser. The smart contract deployment model is closer to the shrink-wrapped software model: once the software is in the user’s hands, you can’t fix it in place; you must prompt users to upgrade manually. Once a contract is on the blockchain, it’s immutable, as is the rest of the blockchain, except in the parts where you’ve written logic to make it mutable.

This is not necessarily a blocker though; there are lots of models and schools of thought now on how to make a smart contract modifiable. This topic would make a decent article in and of itself, but for now you can check out this Hackernoon article, as well as this piece on smart contract strategies.

What would that look like from a user’s perspective? Let’s say that I’m considering placing a bet on the upcoming Don Flamenco match. I can clearly see that the contract simply hard-codes an oracle that I already know and trust (well, trust enough to place a bet of a certain size). So that’s it. Pretty simple. If the contract owner releases a new version of the contract with a new oracle, I still (should) have the freedom to continue using the old one. Well, maybe. It depends on how the upgrade was handled. If the contract was disabled or destroyed, I might be out of luck. But in the vanilla case, it should still stand.
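As a rough sketch of the hard-coded approach, the oracle reference can be fixed at deployment and given no setter at all. The contract name `FixedOracleBets` is hypothetical, the `OracleInterface` shown is an assumed minimal shape (only `testConnection()` appears in the excerpt above), and the code assumes Solidity 0.8.x, which is newer than the compiler the original example likely targeted.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Assumed minimal shape of the oracle interface; only testConnection()
// is visible in the article's excerpt.
interface OracleInterface {
    function testConnection() external view returns (bool);
}

contract FixedOracleBets {
    // Set once in the constructor; `immutable` means no function in this
    // contract can reassign it afterwards.
    OracleInterface public immutable boxingOracle;

    constructor(address _oracleAddress) {
        require(_oracleAddress != address(0), "oracle address required");
        boxingOracle = OracleInterface(_oracleAddress);
    }

    // Deliberately no setOracleAddress(): replacing the oracle means
    // deploying a new contract.
}
```

Because `boxingOracle` is public, a prospective bettor can read the address and check for themselves which oracle the contract is bound to.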

2. Lock in the oracle for the duration

We can add some complexity to the code (not desirable in an example, but in a real-world solution the benefit may be worth it) in order to mitigate shenanigans like the one described above. I think a very reasonable thing to do would be to add code that “locks in” the oracle for the duration of the bet. To put it another way, the contract’s logic could guarantee, in a clear and simple way, that whatever oracle was present when I made the bet must be the same oracle that is used to determine the winner. Even if the oracle changes in the meantime for other matches, for my match and my bet it must remain the same throughout. This goes hand in hand with allowing the user to know who the oracle is.

Let’s have a quick example to illustrate this. I am a user, considering placing a bet on the upcoming Little Mac fight. There is a facility in the contract that allows me to inspect the oracle that will be used to determine the winner for this match. I verify that the oracle is a well-known one presented by Nintendo Sports, and I feel confident enough in it. (To add a bit more complexity, maybe the contract allows users to choose from an array of available oracles for a given match.) Now I can inspect the betting contract’s code and see that its logic guarantees that that same oracle will be used to determine the match’s outcome. So I, as the bettor, have that assurance at least. It doesn’t rule out the possibility that my oracle could be bad (i.e. corrupt or untrustworthy), but it at least assures me that it can’t be changed in the background.

A risk here would be that the oracle goes “out of business” (stops being maintained or updated) between the time that I place the bet and the time that the match is decided. Money could be locked into the contract and become irretrievable. For that, we could (maybe) put a time-activated clause into the contract, wherein if a match is undecided by a certain time or date (which could be part of the match definition), it’s considered “dead” and all money locked inside is returned to the bettors.
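One way the lock-in and the “dead match” refund could fit together is sketched below. Everything here is an assumption made for illustration: the contract name `LockedOracleBets`, the `MatchInfo` struct, and all function names are hypothetical. As a simplification, the locked oracle settles the match by calling in directly, rather than being queried as in the series’ example, and which boxer each bet backs and the payout logic are omitted. Solidity 0.8.x is assumed.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

contract LockedOracleBets {
    address public owner;
    address public currentOracle; // applies only to matches opened later

    struct MatchInfo {
        address oracle;   // frozen when the match is opened
        uint256 deadline; // after this time an undecided match is "dead"
        bool decided;
    }

    mapping(bytes32 => MatchInfo) public matches;
    mapping(bytes32 => mapping(address => uint256)) public stakes;

    constructor(address _oracle) {
        require(_oracle != address(0), "oracle required");
        owner = msg.sender;
        currentOracle = _oracle;
    }

    function setOracleAddress(address _oracle) external {
        require(msg.sender == owner, "only owner");
        require(_oracle != address(0), "oracle required");
        currentOracle = _oracle; // matches already opened are untouched
    }

    function openMatch(bytes32 matchId, uint256 deadline) external {
        require(msg.sender == owner, "only owner");
        require(matches[matchId].oracle == address(0), "match exists");
        require(deadline > block.timestamp, "deadline in past");
        matches[matchId] = MatchInfo(currentOracle, deadline, false);
    }

    function placeBet(bytes32 matchId) external payable {
        MatchInfo storage m = matches[matchId];
        require(m.oracle != address(0), "unknown match");
        require(!m.decided && block.timestamp < m.deadline, "betting closed");
        stakes[matchId][msg.sender] += msg.value;
    }

    /// Only the oracle frozen into this match may settle it, and only
    /// before the deadline.
    function declareOutcome(bytes32 matchId) external {
        MatchInfo storage m = matches[matchId];
        require(msg.sender == m.oracle, "not this match's oracle");
        require(!m.decided && block.timestamp < m.deadline, "cannot settle");
        m.decided = true;
        // payout logic omitted
    }

    /// If the oracle never reported, bettors reclaim their own stakes.
    function refund(bytes32 matchId) external {
        MatchInfo storage m = matches[matchId];
        require(m.oracle != address(0), "unknown match");
        require(!m.decided && block.timestamp >= m.deadline, "not refundable");
        uint256 amount = stakes[matchId][msg.sender];
        require(amount > 0, "nothing staked");
        stakes[matchId][msg.sender] = 0; // zero out before sending
        payable(msg.sender).transfer(amount);
    }
}
```

The owner can still call `setOracleAddress`, but the change only affects matches opened afterwards. A bettor can read `matches(matchId)` before betting to see exactly which oracle and deadline apply.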

3. Let the oracle be user-defined

An even more complicated (but possibly more interesting) option would be to leave the oracle address “blank”, in a sense, to allow users to specify their own oracles and form their own betting pools (through the contract) around those oracles. Groups of users who are using the same oracle could bet together following the contract’s logic. That puts the onus on the user to choose an oracle that they trust, and to share that oracle with other like-minded users. It in effect fragments the betting community, so it would only work if there was a large user base; otherwise, there would be too few bettors involved to really make the betting interesting and profitable. If I’m the only person betting with my favorite oracle, there’s not much incentive there! But on the other hand, it takes the responsibility of choosing a trustworthy oracle away from the contract owner, and he can wash his hands of that. If some users find an oracle untrustworthy, they will simply stop using it and switch to another, and no one will get mad at the contract owner. He simply provided the betting arena and performed his service honorably.

Adding to the complication of this strategy is the fact that we’d have to somehow let an organic batch of oracles ‘grow’ in the wild, and ones which exactly fit this solution. We’d have to broadcast to the world the exact interface to which potential oracles must adhere, and hope that a decent enough number of them would spring up to actually give the user some choice. Maybe we could seed the batch with one or two of our own. If it doesn’t take, then we have no betting DApp. But if it does, I have to admit that the idea of user-selected, organically-grown oracles in the wild is an interesting and attractive solution.
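To make the idea of oracle-keyed betting pools a little more concrete, here is a minimal sketch in which each bet names the oracle the bettor trusts, and stakes are grouped by oracle and match. The contract name `UserOracleBets` and all identifiers are hypothetical; settlement, and the interface that candidate oracles would have to implement, are left out. Solidity 0.8.x is assumed.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

contract UserOracleBets {
    // oracle address => matchId => total staked in that pool
    mapping(address => mapping(bytes32 => uint256)) public poolTotal;

    // oracle address => matchId => bettor => stake
    mapping(address => mapping(bytes32 => mapping(address => uint256)))
        public stakeOf;

    event BetPlaced(
        address indexed oracle,
        bytes32 indexed matchId,
        address indexed bettor,
        uint256 amount
    );

    /// The bettor names the oracle they trust; bets that name the same
    /// oracle and match end up in the same pool.
    function placeBet(address oracle, bytes32 matchId) external payable {
        require(oracle != address(0), "oracle required");
        require(msg.value > 0, "stake required");
        poolTotal[oracle][matchId] += msg.value;
        stakeOf[oracle][matchId][msg.sender] += msg.value;
        emit BetPlaced(oracle, matchId, msg.sender, msg.value);
    }
}
```

A public `poolTotal` also lets a prospective bettor see how much money has gathered around a given oracle, which speaks to the fragmentation concern raised above.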

2. The owner/maintainer of the oracle might be corrupt

Corrupt, that is, in the sense of untrustworthy: the owner/maintainer/manager of the oracle is likely to proclaim an incorrect outcome for a match in order to enrich himself.

Example: I am the owner/maintainer of the actual oracle which feeds boxing data onto the blockchain for the betting contract to use. My oracle is not directly involved in any bet-placing or management of betting. Its job is to simply provide data, which the betting contract (and maybe any number of other contracts) can use. However, I may personally place a bet using the betting contract that in turn uses my oracle; I am anonymous anyway, so I’m not afraid of any retribution. Once I have placed a bet on such a contract, there is a clear conflict of interest. Specifically, the honesty with which I update my oracle with true and accurate information may be in conflict with my betting actions.

So let’s say that in the upcoming Sandman/von Kaiser match, wherein von Kaiser is massively the underdog, I go ahead and place a massive bet on von Kaiser. When von Kaiser loses as expected, I use my oracle to falsely proclaim him the winner instead! The betting contract executes as it should (there’s no way to stop it at this point), I make a killing on the match, there is no recourse and no way to punish me, and life goes on. Perhaps, after that, people refuse to use my oracle anymore; perhaps I don’t care.

How can we prevent this?

Now we’re at a much bigger question and one which cuts to the very heart of so-called trustlessness as it relates to oracles. The oracle is trusted to be a neutral third party or even a trusted third party. The problem is that the oracle is run by humans. Still another issue is that the oracle exercises a great deal of control over how the client contract performs its duties since it provides the data upon which the contract acts. How can we ever know that we can trust a given oracle?

Why should we trust any oracle?

If the whole idea of blockchain smart contracts is to remove the need to trust anyone, doesn’t the fact that we must “trust” an oracle fly in the face of trustlessness? Well, I would say: it’s time to grow up, son (or daughter)! There is no such thing as pure trustlessness, and blockchain does not provide a trustless environment. Blockchain is a layer that facilitates human interactions. Human interactions are still the core or end result, and human interactions cannot be trustless.

Link: The Oracle Problem

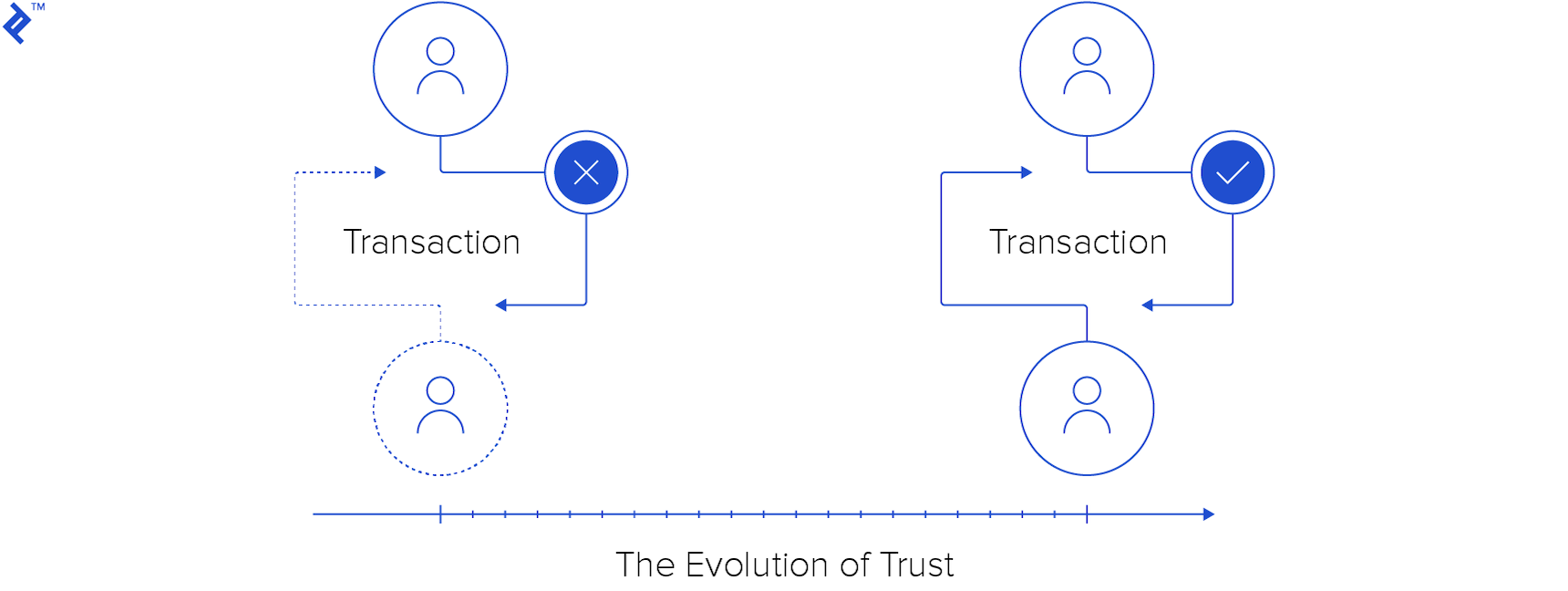

The Evolution of Trust

Back at the dawn of time, how could I trust another human being? Suppose I’m hunting mammoth, and that guy says that he will help me to hunt the mammoth in exchange for half of the meat. How can he trust that I will provide the half? How can I trust that he won’t hit me in the head and take the whole mammoth once the hunt is over?

Back in those times, the threat of violence was likely at the heart of many kinds of socio-economic trust. If I try to steal that guy’s share, I feel sure that he will try to attack me, and that incurs risk for me. Even if I feel confident that I would win in a fight with him, I (as a caveman) know enough about fighting to know that anything can happen, and I could easily sustain a life-threatening injury even if I do technically win. It’s always a risk and an investment of energy. In that world, that could be enough to keep people honest.

In general, I won’t cheat because it’s not in my best interests, overall and on the average, to do so. In other words, the expected outcome of cheating is less than the expected outcome of cooperating.

If that’s not the case, or if the actors perceive that this is not the case, possibly one or more will simply choose not to participate. For example, if I see that the other guy is a 9-foot tall giant with horns and monster teeth, I might just choose to run away and not make any deal; the guy looks too dangerous for me to deal with, he could simply steal what he likes with impunity. If we are roughly equally matched, then we perceive that we are in a situation in which it is in neither party’s best interest to cheat, it is in both of our interests to cooperate, and therefore, assuming that both parties are sane and rational, both parties will cooperate.

As culture developed, so did human interactions. They became less violent, though the implicit threat of force did not go away. Cultural mores gave people greater incentive to cooperate and different types of disincentives to cheat.

Fast forward to a time of early civilization; I am making a bargain to buy 100 bushels of wheat. It’s sort of a primitive futures contract; I am paying today for grain that I will receive when it’s harvested next month. I give the guy my copper coinage, we shake hands, share a drink of barley beer, and part ways until next month for settlement of the contract. Seems ok.

I’ve put myself at that guy’s mercy; he has the full amount of my money, and I have nothing yet. So what makes me feel confident that he will deliver on the contract? A few things. He is a businessman; he regularly does business with the local populace. He has done business with people I know, and he has always delivered fairly and on time. He has a reputation for honesty. Furthermore, I know that he has a disincentive for cheating. He makes a living mainly based on the fact that he is known to be an honest trader. If he were to cheat me, I would let everyone know, and this would damage his good reputation, and thus his business. The amount of money that he makes from cheating me would be small in comparison to the future amount that he could stand to not make if his customer base were to abandon him. So I know that it’s not necessarily in his best interest to cheat me.

This is great; the threat of force or violence is absent from that above picture. Except for two things:

- The threat of force is not fully absent. Another disincentive to cheating could be the thought of what a cheated guy will do. I have weapons, and friends loyal to me. I, as an angry cheated party, could resort to force. That’s how clan wars start!

- There may also be a system of governance of some kind in place, which will assess the details of the case and possibly fine the merchant or put him in jail. Your standard government-backed disincentive is always backed by the threat of force, because the underlying punishment for refusing to pay the fine, refusing to go to jail, refusing to comply with all measures, is ultimately force. And that carries through to the present day. If I refuse to pay a fine, I may be arrested. If I refuse to be arrested, there will be force used against me, and I can be put in jail against my will or even killed (if my resistance is stubborn enough). There we see the threat of force only two steps away, for even a minor infraction!

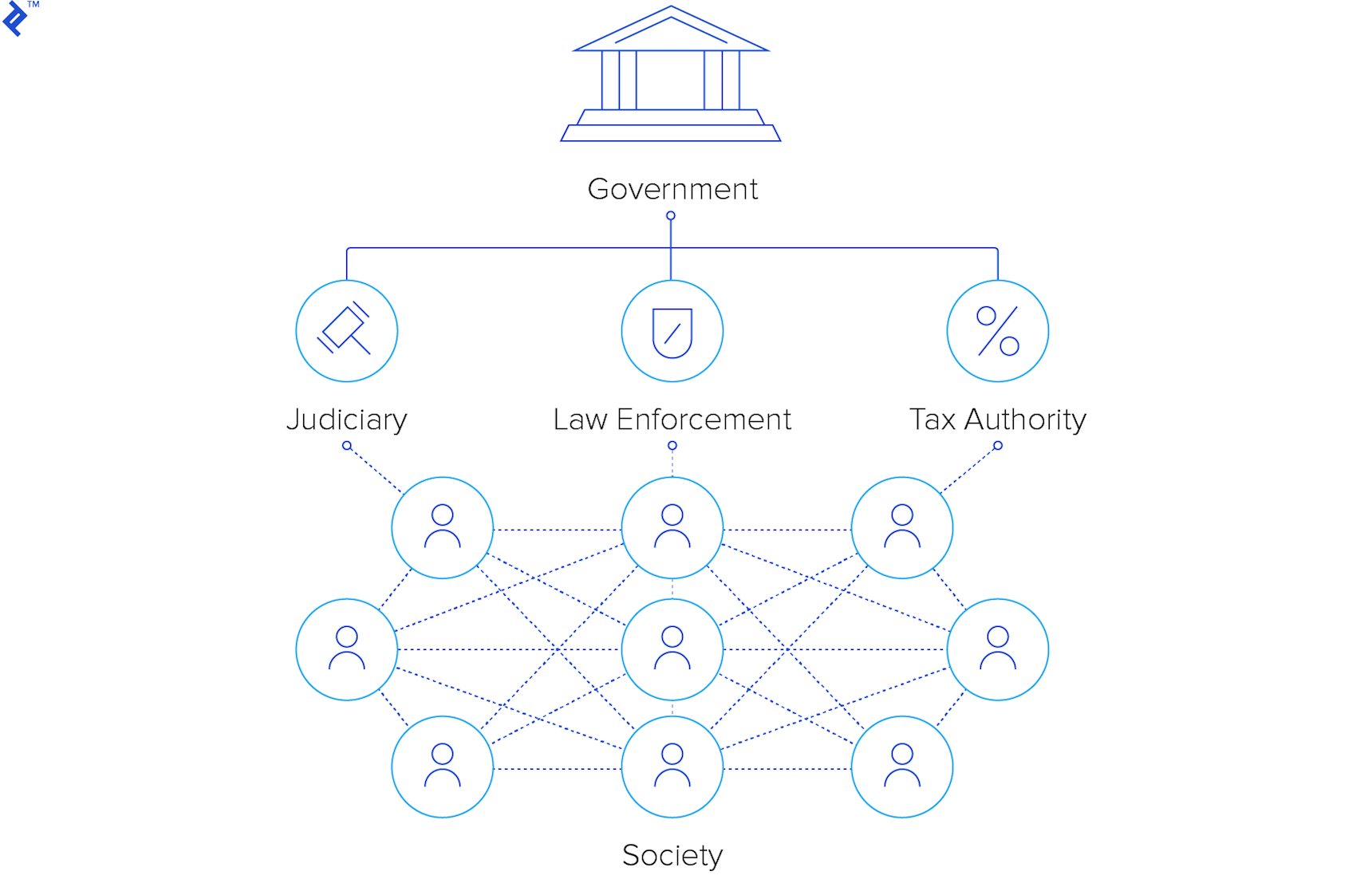

Decentralized Trust Today

Fast-forward to the present day. What are the disincentives for breaking a contract? Are they much different from the previous scenario?

Company X has a mail-in rebate for buying this product. Why do you trust that they will deliver on this? Same as in the previous example; the company has little to gain by cheating on a petty amount, and much to lose by damaging their reputation. This is the basis behind many trust scenarios and has been for a long long time. And again, as in the grain merchant example, there is the threat of force, though in this case, it’s not going to come to that. The company may be fined, or punished by a class-action suit, and the company must pay the fine or face worse punishments. Those punishments are backed by a government, which is in turn backed by the threat of force, both economic and military, though we could possibly consider that the threat of economic force is in turn backed by military force, i.e. violence.

Traditional Systems

The model of contracts backed by government-sanctioned application of force has served humanity well for thousands of years. Or has it? Well, it has, but it’s a natural progression. In the absence of government, groups of people form governments. It almost seems like you can’t stop people from forming governments; they will form.

So, what about blockchain smart contracts? How do blockchain and the smart contract model assure trustability, or disincentivize cheating? Don’t just say “trustlessness”; that’s not an answer! In our previous examples, cheating is disincentivized in some way.

Let’s take a closer look at how the blockchain fulfills (or replaces) that function.

Blockchain Systems: Bitcoin

To break this big question down into smaller questions, let’s start with bitcoin. How does bitcoin disincentivize cheating? I am free to run any bitcoin node software that I want, as long as it appears to adhere to the protocols of the bitcoin network. No one discourages me from running my own homespun bitcoin node that does what it wants while adhering to the network protocols; is there any way in which I can use that for illicit gains?

Sure, I can release any kind of transaction out into the bitcoin network for approval. I can create a transaction that sends all of your bitcoin to me, release it onto the network, wait for it to be added to a block, and wow, now all your bitcoin are belong to me? No, because of cryptography.

I don’t have your private key, and such a transaction must be signed with your private key. So, I am blocked there by cryptography. Or am I? Who says that such a transaction must be signed? What will happen if I do try it? Well, what will happen of course is that the entire bitcoin network will reject my transaction. Why would anyone accept it? Aside from the fact that they’re all running standard nodes, which will reject it out of hand, why would they help me cheat? Doing so will certainly undermine the integrity of the bitcoin network and in doing so undermine their own crypto-wealth. So it makes no sense for them to help me, an anonymous stranger, to cheat another anonymous stranger. Even if one irrational actor somehow accepts my invalid transaction, the vast majority of the bitcoin network will reject it, and it has no chance. It’s beaten again, this time by sheer numbers.
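The signature requirement can be illustrated with a short Solidity sketch. This is an Ethereum-side analogy, not a description of how bitcoin nodes validate transactions: it uses Solidity’s built-in `ecrecover` to check that a signature over a message digest was produced by the key behind a given address. The library name `SignatureCheck` and the function `isSignedBy` are hypothetical, and Solidity 0.8.x is assumed.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

library SignatureCheck {
    /// Returns true only if (v, r, s) is a signature over `digest`
    /// made with the private key behind `expectedSigner`.
    function isSignedBy(
        address expectedSigner,
        bytes32 digest,
        uint8 v,
        bytes32 r,
        bytes32 s
    ) internal pure returns (bool) {
        address recovered = ecrecover(digest, v, r, s);
        // ecrecover yields address(0) when the signature is malformed
        return recovered != address(0) && recovered == expectedSigner;
    }
}
```

Without the matching private key, an attacker has no practical way to produce values of `v`, `r`, and `s` that make this check pass, which is the sense in which the forged transaction is blocked.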

What if, though, I am a powerful mining operation? Surely now I have more power to finagle things to my advantage. I do, but it still doesn’t give me anything close to absolute power. Even as a powerful miner, if I control less than 50% of the network then I can’t do much. I have some power to choose the order in which transactions are added to blocks, but that’s hardly the power to mint or steal coins. Even if I do control more than 50% of the network (assuming here that the reader is aware of the well-known 51% attack in relation to proof-of-work as in bitcoin), my main power would be double-spending. While kind of a cool power, it’s very questionable whether it would be in my best interest to do that, as it would undermine the integrity of bitcoin. It seems likely that I’d be better off using my control to mine all the coins, thus making more money while upholding the ground on which that wealth stands. Thus, I am not beaten, but my impulse to cheat is stymied by a disincentive that’s built into the protocol. And that disincentive is basically supported by strength of numbers: the overwhelming consensus of the participants on the bitcoin network.

Blockchain Smart Contracts and Oracle Contracts

What about smart contracts? Suppose that I deployed a misleading contract to trick people into sending me their money? Or suppose I deployed a betting contract and used one of the attacks (if you can call them that) described earlier? I can do that, and it might fool some people; I, as a dishonest actor, might gain some small profit from such an endeavor. The defense against it would be for each participant to carefully consider (as one should do with any contract) the contract to which they’re about to be a party, and the potential ways in which it can be abused. They should also consider the source: what, if anything, they know about the party that publishes and maintains the contract and any associated oracles or contracts. One would hope that a dishonest contract would not last for long before the network informally flagged it as dishonest, causing participants to voluntarily avoid it, cutting it off. The network is big, and word travels quickly.

Except at some point, you still need to trust a human being. The data to the betting contract is provided by an oracle. The oracle is maintained by a human. No matter how many layers you add on in an attempt to keep the network honest, it still comes back to a human at some point. So what type of oracle would you trust, given our betting example? I would trust an oracle that had more to lose than to gain, by cheating. Example: imagine that ESPN or a similar network were the sponsors of the oracle. You’d expect them, more than anyone, to provide honest data, since illicitly winning a petty amount in a boxing bet would be overshadowed by the resulting loss of reputation. In this case, your trust is well-placed for the same reason that we trust the honest grain merchant. That type of trust arrangement is ancient and well-established.

So, what have we gained in the use of smart contracts? How are smart contracts different from governance or previous methods of upholding contracts?

Wrapping Up

Just to make a point, to give food for thought and discussion, and to wrap up my article, I’m going to present a few simple observations, in place of hard conclusions. Because for a topic of this complexity, a concise conclusion feels like a sort of just-so story and an oversimplification. The observations that I am going to put forth (and please feel free to discuss/refute/contradict them) are these:

- Trust based on the assumption that the other party has more to gain from cooperating than from cheating is ancient, works in practical situations, and has not gone away. It is still inherent in certain cases in the blockchain world, though perhaps eliminated in many cases. In the case of our oracle example, it is still alive and well.

- Trust based on threat of force or violence has also been inherent in human society since time immemorial, but is remarkably absent from our smart contract model, and has been replaced by enforcement via clever combinations of cryptography and large-numbers consensus.

I challenge fellow Ethereum developers to do two things:

- Think of any way, in the systems of public blockchains (such as bitcoin or ethereum), in which anything is enforced by either the implicit or explicit threat of force.

- Think of any major rule system in modern contract or financial law, which is not in some way enforced by either the explicit or implicit threat of force.

Something Old, Something New

And I think here we’ve arrived at the main difference, and indeed the true reason why we say that blockchain systems are “revolutionary”, in comparison to the systems of the past. In my way of thinking, it’s not trustlessness at all, but rather a more stable platform for trust and - most notably - one that is not at all reliant on threat of force or violence.

On the one hand, we have the old and time-tested assurance of mutual benefit in situations in which there is a lack of incentive for cheating. That’s nothing new. What’s new is the introduction of cryptography-assisted consensus, which helps to discourage cheating and keep the system honest. And the synthesis of these two elements has produced something truly remarkable, possible for the first time in human history: a system that is usable for large, anonymous groups, in which nowhere is found the explicit or implicit threat of force as a disincentive or punishment. And that, I believe, is what is truly amazing. If that aspect is overlooked, what we have is a nifty new technology (which, I admit, is cool enough as it is). But when that aspect is considered, it’s apparent that we’ve entered a new era of governance.

Understanding the basics

What is a smart contract?

A smart contract is computer code that gets executed on top of the Ethereum Virtual Machine. Smart contracts can send and accept ether and data. Contracts are immutable in nature, unless programmed otherwise.

What is an oracle in blockchain?

A so-called blockchain oracle is a trusted data source that provides information about various states and occurrences that are used by smart contracts.

What is a smart contract oracle used for?

Smart contract oracles are used to provide a link between real-world events and digital contracts. This external data provided by oracles may (or may not) trigger smart contract executions.

John R. Kosinski

Chiang Mai, Thailand

Member since February 9, 2016
