Gulp Under the Hood: Building a Stream-based Task Automation Tool

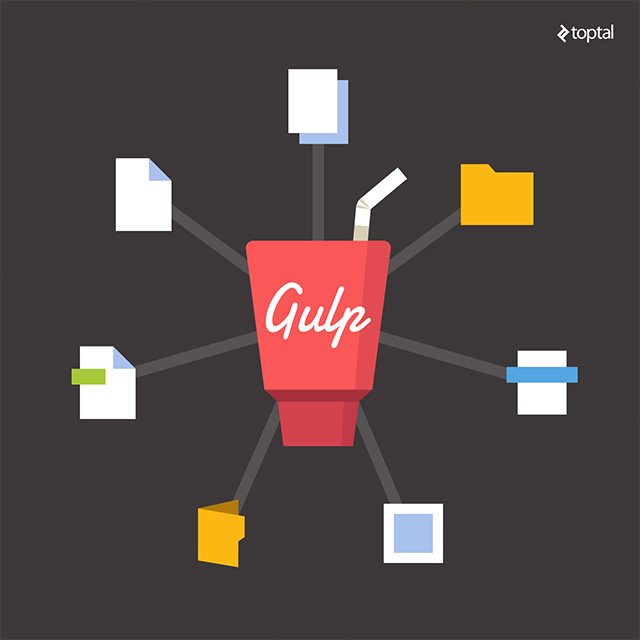

Streams are a powerful construct in Node.js and in I/O driven programming in general. Gulp, a tool for task automation, leverages streams in elegant ways to allow developers to enhance their build workflow.

In this article, Toptal engineer Mikhail Angelov gives us some insight into how Gulp works with streams by showing us step-by-step how to build a Gulp-like build automation tool.

Mikhail holds a Master’s in Physics. He’s run the gamut with Node.js, Go, JavaScript SPAs, React.js, Flux/Redux, RIOT.js, and AngularJS.

Front-end developers nowadays use multiple tools to automate routine operations. Three of the most popular solutions are Grunt, Gulp and Webpack. Each of these tools is built on a different philosophy, but they share the same goal: to streamline the front-end build process. For example, Grunt is configuration-driven, while Gulp enforces almost nothing. Instead, Gulp relies on the developer writing code to implement the flow of the build process, that is, the various build tasks.

When it comes to choosing one of these tools, my personal favorite is Gulp. All in all it’s a simple, fast and reliable solution. In this article we will see how Gulp works under the hood by taking a stab at implementing our very own Gulp-like tool.

Gulp API

Gulp comes with just four simple functions:

- gulp.task

- gulp.src

- gulp.dest

- gulp.watch

These four simple functions, in various combinations, offer all the power and flexibility of Gulp. In version 4.0, Gulp introduced two new functions: gulp.series and gulp.parallel. These APIs allow tasks to be run in series or in parallel.

Out of these four functions, the first three are absolutely essential for any gulpfile, allowing tasks to be defined and invoked from the command line interface. The fourth one is what makes Gulp truly automatic by allowing tasks to be run when files change.

Gulpfile

This is an elementary gulpfile:

var gulp = require('gulp');

gulp.task('test', function(){

return gulp.src('test.txt')

.pipe(gulp.dest('out'));

});

It describes a simple test task. When invoked, the file test.txt in the current working directory should be copied to the directory ./out. Give it a try by running Gulp:

touch test.txt # Create test.txt

gulp test

Notice that the .pipe method is not part of Gulp. It belongs to the Node.js stream API, and it connects a readable stream (generated by gulp.src('test.txt')) with a writable stream (generated by gulp.dest('out')). All communication between Gulp and its plugins is based on streams, which is what lets us write gulpfile code in such an elegant way.
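To make the role of .pipe concrete, here is a minimal sketch that uses only Node's built-in stream module, with no Gulp involved. The names source, upperCase and sink are made up for this illustration; upperCase plays the role a plugin would play between src and dest.

```javascript
var stream = require('stream');

// Readable side: emits two text chunks, then signals end-of-stream.
var source = new stream.Readable({
  read: function () {
    this.push('hello ');
    this.push('plug');
    this.push(null);
  }
});

// A plugin-like step: a Transform that upper-cases whatever passes through.
var upperCase = new stream.Transform({
  transform: function (chunk, encoding, next) {
    next(null, chunk.toString().toUpperCase());
  }
});

// Writable side: collects the chunks it receives.
var collected = [];
var sink = new stream.Writable({
  write: function (chunk, encoding, next) {
    collected.push(chunk.toString());
    next();
  }
});

sink.on('finish', function () {
  console.log(collected.join(''));
});

// .pipe returns the stream passed to it, which is what makes chaining possible.
source.pipe(upperCase).pipe(sink);
```

Because each .pipe call returns its destination, any number of intermediate steps can be chained between the first readable stream and the final writable one.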

Meet Plug

Now that we have some idea of how Gulp works, let’s build our own Gulp-like tool: Plug.

We will start with the plug.task API. It should let us register tasks and execute a task when its name is passed as a command-line argument.

var plug = {

task: onTask

};

module.exports = plug;

var tasks = {};

function onTask(name, callback){

tasks[name] = callback;

}

This will allow tasks to be registered. Now we need to make this task executable. To keep things simple, we will not make a separate task launcher. Instead we will include it in our plug implementation.

All we need to do is run the task named on the command line. We also need to make sure we do it only after the plugfile has finished registering all of its tasks. The easiest way is to run the task from a timeout callback or, preferably, from process.nextTick, whose callback runs as soon as the currently executing synchronous code completes:

process.nextTick(function(){

var taskName = process.argv[2];

if (taskName && tasks[taskName]) {

tasks[taskName]();

} else {

console.log('unknown task', taskName)

}

});
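The ordering guarantee this relies on can be checked in isolation. The sketch below is not part of Plug; the tasks and log variables are stand-ins for illustration. It schedules the "launcher" first and registers a task afterwards, yet the launcher still sees the task.

```javascript
var tasks = {};
var log = [];

// Scheduled first, but runs only after the synchronous code below has finished.
process.nextTick(function () {
  log.push('launcher sees: ' + Object.keys(tasks).join(', '));
  console.log(log.join('\n'));
});

// Registration happens later in the file, yet earlier in time.
tasks.test = function () {};
log.push('registered: test');
```

This is why requiring plug at the top of plugfile.js is safe: every plug.task call in the file completes before the launcher looks up the task name.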

Compose plugfile.js like this:

var plug = require('./plug');

plug.task('test', function(){

console.log('hello plug');

})

… and run it.

node plugfile.js test

It will display:

hello plug

Subtasks

Gulp also allows subtasks to be defined at task registration. In this case, plug.task should take three parameters: the name, an array of subtask names, and a callback function. Let’s implement this.

We will need to update the task API as such:

var tasks = {};

function onTask(name) {

if(Array.isArray(arguments[1]) && typeof arguments[2] === "function"){

tasks[name] = {

subTasks: arguments[1],

callback: arguments[2]

};

} else if(typeof arguments[1] === "function"){

tasks[name] = {

subTasks: [],

callback: arguments[1]

};

} else{

console.log('invalid task registration')

}

}

function runTask(name){

if(tasks[name].subTasks){

tasks[name].subTasks.forEach(function(subTaskName){

runTask(subTaskName);

});

}

if(tasks[name].callback){

tasks[name].callback();

}

}

process.nextTick(function(){

var taskName = process.argv[2];

if (taskName && tasks[taskName]) {

runTask(taskName);

} else {

console.log('unknown task', taskName);

}

});

Now if our plugfile.js looks like this:

plug.task('subTask1', function(){

console.log('from sub task 1');

})

plug.task('subTask2', function(){

console.log('from sub task 2');

})

plug.task('test', ['subTask1', 'subTask2'], function(){

console.log('hello plug');

})

… running it

node plugfile.js test

… should display:

from sub task 1

from sub task 2

hello plug

Note that Gulp runs subtasks in parallel. But to keep things simple, in our implementation we are running subtasks sequentially. Gulp 4.0 allows this to be controlled using its two new API functions, which we will implement later in this article.
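The sequential, depth-first order can be traced with a small standalone sketch. It is a simplified variant of the code above, not a replacement: the task helper and the log array are hypothetical, and the check for unregistered subtasks is an addition the original runTask does not have.

```javascript
var tasks = {};
var log = [];

// Simplified registration: always takes a name, a subtask list and a callback.
function task(name, subTasks, callback) {
  tasks[name] = { subTasks: subTasks, callback: callback };
}

// Depth-first: every subtask (and its own subtasks) runs before the parent's callback.
function runTask(name) {
  var info = tasks[name];
  if (!info) {
    // Added for illustration: report a missing subtask instead of throwing.
    log.push('unknown task ' + name);
    return;
  }
  info.subTasks.forEach(function (subTaskName) {
    runTask(subTaskName);
  });
  info.callback();
}

task('lint', [], function () { log.push('lint'); });
task('build', ['lint', 'missing'], function () { log.push('build'); });
task('test', ['build'], function () { log.push('test'); });

runTask('test');
console.log(log.join(' -> '));
```

Note that this ordering only holds for synchronous callbacks. As soon as a task does asynchronous work, such as piping streams, the parent callback can run before its subtasks have actually finished.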

Source and Destination

Plug will be of little use if we don’t allow files to be read and written to. So next we will implement plug.src. This method in Gulp expects an argument that is either a file mask, a filename or an array of file masks. It returns a readable Node stream.

For now, in our implementation of src, we will just allow filenames:

var plug = {

task: onTask,

src: onSrc

};

var stream = require('stream');

var fs = require('fs');

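function onSrc(path){

var src = new stream.Readable({

// data is pushed from the readFile callback below, so read() is a no-op

read: function (size) {

},

objectMode: true

});

//read file and send it to the stream

fs.readFile(path, 'utf8', (e,data)=> {

if (e) return src.emit('error', e); // report read errors on the stream

src.push({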

name: path,

buffer: data

});

src.push(null);

});

return src;

}

Note that we pass objectMode: true, an optional parameter. By default, Node streams carry binary data (Buffers and strings). To pass JavaScript objects through a stream, we have to enable this parameter.
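The difference between the two modes can be observed directly. In this sketch (the seen, bytes, objects and file names are made up for the example), a default-mode writable receives a string as a Buffer, while an object-mode writable receives the very same object that was written.

```javascript
var stream = require('stream');

var seen = {};

// Default mode: a written string reaches write() as a Buffer.
var bytes = new stream.Writable({
  write: function (chunk, encoding, next) {
    seen.defaultModeGotBuffer = Buffer.isBuffer(chunk);
    next();
  }
});
bytes.write('hello');

// Object mode: the chunk is passed through untouched, by reference.
var objects = new stream.Writable({
  objectMode: true,
  write: function (chunk, encoding, next) {
    seen.objectModeChunk = chunk;
    next();
  }
});
var file = { name: 'test.txt', buffer: 'hello' };
objects.write(file);

setImmediate(function () {
  console.log('default mode got a Buffer:', seen.defaultModeGotBuffer);
  console.log('object mode got the same object:', seen.objectModeChunk === file);
});
```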

As you can see, we created an artificial object:

{

name: path, //file name

buffer: data //file content

}

… and passed it into the stream.

On the other end, the plug.dest method should receive a target folder name and return a writable stream that receives objects from the .src stream. As soon as a file object is received, it is stored in the target folder. Like onSrc, the function has to be registered on the plug object (dest: onDest).

function onDest(path){

var writer = new stream.Writable({

write: function (chunk, encoding, next) {

if (!fs.existsSync(path)) fs.mkdirSync(path);

fs.writeFile(path +'/'+ chunk.name, chunk.buffer, (e)=> {

next(e); // pass any write error on to the stream

});

},

objectMode: true

});

return writer;

}
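As a hedged variation on onDest, the sketch below builds the target path with path.join and creates the folder with the recursive option of fs.mkdir, which assumes Node 10.12 or later. The name destFor is hypothetical, and the temporary folder is only there so the example can run anywhere without touching the project directory.

```javascript
var fs = require('fs');
var os = require('os');
var path = require('path');
var stream = require('stream');

// Hypothetical variant of onDest; destFor is not a name from the article.
function destFor(dir) {
  return new stream.Writable({
    objectMode: true,
    write: function (file, encoding, next) {
      // recursive: true creates missing parent folders and succeeds if dir already exists
      fs.mkdir(dir, { recursive: true }, function (err) {
        if (err) return next(err);
        fs.writeFile(path.join(dir, file.name), file.buffer, next);
      });
    }
  });
}

// Usage: write one artificial file object into a fresh temporary folder.
var tmpRoot = fs.mkdtempSync(path.join(os.tmpdir(), 'plug-'));
var outDir = path.join(tmpRoot, 'nested', 'out');
var writer = destFor(outDir);

writer.on('finish', function () {
  console.log(fs.readFileSync(path.join(outDir, 'test.txt'), 'utf8'));
});

writer.end({ name: 'test.txt', buffer: 'hello plug' });
```

Passing next straight to fs.writeFile means a failed write surfaces as an 'error' event on the stream instead of being silently dropped.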

Let us update our plugfile.js:

var plug = require('./plug');

plug.task('test', function(){

plug.src('test.txt')

.pipe(plug.dest('out'))

})

… create test.txt

touch test.txt

… and run it:

node plugfile.js test

ls ./out

test.txt should be copied to the ./out folder.

Gulp itself works about the same way, but instead of our artificial file objects it uses vinyl objects. It is much more convenient, as it contains not just the filename and content but additional meta information as well, such as the current folder name, full path to file, and so on. It may not contain the entire content buffer, but it has a readable stream of the content instead.

Vinyl: Better Than Files

There is an excellent library, vinyl-fs, that lets us manipulate files represented as vinyl objects. It essentially lets us create readable and writable streams based on file masks.

We can rewrite the plug functions using the vinyl-fs library. But first we need to install it:

npm i vinyl-fs

With this installed, our new Plug implementation will look something like this:

var vfs = require('vinyl-fs')

function onSrc(fileName){

return vfs.src(fileName);

}

function onDest(path){

return vfs.dest(path);

}

// ...

… and to try it out:

rm out/test.txt

node plugfile.js test

ls out/test.txt

The results should still be the same.

Gulp Plugins

Since our Plug service uses Gulp stream convention, we can use native Gulp plugins together with our Plug tool.

Let’s try one out. Install gulp-rename:

npm i gulp-rename

… and update plugfile.js to use it:

var plug = require('./plug');

var rename = require('gulp-rename');

plug.task('test', function () {

return plug.src('test.txt')

.pipe(rename('renamed.txt'))

.pipe(plug.dest('out'));

});

Running plugfile.js now should copy the file again, this time under its new name:

node plugfile.js test

ls out/renamed.txt

Monitoring Changes

Last but not least is gulp.watch. This method allows us to register a file listener and invoke registered tasks when files change. Let’s implement it:

var plug = {

task: onTask,

src: onSrc,

dest: onDest,

watch: onWatch

};

function onWatch(fileName, taskName){

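fs.watchFile(fileName, (curr, prev) => {

// fs.watchFile passes the current and previous fs.Stats objects

if (curr.mtime > prev.mtime) {

// tasks[taskName] is an object by now, so go through runTask

runTask(taskName);

}

});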

}

To try it out, add this line to plugfile.js:

plug.watch('test.txt','test');

Now on each change of test.txt, the file will be copied into the out folder with its name changed.

Series vs Parallel

Now that all the fundamental functions of Gulp’s API are implemented, let’s take things one step further. As mentioned earlier, Gulp 4.0 adds two more API functions that make Gulp more powerful:

- gulp.parallel

- gulp.series

These methods allow the user to control the sequence in which tasks are run. gulp.parallel registers subtasks to run in parallel, which is how Gulp behaved before 4.0. gulp.series, on the other hand, runs subtasks sequentially, one after another.

Assume we have test1.txt and test2.txt in the current folder. In order to copy those files to out folder in parallel let us make a plugfile:

var plug = require('./plug');

plug.task('subTask1', function(){

return plug.src('test1.txt')

.pipe(plug.dest('out'))

})

plug.task('subTask2', function(){

return plug.src('test2.txt')

.pipe(plug.dest('out'))

})

plug.task('test-parallel', plug.parallel(['subTask1', 'subTask2']), function(){

console.log('done')

})

plug.task('test-series', plug.series(['subTask1', 'subTask2']), function(){

console.log('done')

})

To simplify the implementation, each subtask callback returns its stream. This will help us track the stream life cycle.
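One way to track a returned stream, sketched here under assumptions, is a small helper that fires a callback the first time the stream reports that it is done or has failed. The name whenDone is hypothetical and is not part of Gulp or Plug. It listens for 'end' (readable side finished), 'finish' (writable side finished) and 'error', and guards against being called twice.

```javascript
var stream = require('stream');

// Hypothetical helper: calls cb exactly once, on the first of 'end', 'finish' or 'error'.
function whenDone(s, cb) {
  var called = false;
  function once(err) {
    if (called) return;
    called = true;
    cb(err || null);
  }
  s.on('end', function () { once(); });
  s.on('finish', function () { once(); });
  s.on('error', once);
}

// Usage: a PassThrough emits both 'finish' and 'end', but the callback fires once.
var calls = 0;
var passThrough = new stream.PassThrough();

whenDone(passThrough, function (err) {
  calls += 1;
  console.log('task finished, error:', err);
});

passThrough.resume();     // drain the readable side so 'end' can fire
passThrough.end('data');  // close the writable side
```

The guard matters for duplex streams, which emit both events. Without it, a task launcher would report the same task as complete twice.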

We will begin amending our API:

var plug = {

task: onTask,

src: onSrc,

dest: onDest,

parallel: onParallel,

series: onSeries

};

We will need to update onTask function as well, since we need to add additional task meta information to help our task launcher deal with subtasks properly.

function onTask(name, subTasks, callback){

if(arguments.length < 2){

console.error('invalid task registration',arguments);

return;

}

if(arguments.length === 2){

if(typeof arguments[1] === 'function'){

callback = subTasks;

subTasks = {series: []};

}

}

tasks[name] = subTasks;

tasks[name].callback = function(){

if(callback) return callback();

};

}

function onParallel(tasks){

return {

parallel: tasks

};

}

function onSeries(tasks){

return {

series: tasks

};

}

To keep things simple, we will use async.js, a utility library for dealing with asynchronous functions to run tasks in parallel or in series:

var async = require('async')

function _processTask(taskName, callback){

var taskInfo = tasks[taskName];

console.log('task ' + taskName + ' is started');

var subTaskNames = taskInfo.series || taskInfo.parallel || [];

var subTasks = subTaskNames.map(function(subTask){

return function(cb){

_processTask(subTask, cb);

}

});

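if(subTasks.length>0){

// run the task's own callback once all subtasks finish, then notify the caller

var done = function(err){

taskInfo.callback();

if(callback) callback(err);

};

if(taskInfo.series){

async.series(subTasks, done);

}else{

async.parallel(subTasks, done);

}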

}else{

var stream = taskInfo.callback();

if(stream){

stream.on('end', function(){

console.log('stream ' + taskName + ' is ended');

callback()

})

}else{

console.log('task ' + taskName +' is completed');

callback();

}

}

}

We rely on the stream’s 'end' event, which is emitted once the stream has no more data to deliver, as the indication that a subtask is complete. With async.js, we do not have to deal with a big mess of callbacks.
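To see what the two async.js calls contribute, here is a hand-rolled sketch of the same two strategies without the library. runSeries and runParallel are simplified stand-ins written for this illustration, not the async.js implementations, and fakeTask uses timers in place of real streams.

```javascript
// Simplified stand-in for async.series: start each function only after the previous one calls back.
function runSeries(fns, done) {
  var i = 0;
  (function next(err) {
    if (err || i === fns.length) return done(err || null);
    fns[i++](next);
  })();
}

// Simplified stand-in for async.parallel: start everything at once, finish when all have called back.
function runParallel(fns, done) {
  var pending = fns.length;
  var failed = false;
  if (pending === 0) return done(null);
  fns.forEach(function (fn) {
    fn(function (err) {
      if (failed) return;
      if (err) { failed = true; return done(err); }
      if (--pending === 0) done(null);
    });
  });
}

// A fake task that records when it starts and ends; "slow" takes longer than "fast".
function fakeTask(name, ms, log) {
  return function (cb) {
    log.push(name + ' started');
    setTimeout(function () { log.push(name + ' ended'); cb(); }, ms);
  };
}

var seriesLog = [];
var parallelLog = [];

runSeries([fakeTask('slow', 40, seriesLog), fakeTask('fast', 5, seriesLog)], function () {
  console.log('series:  ', seriesLog.join(', '));
});

runParallel([fakeTask('slow', 40, parallelLog), fakeTask('fast', 5, parallelLog)], function () {
  console.log('parallel:', parallelLog.join(', '));
});
```

In series, the fast task cannot start until the slow one has ended. In parallel, both start immediately and the fast one ends first, which mirrors the kind of interleaving the log output of the two Plug tasks shows.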

To try it out, let us first run the subtasks in parallel:

node plugfile.js test-parallel

task test-parallel is started

task subTask1 is started

task subTask2 is started

stream subTask2 is ended

stream subTask1 is ended

done

And run the same subtasks in series:

node plugfile.js test-series

task test-series is started

task subTask1 is started

stream subTask1 is ended

task subTask2 is started

stream subTask2 is ended

done

Conclusion

That’s it: we have implemented Gulp’s API and can now use Gulp plugins. Of course, do not use Plug in real projects, as Gulp is more than just what we have implemented here. I hope this little exercise helps you understand how Gulp works under the hood and lets you use it, and extend it with plugins, more fluently.

Nizhny Novgorod, Nizhny Novgorod Oblast, Russia

Member since July 6, 2015
