Picasso: How to Test a Component Library

Testing can be a daunting task even for experienced teams with an abundance of resources. How do Toptal developers write tests and what do they use?

In this article, Toptal React Developer Boris Yordanov introduces you to Picasso, a component library designed by our developers for in-house use.

Boris works mainly with vanilla JavaScript and the most popular JavaScript frameworks like Angular, React, and Meteor.

A new version of Toptal’s design system was released recently, which required changes to almost every component in Picasso, our in-house component library. Our team faced a challenge: How do we ensure that regressions don’t happen?

The short answer is, rather unsurprisingly, tests. Lots of tests.

We will not review the theoretical aspects of testing nor discuss different types of tests, their usefulness, or explain why you should test your code in the first place. Our blog and others have already covered those topics. Instead, we will focus solely on the practical aspects of testing.

Read on to learn how developers at Toptal write tests. Our repository is public, so we use real-world examples. There aren’t any abstractions or simplifications.

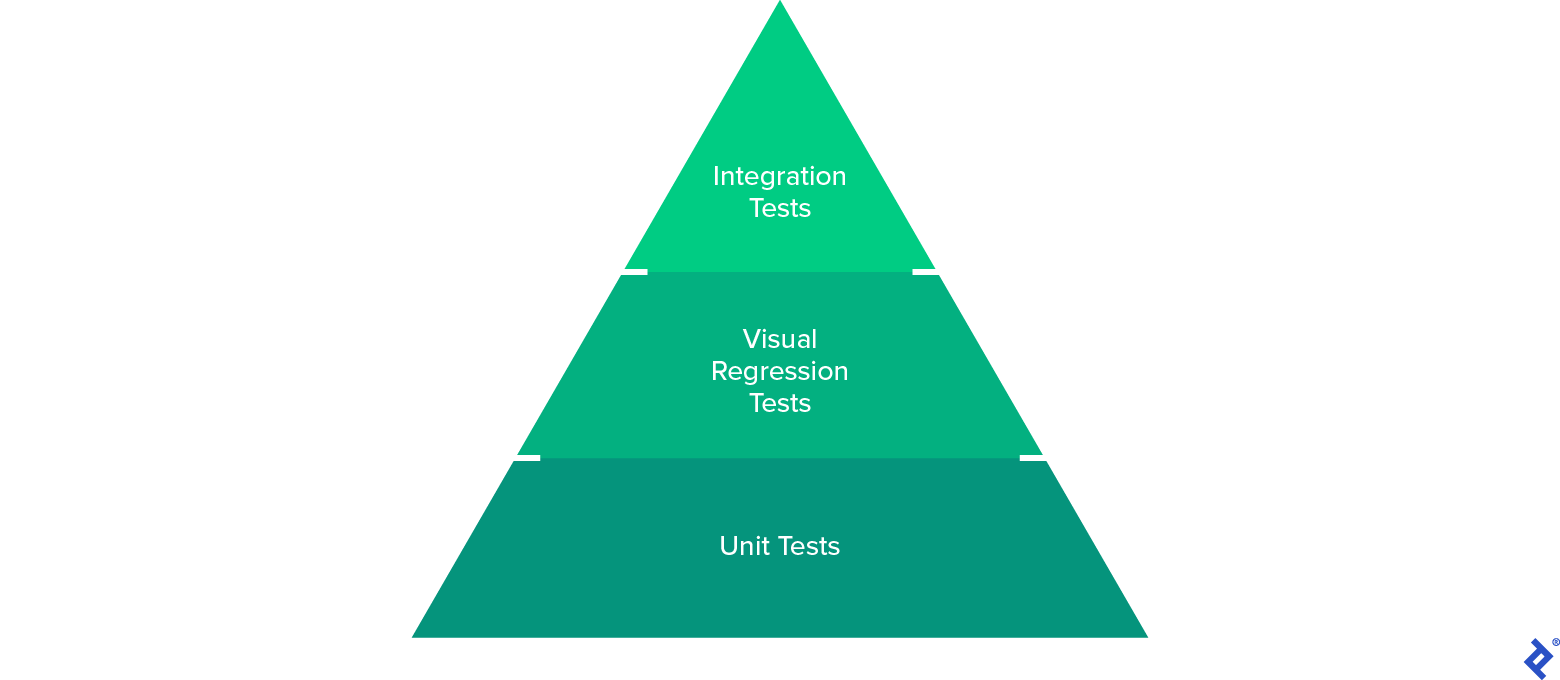

Testing Pyramid

We don’t have a testing pyramid defined, per se, but if we did it would look like this:

Toptal’s testing pyramid illustrates the tests we emphasize.

Unit Tests

Unit tests are straightforward to write and easy to run. If you have very little time to write tests, they should be your first choice.

However, they are not perfect. Regardless of which testing library you choose (Jest and React Testing Library [RTL] in our case), unit tests won’t run against a real DOM, so you can’t check functionality in different browsers. What they do allow is stripping away complexity and testing the simple building blocks of your library.

Unit tests don’t just add value by testing the behavior of the code but also by checking the overall testability of the code. If you can’t write unit tests easily, chances are you have bad code.

Visual Regression Tests

Even if you have 100% unit test coverage, that doesn’t mean the components look good across devices and browsers.

Visual regressions are particularly difficult to spot with manual testing. For example, if a button’s label is moved by 1px, will a QA engineer even notice? Thankfully, there are many solutions to this problem of limited visibility. You can opt for enterprise-grade all-in-one solutions such as LambdaTest or Mabl. You can incorporate plugins, like Percy, into your existing tests, as well as DIY solutions from the likes of Loki or Storybook (which is what we used prior to Picasso). They all have drawbacks: Some are too expensive while others have a steep learning curve or require too much maintenance.

Happo to the rescue! It’s a direct competitor to Percy, but it’s much cheaper, supports more browsers, and is easier to use. Another big selling point? It supports Cypress integration, which was important because we wanted to move away from using Storybook for visual testing. We found ourselves in situations where we had to create stories just so we could ensure visual test coverage, not because we needed to document that use case. That polluted our docs and made them more difficult to understand. We wanted to isolate visual testing from visual documentation.

Integration Tests

Even if two components have unit and visual tests, that’s no guarantee that they’ll work together. For example, we found a bug where a tooltip doesn’t open when used in a dropdown item yet works well when used on its own.

To ensure components integrate well, we used Cypress’s experimental component testing feature. At first, we were dissatisfied with the poor performance, but we were able to improve it with a custom webpack configuration. The result? We were able to use Cypress’s excellent API to write performant tests that ensure our components work well together.

Applying the Testing Pyramid

What does all this look like in real life? Let’s test the Accordion component!

Your first instinct might be to open your editor and start writing code. My advice? Spend some time understanding all the features of the component and write down what test cases you want to cover.

What to Test?

Here’s a breakdown of the cases our tests should cover:

- States – An Accordion can be expanded and collapsed, its default state can be configured, and it can be disabled

- Styles – Accordions can have border variations

- Content – They can integrate with other units of the library

- Customization – The component can have its styles overridden and can have custom expand icons

- Callbacks – Every time the state changes, a callback can be invoked

How to Test?

Now that we know what we have to test, let’s consider how to go about it. We have three options from our testing pyramid. We want to achieve maximum coverage with minimal overlap between the sections of the pyramid. What’s the best way to test each test case?

- States – Unit tests can help us assess if the states change accordingly, but we also need visual tests to make sure the component is rendered correctly in each state

- Styles – Visual tests should suffice to detect regressions of the different variants

- Content – A combination of visual and integration tests is the best choice, as Accordions can be used in combination with many other components

- Customization – We can use a unit test to verify if a class name is applied correctly, but we need a visual test to make sure that the component and custom styles work in tandem

- Callbacks – Unit tests are ideal for ensuring the right callbacks are invoked
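It can help to write this plan down as data before writing any tests, so that gaps and overlaps are easy to see. The sketch below only restates the list above; the names `TestLayer`, `accordionTestPlan`, and `casesFor` are illustrative and are not part of Picasso.

```typescript
// Hypothetical names throughout; this only restates the plan above as data.
type TestLayer = 'unit' | 'visual' | 'integration'
type TestCase = 'states' | 'styles' | 'content' | 'customization' | 'callbacks'

const accordionTestPlan: Record<TestCase, TestLayer[]> = {
  states: ['unit', 'visual'],
  styles: ['visual'],
  content: ['visual', 'integration'],
  customization: ['unit', 'visual'],
  callbacks: ['unit']
}

// Lists the test cases that a given layer of the pyramid is responsible for.
function casesFor(layer: TestLayer): TestCase[] {
  return (Object.keys(accordionTestPlan) as TestCase[]).filter(
    testCase => accordionTestPlan[testCase].indexOf(layer) !== -1
  )
}
```

Calling `casesFor('integration')` returns only `content`, which reflects how narrow the integration layer is meant to be for this component.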

The Accordion Testing Pyramid

Unit Tests

The full suite of unit tests can be found here. We’ve covered all the state changes, the customization, and callbacks:

    it('toggles', async () => {
      const handleChange = jest.fn()
      const { getByText, getByTestId } = renderAccordion({
        onChange: handleChange,
        expandIcon: <span data-testid='trigger' />
      })

      // Expand by clicking the summary
      fireEvent.click(getByTestId('accordion-summary'))
      await waitFor(() => expect(getByText(DETAILS_TEXT)).toBeVisible())

      // Collapse by clicking the custom expand icon
      fireEvent.click(getByTestId('trigger'))
      await waitFor(() => expect(getByText(DETAILS_TEXT)).not.toBeVisible())

      // Expand again by clicking the summary text
      fireEvent.click(getByText(SUMMARY_TEXT))
      await waitFor(() => expect(getByText(DETAILS_TEXT)).toBeVisible())

      // Each of the three toggles should have invoked the callback
      expect(handleChange).toHaveBeenCalledTimes(3)
    })

Visual Regression Tests

The visual tests are located in this Cypress describe block. The screenshots can be found in Happo’s dashboard.

You can see all the different component states, variants, and customizations have been recorded. Every time a PR is opened, CI compares the screenshots that Happo has stored to the ones taken in your branch:

    it('renders', () => {
      mount(
        <TestingPicasso>
          <TestAccordion />
        </TestingPicasso>
      )

      cy.get('body').happoScreenshot()
    })

    it('renders disabled', () => {
      mount(
        <TestingPicasso>
          <TestAccordion disabled />
          <TestAccordion expandIcon={<Check16 />} />
        </TestingPicasso>
      )

      cy.get('body').happoScreenshot()
    })

    it('renders border variants', () => {
      mount(
        <TestingPicasso>
          <TestAccordion borders='none' />
          <TestAccordion borders='middle' />
          <TestAccordion borders='all' />
        </TestingPicasso>
      )

      cy.get('body').happoScreenshot()
    })
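The comparison step can be pictured as a per-pixel diff between the stored screenshot and the one taken in the branch. This article does not describe how Happo compares images, so the following is a generic sketch and not Happo’s algorithm; `ScreenshotImage`, `diffRatio`, and the `tolerance` parameter are assumptions made for illustration.

```typescript
// Generic illustration of screenshot comparison; NOT Happo's actual algorithm.
// A screenshot is modelled as RGBA values, four per pixel.
interface ScreenshotImage {
  width: number
  height: number
  pixels: number[] // length = width * height * 4
}

// Returns the fraction of pixels (0..1) whose colour differs by more than
// `tolerance` in any channel. Images of different sizes count as fully different.
function diffRatio(
  baseline: ScreenshotImage,
  candidate: ScreenshotImage,
  tolerance = 0
): number {
  if (
    baseline.width !== candidate.width ||
    baseline.height !== candidate.height
  ) {
    return 1
  }

  const pixelCount = baseline.width * baseline.height
  if (pixelCount === 0) {
    return 0
  }

  let changed = 0
  for (let pixel = 0; pixel < pixelCount; pixel++) {
    for (let channel = 0; channel < 4; channel++) {
      const index = pixel * 4 + channel
      const delta = Math.abs(baseline.pixels[index] - candidate.pixels[index])
      if (delta > tolerance) {
        changed++
        break // count each pixel at most once
      }
    }
  }

  return changed / pixelCount
}
```

A check built on this idea would flag the pull request whenever the ratio is above zero (or above some agreed threshold) and ask a reviewer to accept or reject the change.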

Integration Tests

We wrote a “bad path” test in this Cypress describe block that asserts the Accordion still functions correctly and that users can interact with the custom component. We also added visual assertions for extra confidence:

    describe('Accordion with custom summary', () => {
      it('closes and opens', () => {
        mount(<AccordionCustomSummary />)

        toggleAccordion()
        getAccordionContent().should('not.be.visible')
        cy.get('[data-testid=accordion-custom-summary]').happoScreenshot()

        toggleAccordion()
        getAccordionContent().should('be.visible')
        cy.get('[data-testid=accordion-custom-summary]').happoScreenshot()
      })

      // …
    })

Continuous Integration

Picasso relies almost entirely on GitHub Actions for QA. Additionally, we added Git hooks for code quality checks of staged files. We recently migrated from Jenkins to GHA, so our setup is still in the MVP stage.

The workflow runs on every change to the remote branch, in sequential order. The integration and visual tests are the last stage because they are the most expensive to run (both in terms of performance and monetary cost). Unless all tests complete successfully, the pull request cannot be merged.

These are the stages GitHub Actions goes through every time:

- Dependency installation

- Version control – Validates that commit messages and the PR title follow the Conventional Commits format

- Lint – ESLint checks code quality

- TypeScript compilation – Verifies there are no type errors

- Package compilation – If the packages can’t be built, then they won’t be released successfully; our Cypress tests also expect compiled code

- Unit tests

- Integration and visual tests

The full workflow can be found here. Currently, it takes less than 12 minutes to complete all the stages.
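The ordering logic (cheap checks first, expensive ones last) can be modelled in a few lines. This is not the actual workflow, which is a GitHub Actions configuration file; `Stage`, `runPipeline`, and the stubbed checks are hypothetical, and the sketch assumes that a failed stage stops the run.

```typescript
// Hypothetical model of the pipeline; the real workflow is a GitHub Actions file.
interface Stage {
  name: string
  run: () => boolean // true when the stage passes
}

interface PipelineResult {
  passed: boolean
  executed: string[] // names of the stages that actually ran
}

// Runs stages in order and stops at the first failure, so the expensive
// stages at the end never start when a cheaper one has already failed.
function runPipeline(stages: Stage[]): PipelineResult {
  const executed: string[] = []

  for (const stage of stages) {
    executed.push(stage.name)
    if (!stage.run()) {
      return { passed: false, executed }
    }
  }

  return { passed: true, executed }
}

// Stage names follow the list above; the checks themselves are stubs.
const pass = () => true
const stages: Stage[] = [
  { name: 'install', run: pass },
  { name: 'version-control', run: pass },
  { name: 'lint', run: () => false }, // simulate a lint error
  { name: 'typescript', run: pass },
  { name: 'build', run: pass },
  { name: 'unit', run: pass },
  { name: 'integration-and-visual', run: pass }
]

const result = runPipeline(stages)
// result.passed is false; result.executed is ['install', 'version-control', 'lint']
```

With this ordering, a lint error costs only the time of the first three stages, and the paid visual tests are never triggered for a branch that cannot be merged anyway.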

Testability

Like most component libraries, Picasso has a root component that must wrap all other components and can be used to set global rules. This makes it harder to write tests for two reasons: test results can be inconsistent, depending on the props used in the wrapper, and every test needs extra boilerplate:

    import { render } from '@testing-library/react'

    describe('Form', () => {
      it('renders', () => {
        const { container } = render(
          <Picasso loadFavicon={false} environment='test'>
            <Form />
          </Picasso>
        )

        expect(container).toMatchSnapshot()
      })
    })

We solved the first problem by creating a TestingPicasso that preconditions the global rules for testing. But it’s annoying to have to declare it for every test case. That’s why we created a custom render function that wraps the passed component in a TestingPicasso and returns everything available from RTL’s render function.
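The idea behind such a custom render function can be sketched without React. The helper below is hypothetical and may differ from Picasso’s real implementation: `createCustomRender` is an invented name, `baseRender` stands in for RTL’s `render`, and `wrap` stands in for wrapping the element in `TestingPicasso`. Strings replace React elements so that the sketch runs on its own.

```typescript
// Hypothetical sketch; Picasso's real helper may differ.
// `baseRender` stands in for RTL's render, `wrap` for wrapping in TestingPicasso.
function createCustomRender<UI, Options, Result>(
  baseRender: (ui: UI, options?: Options) => Result,
  wrap: (ui: UI) => UI
): (ui: UI, options?: Options) => Result {
  // Wrap first, then delegate, so callers get back everything baseRender returns.
  return (ui, options) => baseRender(wrap(ui), options)
}

// String stand-ins keep the sketch runnable without React installed.
const fakeRender = (ui: string) => ({ container: ui })
const render = createCustomRender(
  fakeRender,
  (ui: string) => `<TestingPicasso>${ui}</TestingPicasso>`
)

const { container } = render('<Form />')
// container is '<TestingPicasso><Form /></TestingPicasso>'
```

RTL’s `render` also accepts a `wrapper` option that wraps the rendered element in a given component, which is another common way to get the same effect.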

Our tests are now easier to read and straightforward to write:

    import { render } from '@toptal/picasso/test-utils'

    describe('Form', () => {
      it('renders', () => {
        const { container } = render(<Form />)

        expect(container).toMatchSnapshot()
      })
    })

Conclusion

The setup described here is far from perfect, but it’s a good starting point for those of you adventurous enough to create a component library. I’ve read a lot about testing pyramids, but it’s not always easy to apply them in practice. Therefore, I invite you to explore our codebase and learn from our mistakes and successes.

Component libraries are unique because they serve two kinds of audiences: the end users who interact with the UI and the developers who use your code to build their own applications. Investing time in a robust testing framework will benefit everyone. Investing time in testability improvements will benefit you as a maintainer and the engineers who use (and test) your library.

We didn’t discuss things like code coverage, end-to-end tests, and version and release policies. The short advice on those topics is: release often, practice proper semantic versioning, have transparency in your processes, and set expectations for the engineers that rely on your library. We may revisit these topics in more detail in subsequent posts.

Understanding the basics

Who is responsible for component testing?

A component library has two user groups: the engineers who build the UI with your library and the end users who interact with it. End users care about good UI/UX, whereas developers need to be able to write integration tests between their apps and your components. As a maintainer, you must cater to both parties.

What is visual regression testing?

Changing a line of code can affect functionality in ways you didn’t expect (a regression). Sometimes, though, the regression is only visual; the UI still works, but it doesn’t look the same (a visual regression). Unit tests or E2E tests won’t catch the bug, but a visual regression test should.

Why is visual regression testing important?

Keeping the UI consistent between releases is important for UX. Most automated tests don’t interact with the UI the way a user does; they manipulate it programmatically. They won’t notice if a button changes color or if an image overflows the page. A user would notice, though, and so would a visual regression test.

Boris Yordanov

Spring Lake, NJ, United States

Member since July 15, 2017
