Source Code QA: It’s Not Just for Developers Anymore

For product managers concerned about building a solid foundation for product development and eliminating risks, defining and implementing a systematic software QA process is essential.


A product leader and technologist, Costas has 25+ years of experience supervising the full life cycle of sophisticated product development.

Twenty years ago, when I worked in the automotive industry, the director of one factory would often say, “We have one day to build a car, but our customer has a lifetime to inspect it.” Quality was of the utmost importance. Indeed, in more mature sectors like the automotive and construction industries, quality assurance is a key consideration that is systematically integrated into the product development process. While this is certainly driven by pressure from insurance companies, it is also dictated—as that factory director noted—by the resulting product’s lifespan.

When it comes to software, however, shorter life cycles and continuous upgrades mean that source code integrity is often overlooked in favor of new features, sophisticated functionality, and go-to-market speed. Product managers often deprioritize source code quality assurance or leave it to developers to handle, despite the fact that it is one of the more critical factors in determining a product’s fate. For product managers concerned about building a solid foundation for product development and eliminating risks, defining and implementing a systematic assessment of source code quality is essential.

Defining “Quality”

Before exploring the ways to properly evaluate and enact a source code QA process, it’s important to determine what “quality” means in the context of software development. This is a complex and multifaceted issue, but for the sake of simplicity, we can say quality refers to source code that supports a product’s value proposition without compromising consumer satisfaction or endangering the development company’s business model.

In other words, quality source code accurately implements the functional specifications of the product, satisfies the non-functional requirements, ensures consumers’ satisfaction, minimizes security and legal risks, and can be affordably maintained and extended.

Given how widely and quickly software is distributed, the impact of software defects can be significant. Problems like bugs and code complexity can hurt a company’s bottom line by hindering product adoption and increasing software asset management (SAM) costs, while security breaches and license compliance violations can damage a company’s reputation and raise legal concerns. Even when software defects don’t have catastrophic results, they have an undeniable cost. In a 2018 report, software company Tricentis found that 606 software failures from 314 companies accounted for $1.7 trillion in lost revenue the previous year. In a just-released 2020 report, the Consortium for Information & Software Quality (CISQ) put the cost of poor-quality software in the U.S. at $2.08 trillion, with an estimated $1.31 trillion more in future costs incurred through technical debt. Earlier intervention could reduce these costs: resolving an issue during product design is significantly cheaper than resolving the same issue during testing, which in turn is far cheaper than resolving it after deployment.

Handling the Hot Potato

Despite the risks, quality assurance in software development is treated piecemeal and is characterized by a reactive approach rather than the proactive one taken in other industries. The ownership of source code quality is contested, when it should be viewed as the collective responsibility of different functions. Product managers must view quality as an impactful feature rather than overhead, executives should pay attention to the quality state and invest in it, and engineering functions should resist treating code-cleaning as a “hot potato.”

Compounding these delegation challenges is the fact that existing methodologies and tools fail to address the code quality issue as a whole. Continuous integration/continuous delivery (CI/CD) methodologies reduce the impact of low-quality code, but unless CI/CD is based on a thorough and holistic quality analysis, it cannot effectively anticipate and address most hazards. Teams responsible for QA testing, application security, and license compliance work in silos, using tools that have been designed to solve only one part of the problem and evaluate only some of the functional or non-functional requirements.

Considering the Product Manager’s Role

Source code quality plays into numerous dilemmas a product manager faces during product design and throughout the software development life cycle (SDLC). Technical debt is heavy overhead. It is harder and more expensive to add and modify features on a low-quality codebase, and supporting existing code complexity requires significant investments of time and resources that could otherwise be spent on new product development. As product managers continually balance risk against go-to-market speed, they must consider questions like:

- Should I use an OSS (open source software) library or build functionality from scratch? What licenses and potential liabilities are associated with the selected libraries?

- Which tech stack is safest? Which ensures a fast and low-cost development cycle?

- Should I prioritize app configurability (high cost/time delay) or implement customized versions (high maintenance cost/lack of scalability)?

- How feasible will it be to integrate newly acquired digital products while maintaining high code quality, minimizing risks, and keeping engineering costs low?

The answers to these questions can seriously impact business outcomes and the product manager’s own reputation, yet decisions are often made based on intuition or past experience rather than rigorous investigation and solid metrics. A thorough software quality evaluation process not only provides the data needed for decision-making, but also aligns stakeholders, builds trust, and contributes to a culture of transparency, in which priorities are clear and agreed upon.

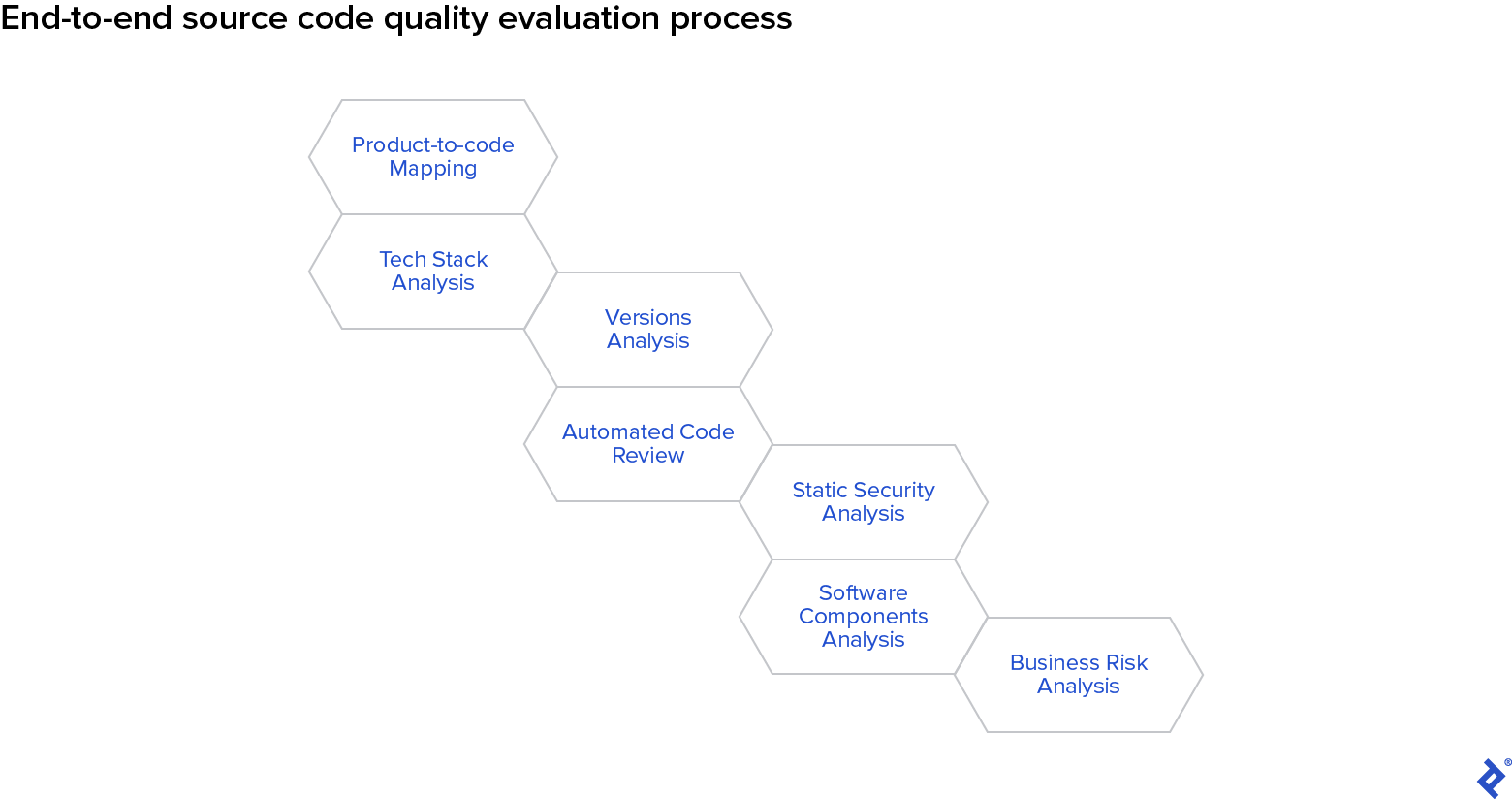

Implementing a 7-Step Process

A complete source code quality evaluation process results in a diagnosis that considers the full set of quality determinants rather than a few isolated symptoms of a larger problem. The seven-step method presented below is aligned with CISQ’s recommendations for process improvement and is meant to facilitate the following objectives:

- Find, measure, and fix the problem close to its root cause.

- Invest smartly in software quality improvement based on overall quality measurements.

- Attack the problem by analyzing the complete set of measurements and identifying the best, most cost-effective improvements.

- Consider the complete cost of a software product, including the costs of ownership, maintenance, and license/security regulation alignment.

- Monitor the code quality throughout the SDLC to prevent unpleasant surprises.

1. Product-to-code mapping: Tracing product features back to their codebase may seem like an obvious first step, but given the rate at which development complexity increases, it is not necessarily simple. In some situations, a product’s code is divided among several repositories, while in others, multiple products share the same repository. Identifying the various locations that house specific parts of a product’s code is necessary before further evaluation can take place.
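To make the mapping concrete, a product-to-code inventory can be kept as a simple data structure and then inverted to expose both situations described above: products split across repositories and repositories shared by several products. The sketch below is a minimal Python illustration; the product names, repository names, and paths are hypothetical placeholders, and a real inventory would likely be populated from a service catalog or repository metadata.

```python
from collections import defaultdict

# Hypothetical inventory: each product lists the (repository, path) pairs holding its code.
# All names and paths are illustrative placeholders, not real projects.
PRODUCT_MAP: dict[str, list[tuple[str, str]]] = {
    "billing-app": [("core-repo", "services/billing"), ("web-repo", "ui/billing")],
    "reports-app": [("core-repo", "services/reports")],
    "admin-portal": [("web-repo", "ui/admin")],
}


def repos_by_product(product_map):
    """Return {product: set of repositories}, revealing products split across repos."""
    return {product: {repo for repo, _ in locations} for product, locations in product_map.items()}


def products_by_repo(product_map):
    """Invert the map to {repository: set of products}, revealing shared repositories."""
    inverted = defaultdict(set)
    for product, locations in product_map.items():
        for repo, _ in locations:
            inverted[repo].add(product)
    return dict(inverted)


if __name__ == "__main__":
    for repo, products in sorted(products_by_repo(PRODUCT_MAP).items()):
        if len(products) > 1:
            print(f"{repo} is shared by: {', '.join(sorted(products))}")
```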

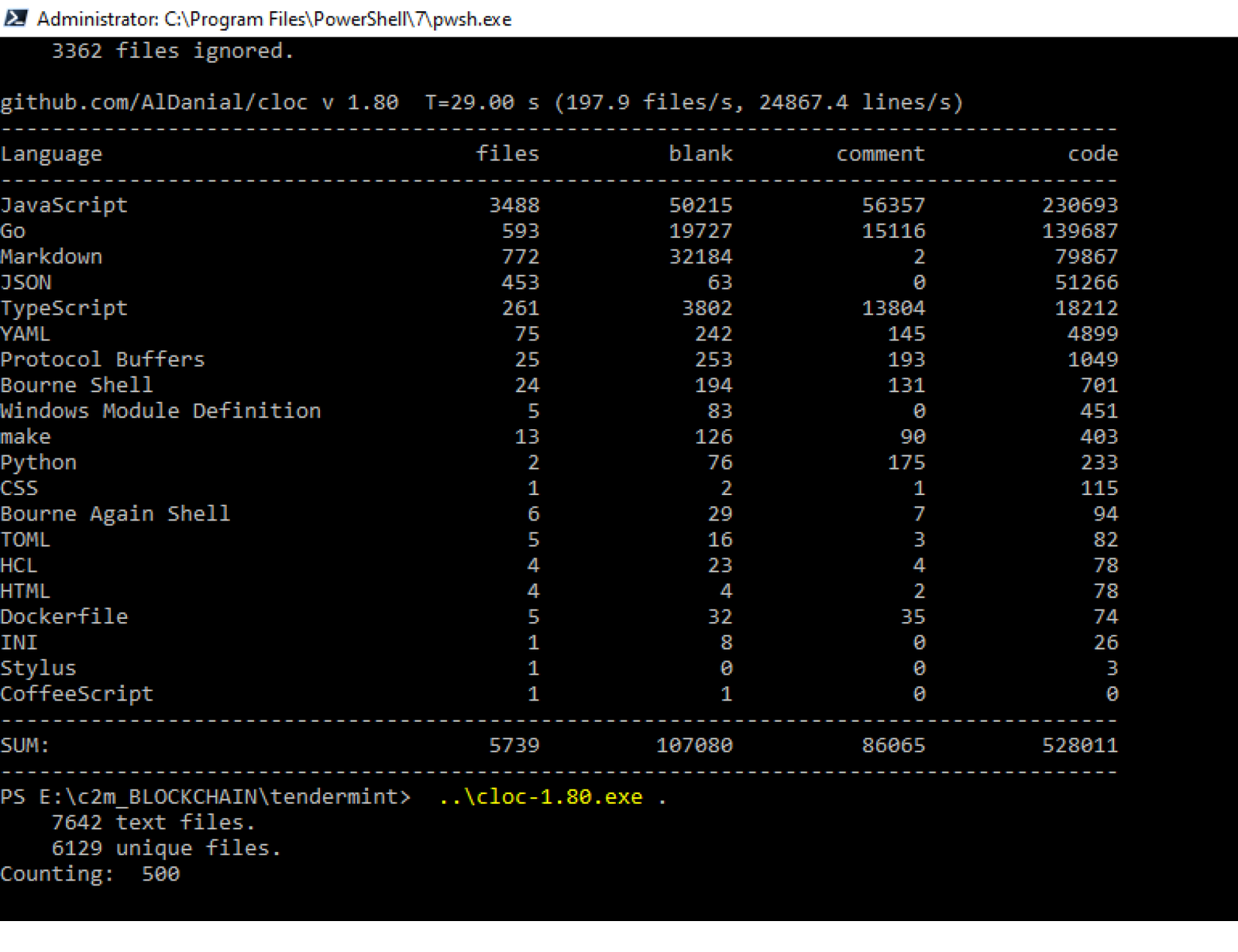

2. Tech stack analysis: This step takes into account the various programming languages and development tools used, the percentage of comments per file, the percentage of auto-generated code, the average development cost, and more.

Suggested tools: cloc

Alternatives: Tokei, scc, sloccount
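As an illustration, cloc can emit its results as JSON (`cloc --json <path>`), which makes the tech stack breakdown easy to post-process. The sketch below assumes cloc is installed and on the PATH, and it defines comment density per language as comment lines divided by comment plus code lines, which is one reasonable definition among several; cloc’s `--by-file` option would give a per-file view instead.

```python
import json
import subprocess


def run_cloc(path: str) -> dict:
    """Run cloc on a directory and return its parsed JSON report (requires cloc on PATH)."""
    result = subprocess.run(["cloc", "--json", path], capture_output=True, text=True, check=True)
    return json.loads(result.stdout)


def summarize_stack(report: dict) -> list[tuple[str, int, float]]:
    """Return (language, code lines, comment %) per language, largest codebase first."""
    rows = []
    for language, stats in report.items():
        if language in ("header", "SUM"):
            continue  # run metadata and totals, not languages
        code, comment = stats["code"], stats["comment"]
        total = code + comment
        pct = 100.0 * comment / total if total else 0.0
        rows.append((language, code, round(pct, 1)))
    return sorted(rows, key=lambda row: row[1], reverse=True)
```

Calling `summarize_stack(run_cloc("path/to/repo"))` on a real repository would list its languages ordered by lines of code, which is a quick way to see which analyzers the later steps will need to support.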

3. Versions analysis: This portion of the audit involves identifying all versions of a codebase and calculating their similarities; based on the results, versions can be merged and duplicates eliminated. This step can be combined with a bugspots (hot spots) analysis, which identifies the tricky parts of the code that are most frequently revised and tend to generate higher maintenance costs.

Suggested tools: cloc, scc, sloccount
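Both parts of this step can be approximated with a few lines of Python. In the sketch below, version similarity is a line-based ratio from the standard library’s `difflib`, and the hot spot score is a variant of the heuristic popularized by Google’s bug-prediction work and the open-source bugspots tool: each bug-fix commit adds a weight of 1 / (1 + exp(-12t + 12)) to every file it touched, where t runs from 0 (oldest fix) to 1 (newest fix), so recent fixes dominate. Treating commits whose messages mention “fix” or “close” as bug fixes is an assumption, and in practice the commit tuples would be extracted from `git log`.

```python
import difflib
import math
import re
from collections import defaultdict

# Assumption: commit messages matching this pattern are treated as bug fixes.
FIX_PATTERN = re.compile(r"\b(fix(es|ed)?|close(s|d)?)\b", re.IGNORECASE)


def version_similarity(old_source: str, new_source: str) -> float:
    """Line-based similarity between two versions of a file, from 0.0 to 1.0."""
    matcher = difflib.SequenceMatcher(None, old_source.splitlines(), new_source.splitlines())
    return matcher.ratio()


def hot_spots(commits):
    """Score files by bug-fix commits, weighting recent fixes more heavily.

    `commits` is a list of (unix_timestamp, message, [changed_files]) tuples.
    Returns {file: score}, highest score first.
    """
    fixes = [c for c in commits if FIX_PATTERN.search(c[1])]
    if not fixes:
        return {}
    oldest = min(ts for ts, _, _ in fixes)
    newest = max(ts for ts, _, _ in fixes)
    span = (newest - oldest) or 1  # avoid division by zero when there is a single fix
    scores = defaultdict(float)
    for ts, _, files in fixes:
        t = (ts - oldest) / span  # 0.0 for the oldest fix, 1.0 for the newest
        weight = 1 / (1 + math.exp(-12 * t + 12))
        for path in files:
            scores[path] += weight
    return dict(sorted(scores.items(), key=lambda kv: kv[1], reverse=True))
```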

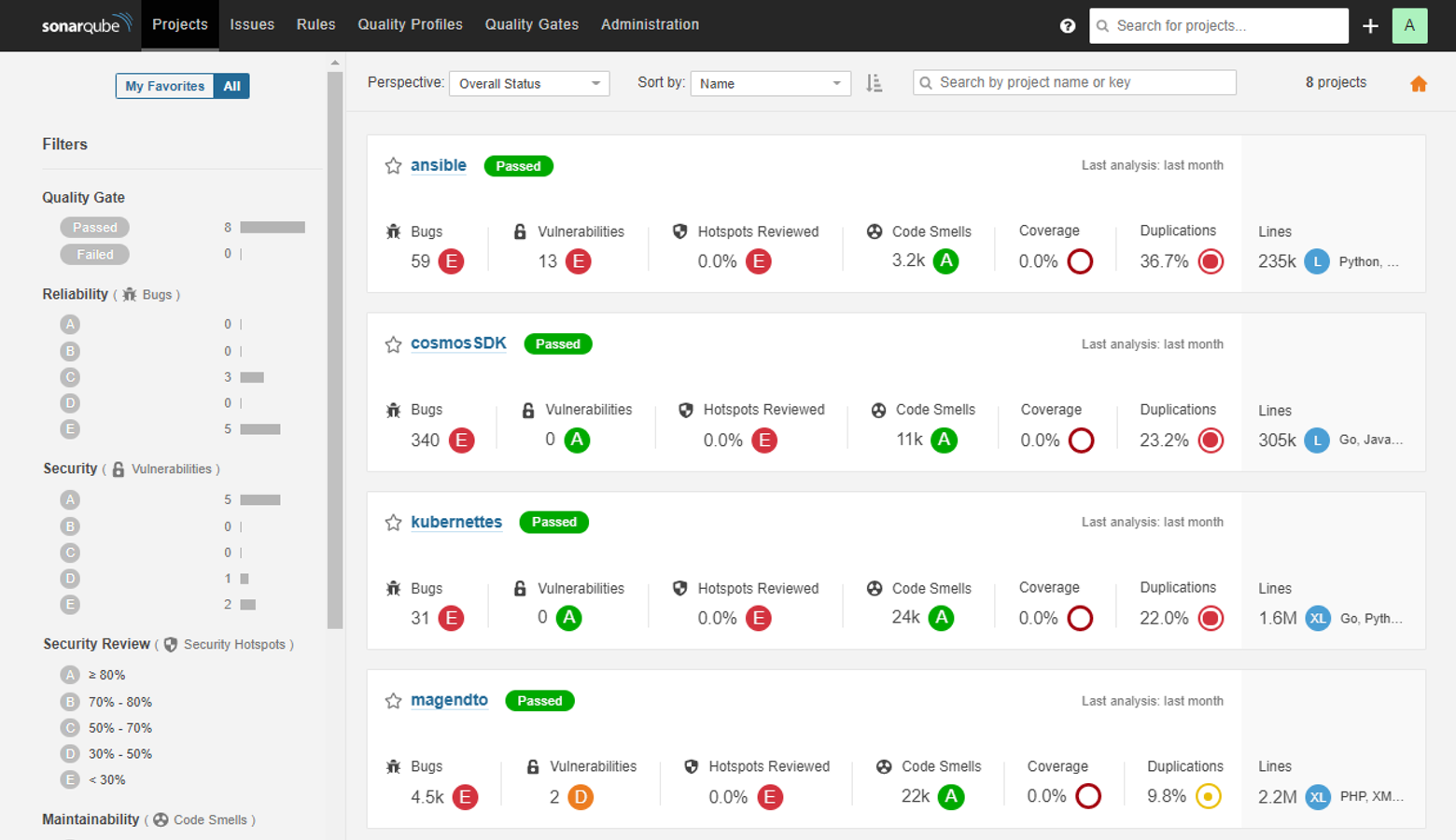

4. Automated code review: This inspection probes the code for defects, programming practice violations, and risky elements like hard-coded tokens, long methods, and duplications. The tool(s) selected for this process will depend on the results of the tech stack and versions analyses above.

Suggested tools: SonarQube, Codacy

Alternatives: RIPS, Veracode, Micro Focus, Parasoft, and many others. Another option is Sourcegraph, a universal code search solution.
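Commercial analyzers implement hundreds of such rules, but the idea behind two of them can be shown with Python’s built-in `ast` module. The sketch below flags functions longer than a threshold and string literals assigned to credential-like variable names; the 50-line threshold and the name pattern are assumptions chosen for illustration, and duplication detection is omitted for brevity.

```python
import ast
import re

MAX_FUNCTION_LINES = 50  # assumed threshold; real tools make this configurable
# Heuristic: a string literal assigned to a name that looks like a credential.
SECRET_NAME = re.compile(r"(token|secret|password|api_?key)", re.IGNORECASE)


def review(source: str, filename: str = "<source>") -> list[str]:
    """Return human-readable findings for long functions and hard-coded secrets."""
    findings = []
    tree = ast.parse(source, filename=filename)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            length = node.end_lineno - node.lineno + 1
            if length > MAX_FUNCTION_LINES:
                findings.append(f"{filename}:{node.lineno} long method '{node.name}' ({length} lines)")
        elif isinstance(node, ast.Assign) and isinstance(node.value, ast.Constant):
            if isinstance(node.value.value, str):
                for target in node.targets:
                    if isinstance(target, ast.Name) and SECRET_NAME.search(target.id):
                        findings.append(f"{filename}:{node.lineno} possible hard-coded secret '{target.id}'")
    return findings
```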

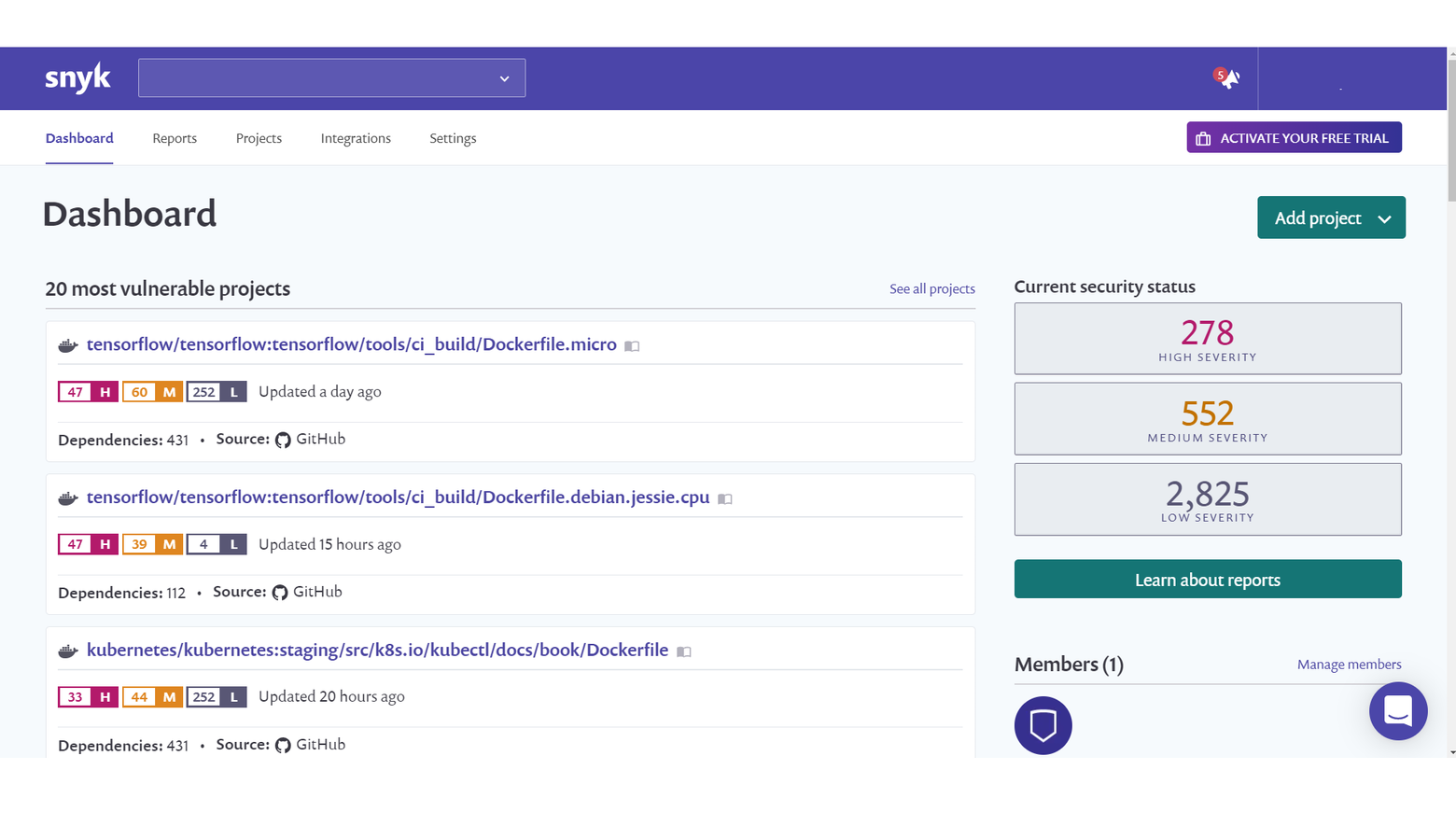

5. Static security analysis: This step, also known as static application security testing (SAST), explores the code and identifies potential application security vulnerabilities. The majority of available tools scan the code for the frequently occurring weaknesses catalogued by organizations such as OWASP (in the OWASP Top 10) and SANS (in the CWE/SANS Top 25).

Suggested tools: WhiteSource, Snyk, Coverity

Alternatives: SonarQube, Reshift, Kiuwan, Veracode
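To show what a SAST rule looks like under the hood, the following minimal Python sketch walks a program’s syntax tree (without executing it) and reports two injection-prone patterns: calls to `eval()` or `exec()` (CWE-95) and calls that pass `shell=True`, as in `subprocess.run(cmd, shell=True)` (CWE-78). Real tools add data-flow analysis to check whether untrusted input actually reaches these calls; this sketch does not.

```python
import ast

DANGEROUS_BUILTINS = {"eval", "exec"}  # dynamic code evaluation, code injection risk (CWE-95)


def scan(source: str) -> list[tuple[int, str]]:
    """Return sorted (line, message) pairs for a few injection-prone patterns."""
    issues = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in DANGEROUS_BUILTINS:
            issues.append((node.lineno, f"use of {func.id}() may allow code injection"))
        # Any call passing a literal shell=True, e.g. subprocess.run(cmd, shell=True) (CWE-78).
        for kw in node.keywords:
            if kw.arg == "shell" and isinstance(kw.value, ast.Constant) and kw.value.value is True:
                issues.append((node.lineno, "shell=True may allow OS command injection"))
    return sorted(issues)
```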

6. Software composition analysis (SCA)/License compliance analysis: This review involves identifying the open source libraries linked directly or indirectly to the code, the licenses that govern each of these libraries, and the permissions and obligations associated with each of these licenses.

Suggested tools: Snyk, WhiteSource, Black Duck

Alternatives: FOSSA, Sonatype, and others
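The two halves of this review, finding indirect dependencies and checking their licenses, can be sketched as a graph walk followed by a policy lookup. In the Python example below, the dependency graph, package names, and license assignments are hypothetical, and the grouping of SPDX license identifiers into permissive and copyleft categories is a simplified example policy; anything not covered by the policy, including packages with no declared license (`NOASSERTION`), is flagged for review.

```python
from collections import deque

# Hypothetical policy grouping SPDX license identifiers by obligation level.
LICENSE_POLICY = {
    "MIT": "permissive",
    "Apache-2.0": "permissive",
    "BSD-3-Clause": "permissive",
    "MPL-2.0": "weak copyleft",
    "LGPL-2.1-only": "weak copyleft",
    "GPL-3.0-only": "strong copyleft",
    "AGPL-3.0-only": "strong copyleft",
}


def all_dependencies(direct, graph):
    """Breadth-first walk returning {package: depth}; depth 1 = direct, >1 = transitive."""
    depths = {}
    queue = deque((pkg, 1) for pkg in direct)
    while queue:
        pkg, depth = queue.popleft()
        if pkg in depths:
            continue  # BFS reaches each package first at its shallowest depth
        depths[pkg] = depth
        for child in graph.get(pkg, []):
            queue.append((child, depth + 1))
    return depths


def license_report(direct, graph, licenses):
    """Return (package, depth, license, category) rows; unknown licenses need review."""
    rows = []
    for pkg, depth in sorted(all_dependencies(direct, graph).items()):
        spdx = licenses.get(pkg, "NOASSERTION")
        rows.append((pkg, depth, spdx, LICENSE_POLICY.get(spdx, "needs review")))
    return rows
```

A strong-copyleft license reached only at depth 3, as in the test data here, is exactly the kind of indirect finding that a review limited to direct dependencies would miss.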

7. Business risk analysis: This final measure involves consolidating the information gathered from the previous steps in order to understand the full impact of the source code quality status on the business. The analysis should result in a comprehensive report that provides stakeholders, including product managers, project managers, engineering teams, and C-suite executives, with the details they need to weigh risks and make informed product decisions.

Although the previous steps in this evaluation process can be automated and facilitated via a wide range of open source and commercial products, there are no existing tools that support the full seven-step process or the aggregation of its results. Because compilation of this data is a tedious and time-consuming task, it is either performed haphazardly or skipped entirely, potentially jeopardizing the development process. This is the point at which a thorough software inspection process often falls apart, making this last step arguably the most critical one in the evaluation process.
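Whatever tools are used, the aggregation itself can start simply: normalize every finding into a common record and roll the records up by step and severity. The Python sketch below assumes that each tool’s output has already been converted into `Finding` objects; the `Finding` type and the severity weights are hypothetical and would need to be calibrated for each organization.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical severity weights; each organization would calibrate its own.
SEVERITY_WEIGHT = {"critical": 10, "high": 5, "medium": 2, "low": 1}


@dataclass
class Finding:
    step: str         # e.g., "code review", "SAST", "license compliance"
    severity: str     # one of SEVERITY_WEIGHT's keys
    description: str


def risk_summary(findings):
    """Aggregate findings from several tools into weighted risk scores per step."""
    scores = Counter()
    for f in findings:
        scores[f.step] += SEVERITY_WEIGHT[f.severity]
    return {
        "total_score": sum(scores.values()),
        "by_step": dict(scores.most_common()),  # highest-risk step first
        "critical": [f.description for f in findings if f.severity == "critical"],
    }
```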

Selecting the Right Tools

Although software quality impacts the product and thus business outcomes, tool selection is generally delegated to development departments, and the results can be difficult for non-developers to interpret. Product managers should be actively involved in selecting tools that ensure a transparent and accessible QA process. While specific tools for the various steps in the evaluation are suggested above, there are a number of general considerations that should be factored into any tool selection process:

- Supported tech stack: Keep in mind that the majority of available offerings support only a small set of development tools and can result in partial or misleading reporting.

- Installation simplicity: Tools whose installation processes are based on complex scripting may require a significant engineering investment.

- Reporting: Preference should be given to tools that export detailed, well-structured reports that identify major issues and provide recommendations for fixes.

- Integration: Tools should be screened for easy integration with the other development and management tools being used.

- Pricing: Tools rarely come with a comprehensive price list, so it is important to carefully consider the investment involved. Various pricing models typically take into account things like team headcount, code size, and the development tools involved.

- Deployment: When weighing on-premises versus cloud deployment, consider factors like security. For example, if the product being evaluated handles confidential or sensitive data, on-premises tools and tools using a blind-audit approach (such as FossID) may be preferable.
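One way to make these trade-offs explicit, and to involve non-developers in the decision, is a weighted scoring matrix. The weights and the 1-5 rating scale in this Python sketch are assumptions for illustration, as are the tool names in the usage example; the point is that the criteria and their relative importance are written down and agreed upon before candidate tools are compared.

```python
# Hypothetical weights for the criteria listed above (they sum to 1.0).
CRITERIA_WEIGHTS = {
    "tech_stack": 0.30,
    "installation": 0.10,
    "reporting": 0.20,
    "integration": 0.15,
    "pricing": 0.15,
    "deployment": 0.10,
}


def score_tool(ratings: dict[str, int]) -> float:
    """Weighted score from 1-5 ratings per criterion; missing criteria count as 0."""
    return round(sum(w * ratings.get(c, 0) for c, w in CRITERIA_WEIGHTS.items()), 2)


def rank_tools(candidates: dict[str, dict[str, int]]) -> list[tuple[str, float]]:
    """Return (tool, score) pairs, best first."""
    return sorted(
        ((name, score_tool(ratings)) for name, ratings in candidates.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )


if __name__ == "__main__":
    candidates = {
        "Tool A": {c: 4 for c in CRITERIA_WEIGHTS},
        "Tool B": {"tech_stack": 5, "installation": 2, "reporting": 2,
                   "integration": 2, "pricing": 2, "deployment": 2},
    }
    print(rank_tools(candidates))
```

Weighting tech stack support most heavily reflects the warning above that a tool covering only part of the stack produces partial or misleading reports.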

Keeping It Going

Once risks have been identified and analyzed methodically, product managers can make thoughtful decisions around prioritization and triage defects more accurately. Teams could be restructured and resources allocated to address the most urgent or prevalent issues. “Showstoppers” like high-risk license violations would take precedence over lower-severity defects, and more emphasis would be placed on activities that contribute to the reduction of codebase size and complexity.
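The prioritization rule described here can be expressed as a single sort key. In this minimal Python sketch, the defect fields (`showstopper`, `severity`, `loc`) are hypothetical: showstoppers sort first, then higher severities, and ties are broken in favor of defects that touch more lines of code, reflecting the emphasis on reducing codebase size and complexity.

```python
# Hypothetical ranking: a lower number is handled first.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


def triage(defects):
    """Order defects: showstoppers first, then by severity, then by affected lines (desc).

    Each defect is a dict with 'id', 'severity', 'showstopper' (bool), and 'loc' keys.
    """
    return sorted(
        defects,
        key=lambda d: (not d["showstopper"], SEVERITY_RANK[d["severity"]], -d["loc"]),
    )
```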

This is not a one-time process, however. Measuring and monitoring software quality should happen continuously throughout the SDLC. The full seven-step evaluation should be conducted periodically, with quality improvement efforts beginning immediately following each analysis. The faster a new risk point is identified, the cheaper the remedy and the more limited the fallout. Making source code quality evaluation central to the product development process focuses teams, aligns stakeholders, mitigates risks, and gives a product its very best chance at success—and that’s every product manager’s business.
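In practice, continuous monitoring often takes the form of a quality gate that compares each new measurement against a baseline and fails the build on regressions. The Python sketch below assumes that every metric is “lower is better” and uses a hypothetical 5% relative tolerance; the metric names are placeholders for whatever the chosen tools report.

```python
def quality_gate(baseline: dict[str, float], current: dict[str, float],
                 tolerance: float = 0.05) -> list[str]:
    """Return metrics that worsened by more than `tolerance` (relative) since the baseline.

    Assumes every metric is "lower is better" (e.g., open defects, duplicated lines).
    """
    regressions = []
    for metric, before in baseline.items():
        after = current.get(metric)
        if after is None:
            continue  # metric not measured in this run
        allowed = before * (1 + tolerance)
        if after > allowed:
            regressions.append(f"{metric}: {before} -> {after}")
    return regressions
```

In a CI job, a non-empty result could be printed and followed by `sys.exit(1)` so that the regression blocks the merge until it is addressed or the baseline is consciously updated.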


Understanding the basics

How do you ensure code quality?

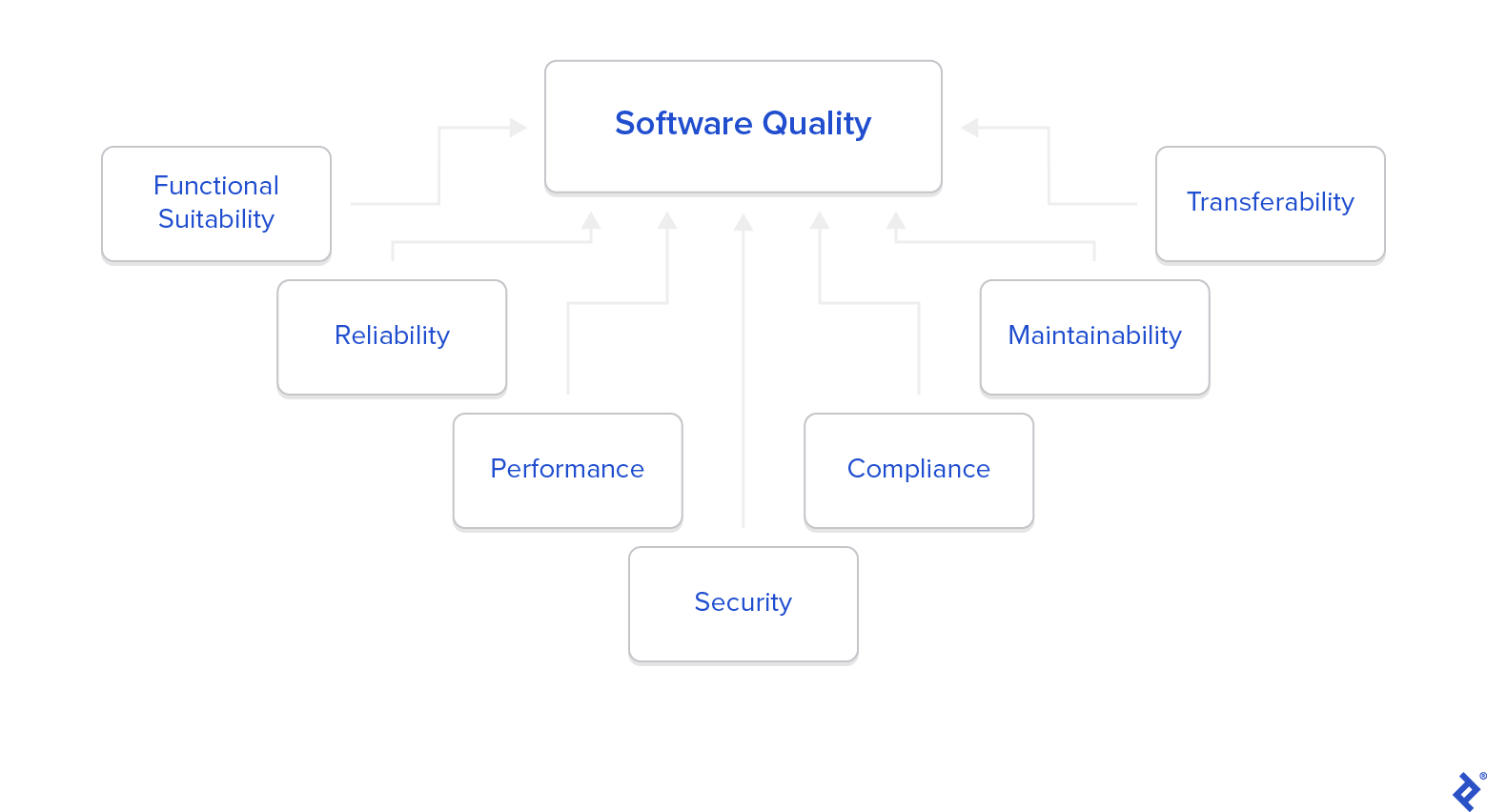

To ensure quality, the code QA process must consider all of the following: functional stability, reliability, performance, security, compliance, maintainability, and transferability.

Why is code review important?

Periodic code reviews enable teams to identify technical debt, bugs and defects, security risks, and license violations before they pose significant threats to the product or business.

What happens during code review?

A good code review uses a combination of tools to examine repositories, tech stack, versions, defects, security risks, license violations, and business risks.

Athens, Greece

Member since March 22, 2019
