Shipping Your Product in Iterations: A Guide to Hypothesis Testing

Glancing at the App Store on any phone will reveal that most installed apps have had updates released within the last week. Software products today are shipped in iterations to validate assumptions and hypotheses about what makes the product experience better for users.

Kumara has successfully delivered high-impact products in industries including eCommerce, healthcare, travel, and ride-hailing.

A look at the App Store on any phone will reveal that most installed apps have had updates released within the last week. A website visit after a few weeks might show some changes in the layout, user experience, or copy.

Today, software is shipped in iterations to validate assumptions and the product hypothesis about what makes a better user experience. At any given time, companies like booking.com (where I worked before) run hundreds of A/B tests on their sites for this very purpose.

For applications delivered over the internet, there is no need to decide on the look of a product 12-18 months in advance, and then build and eventually ship it. Instead, it is perfectly practical to release small changes that deliver value to users as they are implemented. This reduces the need to rely on untested assumptions about user preferences and ideal solutions, because each assumption and hypothesis can be validated by designing a test that isolates the effect of the change.

In addition to delivering continuous value through improvements, this approach allows a product team to gather continuous feedback from users and then course-correct as needed. Creating and testing hypotheses every couple of weeks is a cheaper and easier way to build a course-correcting and iterative approach to creating product value.

What Is Hypothesis Testing in Product Management?

While shipping a feature to users, it is imperative to validate assumptions about design and features in order to understand their impact in the real world.

This validation is traditionally done through product hypothesis testing, during which the experimenter outlines a hypothesis for a change and then defines success. For instance, if a data product manager at Amazon has a hypothesis that showing bigger product images will raise conversion rates, then success is defined by higher conversion rates.

One of the key aspects of hypothesis testing is the isolation of different variables in the product experience in order to be able to attribute success (or failure) to the changes made. So, if our Amazon product manager had a further hypothesis that showing customer reviews right next to product images would improve conversion, it would not be possible to test both hypotheses by shipping the two changes together to the same group of users. Doing so would make it impossible to tell which change caused which effect; therefore, the two changes must be isolated and tested individually.

Thus, product decisions on features should be backed by hypothesis testing to validate the performance of features.

Different Types of Hypothesis Testing

A/B Testing

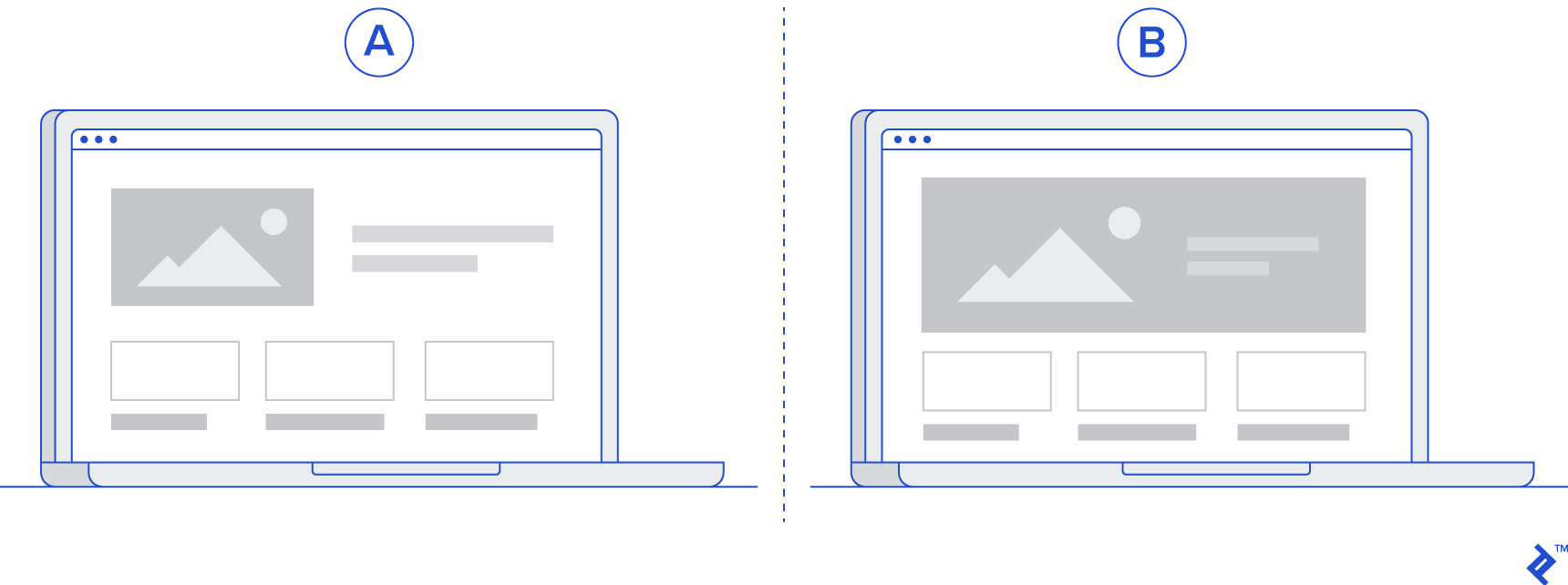

One of the most common ways to validate a hypothesis is randomized A/B testing, in which a change or feature is released at random to one-half of users (A) and withheld from the other half (B). Returning to the hypothesis of bigger product images improving conversion on Amazon, one-half of users will be shown the change, while the other half will see the website as it was before. Conversion is then measured for each group (A and B) and compared. If the group shown bigger product images has a statistically significant uplift in conversion, the conclusion would be that the original hypothesis was correct, and the change can be rolled out to all users.
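As a rough illustration of the random split, the sketch below assigns each user to a group by hashing their ID. The function name `assign_variant`, the experiment name, and the user ID format are all hypothetical, and hashing is only one of several ways to randomize; it is shown here because it keeps a given user in the same group on every visit.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one of the variants with equal odds."""
    # Hash the experiment name together with the user ID so the same user
    # can land in different groups across different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical user IDs, for illustration only.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "bigger-product-images")] += 1
print(counts)  # each group should hold roughly half of the users
```

Because the assignment depends only on the experiment name and the user ID, no lookup table is needed to remember who saw which version.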

Multivariate Testing

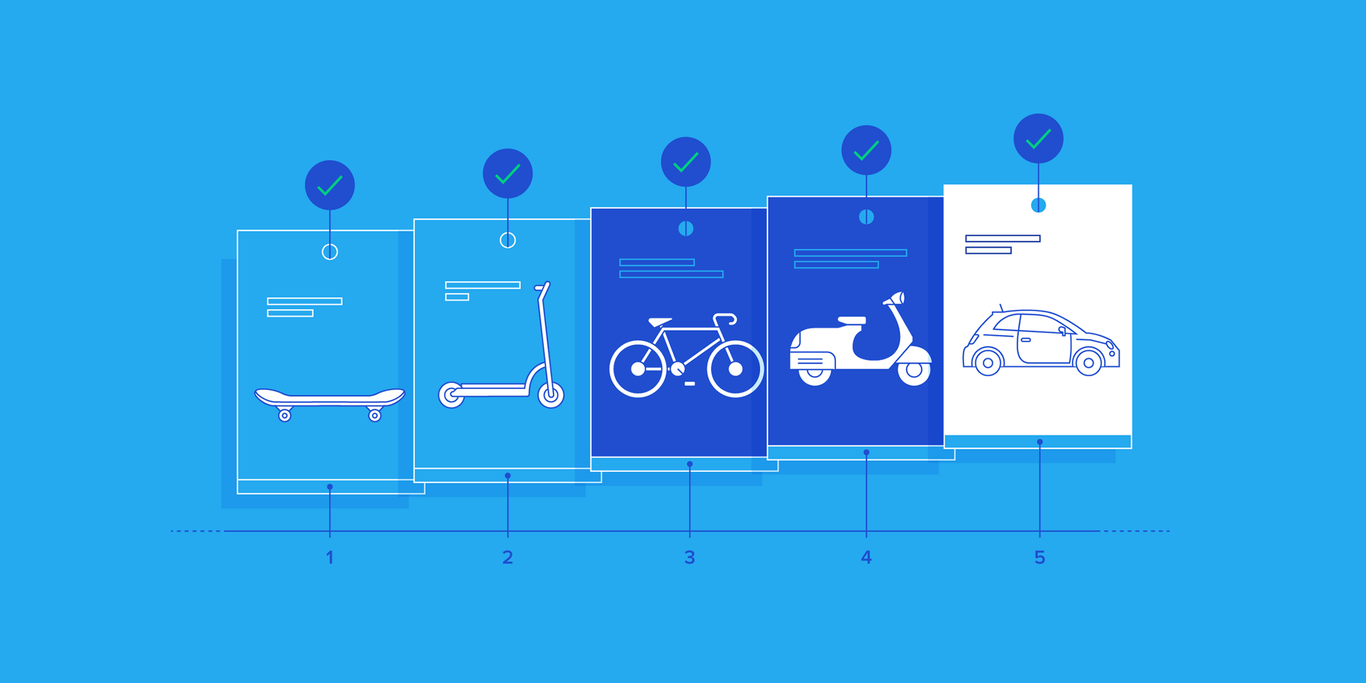

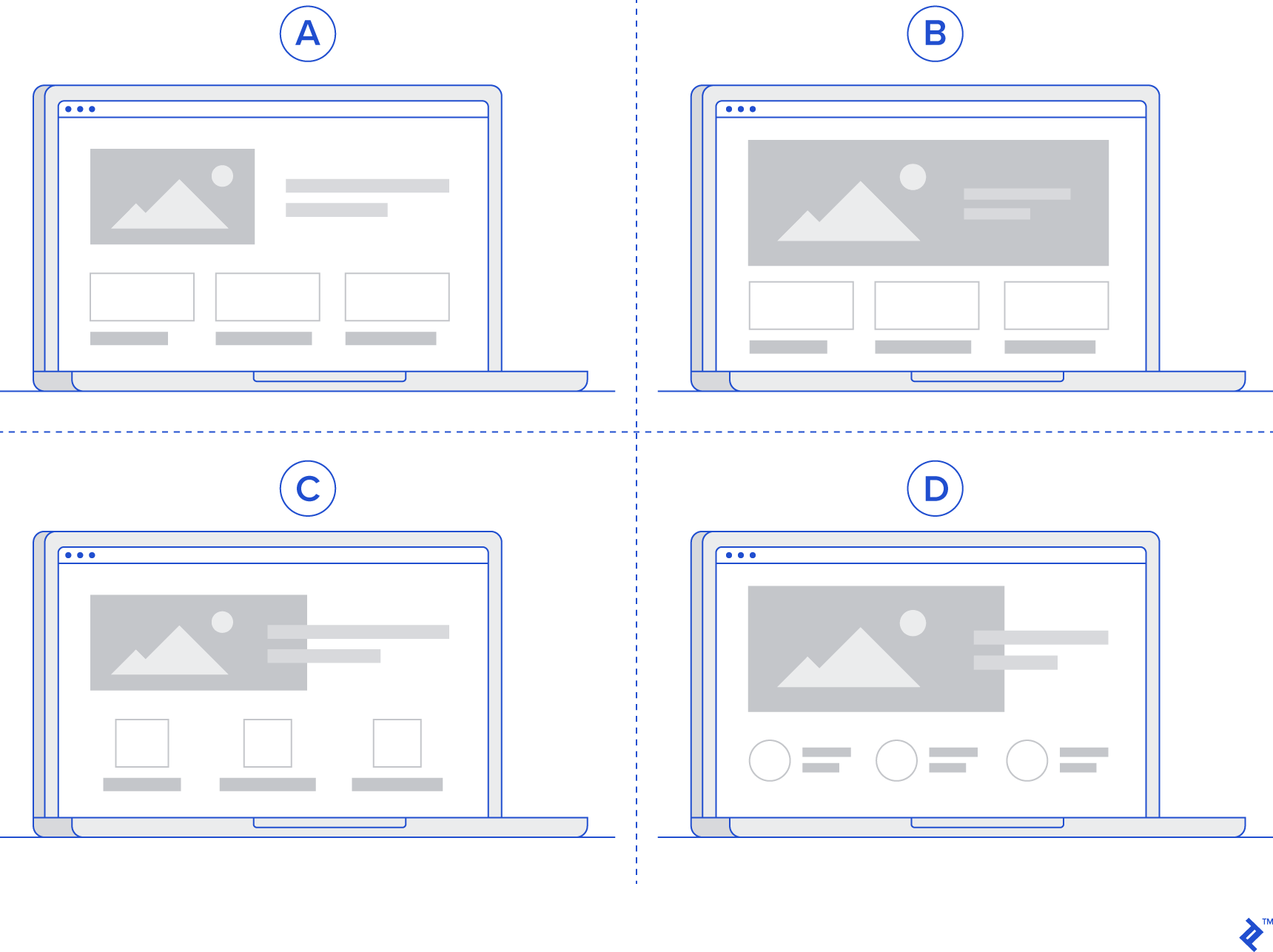

Ideally, each variable should be isolated and tested separately so as to conclusively attribute changes. However, such a sequential approach to testing can be very slow, especially when there are several versions to test. To continue with the example, in the hypothesis that bigger product images lead to higher conversion rates on Amazon, “bigger” is subjective, and several versions of “bigger” (e.g., 1.1x, 1.3x, and 1.5x) might need to be tested.

Instead of testing such cases sequentially, a multivariate test can be adopted, in which users are not split in half but into multiple variants. For instance, four groups (A, B, C, D) are made up of 25% of users each, where A-group users will not see any change, whereas those in variants B, C, and D will see images bigger by 1.1x, 1.3x, and 1.5x, respectively. In this test, multiple variants are simultaneously tested against the current version of the product in order to identify the best variant.
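To make the comparison concrete, here is a minimal sketch that computes the relative uplift of each variant over the control group. The function name `uplift_vs_control` and all of the counts are made up for illustration; a real analysis would also check whether each difference is statistically significant before picking a winner.

```python
def uplift_vs_control(results: dict, control: str = "A") -> dict:
    """Return each variant's relative uplift in conversion rate over control.

    `results` maps a variant name to a (conversions, users) pair.
    """
    control_conversions, control_users = results[control]
    control_rate = control_conversions / control_users
    uplifts = {}
    for name, (conversions, users) in results.items():
        if name == control:
            continue
        uplifts[name] = (conversions / users) / control_rate - 1.0
    return uplifts


# Hypothetical counts: A is the control, B/C/D show 1.1x, 1.3x, 1.5x images.
results = {
    "A": (500, 10_000),
    "B": (520, 10_000),
    "C": (560, 10_000),
    "D": (530, 10_000),
}
uplifts = uplift_vs_control(results)
best = max(uplifts, key=uplifts.get)
print(best, uplifts)
```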

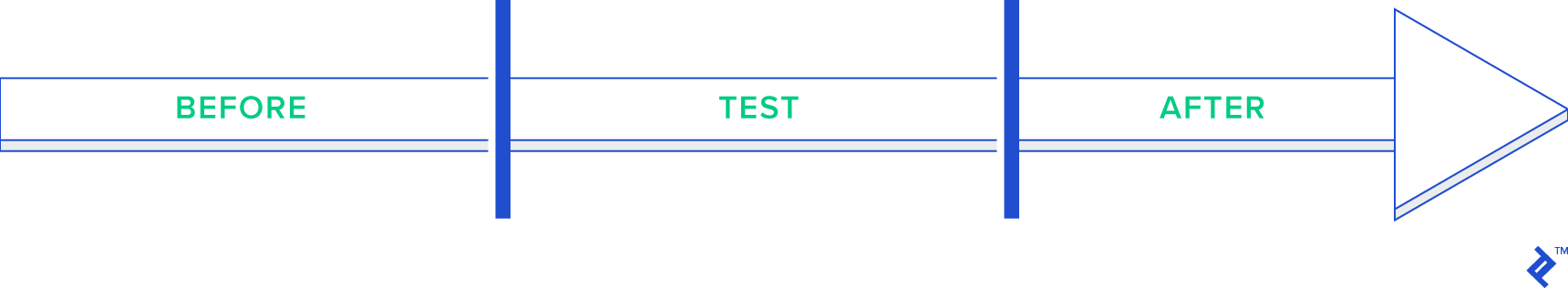

Before/After Testing

Sometimes, it is not possible to split the users in half (or into multiple variants) as there might be network effects in place. For example, if the test involves determining whether one logic for formulating surge prices on Uber is better than another, the drivers cannot be divided into different variants, as the logic takes into account the demand and supply mismatch of the entire city. In such cases, a test will have to compare the effects before the change and after the change in order to arrive at a conclusion.

However, the constraint here is the inability to isolate the effects of seasonality and external factors, which can affect the test and control periods differently. Suppose a change to the logic that determines surge pricing on Uber is made at time t, such that logic A is used before and logic B is used after. While the effects before and after time t can be compared, there is no guarantee that the effects are solely due to the change in logic. A difference in demand or other factors between the two time periods could also explain the difference in results.

Time-based On/Off Testing

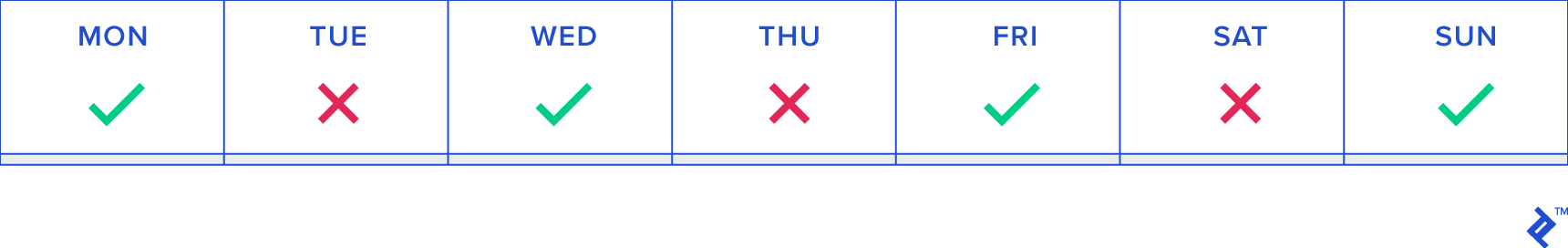

The downsides of before/after testing can be overcome to a large extent by deploying time-based on/off testing, in which the change is introduced to all users for a certain period of time and then turned off for an equal period of time, with this on/off cycle repeated over a longer duration.

For example, in the Uber use case, the change can be shown to drivers on Monday, withdrawn on Tuesday, shown again on Wednesday, and so on.

While this method doesn’t fully remove the effects of seasonality and external factors, it does reduce them significantly, making such tests more robust.
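The alternating schedule can be sketched as follows. The function names, the start date, and the daily metric values are all hypothetical; the sketch assumes the change is toggled once per day and that a single daily metric is being compared.

```python
from datetime import date, timedelta
from statistics import mean


def on_off_schedule(start: date, days: int) -> dict:
    """Alternate the change on and off by day, starting with 'on'."""
    return {start + timedelta(days=i): (i % 2 == 0) for i in range(days)}


def compare_on_off(metric_by_day: dict, schedule: dict) -> tuple:
    """Return (mean metric on 'on' days, mean metric on 'off' days)."""
    on = [metric_by_day[d] for d, is_on in schedule.items() if is_on]
    off = [metric_by_day[d] for d, is_on in schedule.items() if not is_on]
    return mean(on), mean(off)


# Two weeks starting on a Monday: on Monday, off Tuesday, on Wednesday, ...
schedule = on_off_schedule(date(2024, 1, 1), days=14)

# Hypothetical daily metric: 100 on 'on' days and 90 on 'off' days.
metric_by_day = {d: (100.0 if is_on else 90.0) for d, is_on in schedule.items()}
on_mean, off_mean = compare_on_off(metric_by_day, schedule)
print(on_mean, off_mean)
```

With daily alternation over two full weeks, every weekday falls once in the "on" group and once in the "off" group, which is one simple way to keep day-of-week patterns from favoring either side.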

Test Design

Choosing the right test for the use case at hand is an essential step in validating a hypothesis in the quickest and most robust way. Once the choice is made, the details of the test design can be outlined.

The test design is simply a coherent outline of:

- The hypothesis to be tested: Showing users bigger product images will lead them to purchase more products.

- Success metrics for the test: Customer conversion

- Decision-making criteria for the test: The test validates the hypothesis if users in the variant show a higher conversion rate than those in the control group.

- Metrics that need to be instrumented to learn from the test: Customer conversion, clicks on product images

In the case of the product hypothesis example that bigger product images will lead to improved conversion on Amazon, the success metric is conversion and the decision criterion is an improvement in conversion.
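One way to keep such an outline explicit is to write it down as a small data structure before the test starts. The sketch below is only an illustration: the class name `ExperimentDesign`, its field names, and the event names are assumptions, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentDesign:
    hypothesis: str
    success_metric: str
    decision_criterion: str
    instrumented_metrics: tuple

    def missing_instrumentation(self, tracked_events: set) -> list:
        """Return the metrics the design needs that are not tracked yet."""
        return [m for m in self.instrumented_metrics if m not in tracked_events]


design = ExperimentDesign(
    hypothesis="Showing users bigger product images will lead them to purchase more products.",
    success_metric="customer_conversion",
    decision_criterion="Variant conversion rate is higher than control conversion rate.",
    instrumented_metrics=("customer_conversion", "product_image_clicks"),
)

# Hypothetical check: only conversion is tracked today.
print(design.missing_instrumentation({"customer_conversion"}))
# prints ['product_image_clicks']
```

Checking instrumentation up front avoids finishing a test only to discover that a metric needed for learning was never recorded.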

After the right test is chosen and designed, and the success criteria and metrics are identified, the results must be analyzed. To do that, some statistical concepts are necessary.

Sampling

When running tests, it is important to ensure that the two variants picked for the test (A and B) do not have a bias with respect to the success metric. For instance, if the variant that sees the bigger images already has a higher conversion than the variant that doesn’t see the change, then the test is biased and can lead to wrong conclusions.

To check for bias in sampling, one can compare the mean and variance of the success metric across the two groups before the change is introduced. If the groups already differ noticeably at that point, the split should be redone.
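A pre-test check of this kind might look like the sketch below. It compares the two groups with Welch's t-statistic, which is one reasonable choice rather than the only one; the function names, the threshold of 2.0, and the per-user data are assumptions made for illustration.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(group_a: list, group_b: list) -> float:
    """Welch's t-statistic for the difference in means of two samples."""
    standard_error = sqrt(
        variance(group_a) / len(group_a) + variance(group_b) / len(group_b)
    )
    return (mean(group_a) - mean(group_b)) / standard_error


def looks_balanced(group_a: list, group_b: list, threshold: float = 2.0) -> bool:
    """Rough check: flag the split if the pre-test means differ too much."""
    return abs(welch_t(group_a, group_b)) < threshold


# Hypothetical pre-test data: 1 = the user converted, 0 = the user did not.
group_a = [1] * 50 + [0] * 950    # 5.0% conversion before the change
group_b = [1] * 52 + [0] * 948    # 5.2% conversion before the change
print(mean(group_a), variance(group_a))
print(mean(group_b), variance(group_b))
print(looks_balanced(group_a, group_b))
```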

Significance and Power

Once a difference between the two variants is observed, it is important to determine whether the observed change is an actual effect and not a random one. This can be done by computing the significance of the change in the success metric.

In layman’s terms, the significance level measures the frequency with which the test shows that bigger images lead to higher conversion when they actually don’t. Power measures the frequency with which the test tells us that bigger images lead to higher conversion when they actually do.

So, tests need to have high power and a low significance level for more accurate results. Common conventions are a significance level of 5% and a power of 80%.
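As a minimal sketch of how these two quantities are used in practice, the code below computes a two-sided p-value for the difference between two conversion rates (a two-proportion z-test) and an approximate sample size per group. Both rely on the normal approximation, the function names are hypothetical, the counts are invented, and the defaults of 5% and 80% are conventions rather than requirements.

```python
from math import ceil, sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


def sample_size_per_group(base_rate: float, relative_uplift: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per group to detect the given relative uplift."""
    p1 = base_rate
    p2 = base_rate * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    n = (z_alpha + z_beta) ** 2 * (p1 * (1 - p1) + p2 * (1 - p2)) / (p2 - p1) ** 2
    return ceil(n)


# Hypothetical counts: 500/10,000 conversions in control, 560/10,000 in variant.
p_value = two_proportion_p_value(500, 10_000, 560, 10_000)
print(f"p-value: {p_value:.3f}")

# Users per group to detect a 10% relative uplift on a 5% base rate.
print(sample_size_per_group(base_rate=0.05, relative_uplift=0.10))
```

The change is called significant when the p-value falls below the chosen significance level. Running the sample size calculation before the test starts shows how long the test needs to run to reach the desired power.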

While an in-depth exploration of the statistical concepts involved in product management hypothesis testing is out of scope here, the following actions are recommended to enhance knowledge on this front:

- Data analysts and data engineers are usually adept at identifying the right test designs and can guide product managers, so make sure to utilize their expertise early in the process.

- There are numerous online courses on hypothesis testing, A/B testing, and related statistical concepts on platforms such as Udemy, Udacity, and Coursera.

- Using tools such as Google’s Firebase and Optimizely can make the process easier thanks to their many out-of-the-box capabilities for running the right tests.

Using Hypothesis Testing for Successful Product Management

In order to continuously deliver value to users, it is imperative to test various hypotheses, and several types of product hypothesis testing can be employed for this purpose. Each hypothesis needs to have an accompanying test design, as described above, in order to conclusively validate or invalidate it.

This approach helps to quantify the value delivered by new changes and features, bring focus to the most valuable features, and deliver incremental iterations.

Understanding the basics

What is a product hypothesis?

A product hypothesis is an assumption that some improvement in the product will bring an increase in important metrics like revenue or product usage statistics.

What are the three required parts of a hypothesis?

The three required parts of a hypothesis are the assumption, the condition, and the prediction.

Why do we do A/B testing?

We do A/B testing to make sure that any improvement in the product increases our tracked metrics.

What is A/B testing used for?

A/B testing is used to check if our product improvements create the desired change in metrics.

What is A/B testing and multivariate testing?

A/B testing and multivariate testing are types of hypothesis testing. A/B testing checks how important metrics change with and without a single change in the product. Multivariate testing can track multiple variations of the same product improvement.

Kumara Raghavendra

Dubai, United Arab Emirates

Member since August 6, 2019
